According to Gartner, 95% of data-driven decisions in supply chain operations are expected to be at least partially automated by next year, and 25% of key performance indicator (KPI) reporting will be powered by GenAI models by 2028.

In line with this trend, Oracle recently announced new generative AI capabilities in Oracle Fusion Cloud Supply Chain & Manufacturing (SCM), aimed at helping businesses manage their supply chains more efficiently and effectively.

“We are working with customers to help them optimise their supply chains, which has a direct impact on financial KPIs,” said Sunil Wahi, vice president, JAPAC, at Oracle, in an exclusive interview with AIM. “Every operational KPI is linked with financial KPIs.”

“Supply chain is where a whole lot of costs and margins are hidden,” said Wahi, adding that a supply chain is at its most beautiful when it is invisible. “If you are getting your orders and shipments on time, you would never be bothered about what supply chain is running behind.”

Oracle has introduced prediction-driven supply chain command centres. Wahi said that with this tool, a company can plan its entire supply chain and decide where to place distribution centres, logistics hubs, regional distribution centres (RDCs) and other facilities, giving it far greater visibility into its supply chain.

“It functions like a mission control centre for your supply chain, bringing together data, intelligence, and recommendations to give you a holistic view and enable faster decision-making,” he added.

Oracle Is Not Alone

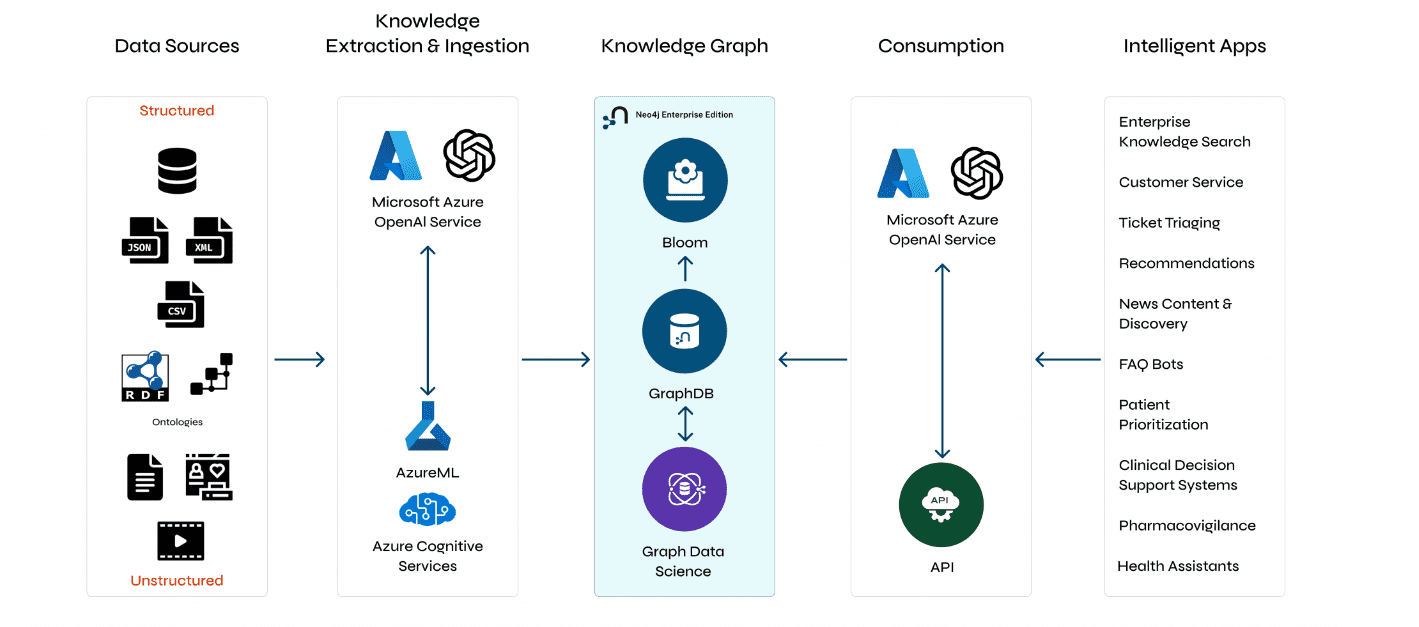

AWS Supply Chain recently added generative AI to simplify data ingestion and improve customers’ application onboarding and setup experience.

Similarly, Microsoft is incorporating generative AI capabilities into Microsoft Dynamics 365 Supply Chain Management. For instance, the AI-powered news module in Microsoft Supply Chain Center proactively flags external issues, such as weather, financial and geopolitical news, that may affect key supply chain processes.

Meanwhile, Google Cloud is working with Accenture and Infosys to develop a suite of generative AI platforms and industry solutions for a range of business scenarios, including supply chain optimisation.

Oracle’s GenAI Prowess

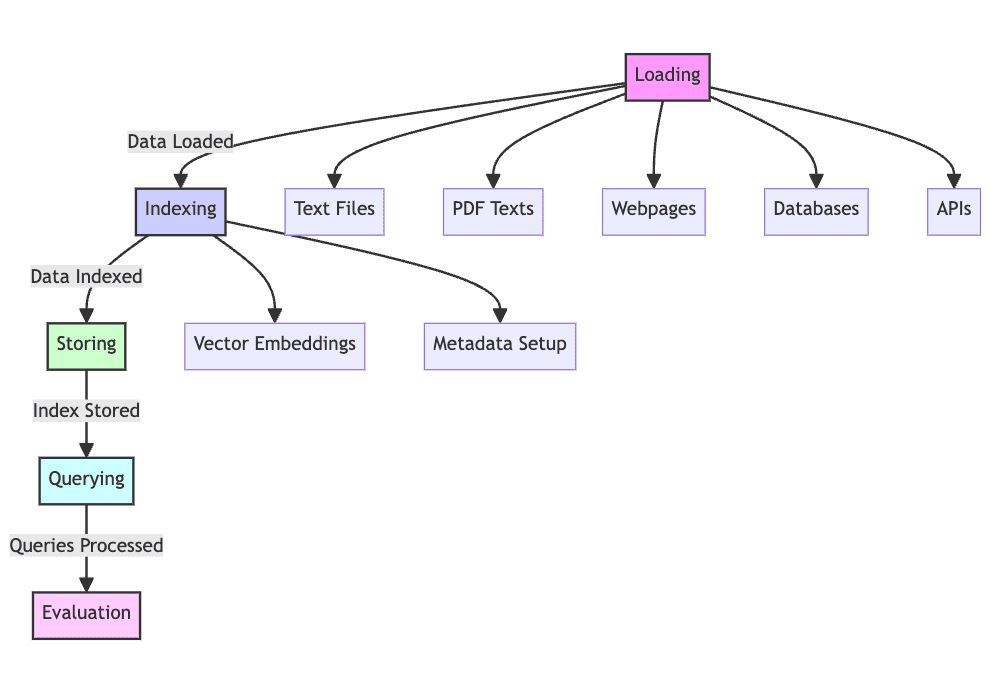

Generative AI within Oracle SCM is designed to automate and optimise various supply chain tasks, from inventory management to order fulfilment.

“There are multiple generative AI use cases, such as capturing item descriptions in an AI-assisted authoring mode, fulfilling supplier contracts, and capturing manufacturing productivity enhancements with an AI-assisted mobile app,” said Wahi.

Oracle has also introduced generative AI support in Oracle Product Lifecycle Management, which helps product specialists quickly create SEO-focused product descriptions. According to Oracle, this saves time, reduces errors and improves overall quality, which can lead to greater customer engagement and higher sales.

Oracle Cloud Infrastructure (OCI) also uses generative AI to perform root cause analysis in supply chain operations. It ingests real-time data and uses a query-based system to provide actionable insights, helping teams identify and resolve supply chain issues efficiently.

“We are working with a large pharma customer in India that had just automated their entire supply planning processes on Fusion Cloud. For them, the whole objective was to allocate constrained resources to the right customer orders, which are most profitable,” said Wahi.
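The objective Wahi describes, allocating constrained supply to the most profitable customer orders, can be illustrated with a simple greedy rule. This is a hypothetical sketch, not how Oracle Fusion Cloud performs supply planning: the `Order` type, its `unit_margin` field and the `allocate` function are assumptions made for illustration, and only a single constrained resource is modelled.

```python
from dataclasses import dataclass


@dataclass
class Order:
    order_id: str
    quantity: int       # units requested by the customer
    unit_margin: float  # hypothetical profit per unit shipped


def allocate(supply: int, orders: list[Order]) -> dict[str, int]:
    """Greedily fill the highest-margin orders first until supply runs out."""
    allocation: dict[str, int] = {}
    remaining = supply
    # Rank orders by per-unit profitability, best first.
    for order in sorted(orders, key=lambda o: o.unit_margin, reverse=True):
        granted = min(order.quantity, remaining)
        allocation[order.order_id] = granted
        remaining -= granted
    return allocation


if __name__ == "__main__":
    orders = [Order("A", 50, 2.0), Order("B", 30, 5.0), Order("C", 40, 3.5)]
    print(allocate(60, orders))  # {'B': 30, 'C': 30, 'A': 0}
```

With 60 units available, order B is filled in full, C receives the remaining 30 units and A goes unfilled. When one resource is constrained and every unit earns a fixed margin, taking the highest-margin units first maximises total margin; real planning engines also weigh due dates, customer priority and multiple capacity limits, which usually calls for linear or mixed-integer programming instead.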

Generative AI is changing how supply chain teams manage sourcing and procurement. It can handle purchase orders, negotiate deals, select suppliers and assist with contract preparation. Walmart, for example, is using generative AI to automate supplier negotiations.

According to a report, over 65% of suppliers preferred negotiating with a generative AI bot rather than with a company employee. There have also been instances of companies on both sides of a deal using GenAI tools to negotiate with each other.

Wahi was confident that, with generative AI-powered supplier recommendations embedded in Oracle Procurement, organisations will be able to use information such as product descriptions and purchase categories to identify suppliers, improve sourcing efficiency, lower costs and reduce supplier risk.

“Generative AI itself will be a huge transformative space for us. The partnership with Cohere is extremely important because that’s where we are drawing upon the learnings, and I would say it will be an extremely important partnership which will continue to grow,” concluded Wahi, adding that the company will bring more than 100 generative AI use cases to Fusion Cloud applications.

The post Oracle Integrates GenAI to Enhance Supply Chain and Automate KPIs appeared first on Analytics India Magazine.
