Image created by Author using Midjourney

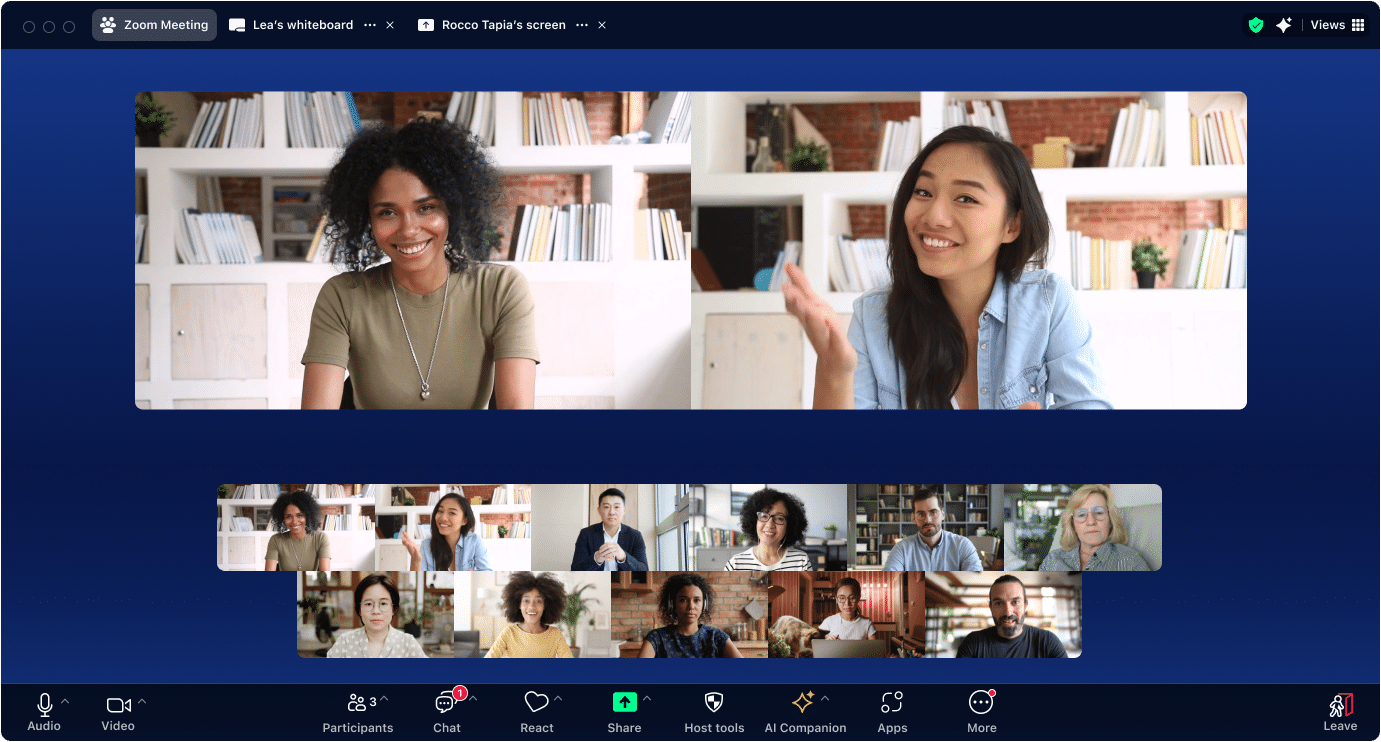

Introduction

Sentiment analysis is a natural language processing (NLP) technique for determining the sentiment (for example, positive or negative) expressed in a piece of text. It is an essential technology behind modern applications such as customer feedback assessment, social media sentiment tracking, and market research. Sentiment analysis helps businesses and other organizations gauge public opinion, offer better customer service, and improve their products or services.

BERT, short for Bidirectional Encoder Representations from Transformers, is a language model that, when first released, advanced the state of the art in NLP by modeling each word in the context of the whole sentence, surpassing prior models by a considerable margin. BERT's bidirectionality (reading both the left and right context of a given word) proved especially valuable in use cases such as sentiment analysis, where a word like "not" can flip the meaning of the words around it.
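The difference between bidirectional and left-to-right models can be expressed as an attention mask that records which positions each token may look at. In this simplified pure-Python sketch (the `attention_mask` helper is hypothetical and ignores padding), an encoder like BERT lets every token attend to the whole sentence, while a left-to-right (causal) model lets each token see only itself and earlier tokens.

```python
def attention_mask(seq_len, bidirectional=True):
    """Return a seq_len x seq_len 0/1 matrix; row i marks the positions token i may attend to."""
    if bidirectional:
        # Encoder-style (BERT): every token sees the whole sequence, left and right
        return [[1] * seq_len for _ in range(seq_len)]
    # Causal / left-to-right: token i sees only positions 0..i
    return [[1 if j <= i else 0 for j in range(seq_len)] for i in range(seq_len)]


tokens = ["the", "movie", "was", "not", "good"]
for name, bidi in [("bidirectional", True), ("left-to-right", False)]:
    mask = attention_mask(len(tokens), bidirectional=bidi)
    # Which words can "not" (index 3) use as context?
    visible = [tok for tok, m in zip(tokens, mask[3]) if m]
    print(f"{name}: {visible}")
# bidirectional: ['the', 'movie', 'was', 'not', 'good']
# left-to-right: ['the', 'movie', 'was', 'not']
```

In the bidirectional case, "not" can draw on "good" to its right, which is exactly the context that decides the sentiment of this sentence.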

In this step-by-step walk-through, you will learn how to fine-tune BERT for your own sentiment analysis projects using the Hugging Face Transformers library. Whether you are new to NLP or an experienced practitioner, we will cover practical strategies and considerations along the way so that you are well equipped to fine-tune BERT for your own purposes.

Setting Up the Environment

A few libraries must be installed before we can fine-tune our model: Hugging Face Transformers, PyTorch, and Hugging Face's datasets library at a minimum. Recent versions of Transformers also require the accelerate package in order to use the Trainer class. You can install everything with pip:

```shell
pip install transformers torch datasets accelerate
```

And that's it.
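To confirm that the installation worked, you can print the installed versions. The sketch below uses only Python's standard library (`importlib.metadata`); the helper name `installed_versions` is hypothetical, and accelerate is included in the default list because recent versions of Transformers need it for the Trainer class.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages=("transformers", "torch", "datasets", "accelerate")):
    """Map each package name to its installed version string, or None if it is missing."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None  # not installed in this environment
    return found


for name, ver in installed_versions().items():
    print(f"{name}: {ver or 'NOT INSTALLED'}")
```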

Preprocessing the Data

You need labeled data to train the text classifier. Here, we'll use the IMDb movie review dataset, a standard benchmark for sentiment analysis in which each review is labeled as positive or negative. It contains 25,000 labeled reviews for training and another 25,000 for testing. Let's load it with the datasets library.

```python
from datasets import load_dataset

dataset = load_dataset("imdb")
print(dataset)
```
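Before training, it is worth checking how the labels are distributed, since a heavily imbalanced dataset makes accuracy a misleading metric. The sketch below assumes the integer labels used by the Hugging Face IMDb dataset (0 = neg, 1 = pos); `class_balance` is a hypothetical helper, not part of the datasets library.

```python
from collections import Counter

ID2LABEL = {0: "neg", 1: "pos"}  # label names used by the IMDb dataset


def class_balance(labels):
    """Return the fraction of examples carrying each label name."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {ID2LABEL[k]: counts[k] / total for k in sorted(counts)}


print(class_balance([0, 1, 1, 0]))  # {'neg': 0.5, 'pos': 0.5}
```

On the real data you could call `class_balance(dataset["train"]["label"])`; IMDb's training split is balanced, with 12,500 reviews per class.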

Before the text can be fed to BERT, it must be tokenized, that is, converted into the numeric token IDs the model expects. BERT uses WordPiece tokenization: the text is lowercased (for the uncased model) and split into words, and any word that is not in BERT's fixed vocabulary is broken into smaller subword pieces that are, so rare words are still represented instead of being discarded. Let's tokenize our data using BertTokenizer from Transformers.

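```python
from transformers import BertTokenizer

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

def tokenize_function(examples):
    # Pad every review to the model's maximum length (512 tokens for bert-base-uncased) and truncate longer ones
    return tokenizer(examples['text'], padding="max_length", truncation=True)

# batched=True passes many examples to tokenize_function at once, which is much faster
tokenized_datasets = dataset.map(tokenize_function, batched=True)
```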
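To make the tokenization step concrete, here is a simplified, pure-Python sketch of what BertTokenizer does with `padding="max_length"` and `truncation=True`: greedy longest-match-first WordPiece splitting, the `[CLS]` and `[SEP]` markers, truncation, and padding with an attention mask. The helpers `wordpiece` and `encode` and the toy vocabulary are hypothetical. The real tokenizer also splits off punctuation, maps each token to an integer ID (in bert-base-uncased, `[PAD]` = 0, `[CLS]` = 101, `[SEP]` = 102), returns `token_type_ids`, and pads to 512 tokens rather than 10.

```python
CLS, SEP, PAD, UNK = "[CLS]", "[SEP]", "[PAD]", "[UNK]"


def wordpiece(word, vocab):
    """Greedy longest-match-first WordPiece split of one lowercased word."""
    pieces, start = [], 0
    while start < len(word):
        end, match = len(word), None
        # Try the longest remaining substring first, shrinking it until it is in the vocabulary
        while start < end:
            piece = word[start:end] if start == 0 else "##" + word[start:end]
            if piece in vocab:
                match = piece
                break
            end -= 1
        if match is None:
            return [UNK]  # no valid split: the whole word becomes [UNK]
        pieces.append(match)
        start = end
    return pieces


def encode(text, vocab, max_length):
    """Lowercase, split on whitespace, WordPiece each word, add [CLS]/[SEP], truncate, and pad."""
    tokens = [p for word in text.lower().split() for p in wordpiece(word, vocab)]
    tokens = [CLS] + tokens[: max_length - 2] + [SEP]  # truncation leaves room for the special tokens
    mask = [1] * len(tokens) + [0] * (max_length - len(tokens))  # 0 marks padding the model should ignore
    tokens += [PAD] * (max_length - len(tokens))
    return tokens, mask


# Toy vocabulary (hypothetical); bert-base-uncased's real vocabulary has 30,522 entries
vocab = {"the", "movie", "was", "un", "##believ", "##able"}
tokens, mask = encode("The movie was unbelievable", vocab, max_length=10)
print(tokens)  # ['[CLS]', 'the', 'movie', 'was', 'un', '##believ', '##able', '[SEP]', '[PAD]', '[PAD]']
print(mask)    # [1, 1, 1, 1, 1, 1, 1, 1, 0, 0]
```

Padding every review to 512 tokens keeps the code simple but wastes computation on short reviews; Transformers' `DataCollatorWithPadding` instead pads each batch only to the length of its longest member.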

Preparing the Dataset

Let's hold out 20% of the IMDb training split as a validation set so that we can evaluate the model's performance during training. Here's how we will do so.

```python
# train_test_split is a method of Dataset; seed makes the 80/20 split reproducible
train_testvalid = tokenized_datasets['train'].train_test_split(test_size=0.2, seed=42)
train_dataset = train_testvalid['train']
valid_dataset = train_testvalid['test']
```
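`Dataset.train_test_split` handles the split for us; conceptually, it shuffles the examples and holds out the requested fraction. The pure-Python sketch below, using a hypothetical `split_indices` helper, illustrates that idea and the resulting sizes for IMDb's 25,000 training reviews; it is not the library's exact implementation. If preserving the class ratio matters for your data, `train_test_split` also accepts `stratify_by_column="label"`.

```python
import random


def split_indices(n, test_size=0.2, seed=42):
    """Shuffle indices 0..n-1 with a fixed seed and hold out a test_size fraction for validation."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)  # seeded, so the same split comes back on every run
    n_valid = round(n * test_size)
    return indices[n_valid:], indices[:n_valid]  # (train, validation), disjoint by construction


train_idx, valid_idx = split_indices(25_000)
print(len(train_idx), len(valid_idx))  # 20000 5000
```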

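DataLoaders handle batching and shuffling of data during training. The Trainer class used later in this tutorial builds its own DataLoaders internally, so the ones created here are only needed if you write a custom PyTorch training loop. Here is how to create DataLoaders for our training and validation datasets.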

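```python
from torch.utils.data import DataLoader

# Return PyTorch tensors for the model inputs and labels, leaving out the raw "text" column
columns = ["input_ids", "token_type_ids", "attention_mask", "label"]
train_dataloader = DataLoader(train_dataset.with_format("torch", columns=columns), shuffle=True, batch_size=8)
valid_dataloader = DataLoader(valid_dataset.with_format("torch", columns=columns), batch_size=8)
```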
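A quick calculation shows how much work each epoch involves. Assuming the 80/20 split of IMDb's 25,000 training reviews (20,000 for training and 5,000 for validation) and `batch_size=8`, an epoch has 2,500 training batches and 625 validation batches. The hypothetical `iterate_batches` generator below mimics what a DataLoader does with `shuffle` and `batch_size`; like DataLoader's default `drop_last=False`, it keeps a final, smaller batch.

```python
import math
import random


def iterate_batches(examples, batch_size=8, shuffle=False, seed=0):
    """Yield successive lists of at most batch_size examples, optionally in shuffled order."""
    order = list(range(len(examples)))
    if shuffle:
        # A real DataLoader reshuffles every epoch; this sketch uses one fixed seed for clarity
        random.Random(seed).shuffle(order)
    for start in range(0, len(order), batch_size):
        yield [examples[i] for i in order[start:start + batch_size]]


print(math.ceil(20_000 / 8), math.ceil(5_000 / 8))  # 2500 625
print([len(b) for b in iterate_batches(list(range(20)), batch_size=8)])  # [8, 8, 4]
```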

Setting Up the BERT Model for Fine-Tuning

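We will load our model with the BertForSequenceClassification class, which places a classification layer on top of the pre-trained BERT encoder. Only the encoder has been pre-trained; the classification layer is newly initialized and learns to predict sentiment during fine-tuning. Setting num_labels=2 gives one output per class (negative and positive). This is how we will do so.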

```python
from transformers import BertForSequenceClassification

# num_labels=2: one output logit per class (0 = negative, 1 = positive)
model = BertForSequenceClassification.from_pretrained('bert-base-uncased', num_labels=2)
```
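The part that fine-tuning adds is small. BertForSequenceClassification passes BERT's pooled `[CLS]` representation (768 values for bert-base-uncased) through dropout and a linear layer with one output per label. The pure-Python sketch below (hypothetical `init_classifier` and `classify` helpers, dropout omitted) shows that layer and counts its parameters; the normal initialization with standard deviation 0.02 is an assumption modeled on BERT's default `initializer_range`. Because these weights start out random, Transformers warns when loading the model that some weights were newly initialized; that warning is expected here.

```python
import random

HIDDEN_SIZE = 768  # hidden size of bert-base-uncased
NUM_LABELS = 2     # negative / positive


def init_classifier(hidden_size=HIDDEN_SIZE, num_labels=NUM_LABELS, std=0.02, seed=0):
    """Random linear head: one weight row per label (normal, std 0.02) and zero biases."""
    rng = random.Random(seed)
    weights = [[rng.gauss(0.0, std) for _ in range(hidden_size)] for _ in range(num_labels)]
    biases = [0.0] * num_labels
    return weights, biases


def classify(pooled, weights, biases):
    """Compute logits[k] = weights[k] . pooled + biases[k] for every label k."""
    return [sum(w * x for w, x in zip(row, pooled)) + b for row, b in zip(weights, biases)]


weights, biases = init_classifier()
print(sum(len(row) for row in weights) + len(biases))  # 1538 new parameters (768 * 2 + 2)
print(classify([0.1] * HIDDEN_SIZE, weights, biases))  # two logits, one per class
```

These 1,538 parameters sit on top of roughly 110 million pre-trained ones, which is why fine-tuning can reach good accuracy with comparatively little labeled data.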

Training the Model

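Training our model involves a training loop, a loss function, an optimizer, and a set of hyperparameters. The Trainer class handles most of this for us: BertForSequenceClassification computes the cross-entropy loss itself whenever labels are provided, and Trainer creates an AdamW optimizer with a linearly decaying learning rate by default, so we only need to specify the training arguments. Here is how we can set up and run training.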
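When labels are passed, BertForSequenceClassification with `num_labels=2` computes the standard cross-entropy loss over its two logits. The sketch below reimplements that loss in plain Python for illustration (the `cross_entropy` and `mean_loss` helpers are hypothetical); it uses the log-sum-exp trick for numerical stability.

```python
import math


def cross_entropy(logits, label):
    """Cross-entropy for one example: -log softmax(logits)[label], computed stably."""
    m = max(logits)  # subtract the largest logit so exp() cannot overflow
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def mean_loss(batch_logits, labels):
    """Average the per-example loss over a batch, as PyTorch's CrossEntropyLoss does by default."""
    return sum(cross_entropy(z, y) for z, y in zip(batch_logits, labels)) / len(labels)


print(round(cross_entropy([0.0, 0.0], 1), 4))   # 0.6931, i.e. ln 2: an undecided model
print(round(cross_entropy([-2.0, 3.0], 1), 4))  # 0.0067: confident and correct
```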

```python
from transformers import Trainer, TrainingArguments

training_args = TrainingArguments(
    output_dir='./results',
    eval_strategy="epoch",  # evaluate after every epoch (called evaluation_strategy in older versions)
    learning_rate=2e-5,
    per_device_train_batch_size=8,
    per_device_eval_batch_size=8,
    num_train_epochs=3,
    weight_decay=0.01,
)

trainer = Trainer(
    model=model,
    args=training_args,
    train_dataset=train_dataset,
    eval_dataset=valid_dataset,
)

trainer.train()
```
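With these settings the learning rate does not stay at 2e-5: by default, Trainer decays it linearly to zero over the course of training, with no warmup (`lr_scheduler_type="linear"`, `warmup_steps=0`). The sketch below (a hypothetical `linear_lr` helper) follows the same formula as Transformers' `get_linear_schedule_with_warmup`, and computes the total number of steps assuming 20,000 training reviews, a batch size of 8 on a single device, and 3 epochs.

```python
import math


def linear_lr(step, total_steps, base_lr=2e-5, warmup_steps=0):
    """Learning rate after `step` updates: linear warmup to base_lr, then linear decay to 0."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    return base_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


# 20,000 training reviews / batch size 8 = 2,500 steps per epoch; 3 epochs = 7,500 steps
total = math.ceil(20_000 / 8) * 3
for step in (0, total // 2, total):
    print(step, linear_lr(step, total))
```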

Evaluating the Model

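Evaluating the model means measuring its performance on the validation set with metrics such as accuracy, precision, recall, and F1-score. Note that unless a compute_metrics function is passed to Trainer, trainer.evaluate() reports only the validation loss along with runtime statistics. Here is how we can evaluate our model.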

```python
metrics = trainer.evaluate()
print(metrics)
```
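To get accuracy, precision, recall, and F1 from `trainer.evaluate()`, Trainer accepts a `compute_metrics` function. It is called with an `EvalPrediction` that unpacks into the model's logits and the gold label IDs, and it must return a dictionary mapping metric names to values. The version below is a dependency-free sketch that treats label 1 (positive) as the positive class; the toy logits and labels in the example are made up. Libraries such as scikit-learn or Hugging Face evaluate offer tested implementations of the same metrics.

```python
def compute_metrics(eval_pred):
    """Accuracy, precision, recall, and F1 (positive class = 1) from logits and gold labels."""
    logits, labels = eval_pred
    # argmax over each row of logits gives the predicted class
    preds = [max(range(len(row)), key=lambda k: row[k]) for row in logits]
    labels = [int(y) for y in labels]
    tp = sum(p == 1 and y == 1 for p, y in zip(preds, labels))
    fp = sum(p == 1 and y == 0 for p, y in zip(preds, labels))
    fn = sum(p == 0 and y == 1 for p, y in zip(preds, labels))
    correct = sum(p == y for p, y in zip(preds, labels))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": correct / len(labels), "precision": precision, "recall": recall, "f1": f1}


# Toy logits and labels, made up for illustration
print(compute_metrics(([[2.0, -1.0], [0.2, 0.9], [-0.5, 1.5], [1.0, 0.0]], [0, 1, 0, 1])))
# {'accuracy': 0.5, 'precision': 0.5, 'recall': 0.5, 'f1': 0.5}
```

Pass it when constructing the trainer, for example `Trainer(..., compute_metrics=compute_metrics)`; `trainer.evaluate()` then reports `eval_accuracy`, `eval_precision`, `eval_recall`, and `eval_f1` alongside `eval_loss`.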

Making Predictions

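After fine-tuning, we can use the model to make predictions. Here we run inference on our validation set; trainer.predict returns the model's raw output scores (logits) for each review, which can be converted to predicted labels by taking the highest-scoring class.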

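```python
import numpy as np

predictions = trainer.predict(valid_dataset)
# predictions.predictions holds raw logits of shape (num_examples, 2); argmax picks the class (0 = negative, 1 = positive)
predicted_labels = np.argmax(predictions.predictions, axis=-1)
print(predicted_labels[:10])
```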
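The logits returned by `trainer.predict` are not probabilities. Applying softmax turns them into class probabilities, which is useful when you want a confidence score alongside each label. In this pure-Python sketch, the `softmax` and `describe` helpers and the example logits are hypothetical, and `ID2LABEL` assumes IMDb's convention of 0 = negative and 1 = positive.

```python
import math

ID2LABEL = {0: "negative", 1: "positive"}  # IMDb convention: 0 = negative, 1 = positive


def softmax(logits):
    """Turn raw logits into probabilities that sum to 1 (max subtracted for stability)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def describe(logits):
    """Return (label name, confidence) for one row of logits."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return ID2LABEL[best], probs[best]


label, confidence = describe([-1.2, 2.3])  # example logits, made up for illustration
print(f"{label} ({confidence:.1%})")  # positive (97.1%)
```

For brand-new text, the Transformers `pipeline("text-classification", model=model, tokenizer=tokenizer)` helper performs tokenization, the forward pass, and this softmax step in a single call.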

Summary

This tutorial has covered fine-tuning BERT for sentiment analysis with Hugging Face Transformers, including setting up the environment, preparing and tokenizing the dataset, creating DataLoaders, loading the model, training, evaluating, and making predictions.

Fine-tuning BERT for sentiment analysis can be valuable in many real-world situations, such as analyzing customer feedback, tracking social media tone, and much more. By using different datasets and models, you can expand upon this for your own natural language processing projects.

For additional information on these topics, check out the following resources:

- Hugging Face Transformers Documentation

- PyTorch Documentation

- Hugging Face Datasets Documentation

These resources cover the libraries used in this tutorial in more depth and are worth exploring to advance your natural language processing and sentiment analysis skills.

Matthew Mayo (@mattmayo13) holds a Master's degree in computer science and a graduate diploma in data mining. As Managing Editor, Matthew aims to make complex data science concepts accessible. His professional interests include natural language processing, machine learning algorithms, and exploring emerging AI. He is driven by a mission to democratize knowledge in the data science community. Matthew has been coding since he was 6 years old.
