Image by Author

SQLite is a lightweight, serverless relational database management system (RDBMS) that is widely used due to its simplicity and ease of embedding within applications.

So whether you're building a small application, managing data locally, or prototyping a project, SQLite provides a convenient solution for storing and querying structured data. In this tutorial, you’ll learn how to work with SQLite databases from Python using the built-in sqlite3 module.

Particularly, you’ll learn how to connect to an SQLite database from Python and perform basic CRUD operations. Let’s get started.

Setting Up the Environment

As a first step create a dedicated virtual environment for your project (in the project directory) and activate it. You can do it using the built-in venv module like so:

$ python3 -m venv v1 $ source v1/bin/activate

In this tutorial, we’ll use Faker to generate synthetic records. So install it using pip:

$ pip3 install Faker

The sqlite3 module is built into the Python standard library, so you don't have to install it. So if you’ve installed Faker and are using a recent version of Python, you’re good to go!

Connecting to an SQLite Database

In the project directory, create a Python script and get started. As a first step to interact with the database, we should establish a connection with the database.

To connect to a sample database example.db, you can use the connect() function from the sqlite3 module like so:

conn = sqlite3.connect(‘example.db’)

If the database already exists, then it connects to it. Else it creates the database in the working directory.

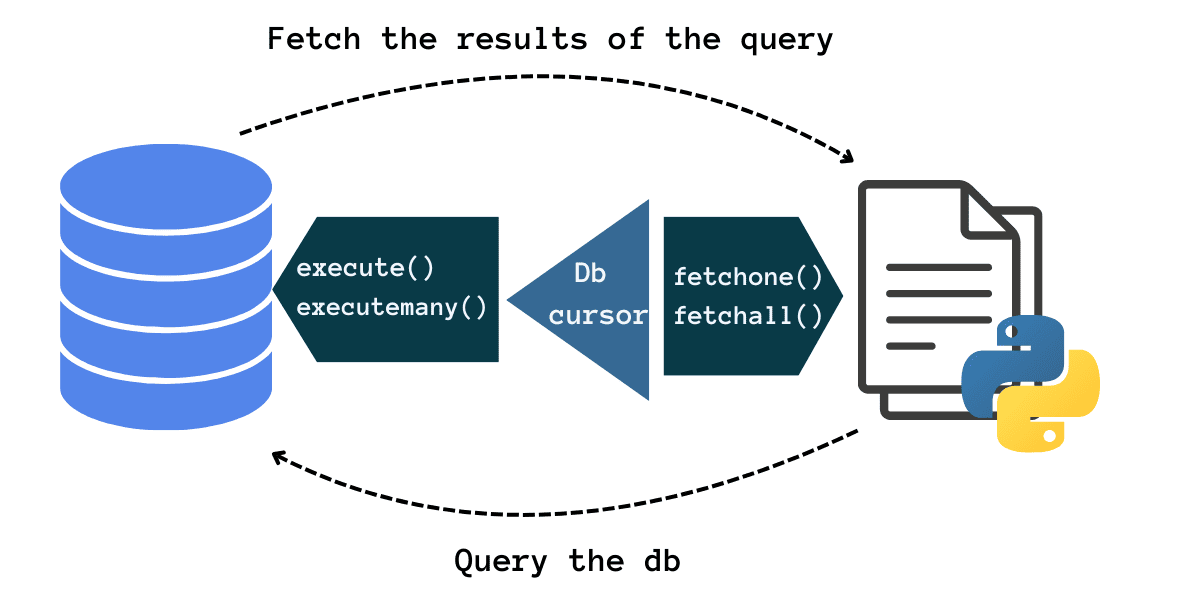

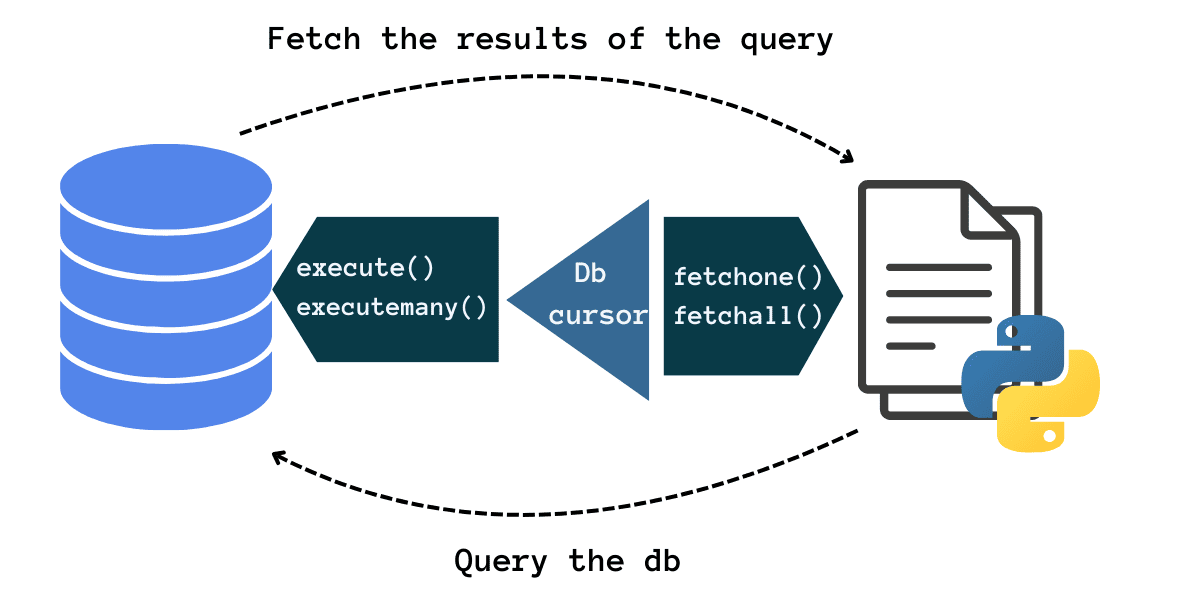

After connecting to the database, we’ll create a database cursor that will help us run queries. The cursor object has methods to execute queries and fetch the results of the query. It works very similarly to a file handler.

Database Cursor | Image by Author

It’s often helpful to use the connection as a context manager in a with statement like so:

import sqlite3 # Connect to the db with sqlite3.connect('example.db') as conn: # create db cursor # run queries # commit changes

This way you don’t have to worry about closing the connection object. The connection is automatically closed when the execution exits the with block. We’ll explicitly close the cursor objects in this tutorial, though.

Creating Database Tables

Now let’s create a customers table with the required fields in the database. To do so, we first create a cursor object. We then run a CREATE TABLE statement, and pass in the query string to the execute() method called on the cursor object:

import sqlite3 # Connect to the db with sqlite3.connect('example.db') as conn: cursor = conn.cursor() # Create customers table cursor.execute(''' CREATE TABLE IF NOT EXISTS customers ( id INTEGER PRIMARY KEY, first_name TEXT NOT NULL, last_name TEXT NOT NULL, email TEXT UNIQUE NOT NULL, phone TEXT, num_orders INTEGER ); ''') conn.commit() print("Customers table created successfully.") cursor.close()

When you run the script, you should see the following output:

Output >>> Customers table created successfully.

Performing CRUD Operations

Let’s perform some basic CRUD operations on the database table. If you’d like you may create separate scripts for each operation.

Inserting Records

Now we’ll insert some records into the customers table. We’ll use Faker to generate synthetic records. To keep the outputs readable, I’ve inserted only 10 records. But you may insert as many records as you’d like.

import sqlite3 import random from faker import Faker # Initialize Faker object fake = Faker() Faker.seed(24) # Connect to the db with sqlite3.connect('example.db') as conn: cursor = conn.cursor() # Insert customer records num_records = 10 for _ in range(num_records): first_name = fake.first_name() last_name = fake.last_name() email = fake.email() phone = fake.phone_number() num_orders = random.randint(0,100) cursor.execute(''' INSERT INTO customers (first_name, last_name, email, phone, num_orders) VALUES (?, ?, ?, ?, ?) ''', (first_name, last_name, email, phone, num_orders)) print(f"{num_records} customer records inserted successfully.") conn.commit() cursor.close()

Notice how we use parameterized queries: instead of hardcoding the values into the INSERT statement, we use ? placeholders and pass in a tuple of values.

Running the script should give:

Output >>> 10 customer records inserted successfully.

Reading and Updating Records

Now that we’ve inserted records into the table, let’s run a query to read in all the records. Notice how we use the execute() method to run queries and the fetchall() method on the cursor to retrieve the results of the query.

Because we’ve stored the results of the previous query in `all_customers`, let’s also run an UPDATE query to update the num_orders corresponding to the id 1. Here’s the code snippet:

import sqlite3 # Connect to the db with sqlite3.connect('example.db') as conn: cursor = conn.cursor() # Fetch and display all customers cursor.execute('SELECT id, first_name, last_name, email, num_orders FROM customers') all_customers = cursor.fetchall() print("All Customers:") for customer in all_customers: print(customer) # Update num_orders for a specific customer if all_customers: customer_id = all_customers[0][0] # Take the ID of the first customer new_num_orders = all_customers[0][4] + 1 # Increment num_orders by 1 cursor.execute(''' UPDATE customers SET num_orders = ? WHERE id = ? ''', (new_num_orders, customer_id)) print(f"Orders updated for customer ID {customer_id}: now has {new_num_orders} orders.") conn.commit() cursor.close()

This outputs both the records and the message after the update query:

Output >>> All Customers: (1, 'Jennifer', 'Franco', 'jefferyjackson@example.org', 54) (2, 'Grace', 'King', 'erinhorne@example.org', 43) (3, 'Lori', 'Braun', 'joseph43@example.org', 99) (4, 'Wendy', 'Hubbard', 'christophertaylor@example.com', 11) (5, 'Morgan', 'Wright', 'arthur75@example.com', 4) (6, 'Juan', 'Watson', 'matthewmeadows@example.net', 51) (7, 'Randy', 'Smith', 'kmcguire@example.org', 32) (8, 'Jimmy', 'Johnson', 'vwilliams@example.com', 64) (9, 'Gina', 'Ellison', 'awong@example.net', 85) (10, 'Cory', 'Joyce', 'samanthamurray@example.org', 41) Orders updated for customer ID 1: now has 55 orders.

Deleting Records

To delete a customer with a specific customer ID, let’s run a DELETE statement as shown:

import sqlite3 # Specify the customer ID of the customer to delete cid_to_delete = 3 with sqlite3.connect('example.db') as conn: cursor = conn.cursor() # Execute DELETE statement to remove the customer with the specified ID cursor.execute(''' DELETE FROM customers WHERE id = ? ''', (cid_to_delete,)) conn.commit() f"Customer with ID {cid_to_delete} deleted successfully.") cursor.close()

This outputs:

Customer with ID 3 deleted successfully.

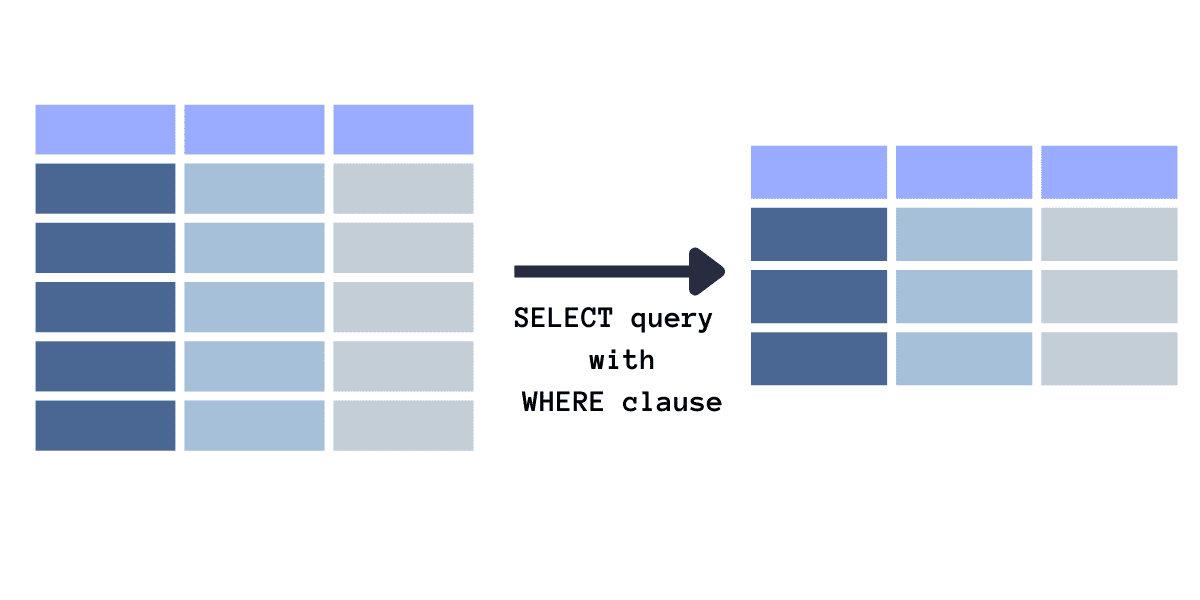

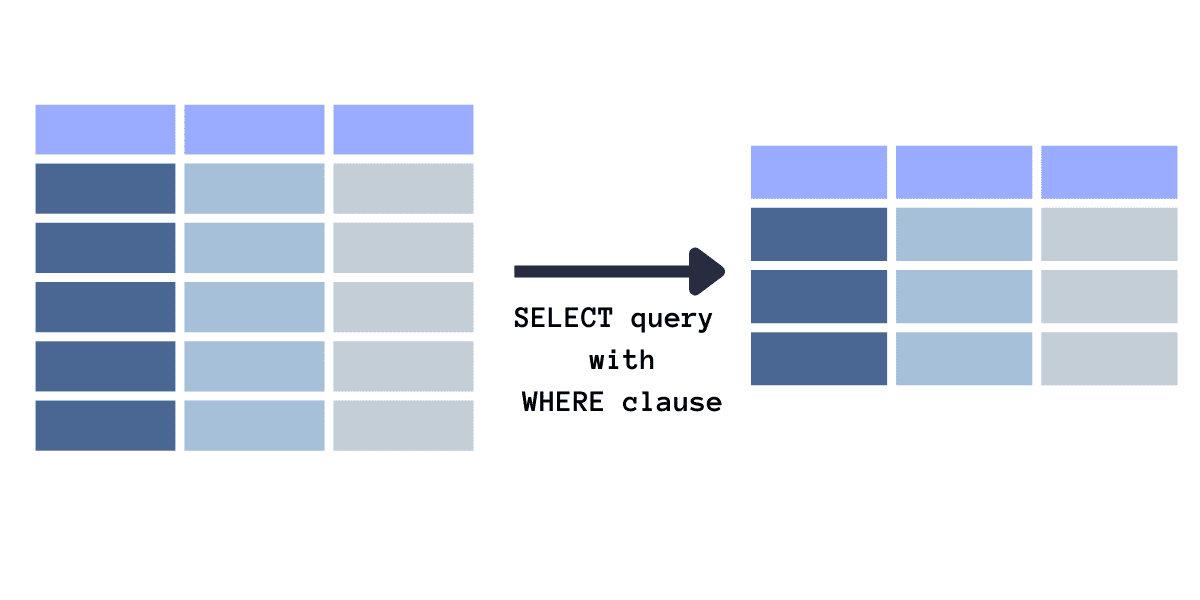

Filtering Records Using the WHERE Clause

Image by Author

Let’s say we want to fetch records of customers who’ve made fewer than 10 orders, say to run targeted campaigns and the like. For this, we run a SELECT query with the WHERE clause specifying the condition for filtering (in this case the number of orders). Here's how you can achieve this:

import sqlite3 # Define the threshold for the number of orders order_threshold = 10 with sqlite3.connect('example.db') as conn: cursor = conn.cursor() # Fetch customers with less than 10 orders cursor.execute(''' SELECT id, first_name, last_name, email, num_orders FROM customers WHERE num_orders < ? ''', (order_threshold,)) # Fetch all matching customers filtered_customers = cursor.fetchall() # Display filtered customers if filtered_customers: print("Customers with less than 10 orders:") for customer in filtered_customers: print(customer) else: print("No customers found with less than 10 orders.")

And here’s the output:

Output >>> Customers with less than 10 orders: (5, 'Morgan', 'Wright', 'arthur75@example.com', 4)

Wrapping Up

And that’s a wrap! This was a guide to getting started with SQLite with Python. I hope you found it helpful. You can find all the code on GitHub. In the next part, we’ll look at running joins and subqueries, managing transactions in SQLite, and more. Until then, happy coding!

If you’re interested in learning how database indexes work, read How To Speed Up SQL Queries Using Indexes [Python Edition].

Bala Priya C is a developer and technical writer from India. She likes working at the intersection of math, programming, data science, and content creation. Her areas of interest and expertise include DevOps, data science, and natural language processing. She enjoys reading, writing, coding, and coffee! Currently, she's working on learning and sharing her knowledge with the developer community by authoring tutorials, how-to guides, opinion pieces, and more. Bala also creates engaging resource overviews and coding tutorials.

More On This Topic

- Working with Python APIs For Data Science Project

- A Comprehensive Guide to Pinecone Vector Databases

- Working With Sparse Features In Machine Learning Models

- How to Ace Data Science Interview by Working on Portfolio Projects

- Want to Join a Bank? Everything Data Scientists Need to Know About…

- Working with Big Data: Tools and Techniques