This Week in Apps: Apple ‘sherlocks’ journaling apps, Twitter’s checkmark apocalypse, Snap summit recap

Sarah Perez @sarahintampa / 4 days

Welcome back to This Week in Apps, the weekly TechCrunch series that recaps the latest in mobile OS news, mobile applications and the overall app economy.

The app economy in 2023 hit a few snags, as consumer spending last year dropped for the first time by 2% to $167 billion, according to data.ai’s “State of Mobile” report. However, downloads are continuing to grow, up 11% year-over-year in 2022, to reach 255 billion. Consumers are also spending more time in mobile apps than ever before. On Android devices alone, hours spent in 2022 grew 9%, reaching 4.1 trillion.

This Week in Apps offers a way to keep up with this fast-moving industry in one place with the latest from the world of apps, including news, updates, startup fundings, mergers and acquisitions, and much more.

Do you want This Week in Apps in your inbox every Saturday? Sign up here: techcrunch.com/newsletters

Top Stories

Apple plans its next “sherlock:” journaling apps

My hope is this brings millions more into journaling, and those who are serious about it will upgrade to a serious paid tool like Day One. https://t.co/yJe867frze

— Matt Mullenweg (@photomatt) April 21, 2023

Apple is planning to “sherlock” a new class of applications if a new report from The Wall Street Journal holds true. The paper reported Apple is planning to introduce an iPhone journaling application as part of its expansion of health initiatives. The new app, which is unnamed, would challenge those on the market like Day One (acquired by WordPress.com maker Automattic in 2021). The WSJ said a document describing Apple’s app noted journaling helps to improve mental and physical well-being.

The app is reportedly set to arrive with the launch of iOS 17 and would put Apple again in the crosshairs of regulatory scrutiny. The company has come under fire in recent years for its habit of lifting ideas from the wider app developer and partner community. The practice has become so common, it’s got its own name — sherlocking — a reference to Apple software that started this trend decades ago.

The timing of this move is worth noting. Apple is currently under DoJ investigation for alleged anticompetitive behavior in the App Store and in other business practices. The DoJ has spoken to companies that have been “sherlocking” victims as part of its inquiry, including Tile, whose business was hit by the launch of Apple’s AirTag. The Justice Dept. has also spoken to other app developers, including smaller companies like Basecamp and parental control software maker Mobicip, as well as bigger developers like Match and Spotify, about Apple’s App Store terms.

For Apple to now launch yet another app that competes with a number of third-party developers shows Apple is not worried much about the regulatory pressure and isn’t adjusting its behavior.

Related to this, The WSJ also recently ran a feature on Apple’s “kiss of death,” citing partners who detailed what it felt like when the tech giant came calling. After initially being excited by the prospect of an Apple partnership, many partners now say Apple has stolen their ideas for itself, hurting their own businesses.

Twitter’s Checkmark Apocalypse has arrived — and it’s quite the debacle

Image Credits: Bryce Durbin / TechCrunch

Twitter has finally made good on its promise to yank its users’ verification checkmarks from their profiles in what has to be one of the more ridiculous decisions Elon Musk has made to date since taking ownership of the social media platform.

Seemingly not understanding the value of the company he owns, Musk believes that no one should be verified unless they’re paying Twitter. But in reality, the verification service was a resource provided to Twitter’s community that added value. The blue checkmark symbol indicated that a high-profile figure, celebrity, institution or journalist was who they said they were and not an impersonator.

Twitter’s legacy blue check mark era is officially over

Twitter is not a curated, visual platform like Instagram, where a verification mark (which you can also now pay for!) provides an influencer with clout or bragging rights. Instead, Twitter is a network that’s centered around the rapid-fire dissemination of news and information in real time. The checkmark meant the source had been already vetted to be the real person, organization or official in question, allowing for faster fact-checks. This aids in newsgathering and establishes a baseline of trust across the platform.

But of course, Musk doesn’t understand this.

He has such a low opinion of journalism that he went around adding “state-affiliated media” and then “government-funded” labels to the profiles of news outlets like PBS, NPR, CBC, BBC and others, lumping these editorially independent news-gathering organizations alongside state-run media entities like the Kremlin-backed Russia Today. Some of the news organizations finally left Twitter, something more should do, in fact. (No, I don’t control TC’s social media efforts.)

It’s unclear what’s happening with those labels now, as they’re disappearing from accounts on Friday, including those of China state-affiliated media.

Musk historically has demonstrated a callous disregard for journalism, calling The NYT “fake,” while tweeting out actual fake news himself. He also has Twitter’s comms email respond to press inquiries with a poop emoji. For that reason, it’s almost funny to watch Musk run headfirst into a wall with his complete mishandling of such a pivotal Twitter feature.

After all, if Musk had wanted to generate revenue from Twitter power users, he could have done so by giving ID-verified users their own checkmarks, perhaps with a different color scheme, that provided the set of special features and timeline prioritization that Twitter is now selling with its Blue subscription. That would have added value without disrupting the existing system.

Chaos reigns after Twitter’s blue checks vanish

Instead, he’s again created chaos by removing checkmarks from almost everyone, allowing for impersonation and, in some cases, the spread of dangerous misinformation as well. On top of that, he left legacy checkmarks on some high-profile accounts, like LeBron James and Stephen King, both of whom said they would not pay for Twitter Blue. It was a power play, clearly. If the celebs don’t leave, they’re tacitly confirming they’ve accepted the new system.

In addition, Twitter is being dishonest about who is truly a paid Twitter Blue subscriber.

Yours truly paid for Blue earlier this year to fact-check a story and then immediately canceled. I now continue to have a checkmark despite the subscription’s expiration in February, as documented below. (In any event, don’t bother to follow me on Twitter, by the way — I’m on Bluesky, T2, Post and Mastodon.)

I briefly paid for Blue earlier this year to check something out I needed to confirm. I immediately canceled. The subscription expired on Feb. 18, 2023. Yet Twitter would like you to believe that I am a continued Blue subscriber. That's not true. pic.twitter.com/N6eXQRY3Pr

— Sarah Perez – please use Signal not DMs (@sarahintampa) April 20, 2023

Along with the checkmark removals, Twitter has also now begun pressuring advertisers to either pay for Twitter Blue or Verified Organizations to continue running ads on the platform. Those businesses that already spend over $1,000 per month will have gold checks automatically, Twitter said.

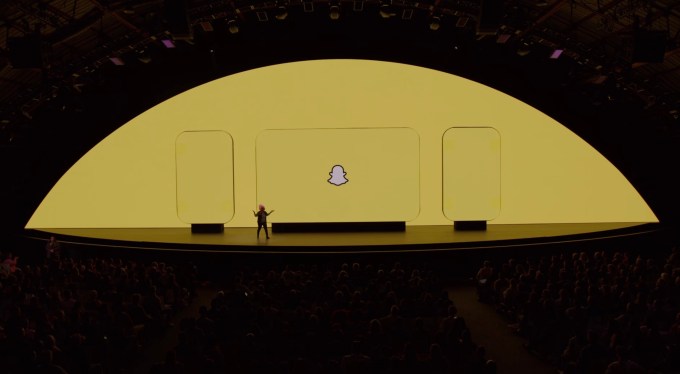

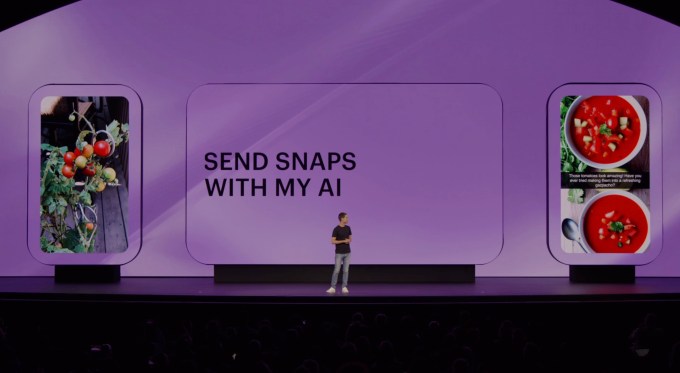

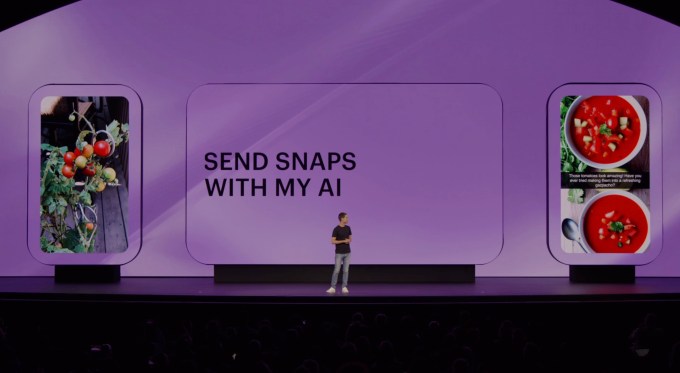

Snap’s Partner Summit focuses on Shopping, AR and AI

Image Credits: Snap

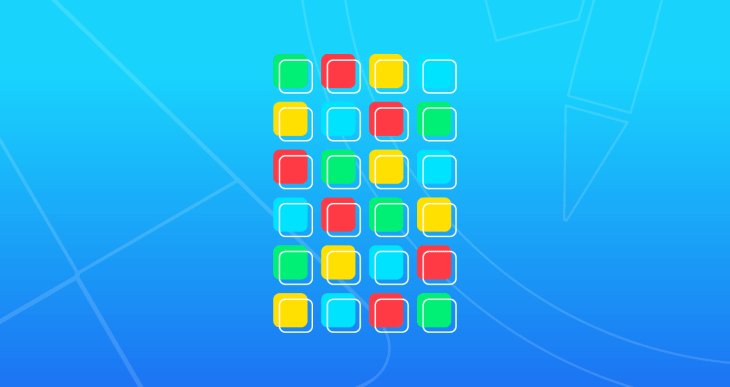

Snap this week hosted its Partner Summit where it shared a number of features, updates and initiatives in areas like e-commerce, AR and AI. The company also used the time to introduce a range of consumer-facing updates for its Snapchat mobile app.

At the event, CEO Evan Spiegel commented on the proposed TikTok ban in the U.S., joking at first that Snap would “love that,” but noting that such a ban sets a dangerous precedent for other social platforms. Though he acknowledged there could be national security concerns, the exec, like Zuckerberg, also pushed for tech regulations.

“It is important for us to be thoughtful and really develop a regulatory framework to deal with security concerns, especially around technology,” Spiegel said.

Snap CEO Evan Spiegel on TikTok ban: ‘We’d love that’

Among the other event highlights and news:

- Snap said the Snapchat+ paid subscription now has over 3 million users. That’s up from 2 million in February and 1 million last August.

- Snap opened its Public Story revenue share program to creators with at least 50,000 followers and 25,000 monthly Snap views who post at least 10x per month.

Image Credits: Snapchat

- Snapchat added new Story modes like “After Dark” for posts after 8 pm and “Communities,” which lets users interact with people at their school.

- Snapchat updated its flashback feature Memories to show friends what they were doing on a given day exactly a year ago.

- The Snap Map will start suggesting places that Snap thinks users would like. A new “Popular Last Night” tag will also show people where their friends were hanging out.

- Snapchat is adding an interactive Lens that lets users complete puzzles and play games together while they’re face-to-face on a video call.

Image Credits: Snapchat

- Snap also announced new AR Lenses powered by generative AI, starting with a new “Cosmic Lens” that turns you and your surroundings into an immersive, animated sci-fi scene. The move follows TikTok’s recently successful launch of the AI filter, “Bold Glamour.” The app will also use AI to recommend Lenses based on the photo or video users provide.

Image Credits: Snapchat

- Bitmoji’s avatar style is being updated with a more expressive look with realistic dimensions, shading and lighting.

- Snap’s enterprise biz, ARES, introduced AR Mirrors — a way to bring AR experiences to real-world locations, like retail stores. Men’s Wearhouse and Nike have used its AR Mirrors in stores and Coca-Cola is building a prototype drink machine with Snap that lets consumers use hand gestures to control the screen.

Image Credits: Snap

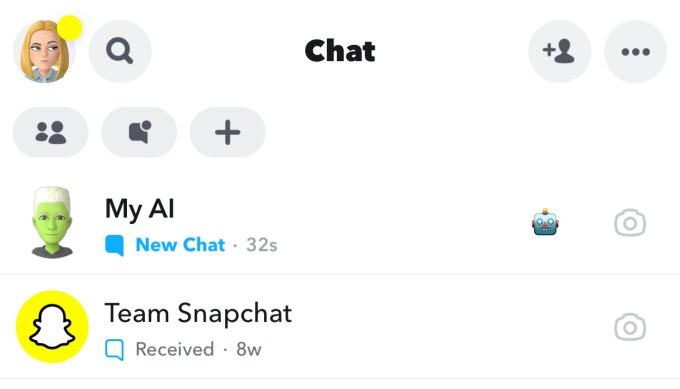

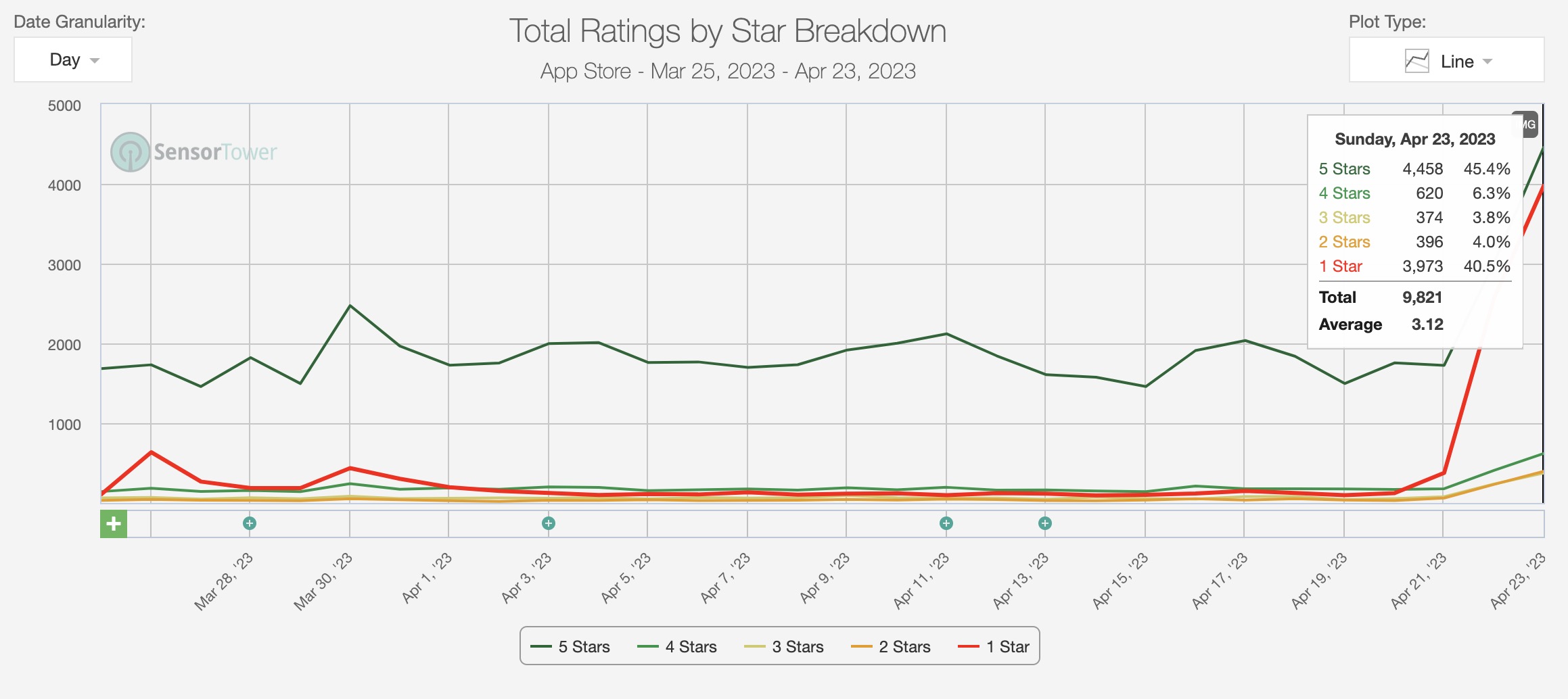

- Snapchat announced its AI chatbot, My AI, is now free for all Snapchat global users instead of only Snapchat+ subscribers, as before. However, Snap is also rolling out a subscriber-only My AI feature which will see the chatbot able to “Snap” you back using generative AI to create photos. The AI chatbot will also now be able to be added to group chats with an @mention, make recommendations for places on Snap Map, suggest Lenses and send chat replies when you send it Snaps.

Image Credits: Snap

Platform News

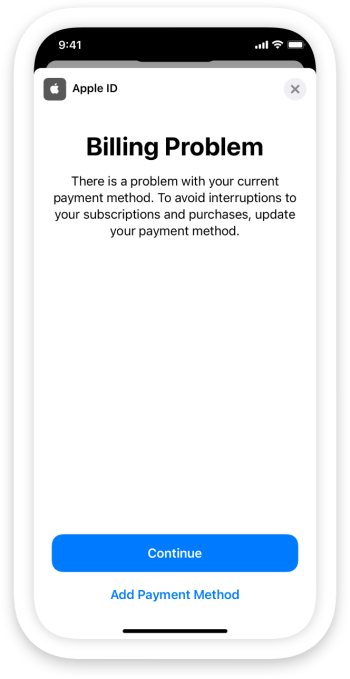

Apple

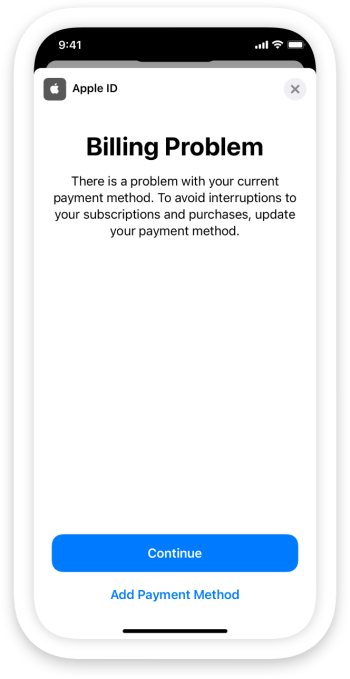

- Apple is introducing a new feature that will reduce the burden on app developers when it comes to solving subscription billing issues. Often, when an app’s subscribers have a payment method that fails, they’ll turn to the app developer for help. But the developer doesn’t handle billing issues for their App Store apps — those are managed by Apple itself. Now, Apple says a new warning message will appear to prompt users inside the app when their payment method fails, meaning they’ll no longer need to bother the developer for help with this common issue.

- Apple is rumored to be developing VR apps and services for its upcoming mixed-reality headset in categories like gaming, fitness, live sports and collaboration.

- Researchers said they found evidence that Apple’s Lockdown Mode has helped block an attack by hackers using spyware made by the infamous mercenary hacking provider NSO Group.

- Apple launched its Apple Card savings account inside Apple Wallet offering an attention-getting 4.15% APY. The accounts are open to Apple Card holders in the U.S. and are technically managed by Goldman Sachs, so they have FDIC protections.

- Apple Watch’s software is due to get its biggest update since its release, according to a new report by Bloomberg’s Mark Gurman. Details were sparse but we expect to hear more at WWDC.

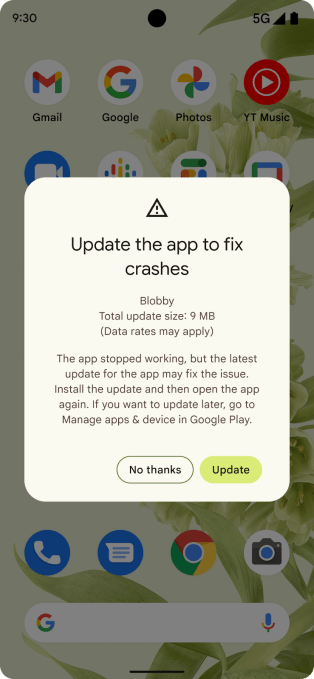

Google

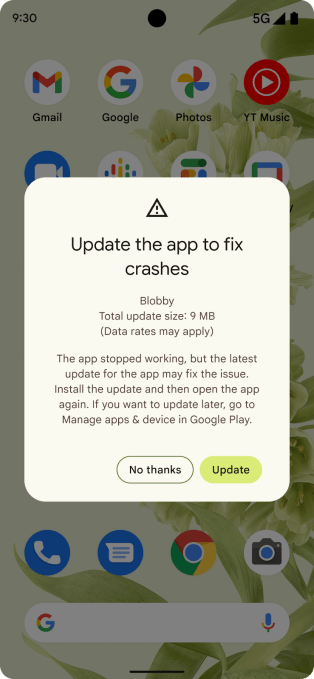

- Google Play will tell users to update their buggy, crashing apps: Google announced a new Play Store feature that will prompt users to update developers’ apps if the app crashes in the foreground and there’s a more stable version of the outdated app already available for download. The feature will apply to phones and tablets running Android 7.0 (SDK level 24) and above. Developers don’t need to do any integration work to take advantage of the feature, which is enabled automatically when Google Play determines a newer version of the app has a statistically relevant, lower crash rate.

- Ahead of Google I/O, a leak is suggesting the upcoming Google Pixel Tablet will be priced around €600-650 ($658.63-$713.52 if converted directly to USD) — pricier than rivals — and the dock will cost around $120.

- Google shared a number of updates to help app publishers increase revenue and grow their businesses with AdMob, including those around inventory access, bidding, revenue optimization and more.

- Google Play Points can now get you more stuff. The company this week announced changes to the program, which rewards users with points for making purchases on Google Play, to now include app offers, like $10 off DoorDash or Instacart; Google merchandise (like Chrome dino game socks!); in-game items and coupons; and Google Play Credit for in-app purchases, apps, books and subscriptions.

Image Credits: Google

App Updates

Messaging & Communications

- Telegram’s latest update brings shareable chat folders, custom wallpapers and other features to users. The app’s chat folders can now be shared with a link, the company says, allowing users to invite friends or colleagues to dozens of work groups, collections of news channels and more.

- Google Fi, the tech giant’s carrier service, is being rebranded to Google Fi Wireless and gaining new features, including the ability for users to add a Pixel Watch or Samsung Galaxy Watch to their plan at no extra charge. Users can also get a free phone for adding a line if they agree to stay with the service for 24 months, among other things. The options are available from the Google Fi mobile app and website, where consumers manage their service.

- The company behind the popular iPhone customization app Brass and others launched an AI chat app called Superchat, which allows iOS users to chat with virtual characters powered by OpenAI’s ChatGPT. Other companies already offer AI chats with characters in more advanced ways, including D-ID. Meanwhile, the developer of another AI chat app called Superchat says their concept was ripped off by another Superchat app before they could launch. “Super chat” is not a unique name, though, as it’s well-known as YouTube’s paid live chatting feature for creators and fans.

Gaming

- Roblox’s reach into a slightly older demographic is expanding, data shows. The gaming platform maker’s 17-to-24 age group has grown 33% year-over-year as kids are aging up but remaining on the platform.

- Netflix is launching a follow-up to the supernatural thriller Oxenfree after acquiring the studio behind the game (Night School Studio) in 2021. The company says Oxenfree II: Lost Signals will arrive on July 12 on Netflix, Nintendo Switch, PS4/PS5 and Steam. Netflix recently announced it has 40 games slated for launch this year and has 70 in development with its partners.

- Netflix also just hired former Halo Infinite creative head Joseph Staten to develop a multi-platform AAA title for the Netflix Games division. Staten will serve as a creative director at Netflix, he announced in a tweet, adding that his work will focus on developing original IP.

- Meta opened up its social VR space Horizon Worlds to teen users aged 13 to 17 after originally keeping it to 18 and up. The company said as part of its expansion it would include age-appropriate protections and safety defaults. Children’s rights activists had earlier urged Meta to abandon its plans to court younger users.

- Niantic announced a partnership with Capcom to launch a game within the Monster Hunter franchise later this year. The new mobile title will come to both iOS and Android and will have players hunt monsters in the real world.

The hunt is on. “Monster Hunter Now” launches September 2023. @MH_Now_En @CapcomUSA_ 🗡 https://t.co/EPA8vRXt8X 🗡 #monsterhunternow pic.twitter.com/oudIHJ90iD

— Niantic (@NianticLabs) April 18, 2023

Social

Image Credits: Meta

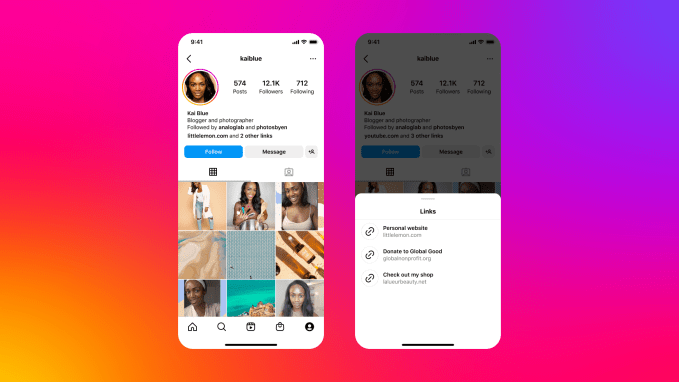

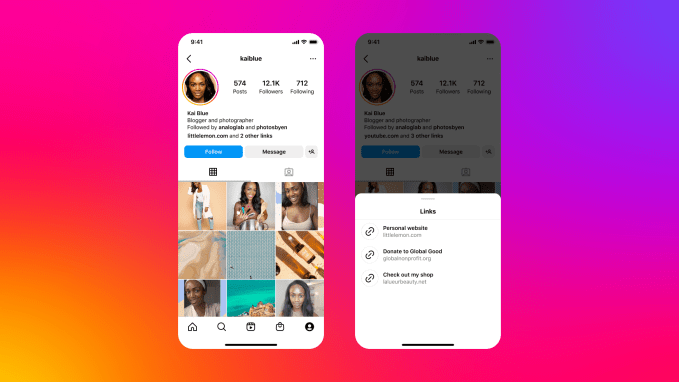

- Instagram said users can now add up to five links to their profiles, in a move that challenges Linktree, Beacons and numerous other “link in bio” solution providers.

- Reddit is shifting to a paid subscription model for API access, impacting app developers like the makers of the popular Reddit app, Apollo. The change will likely mean most third-party apps will need to shift to their own subscription model going forward. The company’s decision has to do with the demand for data to train AI models like OpenAI’s ChatGPT and others. “The Reddit corpus of data is really valuable…we don’t need to give all of that value to some of the largest companies in the world for free,” said Reddit CEO Steve Huffman.

- The Verge does a deep dive into ActivityPub, the open source, decentralized social networking protocol powering Mastodon and the wider Fediverse. Want to get up to speed on the state of the Fediverse and its potential? This is a good place to start.

- Fiction apps Wattpad and Yonder are now being overseen by KB Nam, previously head of Strategy and Research at their parent company Naver Webtoon. Nam will report directly to Webtoon Americas president, Ken Kim.

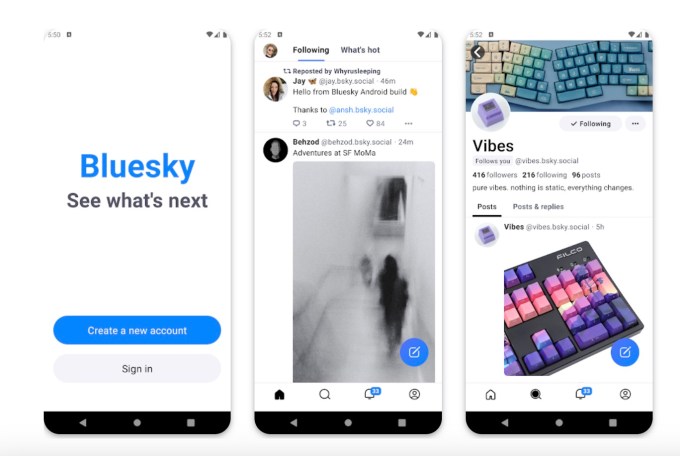

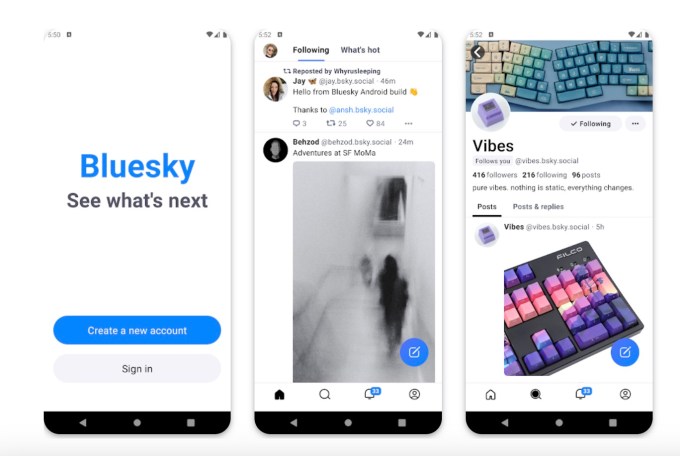

- The Jack Dorsey-backed Twitter alternative Bluesky arrived on Android but remains invite-only. The community has around 20,000 users but the app has been downloaded 240,000 times on iOS to date.

Image Credits: Bluesky

- Magazine app Flipboard is furthering its investment in the Fediverse with its newly launched “editorial desks” that curate news for the Mastodon community. Initially, the company will launch four desks — News, Tech, Culture and Science — which it says won’t be automated by bots but instead by professional curators who have expertise in discovering and elevating interesting content.

- Pinterest hired a Google Pixel VP to fill its CPO position. Sabrina Ellis spent the last 12 years at Google, where she led the work on Google Pixel. Previously, she spent eight years at Yahoo in numerous leadership roles. She will replace Pinterest’s current senior vice president of product, Naveen Gavini.

- Imgur plans to ban explicit images starting on May 15, while still allowing artistic nudity. The company says the service will adopt a mix of automatic and human moderation. The changes may impact NSFW subreddits (communities) on Reddit which allow for explicit images. The MediaLab-owned company cited the risk explicit content poses to Imgur’s “community and its business” as the reason for the move.

Streaming and Entertainment

Image Credits: Spotify

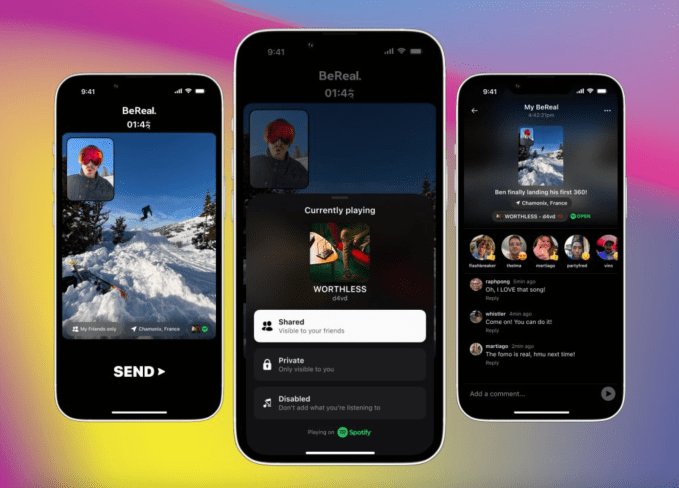

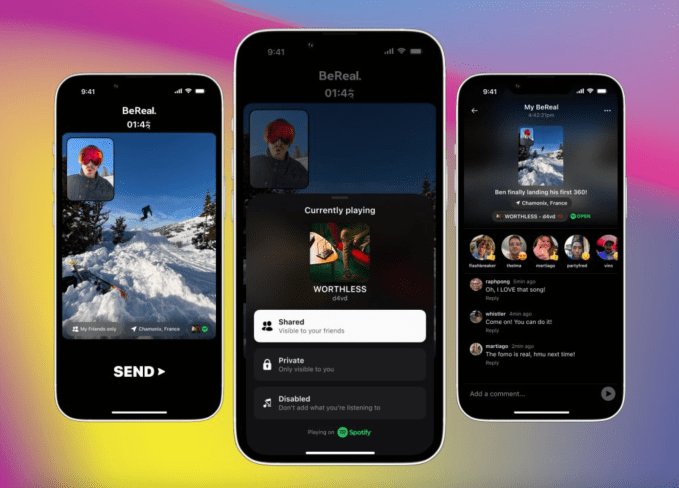

- Spotify announced it will now work with BeReal to allow the social app’s users to share what they’re listening to on Spotify through a new integration. After connecting your accounts, BeReal will automatically pull in the song or podcast you’re listening to on Spotify at the time you capture a BeReal.

- Creator company Jellysmack is partnering with Spotify to bring its creators to the streaming platform. A selection of its creators will upload weekly video podcast episodes to the service, including Ed Bolian (VINwiki), Audit the Audit, Christina Randall, Brooke Makenna, and Jessica Kent.

- Cameo introduced Cameo Collage, a free group-gifting feature. Gift givers can now combine celebrity Cameo videos with more personalized videos, images, GIFS and written messages from friends and family to create a digital collage for the recipient.

- Netflix in its Q1 earnings said it would begin its password-sharing crackdown in the U.S. and other countries this summer (Q2). It has already implemented the changes in Canada, New Zealand, Portugal and Spain.

- Netflix reported mixed earnings with revenue of $8.16 billion, behind estimates of $8.18 billion. It reported higher-than-expected earnings of $2.88 per share in Q1, as analysts had anticipated $2.86 per share.

Transportation

Image Credits: Google / Waze

- Waze on Google built-in has come to Volvo Cars and Polestar 2 cars. After a one-time setup, Volvo and Polestar drivers can access Waze’s real-time routing, navigation, alerts, settings, preferences and saved places on a bigger, eye-level display.

Health & Fitness

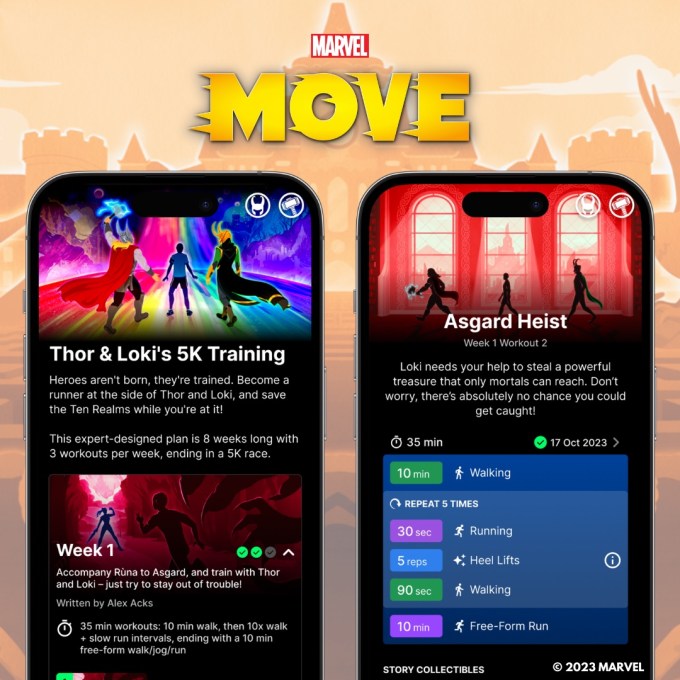

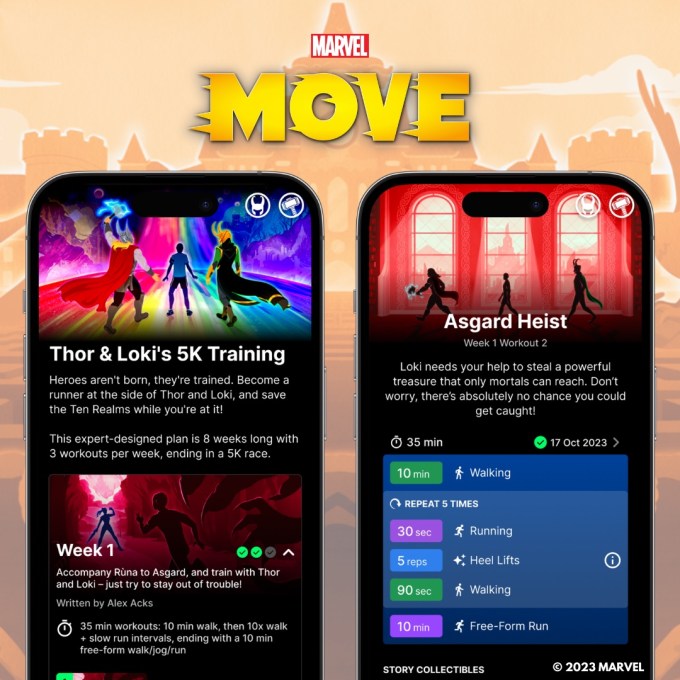

- Marvel announced a new mobile fitness app, Marvel Move, featuring immersive audio-based running routines with popular Marvel Comics characters. The app, part of a collaboration with Six to Start, co-creator of the popular fitness app Zombies, Run!, includes five storylines to choose from including Thor & Loki, X-Men, The Hulk, Daredevil and Doctor Strange and the Scarlet Witch.

News

- Samsung launched its own take on Apple News with its new “Samsung News” app that gives users access to everyday news from a variety of publications. The app will replace the company’s current “Samsung Free” app, and includes custom news feeds in addition to morning and evening briefings about the top news of the day.

Government, Policy and Lawsuits

- WhatsApp, Signal, Viber, Wire and other encrypted messaging apps signed an open letter asking the U.K. government to “urgently rethink” its Online Safety Bill legislation, which they say will force tech companies to break end-to-end encryption on private messaging services, weakening the “privacy of billions of people around the world.”

- Google has asked the court to dismiss multiple claims in its antitrust trial with Epic Games, Match, state AGs and others. In a new filing, Google’s legal team is now asking the court to dismiss several of the plaintiffs’ arguments regarding the nature of its app store business, revenue-sharing agreements and other app store-related projects in a partial motion for summary judgment. Google believes the court should now have enough information on hand to make determinations on a handful of the plaintiffs’ claims before the case goes to trial, saying that these items are not in violation of antitrust law. If the court agrees with Google’s position, the trial would still move forward as other claims would still need to be argued in court.

Google asks court to dismiss multiple claims in Epic Games antitrust trial

- The U.K. Competition and Markets Authority (CMA) opened a consultation on Google’s proposal to let developers use alternative payment methods for in-app purchases on Android, aka “User Choice Billing.” It’s inviting interested stakeholders to respond to Google’s proposal by May 19 and will then decide whether to accept the commitments and resolve the case. Google is suggesting it cut its commission by 4 percentage points if the developer offers Google’s own billing alongside their own. But the commission would be cut by only 3 percentage points if just third-party billing was offered.

- Montana lawmakers approved a bill that would ban TikTok and would bar app stores from offering the app within the state, starting on January 1, 2024. It’s unclear how such a measure would be enforced as the app stores don’t offer a way to block distribution by state, only by country.

Funding and M&A

- Starboard (formerly Olympic Media) concluded the acquisition of right-wing Twitter alternative Parler and shut it down. The company said of the decision: “No reasonable person believes that a Twitter clone just for conservatives is a viable business any more [sic].” The Parler app will undergo a strategic assessment and it’s not clear what the company has in store for its future.

- Epic Games expanded its Latin American footprint with its acquisition of the Brazilian game development studio Aquiris. The developer is best known for “Wonderbox: The Adventure Maker,” a magic-themed game-creation sandbox available on Apple Arcade.

- Myxt, an audio file management platform for creators, raised $2 million in seed funding led by Accel Ventures and Quiet Capital. The startup offers a collaborative workplace app for audio creators that’s available on web, iOS and Android, where users can stream tracks, organize files and back up their library.

- SoundHound closed on $100 million in strategic funding from Atlas Credit Partners as part of a new $125 million loan facility. The publicly traded company is using the money to refinance its debt and continue to fund its long-term strategy.

- Japanese gaming giant Sega is acquiring Finland’s Rovio in an all-cash deal worth €706 million ($775 million). The deal is expected to close in Q2 of Rovio’s fiscal year (in the next couple of months). Sega’s offer represents a 63.1% premium on Rovio’s closing price on January 19.

Downloads

Wavelength

Image Credits: Wavelength

An interesting new chat app called Wavelength has arisen out of the ashes of the social networking app Telepath, which shut down last year. Now the team has shifted its focus to improving group chat experiences. Instead of having different group chats, it introduces the idea of threaded messaging combined with AI. The threads help to keep group chats less cluttered by making it easier to follow multiple conversations at once.

In addition, users can add OpenAI’s GPT-3.5 into their group chats by mentioning @AI. This makes the app among the first to offer chatting with AI. Snapchat is also now doing this with its My AI feature as is Ghost, which allows groups to chat with ChatGPT.

The startup aims to focus on other areas as well, like privacy, moderation, discovery and more. Notably, John Gruber is an advisor for the app, which is currently available only on iOS and Mac.

You can read more about Wavelength here.

Wavelength is a new app trying to make group chat suck less

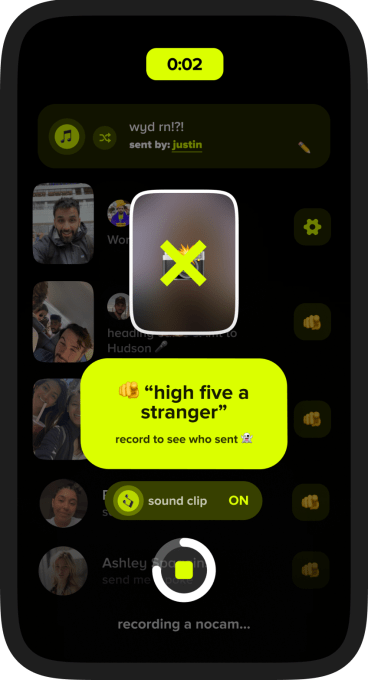

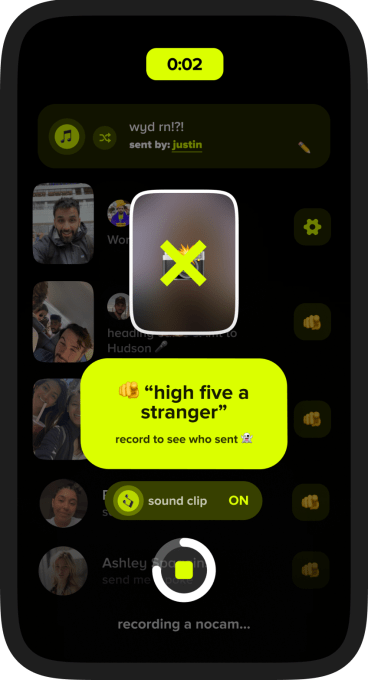

Nocam

A new social video app called Nocam has a radical idea to make social networking more authentic — it’s turning off the camera so you can’t see how you look while filming. The idea is to make capturing a moment feel natural while reducing the friction that comes with seeing a preview of your own image, which can often leave users hesitant to post or scrambling to add edits and filters to touch up their appearance.

Image Credits: Nocam

Nocam believes this concept better reflects the way people interact in real life, where we aren’t faced with a mirror that shows us what we look like. The company describes its app as BeReal meets TikTok. But perhaps it’s more accurate to say BeReal meets TikTok Challenges, as the app focuses on sending users fun or silly prompts they have to act out with the camera, like doing a dance or just showing what they’re up to. Users can also prompt their friends.

You can read more about Nocam here.

Nocam unveils a social video app that’s like BeReal meets TikTok challenges

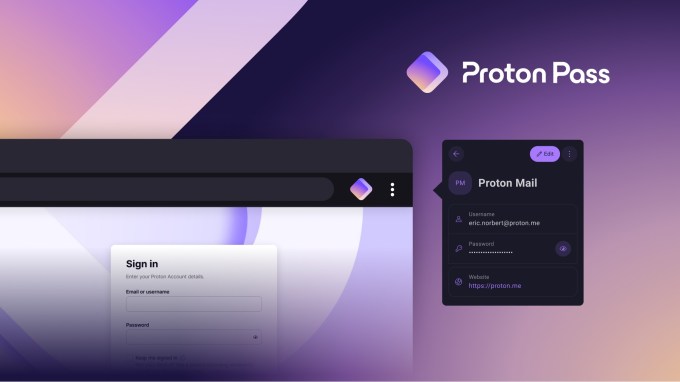

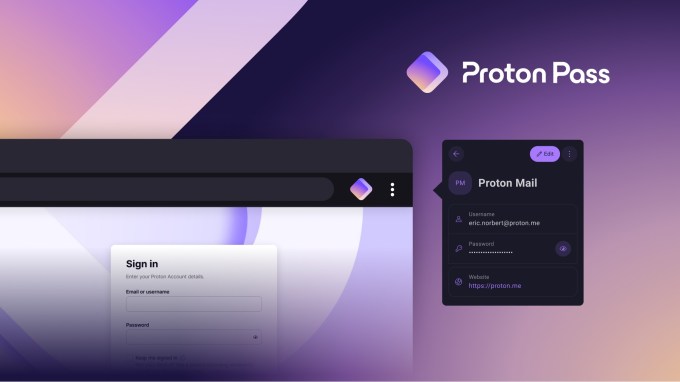

Proton Pass

Image Credits: Proton

Proton, the maker of the end-to-end encrypted email service Proton Mail, Proton VPN, Proton Drive and Proton Calendar, this week launched a new password manager called Proton Pass. Everything stored in the app is end-to-end encrypted, and Proton itself never has access to your data. The beta version is live now to Proton users with a lifetime plan and will then roll out to other subscribers and customers in the future.

The app comes about from the company’s acquisition of SimpleLogin, an email alias startup, and is available as a desktop browser extension and as an iOS or Android app.

You can read more about Proton Pass here.

Proton announces Proton Pass, a password manager

Etc.

Does anyone wish they still had their old phone?