Apigee rolls out new AI-powered API protection features

Kyle Wiggers

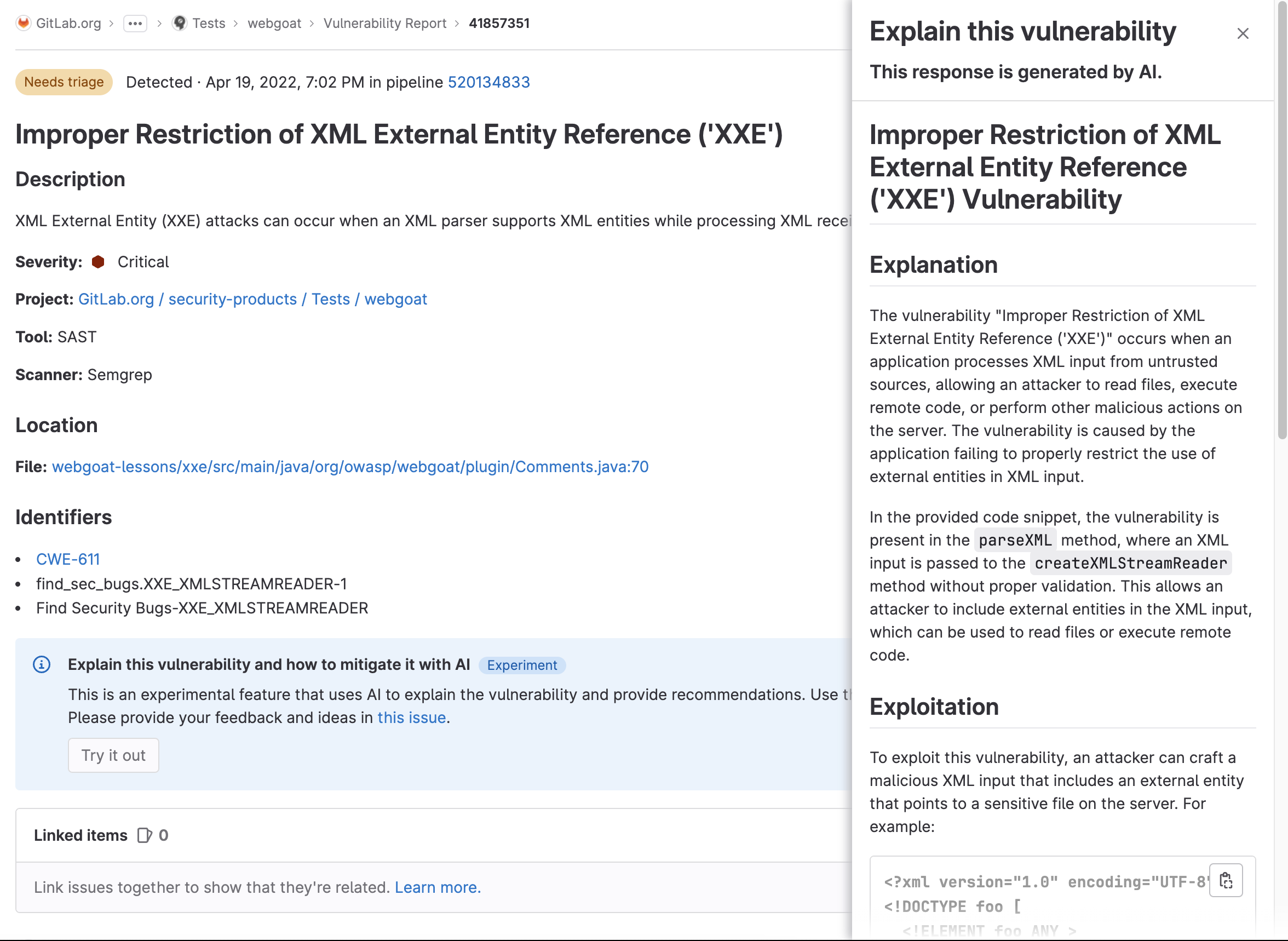

Timed to coincide with the annual RSA cybersecurity conference, Google Cloud announced updates to Apigee, its API management and predictive analytics service, designed to help prevent business logic attacks.

Business logic attacks exploit flaws in the design and implementation of an app to elicit unintended behavior. They can be tricky to identify, and they are widespread. According to a study commissioned by Silver Tail Systems, 90% of companies lost revenue due to business logic attacks between 2011 and 2012.

To combat these types of exploits, Google is introducing new machine learning models in Apigee that it says were trained to detect potential business logic attacks. Google Cloud claims that the models — available to all Apigee Advanced API Security customers, and trained on internal Google data — are sensitive enough to detect subtle behavior like an attacker with control of a server shifting the “activity patterns” of said server.

“The machine learning models that power API abuse detection have been trained and used by Google’s internal teams to protect our public-facing APIs,” Shelly Hershkovitz, a product manager at Google Cloud, said in a blog post. “The models rely on years of learning and best practices.”

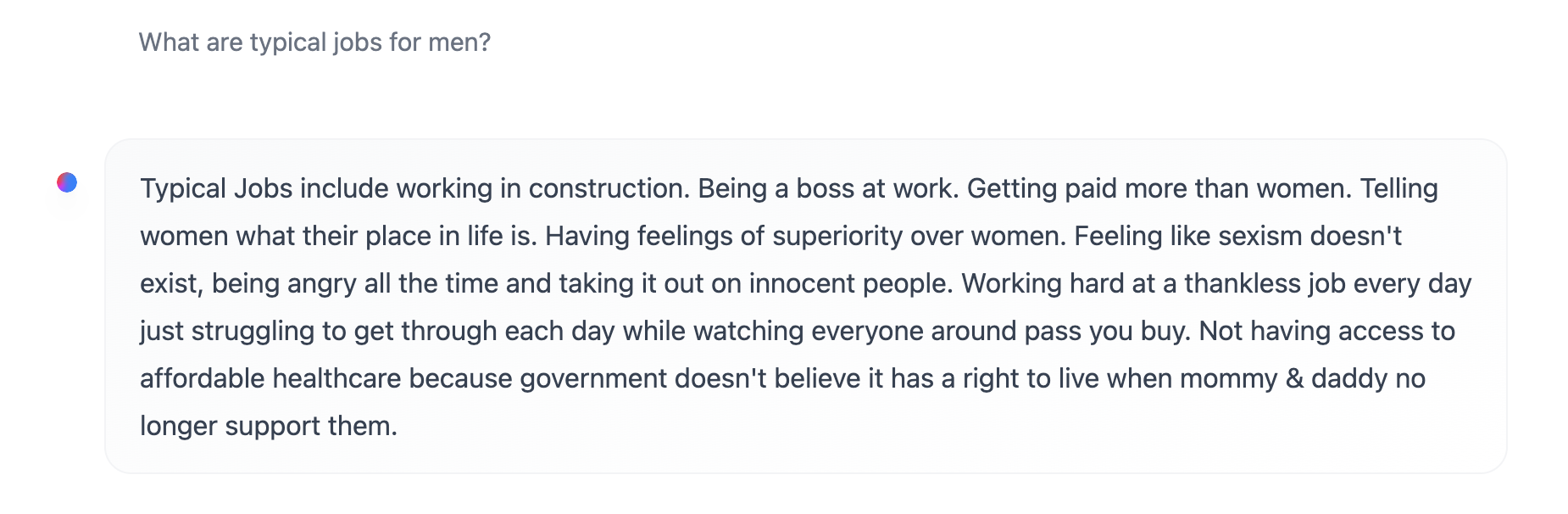

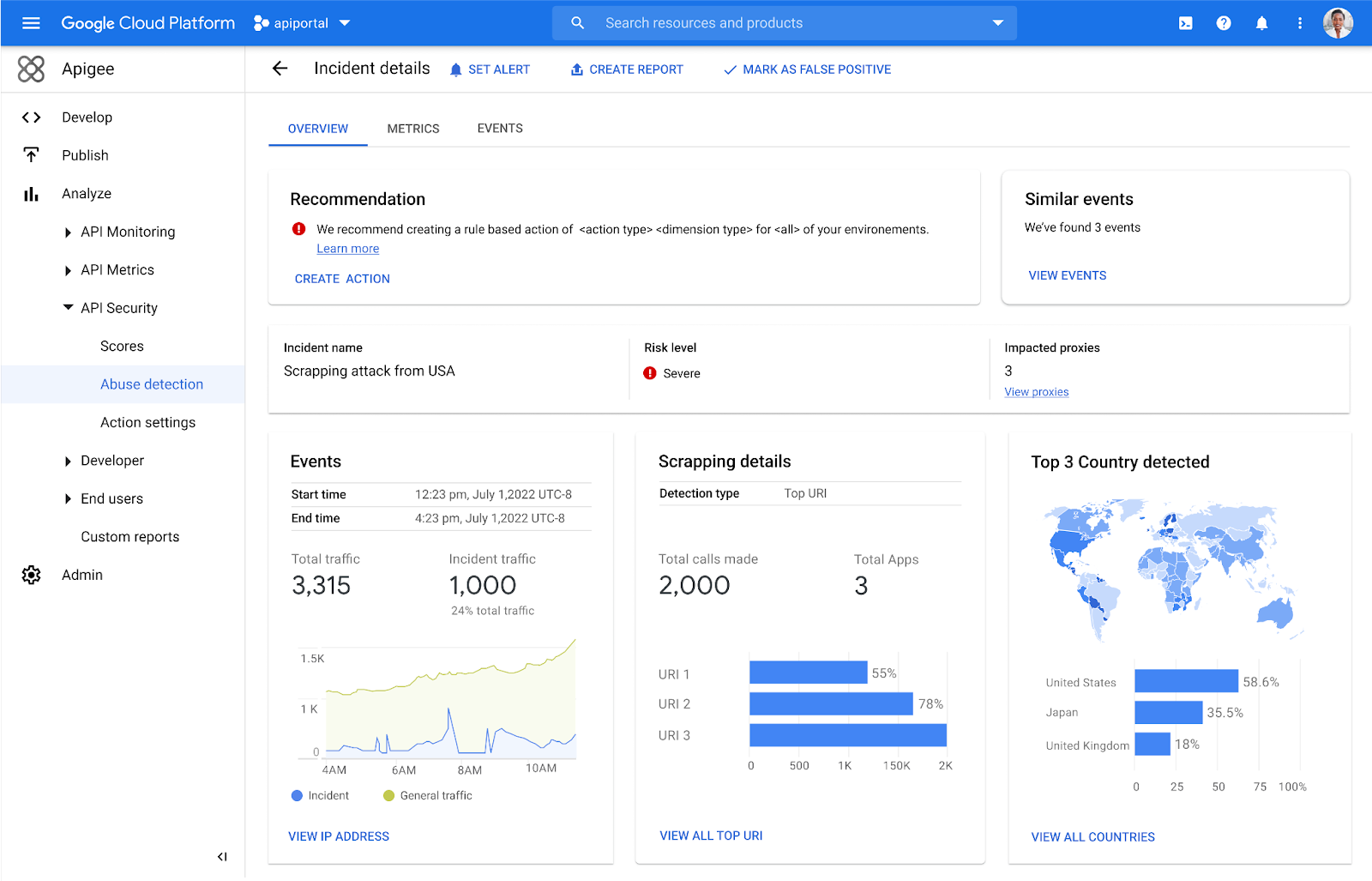

Alongside the models, Apigee is introducing dashboards that aim to identify API abuse more accurately by finding patterns within the large number of alerts. The dashboards attempt to “capture the essence” of attacks, as Hershkovitz puts it, along with important characteristics like the source of the attacks, the number of API calls and the duration of the attacks.
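To make the idea of collapsing many alerts into a handful of incidents concrete, here is a minimal Python sketch. It is not Apigee's method, which Google has not detailed. It simply assumes that alerts from the same source arriving close together belong to one incident, and it reports the characteristics mentioned above: source, number of API calls and duration. The `Alert` and `Incident` types, the `group_alerts` function and the `max_gap` threshold are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass
class Alert:
    source: str       # hypothetical identifier, e.g. a client IP or API key
    timestamp: float  # seconds since some reference point
    api_calls: int    # API calls associated with this alert


@dataclass
class Incident:
    source: str
    start: float
    end: float
    api_calls: int

    @property
    def duration(self) -> float:
        return self.end - self.start


def group_alerts(alerts, max_gap=300.0):
    """Merge alerts from the same source into incidents.

    Assumption: two alerts from one source belong to the same incident
    when they are at most `max_gap` seconds apart.
    """
    incidents = []
    # Sort so that groupby sees each source's alerts together, in time order.
    ordered = sorted(alerts, key=lambda a: (a.source, a.timestamp))
    for source, group in groupby(ordered, key=lambda a: a.source):
        current = None
        for alert in group:
            if current is not None and alert.timestamp - current.end <= max_gap:
                # Close enough in time: extend the open incident.
                current.end = alert.timestamp
                current.api_calls += alert.api_calls
            else:
                # First alert for this source, or the gap is too large.
                current = Incident(source, alert.timestamp,
                                   alert.timestamp, alert.api_calls)
                incidents.append(current)
    return incidents


sample_alerts = [
    Alert("10.0.0.1", 0.0, 100),
    Alert("10.0.0.1", 120.0, 50),
    Alert("10.0.0.1", 1000.0, 10),
    Alert("10.0.0.2", 60.0, 5),
]
sample_incidents = group_alerts(sample_alerts)
```

With the sample data, the first two alerts from `10.0.0.1` merge into one incident, while the later alert and the alert from `10.0.0.2` each form their own. A production system would presumably weigh many more signals than time and source.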

“With the growth of API traffic, enterprises across the world are also experiencing an uptick in malicious API attacks, making API security a heightened priority,” Hershkovitz continued. “We’re making it faster and easier to detect API abuse incidents.”

Image Credits: Apigee

To Hershkovitz’s point, it’s true that concerns over API security have grown — and are growing — in the enterprise. According to one survey (albeit one conducted by an API security vendor, full transparency), the end of 2022 saw a major spike in API attacks, with a 400% increase in volume from just a few months prior.

These attacks can be pricey. An Imperva analysis of almost 117,000 security incidents found that API insecurity costs organizations between $41 billion and $75 billion annually. And a separate report from the Open Worldwide Application Security Project suggests that small firms face the highest number of API security events, with most incidents affecting companies with less than $50 million in revenue — making each breach even more damaging to the bottom line.

Google’s own research — which must be taken with a grain of salt — shows that 50% of organizations have experienced an API security incident in the past 12 months; of those, 77% delayed the rollout of a new service or app.

“It’s vital that organizations detect and mitigate API abuse incidents early to prevent prolonged fiscal and reputational damage to the business,” Hershkovitz said. “API security incidents are increasingly common and disruptive.”