The availability of vast data sources and advanced machine learning technologies has given rise to a new system of influence known as influence engineering. It can guide user behavior and lead to new customer acquisition.

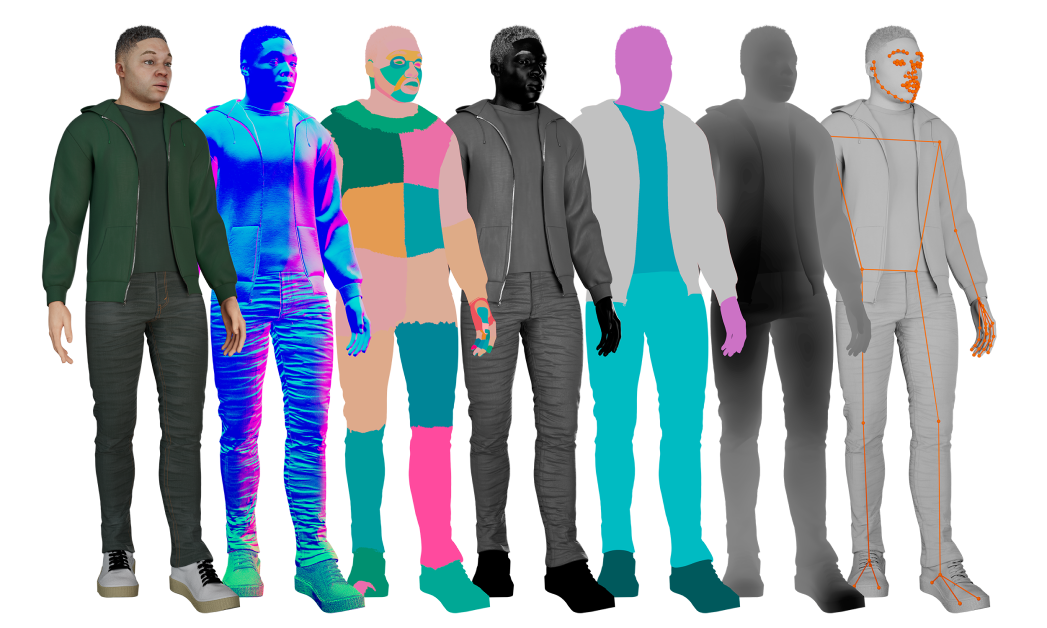

With computer vision and pattern analysis, companies can now recognize user emotions (an approach generally called emotion AI) to direct their decision-making process.

Also, the advancements in emotion detection and natural language processing techniques present a significant opportunity to automate influential aspects of consumer communication and digital marketing. In fact, in 2021, Gartner named influence engineering as one of the six emerging technologies expected to drive growth for digital marketing.

But what exactly is influence engineering, and how does it relate to emotion AI? Let’s explore the concept below, along with its benefits and applications.

What is Influence Engineering?

Influence engineering (IE) involves developing algorithms that utilize behavioral science techniques to automate particular aspects of the digital experience that can influence user choices on a large scale.

Companies collect and analyze data on user behavior and buying preferences to gain behavioral insights, and then use this information to create targeted messages and experiences that influence users’ decision-making processes. This involves personalization, social proof, scarcity, and other persuasion strategies related to marketing.

Types of Influence Engineering

The three main types of influence engineering include sentiment analysis, facial expression recognition, and voice analysis. Let’s look at them in detail below.

- Sentiment Analysis: Sentiment analysis, also known as opinion mining, is an NLP technique that categorizes user/customer data (reviews) as positive, negative, or neutral. It is commonly used on textual data to monitor brand or product sentiment in customer feedback and gain insights into customer needs.

- Facial Expression Recognition or FER: It uses computer vision algorithms to detect and analyze facial movements and expressions to determine an individual’s emotional state. FER is often used in psychology and marketing to gain insights into customers’ emotional responses and improve their buying or product experiences.

- Voice Analysis: Voice analysis identifies, measures, and quantifies emotions in the human voice. This technique can be used for various applications, such as identifying speakers, detecting emotions or sentiments in speech, and detecting stress or other psychological states based on vocal cues.
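
To make the sentiment analysis idea concrete, here is a minimal sketch in Python of a lexicon-based classifier that labels reviews as positive, negative, or neutral. The word lists, the `classify_sentiment` function, and the sample reviews are all hypothetical and chosen only for illustration; production systems typically rely on much larger lexicons or trained NLP models.

```python
import re

# Hypothetical toy lexicons (assumption): a real system would use a far
# larger, validated word list or a trained model instead.
POSITIVE_WORDS = {"good", "great", "love", "excellent", "happy", "fast"}
NEGATIVE_WORDS = {"bad", "poor", "hate", "terrible", "slow", "broken"}


def classify_sentiment(review: str) -> str:
    """Label a review by counting positive and negative lexicon hits."""
    # Lowercase the text and split it into simple word tokens.
    tokens = re.findall(r"[a-z']+", review.lower())
    positive_hits = sum(token in POSITIVE_WORDS for token in tokens)
    negative_hits = sum(token in NEGATIVE_WORDS for token in tokens)
    score = positive_hits - negative_hits
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# Made-up sample reviews, for illustration only.
reviews = [
    "Great product, I love it",
    "Terrible support and slow delivery",
    "The package arrived on Tuesday",
]
labels = [classify_sentiment(review) for review in reviews]

for review, label in zip(reviews, labels):
    print(f"{label:>8}: {review}")
```

A word-counting approach like this ignores negation, sarcasm, and context, which is one reason real sentiment analysis usually depends on statistical or neural models.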

Benefits of Influence Engineering

The advantages of influence engineering differ depending on the industry. For instance, on the healthcare front, it can monitor and detect changes in a patient’s mental health, providing early intervention and support to those in need. It can also assist therapists in providing more accurate diagnoses and tailored treatment plans.

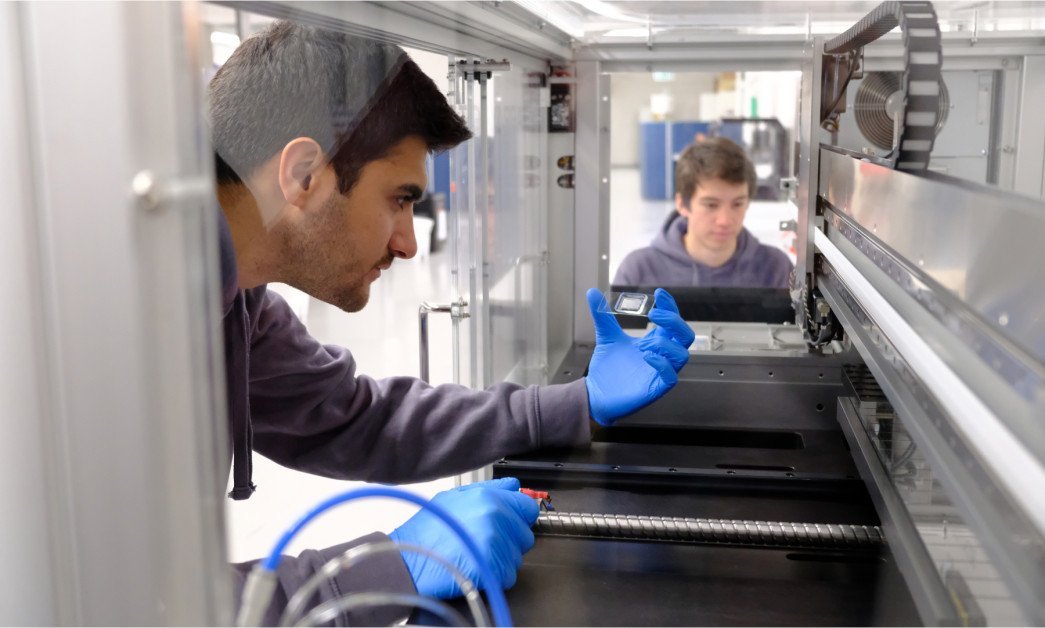

Hence, it can give knowledge workers such as marketers, advertisers, designers, engineers, and developers valuable input and feedback from their customers. Some major benefits of influence engineering include:

- Craft effective marketing campaigns: Influence engineering is well suited for making marketing decisions. It helps marketers better understand customer preferences, emotions, and behaviors and create more effective marketing campaigns that resonate with their target audience.

- Personalized products and services: By analyzing customer emotions and preferences, IE helps businesses develop personalized products and services that meet individual customers’ unique needs and preferences.

- Optimize store layouts and displays: It provides vendors and retailers with valuable insights into customer demographics, mood, and reactions in-store, helping them to optimize store layouts and displays to improve customer experiences.

- Enhanced customer support: IE can assist customer service representatives in detecting customer emotions and providing more personalized and empathetic interactions that improve customer satisfaction.

How Does Influence Engineering Relate to Emotion AI?

Influence engineering and emotion AI are interrelated as they both aim to understand and influence human behavior. Gartner states that:

“Emotion AI (or affective computing) is part of the larger trend of influence engineering. It uses AI techniques to analyze the emotional state of a user via computer vision, audio/voice input, sensors, and/or software logic. It can initiate responses by performing specific, personalized actions to fit the mood of the customer.”

Over the past five years, searches for emotion AI have increased by 380%. In 2022, the emotion detection and recognition (EDR) market, which utilizes emotion AI to accurately identify, process, and replicate human emotions and feelings, was valued at $39.63 billion.

These technologies are expected to become more mainstream in the coming years, considering that the AI-powered EDR market is projected to grow at a compound annual growth rate (CAGR) of around 17%, amounting to $136.46 billion by 2030.
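
As a quick sanity check on these figures, the following Python sketch computes the compound annual growth rate implied by the two market values quoted above. It assumes 2022 is the base year and that growth compounds over eight annual periods through 2030; the function names are illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` periods."""
    return start_value * (1 + rate) ** years


# Market sizes in billions of US dollars, as quoted in the text.
start_2022 = 39.63
end_2030 = 136.46
years = 2030 - 2022  # assumption: eight compounding periods

implied_rate = cagr(start_2022, end_2030, years)
print(f"Implied CAGR: {implied_rate:.1%}")
print(f"Projected 2030 value at 17%: {project(start_2022, 0.17, years):.2f}")
```

Under these assumptions, the implied rate comes out slightly below 17%, which is consistent with the "around 17%" figure.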

5 Useful Applications of Influence Engineering

Businesses have been leveraging emotion AI-based influence engineering in various applications, from personalized marketing campaigns to recruiting.

Here is a list of some major IE applications.

1. Market Research & Personalized Marketing Campaigns

Influence engineering enables market research and personalized marketing campaigns. It helps businesses analyze customer reactions to their products and services to improve marketing tactics and tailor strategies to meet customer preferences. Hence, it leads marketers toward data-driven decision-making, which results in personalized campaigns that increase customer engagement and loyalty.

2. Patient Care

Influence engineering in healthcare aids in patient care and counseling. For instance, an AI bot can be used to monitor patients’ physical and mental well-being. Affective computing, which uses speech analysis, can aid in diagnosing disorders like depression and dementia.

3. Biofeedback Gaming For Patients

Biofeedback gaming leverages influence engineering and emotion AI to understand the feelings and moods of the gamer (here, the patient). It is used in healthcare to help patients practice relaxation techniques while playing games, with the aim of teaching stress-management skills through video game play.

4. Autonomous Driving & Driver Assistance

In autonomous driving and driver assistance applications, influence engineering is used to track the driver’s emotional state and send alerts for risky driving. Also, affective computing can evaluate the driving performance of self-driving vehicles by monitoring passengers’ emotional states. By utilizing these technologies, automobile manufacturers can improve driving safety and experience.

5. Personalized Learning Experience For Students

Influence engineering can also be used to personalize the learning experience for students. Sensors like video cameras or microphones can monitor students’ emotional states to adjust lesson plans accordingly. Also, educators can use it to test online learning software prototypes by evaluating a learner’s emotional feedback. The result is a tailored and effective learning environment.

Major Challenges of Influence Engineering

The collection and monetization of personal emotional data through influence engineering pose significant risks to user safety and privacy. Companies that fail to manage or analyze emotional data carefully can lose customer trust, which damages their brand reputation and lowers customer retention.

Let’s discuss some major challenges of influence engineering below.

- Intimacy: Influence engineering deals with data that is profoundly intimate and personal. It can reveal a person’s behaviors, thoughts, and emotions. Sharing this kind of personal data is complex and requires great care from companies collecting and utilizing it.

- Intangibility: Emotional data can be difficult to understand and recognize. Sharing personal emotions is far more complex than sharing information like a street address, date of birth, or browsing history. Hence, the intangibility of emotional data presents a significant challenge for companies that use influence engineering.

- Ambiguity: The AI techniques used to interpret emotional data are neither transparent nor easily verifiable by consumers. This leaves room for interpretation errors and misreadings.

- Escalation: The decentralized nature of data collection and the speed at which data can be processed and disseminated means that mistakes can have far-reaching and difficult-to-reverse consequences.

While influence engineering, and particularly the collection of emotional data, presents significant challenges, companies can overcome these issues as technology progresses and generate better customer outcomes.
