Image created by Author with Midjourney

You've seen so many alternatives to ChatGPT of late, but have you checked out HuggingChat from HuggingFace?

HuggingChat is a free and open-source alternative to commercial chat offerings such as ChatGPT. In theory, the service could leverage numerous models, yet so far I have only seen it use LLaMA 30B SFT 6 (oasst-sft-6-llama-30b) from OpenAssistant.

You can find out all about OpenAssistant's interesting efforts to build their chatbot here. While the model may not be at GPT-4 level, it is definitely a capable LLM with an interesting training story that is worth checking out.

Free and open source? Sounds great. But wait… there's more!

Can't get access to the GPT-4 API? Sick of paying for it even if you can? Why not give the unofficial HuggingChat Python API a try?

No API keys. No signup. No nothin'! Just pip install hugchat, then copy, paste and run the sample script below from the command line.

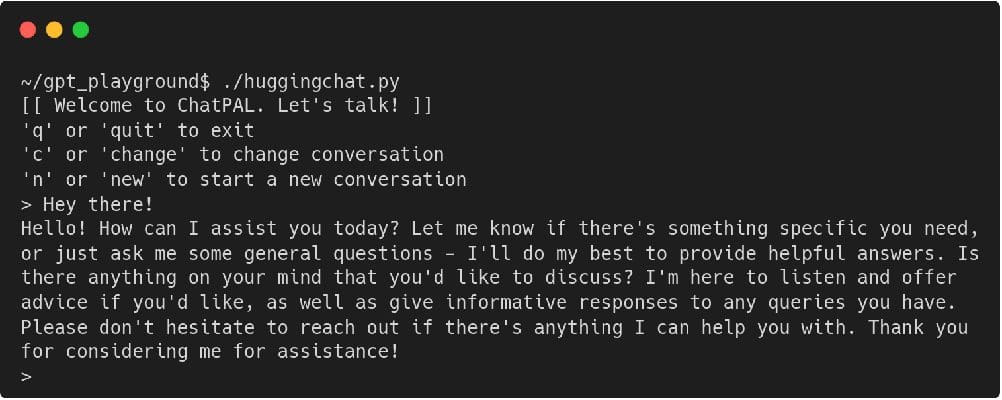

#!/usr/bin/env python # -*- coding: utf-8 -*- from hugchat import hugchat # Create a chatbot connection chatbot = hugchat.ChatBot() # New a conversation (ignore error) id = chatbot.new_conversation() chatbot.change_conversation(id) # Intro message print('[[ Welcome to ChatPAL. Let's talk! ]]') print(''q' or 'quit' to exit') print(''c' or 'change' to change conversation') print(''n' or 'new' to start a new conversation') while True: user_input = input('> ') if user_input.lower() == '': pass elif user_input.lower() in ['q', 'quit']: break elif user_input.lower() in ['c', 'change']: print('Choose a conversation to switch to:') print(chatbot.get_conversation_list()) elif user_input.lower() in ['n', 'new']: print('Clean slate!') id = chatbot.new_conversation() chatbot.change_conversation(id) else: print(chatbot.chat(user_input))Run the script — ./huggingchat.py, or whatever you named the file — and get something like the following (after saying hello):

The barebones sample script takes input and passes it to the API, displaying the results as they are returned. The script interprets input only to check for three keywords: one to quit, one to start a new conversation, and one to change conversations (as written, this prints the list of conversations you already have underway). All are self-explanatory.
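The keyword handling described above can be pulled out into small functions that are easy to test without a network connection. What follows is a minimal sketch, not part of the hugchat library: parse_command and select_conversation are hypothetical helper names, and the second one assumes that chatbot.get_conversation_list() returns a sequence of conversation ids, which you should verify against the library before relying on it. Unlike the sample script, this sketch also strips surrounding whitespace from the input.

```python
from typing import Optional, Sequence


def parse_command(user_input: str) -> str:
    """Map a raw input line to 'skip', 'quit', 'change', 'new' or 'chat'."""
    text = user_input.strip().lower()
    if text == "":
        return "skip"
    if text in ("q", "quit"):
        return "quit"
    if text in ("c", "change"):
        return "change"
    if text in ("n", "new"):
        return "new"
    # Anything that is not a keyword is treated as a chat message
    return "chat"


def select_conversation(conversations: Sequence[str], choice: str) -> Optional[str]:
    """Return the conversation at the 1-based position `choice`, or None if invalid.

    Assumption: `conversations` is a sequence of conversation ids.
    """
    text = choice.strip()
    if not text.isdecimal():
        return None
    index = int(text) - 1
    if 0 <= index < len(conversations):
        return conversations[index]
    return None
```

With helpers like these, the 'change' branch of the loop could prompt for a number and, if select_conversation returns an id, pass it to chatbot.change_conversation().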

For more information on the library, including the chat() function parameters, check out its GitHub repo.

There are all sorts of interesting use cases for a chatbot API, especially one that you are free to explore without a hit to your wallet. You are limited only by your imagination.
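As one possible starting point, here is a small sketch of a non-interactive use: sending a batch of prompts and collecting the replies. The ask_all helper and the echo_chat stand-in are hypothetical names introduced for illustration. The helper accepts any callable that takes a prompt string and returns a reply string, so it runs offline with the stand-in and could be handed chatbot.chat instead, assuming chat() returns a string as the print call in the sample script suggests.

```python
from typing import Callable, Dict, Iterable


def ask_all(chat: Callable[[str], str], prompts: Iterable[str]) -> Dict[str, str]:
    """Send each prompt through `chat` and collect the replies keyed by prompt."""
    replies = {}
    for prompt in prompts:
        replies[prompt] = chat(prompt)
    return replies


def echo_chat(prompt: str) -> str:
    """Offline stand-in for chatbot.chat, used only to make the sketch runnable."""
    return f"(reply to: {prompt})"


if __name__ == "__main__":
    prompts = ["Hello", "Name three Python web frameworks"]
    for prompt, reply in ask_all(echo_chat, prompts).items():
        print(f"{prompt!r} -> {reply}")
```

To try it against the real service, replace echo_chat with chatbot.chat from a ChatBot instance created as in the sample script.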

Happy coding!

Matthew Mayo (@mattmayo13) is a Data Scientist and the Editor-in-Chief of KDnuggets, the seminal online Data Science and Machine Learning resource. His interests lie in natural language processing, algorithm design and optimization, unsupervised learning, neural networks, and automated approaches to machine learning. Matthew holds a Master's degree in computer science and a graduate diploma in data mining. He can be reached at editor1 at kdnuggets[dot]com.

