Unite.AI's Images.ai is an AI image generator built on the open-source Stable Diffusion model. With a focus on simplicity and user-friendly design, Images.ai makes it easy for anyone to generate striking pieces of art with just a search term.

The platform also offers meme functionality, allowing users to share their creations with their community. To get the most out of our AI image generator, we'll explore how to use Images.ai effectively, diving into text prompts, effective prompt writing, prompt recipes, and more.

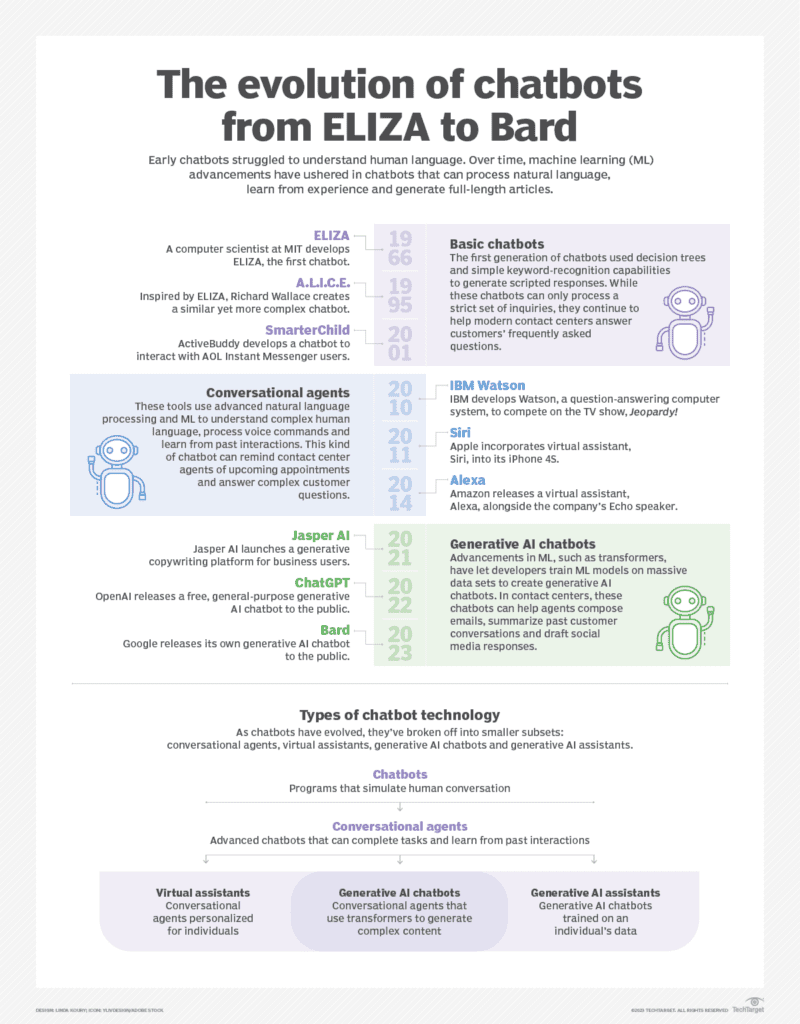

What are text prompts?

Text prompts serve as the input for AI image generators like Images.ai. They are simple phrases or descriptions that guide the AI in generating a visual representation based on the user's input. By providing clear and concise text prompts, users can communicate their desired image concept to the AI, allowing it to generate an image that closely matches their vision.

Understanding the role of text prompts in AI image generation is crucial for getting good results. A well-crafted prompt can mean the difference between a captivating, accurate image and one that misses the mark. The AI interprets the user's input and draws on patterns learned from its training images, rather than looking anything up in a stored collection, to generate a unique visual representation that aligns with the provided prompt.

How to write effective prompts for Images.ai

Writing effective prompts is essential for obtaining accurate and visually appealing results from Images.ai. Here are some tips to help you write compelling prompts:

- Be specific: Provide clear and detailed descriptions to guide the AI in generating the image you envision. Vague or ambiguous prompts can lead to unpredictable results. For instance, instead of writing “a dog,” try “a happy golden retriever playing in a field.”

- Use adjectives: Descriptive words can help communicate the style, mood, or atmosphere you want to achieve in the generated image. Examples of adjectives include “surreal,” “vibrant,” “mystical,” or “serene.” These terms can help guide the AI in generating an image that matches your desired aesthetic.

- Experiment with different phrasings: If you're not satisfied with the initial results, try rephrasing your prompt or using synonyms to explore different interpretations of your concept. For example, if “a mysterious forest” doesn't yield the desired outcome, consider using “an enigmatic woodland” or “a shadowy grove.”

- Combine concepts: Merge two or more ideas in your prompt to create a unique and interesting image. For example, instead of just “a cityscape,” try “a futuristic cityscape with floating cars and skyscrapers connected by sky bridges.”
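The "experiment with different phrasings" tip can be made systematic by listing the synonyms you want to try and pairing them up. The snippet below is a minimal sketch that only builds prompt strings to paste into Images.ai; it does not call the service, and the helper names `with_article` and `phrasing_variants` are hypothetical.

```python
from itertools import product


def with_article(phrase: str) -> str:
    """Prefix a phrase with 'a' or 'an' based on its first letter (a rough heuristic)."""
    article = "an" if phrase[0].lower() in "aeiou" else "a"
    return f"{article} {phrase}"


def phrasing_variants(adjectives, nouns):
    """Return every adjective/noun pairing as a candidate prompt."""
    return [with_article(f"{adj} {noun}") for adj, noun in product(adjectives, nouns)]


# Synonyms taken from the "mysterious forest" example above.
variants = phrasing_variants(
    ["mysterious", "enigmatic", "shadowy"],
    ["forest", "woodland", "grove"],
)

for prompt in variants:
    print(prompt)
```

Trying each variant in turn and noting which ones come closest to your idea is a quick way to learn which words the generator responds to.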

Image generated by Images.ai

What are prompt recipes?

Prompt recipes are pre-defined templates or patterns that users can follow to create effective text prompts for AI image generators like Images.ai. These recipes usually consist of specific structures or combinations of words that have been proven to yield impressive results with AI image generation. By using prompt recipes, users can take advantage of tried-and-tested formulas to generate high-quality images more consistently.

Examples of prompt recipes might include:

- [Subject] in a [setting] with a [mood] atmosphere: This recipe encourages users to provide a subject, setting, and mood to guide the AI in generating a detailed and evocative image.

- A [style] interpretation of [concept]: This recipe prompts users to specify a particular artistic style (e.g., impressionist, cubist, or abstract) and a concept for the AI to generate an image inspired by that style.

- A mashup of [concept 1] and [concept 2]: This recipe allows users to combine two seemingly unrelated concepts or ideas, resulting in a unique and intriguing image generated by the AI.
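Because each recipe is a sentence with named slots, it maps naturally onto a string template. The following sketch assumes nothing about Images.ai itself: it just fills the three recipes above and returns text you can paste into the generator. The `RECIPES` dictionary, its keys, and the `fill_recipe` helper are illustrative names, not part of the platform.

```python
# The three recipes from the list above, written as templates with named slots.
RECIPES = {
    "scene": "{subject} in a {setting} with a {mood} atmosphere",
    "style": "A {style} interpretation of {concept}",
    "mashup": "A mashup of {concept_1} and {concept_2}",
}


def fill_recipe(name: str, **slots: str) -> str:
    """Fill the named recipe; raises KeyError if the recipe or a slot is missing."""
    return RECIPES[name].format(**slots)


print(fill_recipe("scene", subject="A lighthouse", setting="stormy bay", mood="serene"))
print(fill_recipe("style", style="cubist", concept="a city at night"))
print(fill_recipe("mashup", concept_1="a jellyfish", concept_2="a chandelier"))
```

Keeping recipes in one place makes it easy to reuse a structure that worked well and swap only the slot values between attempts.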

Image generated by Images.ai

Mastering Images.ai for your creative projects

Images.ai offers a powerful and user-friendly platform for generating unique visual content using AI. To make the most of this tool, remember the following tips:

- Experiment with text prompts: Don't be afraid to try different prompts and phrasings to discover the full potential of Images.ai. With each iteration, you'll gain a better understanding of how the AI interprets your input and refine your prompts accordingly. The more you experiment, the more diverse and captivating your AI-generated images will become.

- Use the meme functionality: Tap into the platform's meme functionality to add a touch of humor or pop culture references to your images. This feature allows users to create engaging and shareable content that resonates with a broader audience. By incorporating trending memes, catchphrases, or popular characters, you can enhance the appeal of your AI-generated images.

- Create a personalized gallery: Sharing your gallery via a unique link lets others view and appreciate your AI-generated art. To find your gallery link, click “Profile” in the top right navigation menu and scroll down.

- Explore different artistic styles: Images.ai can generate images in various artistic styles, from impressionism to abstract art. Name different styles in your text prompts to diversify your AI-generated image collection and gain a deeper understanding of the platform's capabilities.

- Collaborate and learn from the community: Engage with other Images.ai users to learn from their experiences, share tips, and discuss the platform's features. By actively participating in the community, you can gain insights into the most effective strategies for using Images.ai and stay updated on new features and improvements.

Image generated by Images.ai

Images.ai by Unite.AI is a powerful and accessible AI image generator that empowers users to create stunning visual content with ease. By understanding and mastering text prompts, effective prompt writing, and prompt recipes, you can harness the full potential of Images.ai for your creative projects. As the platform continues to grow and evolve, users can expect even more impressive and versatile image generation capabilities. Embrace your inner artist and start exploring the possibilities with Images.ai today. With persistence and practice, you'll be well on your way to becoming an AI-generated art connoisseur.

We can't wait to see what creations you come up with!

Click Here to visit Images.ai