Please note that all information contained in this article is provided on an “as is” basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained herein or any third-party websites that may be linked. Before engaging in scraping activities of any kind you should consult your legal advisors and carefully read the particular website’s terms of service or receive a scraping license.

A lot has been written about fake news in recent years. Supercharged by events like the pandemic and the Russo-Ukrainian war, the topic has reached incredible levels of importance. With the advent of publicly available Large Language Models, the production times of fake news are likely to decrease, making the issue even more pressing.

Unfortunately, detecting fake news manually requires some level of expertise in the topic at hand, so recognizing inaccuracies and misleading content is impossible for most people. No one can be an expert at everything: a person who understands geopolitics well is unlikely to be equally qualified in medicine, and vice versa.

A good starting point is to define fake news. Several studies have shown that even academic research has not agreed upon a single definition. For our purposes, we may define fake news as a report that masquerades as reputable while having low facticity and a high intent to deceive, all in service of a specific narrative.

Another topic, less spoken about, is news bias. Some reporting might not be fake news per se, but might provide a highly specific interpretation of events that verges on factual inaccuracy. While such reports may not be as harmful, over a long period of time they may present important events in an overly negative or positive light and skew public perception in a certain way.

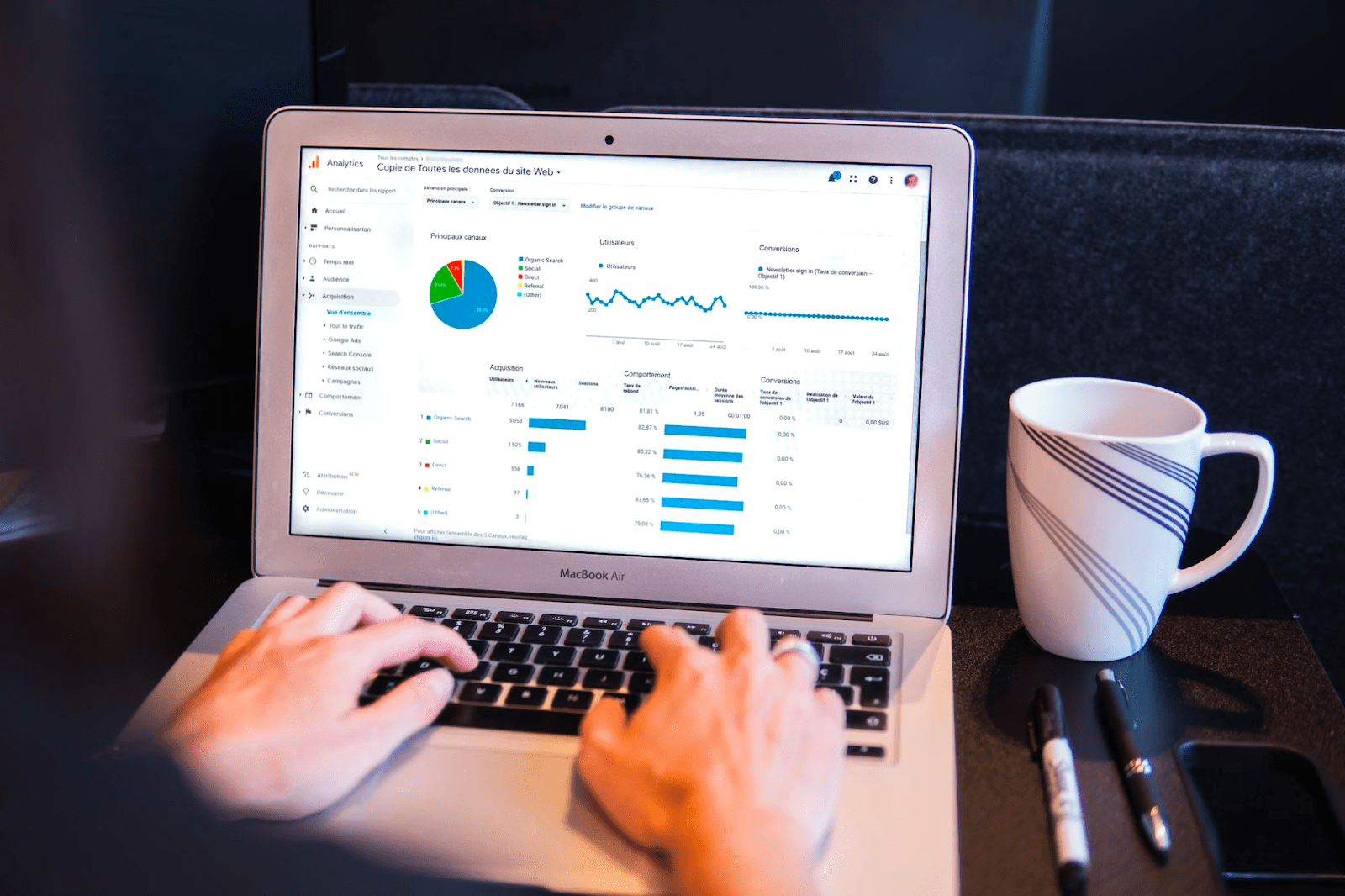

Both of these issues can be partly handled through the use of web scraping and machine learning. The former allows us to gather large amounts of information from news sources, while the latter can provide evaluations of the veracity and sentiment of the content.

Starting assumptions

I believe that sentiment analysis, when we consider news slant and its veracity, is important due to several factors. First, reporting pure facts is considered boring and, while truthful, usually does not attract large volumes of attention. We can see that even in reputable news sources, where clickbait is still often woefully abundant in titles.

So, most news sources, reputable or not, are inclined to build eye-catching headlines by including various emotional words in them. Most of the time, however, fake news outlets, or those with a strong intention to push a specific interpretation of events, will use significantly more emotional language to generate attention and clicks.

Additionally, we can define news slant, in general, as the overuse of emotional language to produce a specific interpretation of events. For example, politically leaning outlets will often report some news that paints an opposing party in a negative light with some added emotional charge.

There’s also a subject I won’t be covering, as it’s somewhat technically complicated. Some news sources outright do not publish specific news, fake or real, and in doing so skew the world view of their audience. It is definitely possible to use web scraping and machine learning to discover outlets that have missed specific events. However, it’s an entirely different problem that requires a different approach.

Finally, there’s an important distinction one could make when creating an advanced model – separating intentional and unintentional fake news. For example, it is likely that there were some factually inaccurate reports coming from the recent Turkey earthquakes, largely due to the shock, horror, and immense pain caused by the event. Such reports are mostly unintentional and, while not entirely harmless, they’re not malicious as the intent is not to deceive.

What is of most interest, however, is intentional fake news wherein some party spreads misinformation with some ulterior motive. Such news can be truly harmful to society.

Separating these types of fake news can be difficult if we want to be extremely accurate. A band-aid solution, however, could be to filter out recent events (say, less than a week old), as most unintentional reports appear soon after something happens.
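The recency filter described above could be sketched roughly as follows. This is only an illustration: the `event_date` field, the article dictionaries, and the `filter_settled_articles` helper are hypothetical, and the seven-day window simply mirrors the "less than a week old" suggestion.

```python
from datetime import datetime, timedelta, timezone


def filter_settled_articles(articles, now=None, min_age_days=7):
    """Keep only articles whose event is at least `min_age_days` old.

    `articles` is assumed to be a list of dicts with a timezone-aware
    `event_date` key (a hypothetical schema, not a standard one).
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=min_age_days)
    return [a for a in articles if a["event_date"] <= cutoff]


# Toy data with a fixed "now" so the result is reproducible.
now = datetime(2023, 3, 1, tzinfo=timezone.utc)
articles = [
    {"title": "Report A", "event_date": datetime(2023, 2, 27, tzinfo=timezone.utc)},
    {"title": "Report B", "event_date": datetime(2023, 2, 10, tzinfo=timezone.utc)},
]

settled = filter_settled_articles(articles, now=now)
print([a["title"] for a in settled])
```

Here only "Report B" survives, since "Report A" covers an event that is just two days old. The articles that get dropped are not necessarily wrong; they are simply set aside until the early, error-prone phase of reporting has passed.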

Finding news slant with machine learning

Sentiment analysis is the way to go when we want to discover slants within news articles. Before starting, a large collection of articles should be gathered, mostly from reputable sources, to establish a decent baseline for sentiment within regular articles.

Doing so is a necessary step, as no news source, no matter how objective, can create articles without some sort of sentiment in them. It’s nearly impossible to write an enticing news report while avoiding all language that carries sentiment.

Additionally, some events will naturally pull writers towards a specific choice of words. Deaths, for example, will nearly always be written in a way that avoids negative sentiment towards the person, as it’s often considered a courtesy to do so.

So, establishing a baseline is necessary in order to make proper predictions. Luckily, there are numerous publicly available datasets, such as EmoBank or WASSA-2017. It should be noted, however, that these are mostly intended for smaller pieces of text, such as tweets.

Building an in-house machine learning model for sentiment, however, isn’t necessary. In fact, there are many great options that can do all the heavy lifting for you, such as the Google Cloud Natural Language API. Additionally, the accuracy of such models is generally high, so their sentiment scores can be relied upon for this kind of analysis.

For data interpretation, any article falling far outside the established baseline should be eyed with some suspicion. There can be false positives. However, it’s more likely that a specific interpretation of events is being provided, which might not be entirely truthful.
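One simple way to make "far outside the established baseline" concrete is a z-score check. The sketch below assumes that every article has already been given a single sentiment score in the range -1 to 1 by whichever tool is used; the function names, the toy scores, and the threshold of two standard deviations are illustrative assumptions rather than recommended values.

```python
from statistics import mean, stdev


def fit_baseline(scores):
    """Summarize baseline sentiment scores as (mean, standard deviation)."""
    return mean(scores), stdev(scores)


def is_outlier(score, baseline, threshold=2.0):
    """Flag a score lying more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # Degenerate baseline: every score was identical.
        return score != mu
    return abs(score - mu) / sigma > threshold


# Hypothetical sentiment scores of articles from reputable sources.
baseline_scores = [0.1, -0.1, 0.0, 0.2, -0.2, 0.1, -0.1, 0.0]
baseline = fit_baseline(baseline_scores)

print(is_outlier(0.05, baseline))  # close to the baseline
print(is_outlier(-0.9, baseline))  # strongly negative article
```

With these toy numbers the first article sits comfortably inside the baseline, while the second is flagged. A flag is only a prompt for closer inspection, since false positives remain possible.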

Detecting fake news

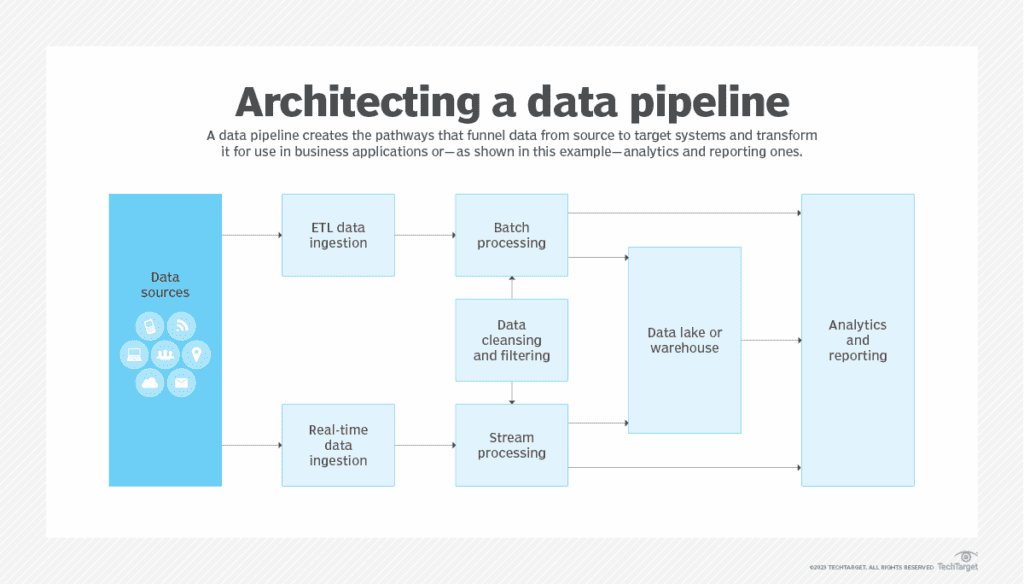

Sentiment alone will not provide enough data to decide whether some reports are true or not, as many more aspects of a news article and its source must also be evaluated. Machine learning is extremely useful when attempting to derive a decision from a complicated network of interconnected data points. It has the distinct advantage that we do not have to define the specific factors that separate legitimate from fake news. Machine learning models simply take in data and learn the patterns within it.

As such, web scraping can be utilized to collect an enormous amount of data from various websites and then label it accordingly. While there are predefined datasets for such use cases, they are quite limited and might not be as up-to-date with news cycles as one would expect.
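As a rough illustration of the collection step, the sketch below turns a downloaded page into a record that is ready for labeling, using only Python's standard `html.parser`. The HTML snippet, the `example-outlet` source name, and the record fields are made up for the example; real pages vary widely in structure and usually need site-specific extraction rules, and fetching them should respect each site's terms of service.

```python
from html.parser import HTMLParser


class ArticleParser(HTMLParser):
    """Collects the text of the first <h1> and of every <p> element."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.paragraphs = []
        self._current = None  # tag currently being read: "h1", "p", or None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "p") and self._current is None:
            self._current = tag
            self._buffer = []

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != self._current:
            return
        # Join the collected fragments and normalize whitespace.
        text = " ".join("".join(self._buffer).split())
        if tag == "h1" and not self.title:
            self.title = text
        elif tag == "p" and text:
            self.paragraphs.append(text)
        self._current = None


def parse_article(html, source, label=None):
    """Build an unlabeled (or pre-labeled) dataset record from raw HTML."""
    parser = ArticleParser()
    parser.feed(html)
    return {
        "source": source,
        "title": parser.title,
        "body": " ".join(parser.paragraphs),
        "label": label,  # filled in later by human annotators
    }


sample_html = """
<html><body>
  <h1>Council approves new budget</h1>
  <p>The city council voted on <b>Tuesday</b>.</p>
  <p>The vote passed 7 to 2.</p>
</body></html>
"""

record = parse_article(sample_html, source="example-outlet")
print(record["title"])
print(record["body"])
```

Keeping the `label` field empty at this stage separates collection from labeling, so the same records can be handed to several annotators.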

Additionally, since news articles are largely text-based, extracting enough data from them won’t be an issue. Labeling the data, however, might be a little more complicated. We would have to be aware of our own biases to provide an objective assessment. All datasets should be double-checked by other people, as simple mistakes or biases could create a biased model.
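One common way to quantify how well a second person's labels agree with the first is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. It is shown here only as one possible check, not as a method the approach depends on; the annotator lists are invented.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items")
    n = len(labels_a)
    # Share of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if both labeled at random with their own label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two independent annotators.
annotator_1 = ["fake", "real", "real", "fake", "real", "real", "fake", "real"]
annotator_2 = ["fake", "real", "fake", "fake", "real", "real", "real", "real"]

kappa = cohens_kappa(annotator_1, annotator_2)
disagreements = [i for i, (a, b) in enumerate(zip(annotator_1, annotator_2)) if a != b]

print(round(kappa, 3))
print(disagreements)  # indices of articles that need a second look
```

A kappa near 1 indicates strong agreement, while a value near 0 means the annotators agree no more often than chance would predict. The list of disagreements shows which articles should be reviewed before training.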

Sentiment analysis may again be used to iron out any errors. As we have assumed previously, fake news will have greater usage of highly sentimental language, so, before labeling a dataset, one may run the articles through an NLP tool and take the results into account.

Finally, with the dataset prepared, all that needs to be done is to run it through a machine-learning model. A critical part of doing it correctly will be picking the correct classifier as not all of them perform equally well. Luckily, there has been academic research conducted in this area for exactly the same purpose.

In short, the study authors recommend picking either SVM or logistic regression, with the former producing slightly, though not statistically significantly, better results. It should be noted, however, that most classifiers perform almost equally well, with the exception of the Stochastic Gradient Classifier.
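To show what the logistic regression option involves, here is a dependency-free sketch that trains a bag-of-words logistic regression with per-example gradient updates. It is a toy under stated assumptions: the six example headlines and their labels are invented, the hyperparameters are arbitrary, and a real project would more likely rely on an established library such as scikit-learn and a far larger labeled dataset.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train(texts, labels, epochs=200, lr=0.5):
    """Fit bag-of-words logistic regression; label 1 = fake, 0 = legitimate."""
    docs = [Counter(tokenize(t)) for t in texts]
    weights = {word: 0.0 for doc in docs for word in doc}
    bias = 0.0
    for _ in range(epochs):
        for doc, y in zip(docs, labels):
            z = bias + sum(weights[w] * c for w, c in doc.items())
            error = sigmoid(z) - y  # gradient of the log loss w.r.t. z
            bias -= lr * error
            for w, c in doc.items():
                weights[w] -= lr * error * c
    return weights, bias


def predict_proba(text, model):
    """Estimated probability that `text` is fake; unseen words are ignored."""
    weights, bias = model
    counts = Counter(tokenize(text))
    z = bias + sum(weights.get(w, 0.0) * c for w, c in counts.items())
    return sigmoid(z)


# Invented toy dataset: 1 = fake, 0 = legitimate.
texts = [
    "Shocking secret cure they don't want you to know",
    "Outrageous scandal exposed, you won't believe it",
    "Miracle pill destroys disease overnight, doctors furious",
    "Council approves budget for road repairs",
    "Central bank holds interest rates steady",
    "Researchers publish study on regional rainfall",
]
labels = [1, 1, 1, 0, 0, 0]

model = train(texts, labels)
print(predict_proba("Shocking miracle scandal exposed", model) > 0.5)
print(predict_proba("Researchers study council budget", model) > 0.5)
```

On this toy data the emotive headline is scored as likely fake and the neutral one as likely legitimate, which mostly reflects how sharply the invented examples differ in vocabulary. Real articles overlap far more, so features and evaluation need much more care.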

Conclusion

Fake news classifiers were usually relegated to an academic exercise as collecting enough articles for a proper model was enormously difficult, so many turned to publicly available datasets. Web scraping, however, can completely remove that problem, making a fake news classifier much more realistic in practice.

So, here’s a quick rundown of how web scraping can be employed to detect fake news:

- Fake news is likely to be more emotive, to cover pressing topics, and to carry a high intent to deceive. Sentiment and emotions are the most important part, as that’s often how interpretations of events are provided.

- The emotional value of an article can be assessed through sentiment analysis, using widely available tools such as the Google Cloud Natural Language API or by creating a machine learning model with datasets such as EmoBank.

- An important point to consider is event recency, as reports on recent events might contain inaccuracies without any particular intention to deceive.

- A trained model could be used to assess all of the above factors and compare articles of a similar nature across media outlets to assess accuracy.

Aleksandras Šulženko is a Product Owner at Oxylabs. Since 2017, he has been at the forefront of changing web data gathering trends. Aleksandras started off as an account manager overseeing the daily operations and challenges of the world’s biggest data-driven brands. This experience has inspired Aleksandras to shift his career path towards product development in order to shape the most effective services for web data collection.

Today as a Product Owner for some of the most innovative web data gathering solutions at Oxylabs, he continues to help companies of all sizes to reach their full potential by harnessing the power of data.