When encountering the labels “data-driven” and “data-centric”, one might first assume that they mean the same thing. In some situations, one might understand their different meanings but interchange the labels when explaining their differences. For the business user and for the developer, a clear distinction between the two is essential. We will primarily focus here on the data-centric development paradigm. But first, let us examine data-driven.

Depending on the perspective, the data-driven label is either wrong or correct. It can be wrong from a strategic perspective: business applications and use cases must align with the business mission and objectives, so development activities associated with data (e.g., analytics, decision science, data science, AI) must primarily be results-driven, business-driven, or mission-driven. In other words, the strategy must focus on the outcomes (the outputs of the development activities associated with data), not on the inputs (the data). Every organization has data, and these days most organizations probably have massive quantities of digital assets. Organizations, especially large ones, also have many buildings and other physical assets, but we wouldn’t say that those things are what drive the organization. A business is strategically pulled forward (driven) by its mission, goals, and objectives, not by its data.

Conversely, data-driven can be correct from a technology and innovation perspective: the emergence of new technologies (mostly digital) inspires innovations that are enabled by, fueled by, and sustained by the large, diverse data assets of the modern enterprise, and therefore the data does push forward (drive) these innovations and developments.

So, if the mission pulls and the data pushes, then what is different and essential about being data-centric? Without overplaying the metaphor, we could simply say that data-centric sits at the fulcrum between push and pull. That is where developers operate: they leverage the data to design, develop, and produce the desired business outcomes. That is the key to better outcomes from development activities associated with data.

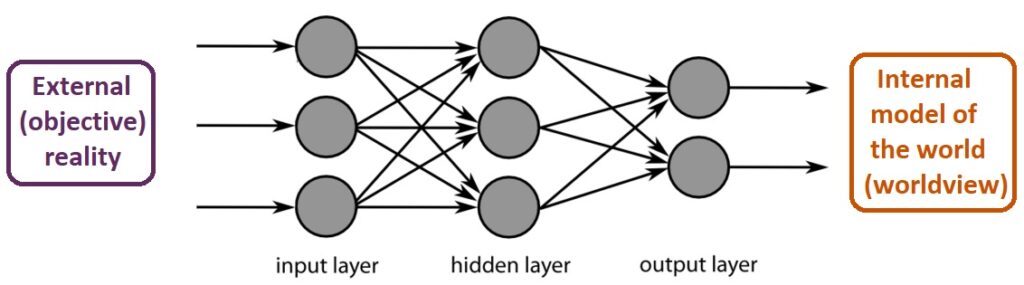

One key reason this matters is that traditional model-centric development tends to focus most effort on building, validating, and tuning the application while assuming that the data are fixed. This can lead to a situation where the model is built correctly, but it is not the correct model. In other words, the model-centric approach can drive you safely to the wrong destination. That is not good enough in a dynamic business environment and an evolving world. For example, the rapid changes in customer buying habits, network usage behaviors, and business processes during the pandemic caused many applications to “give the wrong answer” or deliver the wrong experience. Consequently, developers must systematically improve their applications and adjust them to such dynamic datasets to maintain accuracy in production. The data-centric approach therefore uses data to define what should be developed in the context of the stakeholders’ interests and needs, as informed by the data generated from the organization’s operations.
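One way to notice that production data has shifted away from what a model was built on is to compute a drift score per feature. The sketch below uses the Population Stability Index (PSI), a common drift measure that this article does not itself prescribe, to compare production values against a training-time baseline. The function name `psi`, the equal-width binning, the `eps` smoothing value, and the alert threshold of 0.25 are assumptions chosen for illustration.

```python
import math
from typing import List, Sequence

def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `actual` relative to a baseline `expected` sample."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the baseline range; fall back to width 1.0 if the baseline is constant.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or a division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))

# Assumed rule-of-thumb threshold (not a standard): PSI above 0.25 suggests major drift.
DRIFT_THRESHOLD = 0.25

baseline = [float(i % 10) for i in range(1000)]     # hypothetical training-time feature values
shifted = [float(i % 10) + 5 for i in range(1000)]  # hypothetical production values after a behavior change
print(psi(baseline, baseline))                      # → 0.0
print(psi(baseline, shifted) > DRIFT_THRESHOLD)     # → True
```

A score near zero means the two distributions match bin for bin; a large score is a cue to revisit the data before spending more effort retuning the model.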

There are additional important characteristics of data-centric development. For example, this approach encourages the use of the “right” data, not just “big data”. Sometimes the right data is relatively small and focused, such as in a hyper-personalized customer marketing campaign. Also, the right data might (or should) include third-party data sources external to the business, which can significantly improve model outcomes by incorporating contextual data on customer market demand, economic conditions, the competitive business landscape, social network customer sentiment, supply chain situational awareness, and more. The data-centric developer can then learn not only to develop more with less data, but also to develop better with less data.

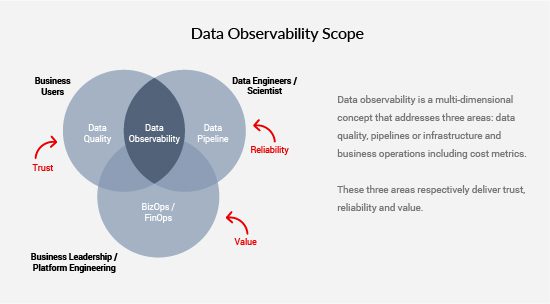

To maximize the quality of data-centric development, there must be a real emphasis on the centrality of the data. Consequently, more attention is given to improving data quality (through data profiling), providing consistent data labeling (particularly for machine learning models), developing deeper data understanding (through exploratory data analysis), producing smarter model inputs (through feature engineering and experimentation), and identifying actionable insights in outliers and anomalous data values.
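Two of these activities, profiling a column and flagging its outliers, can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a method from the article: the helper names are hypothetical, and the outlier rule is Tukey's interquartile-range (IQR) fences with the conventional multiplier `k = 1.5`, one common choice among many.

```python
import statistics
from typing import Any, Dict, List, Optional

def profile_column(values: List[Optional[float]]) -> Dict[str, Any]:
    """Summarize one numeric column: size, missing values, and basic statistics."""
    present = [v for v in values if v is not None]
    return {
        "count": len(values),
        "missing": len(values) - len(present),
        "min": min(present) if present else None,
        "max": max(present) if present else None,
        "mean": statistics.fmean(present) if present else None,
    }

def iqr_outliers(values: List[Optional[float]], k: float = 1.5) -> List[int]:
    """Return row indices whose value lies outside the fences [Q1 - k*IQR, Q3 + k*IQR]."""
    present = [v for v in values if v is not None]
    q1, _, q3 = statistics.quantiles(present, n=4)  # needs at least two non-missing values
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [i for i, v in enumerate(values) if v is not None and (v < low or v > high)]

order_amounts = [10.0, 11.0, None, 12.0, 10.5, 11.5, 250.0, 9.5]  # hypothetical sample column
print(profile_column(order_amounts)["missing"])  # → 1
print(iqr_outliers(order_amounts))               # → [6]
```

The flagged row is where the "actionable insight" question begins: it may be a data-entry error to clean, or a genuine anomaly worth investigating.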

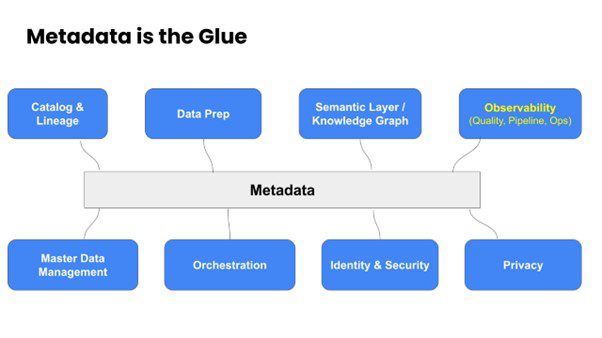

These data workflow orchestration activities can naturally lead to the development of a well-indexed data catalog and data fabric that will power rapid and easy data discovery, data access, and data delivery for developers at the moment of need. In fact, the principles of versioning, repositories, check-in, check-out, provenance, rollbacks, unit and regression testing, etc. from traditional software development best practices can be applied beneficially to these data workflow activities. For example, we are already seeing increased attention to data version control platforms. A systematic approach to these different steps can smoothly blend data-centrality into development activities, which are then less likely to miss the mark on required business objectives.
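To make the software-practice analogy concrete, the sketch below applies versioning, check-in, check-out, provenance, and rollback to a dataset held in memory. It is a toy illustration under stated assumptions: `DataRegistry` and `dataset_version` are hypothetical names, records are assumed to be JSON-serializable dictionaries, and a version id is simply a truncated SHA-256 hash of the data's canonical JSON form. Dedicated data version control platforms handle storage, scale, and collaboration that this sketch ignores.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List

def dataset_version(records: List[Dict[str, Any]]) -> str:
    """Content hash of a dataset: identical data always yields the same version id."""
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

@dataclass
class DataRegistry:
    """Hypothetical in-memory registry recording dataset versions with provenance notes."""
    history: List[Dict[str, str]] = field(default_factory=list)
    snapshots: Dict[str, List[Dict[str, Any]]] = field(default_factory=dict)

    def check_in(self, records: List[Dict[str, Any]], source: str, note: str) -> str:
        version = dataset_version(records)
        # JSON round-trip stores an independent copy, so later edits cannot alter history.
        self.snapshots[version] = json.loads(json.dumps(records))
        self.history.append({"version": version, "source": source, "note": note})
        return version

    def check_out(self, version: str) -> List[Dict[str, Any]]:
        return json.loads(json.dumps(self.snapshots[version]))

    def rollback(self) -> str:
        """Discard the latest check-in and return the version that is current again."""
        if len(self.history) < 2:
            raise ValueError("nothing to roll back to")
        self.history.pop()
        return self.history[-1]["version"]

registry = DataRegistry()
v1 = registry.check_in([{"id": 1, "label": "cat"}], source="crm_export", note="initial load")
v2 = registry.check_in([{"id": 1, "label": "dog"}], source="crm_export", note="relabeled")
print(v1 != v2)               # → True
print(registry.rollback() == v1)  # → True
```

Hashing the content rather than numbering versions sequentially means the same data always maps to the same id, which makes provenance checks and regression tests against a known dataset straightforward.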

Another value proposition for the business from data-centric development is the establishment of a portfolio mindset, in which the developed data products can be packaged across a spectrum of applications (such as descriptive, diagnostic, predictive, and prescriptive analytics), with a range of complexity in each category, from simple day-to-day operational tools up to major innovation initiatives. The success of the smaller data-centric development projects will build confidence, broader engagement, and greater advocacy across the organization (from top-level management to the end-user business workers) for larger (perhaps high-risk, high-reward) development products and services. The portfolio framework places each of these projects logically into a digestible and easily understandable data-centric development roadmap.

An added benefit of the portfolio approach to data-centric development is that the connections, interactions, and communication channels between developers and the rest of the business (executives, end-users, and other stakeholders) are strengthened across many touchpoints and threads. This, in turn, builds a culture of data-sharing, data democratization, data literacy, and data experimentation. The benefits of these culture shifts can be significant in advancing the overall digital transformation of the organization.

One can itemize the following characteristic benefits to the broader enterprise derived from data-centric development: actionable insights discovery, continual business growth, improved project outcomes, optimized operations, prediction of emerging trends and requirements, and the verticalization of development (because each data application is highly domain- and task-specific, general models and code are not sufficient).

What are some concrete steps that developers can take to become more data-centric? First, recognize that while data-driven development can take you to the wrong destination, data-centric development can avoid such an outcome through regular monitoring of the data and feedback (mid-course corrections). Second, improving a model for hard, rare cases through model tuning and tweaking may yield a slightly better result after much effort, but a better result may come more easily and quickly by giving more attention to tuning, tweaking, selecting, improving, and cleaning the data. Third, when the model gives poor results for some cases, focus on the corresponding input data for those cases to see whether there are poor, inconsistent, or inaccurate labels, or simply too few examples of those cases. If possible, create some synthetic (augmented) data to boost the underrepresented classes in an unbalanced input dataset. The effort spent on cleaning and improving the data is time well spent because it benefits future development projects, unlike model-specific tweaks that serve only the current project.
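The third step, inspecting the inputs behind poor results for inconsistent labels and then boosting underrepresented classes with synthetic examples, can be sketched as follows. The helper names are hypothetical. The conflict check only catches identical inputs that carry different labels (near-duplicates would need a similarity measure), and the augmentation assumes numeric feature tuples for which a small Gaussian jitter (the `noise` parameter, an assumed value) does not change the correct label. Other techniques, such as SMOTE or domain-specific transformations, may suit real data better.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, List, Set, Tuple

Example = Tuple[Tuple[float, ...], str]  # (numeric features, label)

def conflicting_labels(examples: List[Example]) -> Dict[Tuple[float, ...], Set[str]]:
    """Return inputs that appear more than once with different labels."""
    seen: Dict[Tuple[float, ...], Set[str]] = defaultdict(set)
    for x, y in examples:
        seen[x].add(y)
    return {x: labels for x, labels in seen.items() if len(labels) > 1}

def augment_minority(examples: List[Example], noise: float = 0.01, seed: int = 0) -> List[Example]:
    """Add jittered synthetic copies of minority-class examples until all classes are equal in size."""
    rng = random.Random(seed)
    by_class: Dict[str, List[Tuple[float, ...]]] = defaultdict(list)
    for x, y in examples:
        by_class[y].append(x)
    target = max(len(xs) for xs in by_class.values())
    out = list(examples)
    for label, xs in by_class.items():
        for _ in range(target - len(xs)):
            base = rng.choice(xs)
            # Perturb each feature slightly so the new point is not an exact duplicate.
            synthetic = tuple(v + rng.gauss(0.0, noise) for v in base)
            out.append((synthetic, label))
    return out

data = [((1.0, 2.0), "ok"), ((1.1, 2.1), "ok"), ((1.0, 2.0), "fraud"),
        ((5.0, 5.0), "ok"), ((6.0, 6.0), "fraud")]  # hypothetical labeled examples
print(conflicting_labels(data))  # the input (1.0, 2.0) is labeled both "ok" and "fraud"
print(Counter(y for _, y in augment_minority(data)))  # both classes now have 3 examples
```

In practice, augmentation would typically be applied only to the training split, so that synthetic copies do not leak into the data used for evaluation.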

In summary, data-centric developers focus intentionally on taming and leveraging massive enterprise and external data assets to increase business agility and to empower data consumers, who include internal teams, business partners, and customers. Consequently, these developers are expanding their sphere of influence on IT purchasing decisions and maximizing the value of data, which is what every data-drenched corporate executive team and/or board of directors likes to see.

Kirk Borne, founder and owner of Data Leadership Group LLC