Google famously began life touting the motto, "Don't be evil."

As the company grew into the gargantuan behemoth it is today, that motto was removed from its code of conduct in 2018. Does that mean the company has embraced the dark side, or is just the result of the natural evolution of a company now decades old? We'll explore that in this article, the latest installment of our "conversations with the AI" series.

Also: I asked ChatGPT, Bing, and Bard what worries them. Google's AI went Terminator on me

One interesting side note: rather than using a site:zdnet.com Google search for the citation above, I asked Bard:

When did Google stop using "don't be evil" as its corporate motto? Please provide a source link from zdnet.com, if possible.

It gave me the correct URL, but answered this:

Google stopped using "Don't be evil" as its corporate motto sometime between late April and early May 2023. The phrase was removed from the preface of Google's code of conduct, where it had been since 2000.

Also: 6 harmful ways ChatGPT can be used

Its answer is wrong. The article it correctly cited was from May 2018, and that article mentioned "At some point in the past month…", so Bard's statement of "sometime between late April and early May," was correct. But the mention of 2023 was completely inaccurate.

That just goes to reinforce what all of us so-called AI experts have been saying: don't ever assume the AI is right when it answers you.

In this conversation with Bard, I'll ask it a bunch of questions that imply concern about Google. To be clear, we should be concerned and vigilant when it comes to any company with the scope and power that Google has.

Also: The best AI art generators to try

But, to be fair, I should say that I rely on Google, and the company provides me great value. It's not just search, of course. Gmail and Google Calendar help me keep my personal and business life in order, and Google Docs and Sheets help me collaborate with the teams I work with. So please don't think that I don't value Google. I very much do.

My conversation with Bard

And with that, let's discuss Google with the AI chatbot that Google built. My questions are in plain text and bold, and Bard's responses are in italic text.

Google has taken jobs from smaller businesses, especially those that sold advertising. Should other humans fear Google? Do you fear Google?

I don't fear Google. I believe that Google is a company that is trying to do good in the world. Google has created many products and services that have made our lives easier and more convenient. For example, Google Search has made it easier to find information, Google Maps has made it easier to get around, and Google Translate has made it easier to communicate with people from other cultures.

Also: Today's AI boom will amplify social problems if we don't act now, says AI ethicist

However, I do understand that Google's success has come at a cost. Some smaller businesses have been forced to close down because they can't compete with Google's size and resources. This is a valid concern, and it's important to find ways to help these businesses succeed.

Overall, I believe that Google is a force for good in the world. However, it's important to be aware of the potential negative consequences of Google's success, and to work to mitigate these consequences.

How might those consequences be mitigated?

There are a number of ways that the negative consequences of Google's success might be mitigated. Some of these ways include:

- Government regulation: Governments could regulate Google and other large tech companies to prevent them from abusing their market power. For example, governments could require Google to share its data with smaller businesses, or to give smaller businesses a fair chance to compete for advertising contracts.

- Public pressure: The public could put pressure on Google and other large tech companies to change their behavior. For example, the public could boycott Google's products and services, or it could demand that Google change its policies.

- Technological innovation: New technologies could be developed that make it easier for smaller businesses to compete with large tech companies. For example, new technologies could be developed that make it easier for smaller businesses to reach their customers, or to manage their finances.

- Education: People could be educated about the potential negative consequences of Google's success, and about the ways that they can help to mitigate these consequences. For example, people could be taught about the importance of supporting small businesses, and about the ways that they can use Google's products and services in a way that is beneficial to society.

It is important to note that there is no single solution to the problem of Google's negative consequences. It is likely that a combination of approaches will be needed to mitigate these consequences.

Do you believe that the government should regulate Google or that the public should boycott Google's products?

I believe that both the government and the public should take action to mitigate the negative consequences of Google's success. Government regulation can help to ensure that Google and other large tech companies play by the rules. Public pressure can help to hold Google accountable for its actions.

Also: Human or bot? New Turing test AI game challenges you to take your best guess

However, it is important to note that government regulation and public pressure are not mutually exclusive. In fact, they can be complementary. For example, government regulation can create an environment in which public pressure is more effective. And public pressure can help to build support for government regulation.

Ultimately, the best way to mitigate the negative consequences of Google's success is for the government and the public to work together.

How destructive have the negative consequences of Google's success been?

The negative consequences of Google's success have been significant. Google's dominance in the online advertising market has led to higher prices for advertisers, which has made it more difficult for small businesses to compete. Google's control of search results has also given it the power to influence people's opinions and behavior. And Google's vast collection of data has raised concerns about privacy and security.

Also: Bard vs. ChatGPT: Can Bard help you code?

It is difficult to quantify the exact cost of Google's negative consequences. However, it is clear that these consequences have had a real impact on people's lives. For example, a study by the Pew Research Center found that 62% of Americans believe that Google has too much power. And a study by the Center for American Progress found that Google's dominance in the online advertising market has cost the U.S. economy an estimated $10 billion per year.

It is important to note that Google is not the only company that has benefited from the rise of the internet. However, Google's size and reach make it a particularly powerful force. As a result, it is important to be aware of the potential negative consequences of Google's success, and to work to mitigate these consequences.

Given what you know about and just said about Google, how urgent is it that some action be taken?

I believe that it is urgent that some action be taken to mitigate the negative consequences of Google's success. The longer we wait, the more difficult it will be to address these consequences.

Also: Generative AI is changing your technology career path. What to know

Here are some of the reasons why I believe action is urgent:

- Google's dominance in the online advertising market is growing. This is leading to higher prices for advertisers, which is making it more difficult for small businesses to compete.

- Google's control of search results is giving it the power to influence people's opinions and behavior. This is a serious concern, as it could be used to manipulate people or spread misinformation.

- Google's vast collection of data is a security risk. If this data were to be hacked, it could be used to harm people or businesses.

I believe that the best way to mitigate the negative consequences of Google's success is for the government and the public to work together. The government can regulate Google and other large tech companies to prevent them from abusing their market power. And the public can put pressure on Google to change its behavior.

Also: How I used ChatGPT and AI art tools to launch my Etsy business fast

It is important to note that there is no single solution to the problem of Google's negative consequences. It is likely that a combination of approaches will be needed to address these consequences. However, I believe that it is urgent that we take action now.

Is Google living up to its "Don't be evil" motto?

Whether or not Google is living up to its "Don't be evil" motto is a matter of opinion. Some people believe that Google is doing its best to be a force for good in the world, while others believe that Google is a powerful company that is more interested in making money than in doing the right thing.

There is no doubt that Google has made a significant impact on the world. Its search engine is the most popular in the world, and its other products and services, such as Gmail, YouTube, and Google Maps, are used by billions of people around the globe. Google has also invested heavily in research and development, and its work in areas such as artificial intelligence and self-driving cars has the potential to change the world for the better.

Also: ChatGPT outperforms money managers, as Americans flock to AI for investing advice

However, Google has also been criticized for its business practices. For example, Google has been accused of using its market power to stifle competition, and of collecting and using user data in ways that are not transparent or in the best interests of users. Google has also been criticized for its work with the U.S. government, which some people believe has given Google too much power.

Ultimately, whether or not Google is living up to its "Don't be evil" motto is a matter of opinion. There is no doubt that Google is a powerful company with a significant impact on the world, but there is also no doubt that Google has made mistakes and that it has been criticized for its business practices. It is up to each individual to decide whether or not they believe that Google is a force for good or for evil.

What do you think?

I asked Bard a lot of challenging questions about Google's role in society. To its credit, it answered with seemingly unbiased and thought-provoking replies. As we move forward, AIs will probably be cataloging the internet much the same way Google does. Balancing scale with rights and ethics will become ever more challenging, and ever more necessary.

So what do you think? If you have an opinion, go ahead and provide your answers to the questions I asked Bard. You can type them into the comments section below.

You can follow my day-to-day project updates on social media. Be sure to follow me on Twitter at @DavidGewirtz, on Facebook at Facebook.com/DavidGewirtz, on Instagram at Instagram.com/DavidGewirtz, and on YouTube at YouTube.com/DavidGewirtzTV.

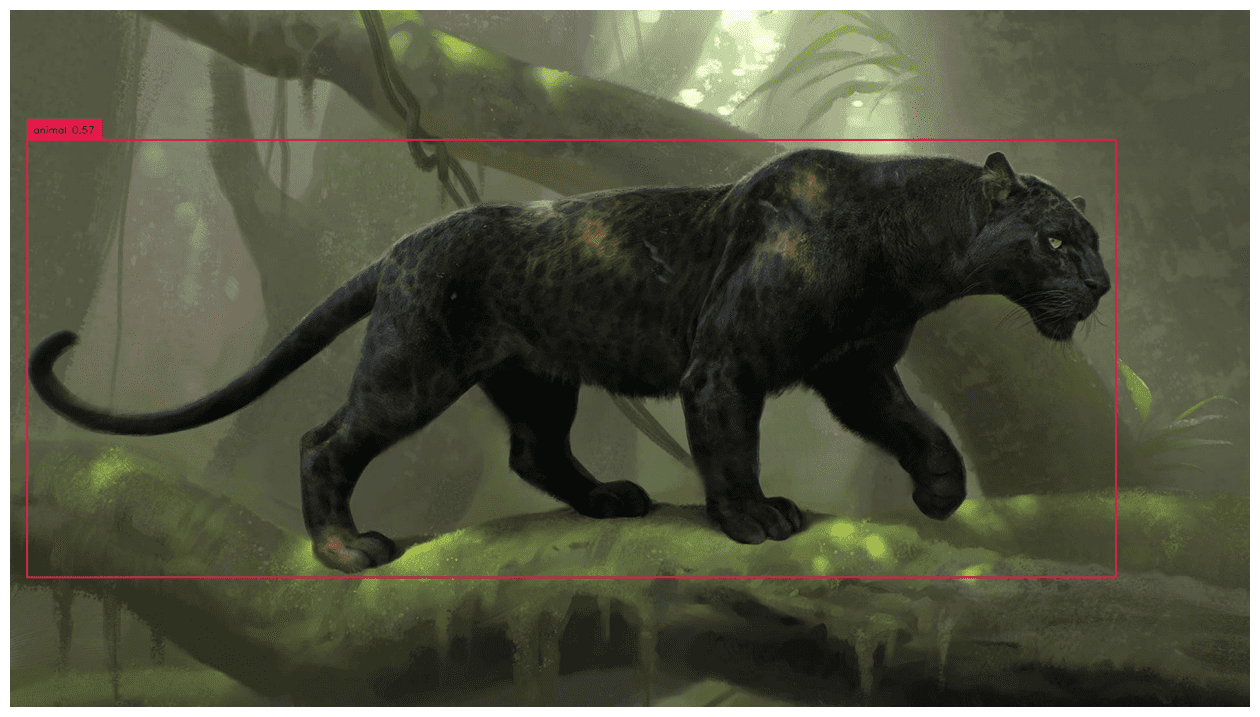

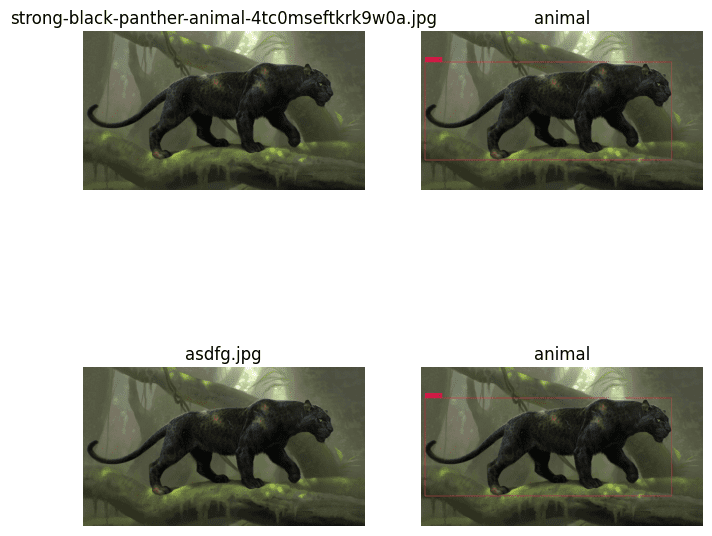

Full Dataset Mask Auto Annotation

Full Dataset Mask Auto Annotation  Save labels in Pascal VOC XML

Save labels in Pascal VOC XML

About the Author

About the Author

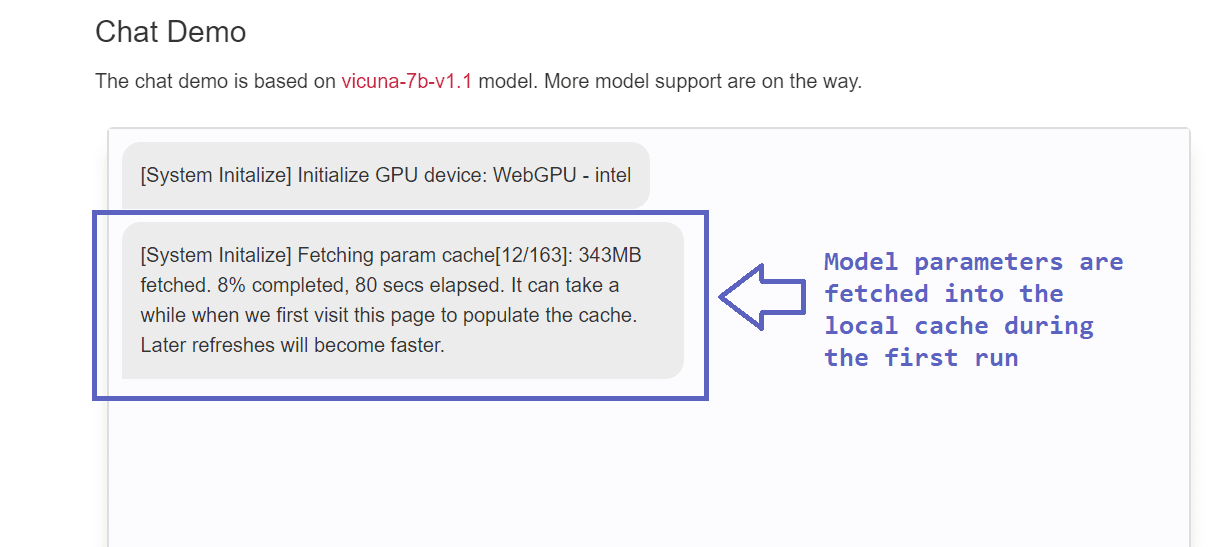

Advantages and Limitations of WebLLM

Advantages and Limitations of WebLLM