OpenAI has now confidently entered the next phase of possible AI domination. With the release of the ChatGPT app on iOS, the chatbot is now available to iPhone users. In a phased rollout, the app is currently available only in the US and will expand to other countries and to Android soon. Within 12 hours of its release on the App Store, the ChatGPT app became the No. 1 app under ‘productivity’, and it still held that spot three days later. With such a rapid rise, is this truly the ‘no turning back’ moment for OpenAI? And with OpenAI’s unhinged growth reaching new heights as it pushes for adoption, won’t data privacy issues peak as well?

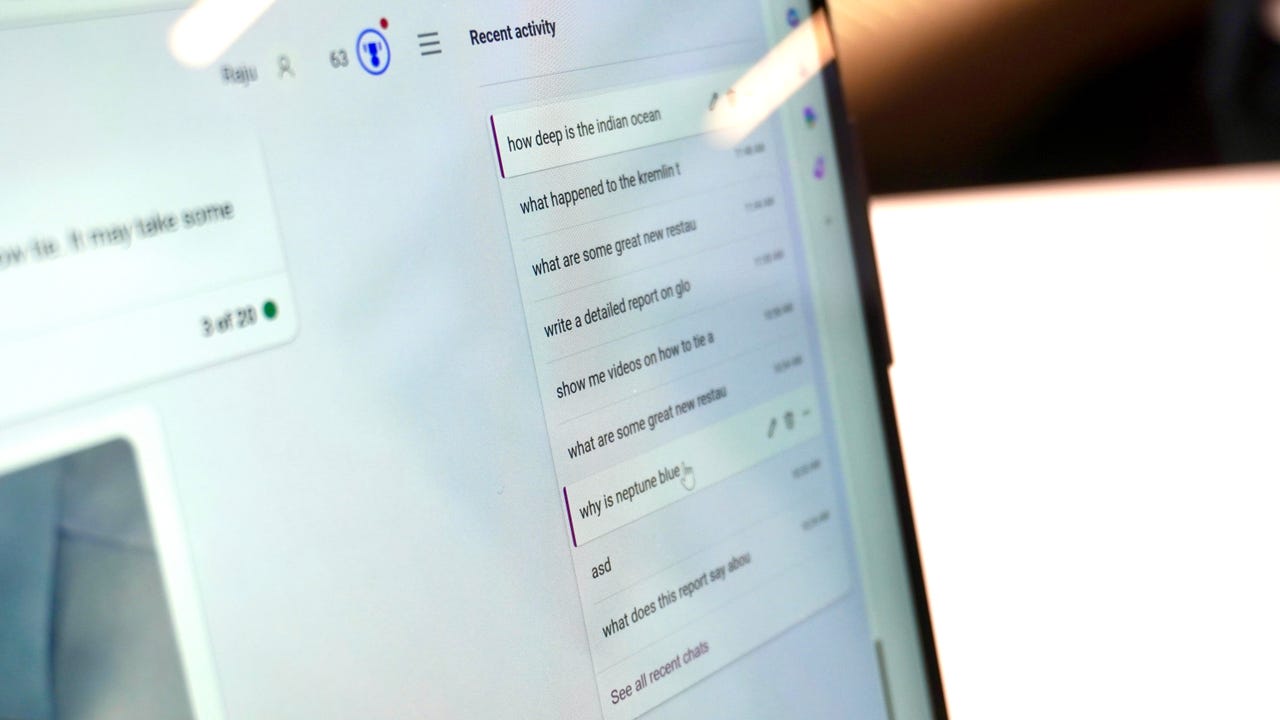

> ChatGPT’s iOS app is out!
>
> It’s beautifully simple and *crazy* fast.
>
> It even has super easy audio input which is absolutely fantastic.
>
> I’ll be using this all the time on the go! pic.twitter.com/CFqabbS9MW
>
> Mckay Wrigley (@mckaywrigley), May 18, 2023

OpenAI has been on a steady growth trajectory, with a stream of new features and subscription models. Its latest announcement, bringing over 70 plugins and web browsing to ChatGPT Plus users, is OpenAI’s way of getting people hooked on its ecosystem. However, trust has always been a concern with OpenAI, given looming data security issues and fears of misuse and leakage of sensitive information.

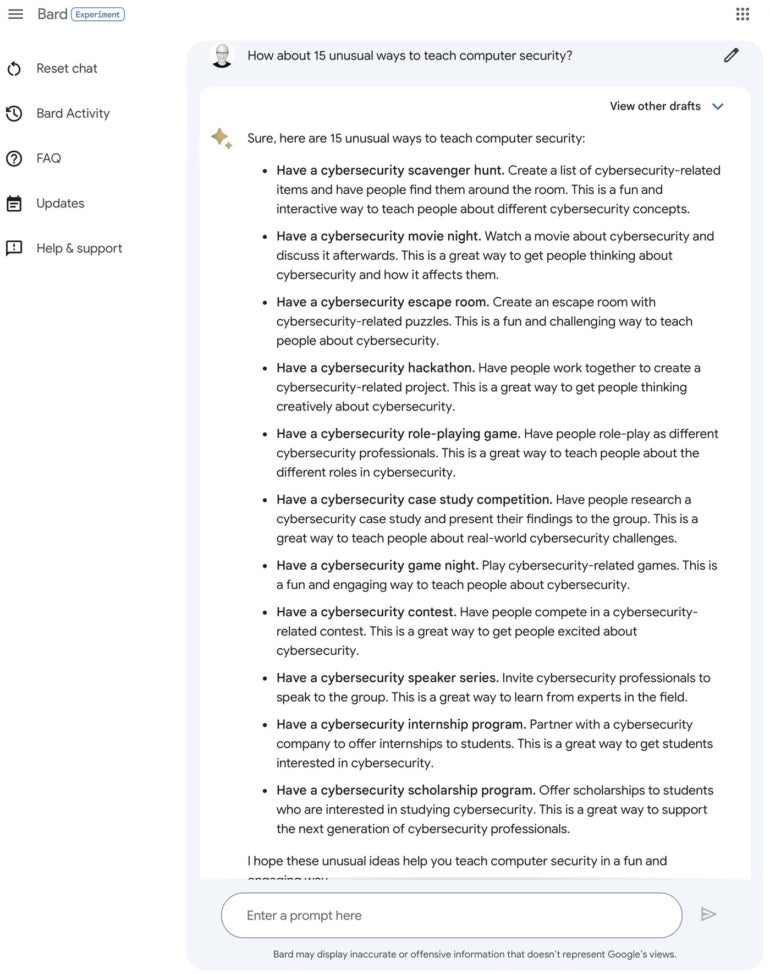

There’s Everything for All

OpenAI continues to guard its secrets by staying closed source. Though there have been reports that the company plans to release an open-source AI model, that model reportedly has nothing to do with the GPT model currently powering ChatGPT. However, perhaps to appease the open-source community, and maybe to dodge any future backlash over a questionable AI system, OpenAI has integrated Whisper, its open-source speech-recognition system, into the app. With Whisper on board, critics can no longer claim that nothing in ChatGPT is open source.

No Turning Back?

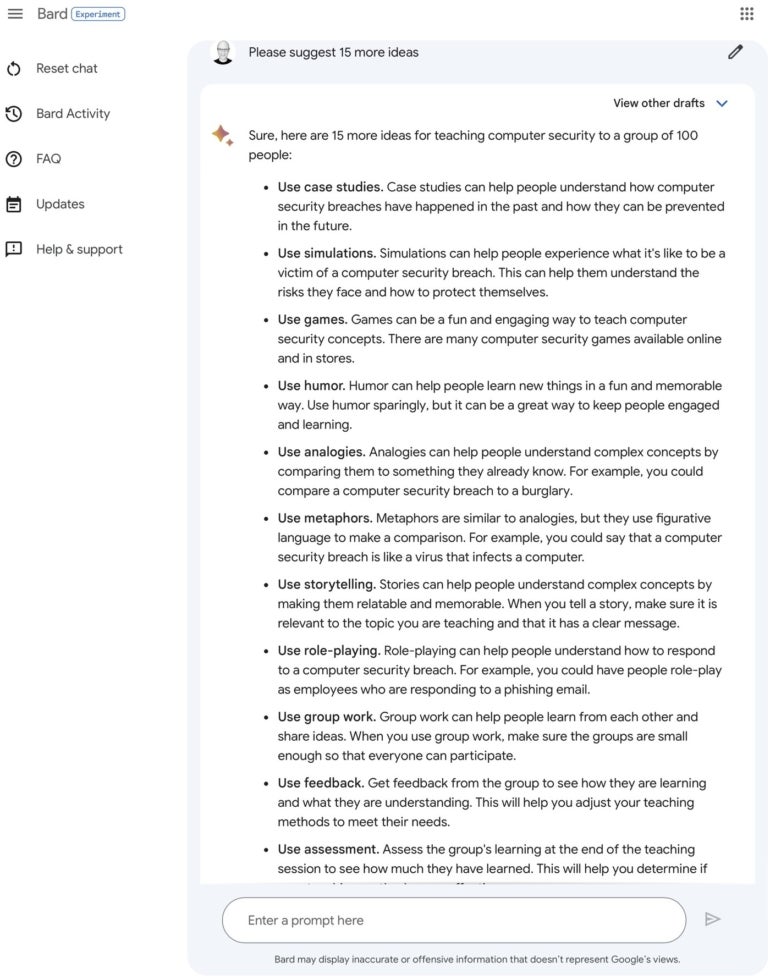

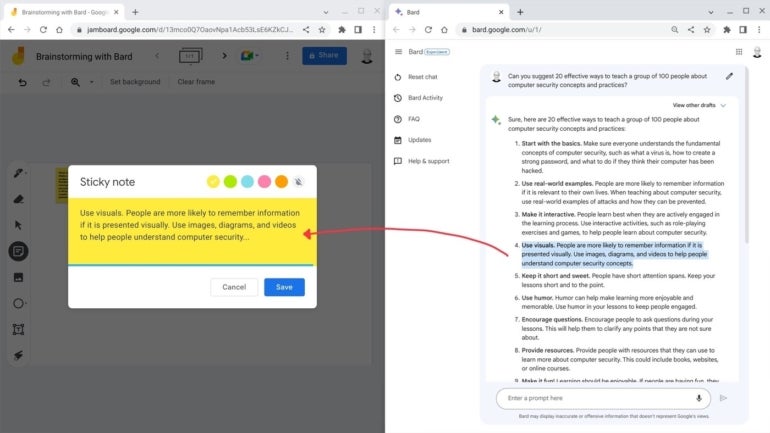

The Whisper feature lets users speak their queries to the chatbot. Much like Google’s voice search, the ChatGPT app is now competing head-on with the largest search engine. With ChatGPT plugins, access to real-time data is no longer a concern. The app is also taking on a host of productivity applications built to sort and simplify tasks.

With Whisper integrated into ChatGPT, users have plenty of ways to put the two to work together. For instance, a user can record a voice conversation or meeting notes and simply ask ChatGPT to summarise the speech, pull out key insights, offer suggestions, and more. However, OpenAI has not said how long a voice recording can be.

Consider Notion, a productivity tool for creating and organising notes, databases, calendars and the like, or Microsoft Loop, which offers much the same. The ChatGPT app could easily catch up with both. With over 100 million users and 1.8 billion visits per month, ChatGPT already has the reach to do it.

Within days of the release, users had already started sharing how the app’s Whisper feature is helping them with their tasks.

> I’ve found myself using the ChatGPT app just for voice transcription. I open the app from the lock screen, hit record, and paste it into another app.
>
> I’ve never used voice features because they were always more trouble then they are worth. It’s great when it works so well!
>
> Greg Mushen (@gregmushen), May 20, 2023

The company also said that ChatGPT Plus subscribers get an advantage: early access to features, faster response times and “GPT-4’s capabilities”, a convincing push to take up its subscription services.

AI Safety Remains a Dream

While everything sounds hunky-dory, the question of AI safety persists. With multiple security and privacy concerns already surrounding ChatGPT, won’t a mobile app only open up further vulnerabilities to data breaches? The company has put out lengthy statements and announcements on how it will prioritise AI safety, but so far there have been no concrete plans on ‘how’ it would go about it.

The biggest irony is that right after the ChatGPT app launched on iOS, Apple reportedly barred its employees from using ChatGPT at work, owing to fears of data collection and leaks. Even at the recent Senate hearing on AI where Sam Altman testified, when questions on AI safety came up, the company conveniently passed the buck by backing the need for an agency to regulate safety.

Now, with the ChatGPT app, one can only wonder about the kind of data collection that will happen. OpenAI even mentioned in its app announcement that it would gather ‘user feedback’ and work on further ‘feature and safety improvements’ for ChatGPT. But what that means remains as cryptic and unclear as ever.

The post Beware, ChatGPT Can Control You Through Your Phone appeared first on Analytics India Magazine.