IBM emerges as a top contender to gain from generative AI, according to a recent study. IBM’s generative AI offering, Watsonx, positions the tech giant as a significant beneficiary of the emerging technology. “With Watsonx, enterprises can scale and accelerate the impact of advanced AI by accessing a full technology stack for training, tuning, and deploying AI models,” Geeta Gurnani, IBM Technology CTO and technical sales leader, IBM India & South Asia, told AIM.

Generative AI gained momentum after the launch of ChatGPT, which can converse almost like a human. IBM, however, has been working on Watson for more than a decade; it was initially designed as a question-answering computer system. It is therefore fair to say that IBM already had the foundation in place to bring generative AI capabilities to its customers. Gurnani states that while many generative AI tools focus primarily on consumer and social uses, Watsonx is tailored specifically for enterprises.

“Generative AI opens doors to improved learning, productivity, and innovation across industries. Business leaders are excited about the prospect of using foundation models and machine learning with their own data to accelerate generative AI workloads. Watsonx allows just that, enabling them to deploy models for various enterprise use cases that could range from improving IT operations to enhancing the HR function, and everything in between,” Gurnani said.

Watsonx

With Watsonx, IBM is offering its customers an AI development studio with access to IBM-curated and trained foundation models and open-source models, access to a data store to enable the gathering and cleansing of training and tuning data, and a toolkit for data and AI governance. “We have been a leader in the work of foundation models – and Watsonx is IBM’s push to put state-of-the-art foundation models in the hands of businesses. We are going beyond capabilities that focus on generating the next word or image in a sequence – and rather building and applying foundation models for entirely unexplored business domains such as geospatial intelligence, code, and IT operations.”

Gurnani pointed to IBM’s collaboration with NASA as an example: IBM has announced the joint development of large-scale geospatial AI models for NASA’s earth science satellite data and a large language model for its earth science literature. “This is the first time foundation models have been applied to NASA’s satellite data. This could potentially help estimating climate-related risks to agriculture, monitoring forests for carbon-offset programs, and developing predictive models to mitigate and adapt to climate change.”

IBM’s customers will be able to leverage open-source models on the Hugging Face platform, along with the foundational models developed by IBM. “The collaboration with Hugging Face enables IBM’s customers to benefit from open-source models trained on accessible datasets, running within a secure environment with compliance and proper data governance. It expands the range of models and architectures available, allowing clients to leverage the best AI capabilities for their specific business requirements,” Gurnani said.

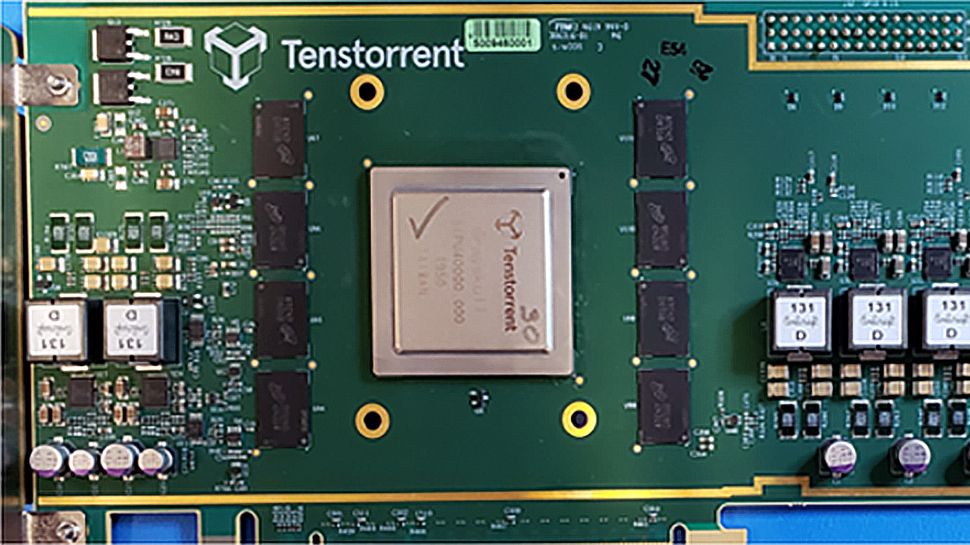

Foundational models powering Watsonx

With Watsonx, IBM’s customers will also get access to IBM’s foundational models, which use a large, curated set of enterprise data backed by a robust filtering and cleansing process and auditable data lineage. “These models are being trained not just on language, but on a variety of modalities, including code, time-series data, tabular data, geospatial data, and IT events data.”

However, not everyone will have access to these models yet. IBM plans to give selected clients access first, in a beta version, and says the models will soon be made available to all its customers. IBM’s foundational models fall into three main categories. The first, fm.code, comprises models built to automatically generate code for developers through a natural-language interface, boosting developer productivity and enabling the automation of many IT tasks.

The second, fm.NLP, is a collection of large language models (LLMs) designed for specific domains or industries; they use curated data, where bias can be mitigated more easily, and can be quickly customised using client data. The last, fm.geospatial, is a model built on climate and remote sensing data to help organisations understand and plan for changes in natural disaster patterns, biodiversity, land use, and other geophysical processes that could impact their businesses.

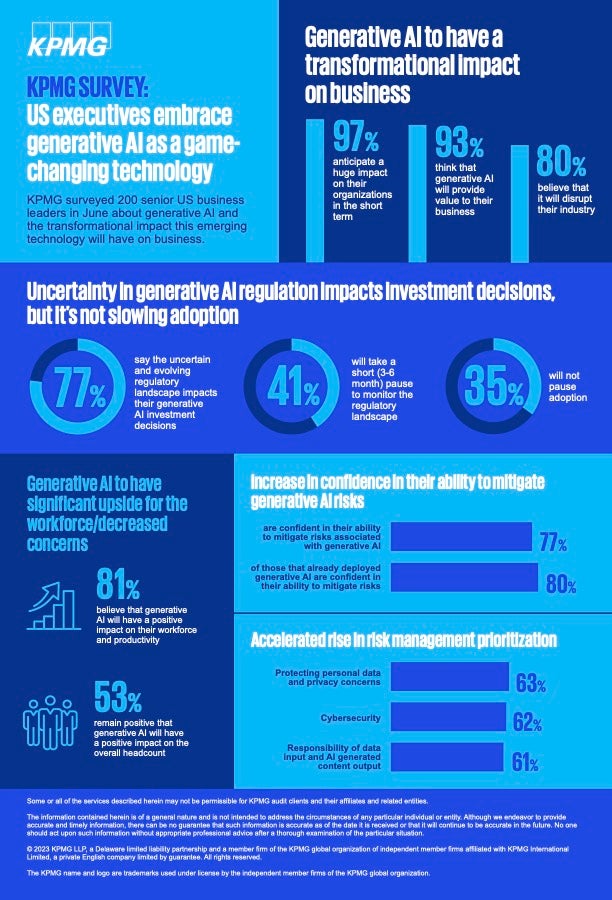

Mitigating generative AI risk

While most enterprises are eager to leverage generative AI capabilities, the technology comes with its own set of challenges, such as hallucinations. “At IBM, we are actively addressing challenges such as hallucinations and security while ensuring the ethical and responsible use of AI within Watsonx.”

According to Gurnani, IBM is reducing the risk of hallucination using retrieval-augmented generation, which enables models to retrieve relevant data from a knowledge corpus before generating an answer. “Users also have the ability to tune existing models to perform specific tasks using domain-specific datasets, which can also help reduce the risk of hallucination.”
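The retrieve-then-generate idea can be illustrated with a minimal Python sketch. It is not IBM’s implementation and does not use any Watsonx API: the function names, the bag-of-words similarity and the prompt wording are all assumptions chosen for illustration, and the final call to a language model is left out. The sketch only shows the step described above, in which relevant passages are pulled from a knowledge corpus and placed in front of the model before it answers.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(question, corpus, top_k=2):
    """Return up to top_k passages that share vocabulary with the question.

    Hypothetical retriever: real systems typically use learned embeddings
    and a vector index rather than raw word counts.
    """
    q_vec = Counter(tokenize(question))
    scored = [(cosine_similarity(q_vec, Counter(tokenize(p))), p) for p in corpus]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for score, p in scored[:top_k] if score > 0]


def build_prompt(question, passages):
    """Assemble a grounded prompt; the wording here is an assumption."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    # Toy knowledge corpus standing in for an enterprise document store.
    corpus = [
        "Employees accrue 20 days of paid leave per year.",
        "The VPN must be restarted after a password change.",
        "Expense reports are due by the fifth of each month.",
    ]
    question = "How many days of paid leave do employees get?"
    passages = retrieve(question, corpus, top_k=1)
    # The resulting prompt would then be sent to a language model.
    print(build_prompt(question, passages))
```

Because the model is asked to answer from the retrieved passages rather than from memory alone, an answer can be checked against the corpus, which is the sense in which retrieval helps reduce hallucination.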

Moreover, she adds that for any technology to be widely used, users must trust its output. Hence, IBM is introducing an AI governance toolkit, expected to be generally available later this year. It will help operationalise governance to mitigate the risk, time and cost associated with manual processes, and will provide the documentation necessary to drive transparent and explainable outcomes. “It will also have mechanisms to protect customer privacy, proactively detect model bias and drift, and help organisations meet their ethics standards.”

The post IBM Watsonx is Tailored Specifically For Enterprises appeared first on Analytics India Magazine.