As artificial intelligence (AI) evolves and becomes integral to more industries, prompt engineers are emerging as key players in developing and optimizing AI models. Skilled at crafting effective prompts, they help models deliver accurate, contextually relevant responses.

Recent statistics suggest that prompt engineering is one of the most sought-after professions of 2023. If you want to catch the current wave in AI, this career path is worth considering. Developers who want to get the most out of large language models (LLMs) such as ChatGPT may also be interested in prompt engineering tools, a new class of software designed to steer an LLM toward the desired result. This article discusses the rise and importance of prompt engineers in artificial intelligence.

You have probably come across this saying: ‘AI will not take away your job, but someone who knows AI will.’

Prompting Effectively:

Prompt engineers specialize in designing prompts that elicit the desired results from AI models. Drawing on their knowledge of the underlying architecture and capabilities of AI systems, they formulate precise, context-aware instructions. To ensure high-quality responses, they carefully select wording, adjust parameters, and optimize instructions.

Linguistic Skills and Domain Expertise:

A successful prompt engineer needs domain knowledge as well as technical expertise. In industries such as healthcare, finance, and customer service, prompt engineers must be sensitive to the nuances of the field. Understanding these domains lets them develop prompts tailored to the needs and challenges of each one, which helps AI produce accurate and reliable results. Prompt engineers also need strong linguistic skills to communicate instructions effectively and capture the desired intent.

Refinement and Training of Models in an Iterative Manner:

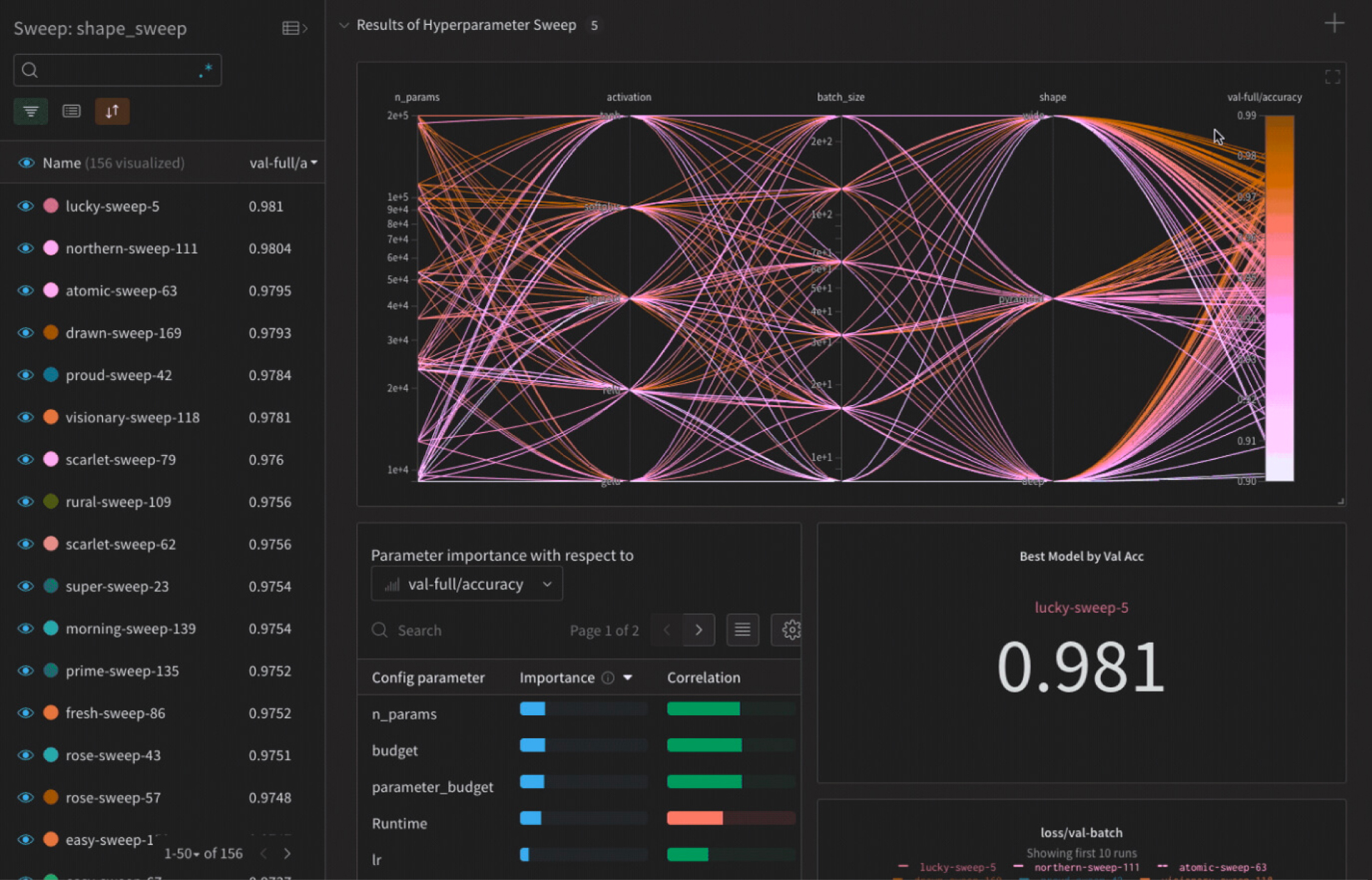

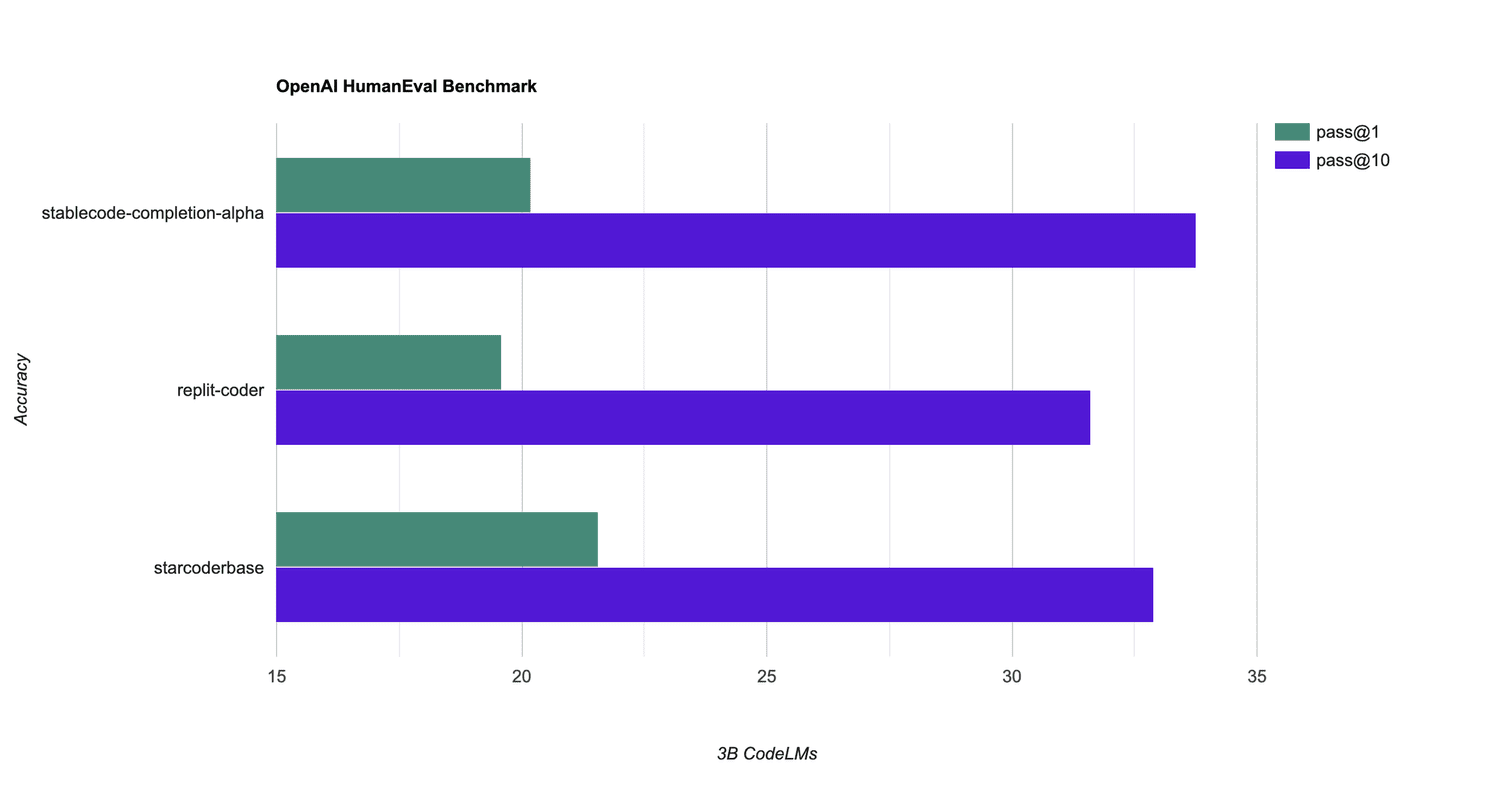

In prompt engineering, prompts are refined and AI models are fine-tuned in an iterative process. Prompt engineers analyze the model's output, identify improvements, adjust the prompt, and repeat until the desired outcome is achieved. They collaborate with data scientists to train and optimize AI models, which supports continuous improvement and better performance.
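The refinement loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `fake_model` is a hypothetical stand-in for a real LLM call, and `score` is a toy metric invented for the example. In practice both would be replaced by an actual model and a task-specific evaluation.

```python
from typing import Callable, List, Tuple


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: answers in steps only when asked to."""
    if "step by step" in prompt.lower():
        return "Step 1: gather the data. Step 2: summarize it. Step 3: review."
    return "Summarize it."


def score(output: str) -> int:
    """Toy quality metric (an assumption): count the numbered steps in the output."""
    return output.count("Step ")


def refine(
    base_prompt: str, revisions: List[str], model: Callable[[str], str]
) -> Tuple[str, int]:
    """Try each revision in turn and keep it only if the output score improves."""
    best_prompt = base_prompt
    best_score = score(model(base_prompt))
    for revision in revisions:
        candidate = f"{best_prompt} {revision}"
        candidate_score = score(model(candidate))
        if candidate_score > best_score:
            best_prompt, best_score = candidate, candidate_score
    return best_prompt, best_score


best_prompt, best_score = refine(
    "Explain how to write a report.",
    ["Be brief.", "Explain step by step."],
    fake_model,
)
print(best_prompt)
print(best_score)
```

The loop keeps a revision only when it measurably improves the output, so the quality of the result depends entirely on how well the scoring function reflects what a good response looks like.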

Bias Mitigation and Ethical Considerations:

Prompts have a strong influence on the ethics of AI. Prompt engineers promote fairness and mitigate bias by steering AI models away from harmful or discriminatory outputs. By promoting inclusivity, adhering to ethical guidelines, and keeping biased or harmful information from being propagated, they seek to advance responsible AI development.

Adapting as AI Technologies Continue to Evolve:

Prompt engineers should stay abreast of the latest developments in artificial intelligence. As new models and architectures emerge, they should consider the distinctive characteristics of each and adapt their prompt engineering strategies accordingly. Pushing AI to its limits requires the ability to experiment with cutting-edge technologies and make use of innovative models.

Interdisciplinary Skills and Collaboration:

Prompt engineers work closely with data scientists, engineers, linguists, and subject matter experts as part of a team. Effective communication, collaboration, and interdisciplinary skills are essential for understanding diverse perspectives, gathering domain-specific knowledge, and writing actionable prompts.

Empowering Prompt Engineers with Prompt Engineering Tools in AI Development:

As prompt engineers play a more prominent role in the AI ecosystem, new tools are emerging to support and streamline their work. Through prompt engineering tools, you can automate tasks, experiment with prompts, and enhance efficiency when creating chatbots, autonomous agents, and other AI applications. Artificial intelligence is rapidly developing and expanding, and prompt engineering tools have become a key component of that process.

• Generating and optimizing prompts: Prompt engineering tools can generate and optimize prompts. They offer prebuilt prompt templates, natural language generation, and automatic parameter optimization. Developers can explore different prompts, customize them for different use cases, and refine them iteratively to achieve the desired results.
• Context-based prompting: These tools let prompts control an AI model's context and behavior. Developers can specify additional context, define constraints, and add conditional instructions, which helps them guide AI models with prompts that are more accurate and contextually appropriate.
• Bias detection and mitigation: Prompt engineering tools include bias detection and mitigation features. With these analyses, prompt engineers can identify and address unfair or discriminatory outcomes in prompts and model outputs. The tools help prompt engineering to be conducted in a fair, transparent, and ethical manner.
• Workflow management and collaboration: Prompt engineering tools improve collaboration among prompt engineers, data scientists, and other stakeholders. They provide shared workspaces, version control, and collaboration features to facilitate teamwork, track prompt iterations, and manage the overall workflow. This makes it easier to share knowledge and coordinate efficiently.
• Performance monitoring and analytics: These tools provide analytics and performance monitoring features for assessing the effectiveness of prompts and model responses. Developers can see how different prompts affect the performance of AI systems, understand user interactions, and measure the quality of the generated output. Prompt engineers use this data-driven approach to refine and optimize their prompt strategies.
• Integration with AI platforms and libraries: Prompt engineering tools integrate with popular AI platforms and libraries, so developers can build on existing AI infrastructure and frameworks. Because the tools are compatible with language models, conversational AI frameworks, and deployment platforms, prompt engineers can fit prompt engineering workflows into larger AI pipelines.
• Fine-tuning and model training: Some prompt engineering tools provide built-in functionality for training and fine-tuning models. Models can be trained iteratively on annotated data while prompt strategies are adjusted and performance is evaluated. These tools give prompt engineers a unified environment for refining prompts and optimizing models.

With prompt engineering tools, developers can automate, collaborate on, and optimize their AI development workflows. Prompt engineers are increasingly in demand, and these tools help them build contextually relevant and responsible AI applications as they experiment with prompts, address biases, and monitor performance. Continued advances in prompt engineering tools will support the evolution and success of AI-powered solutions.
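To make the ideas of prompt templates, added context, constraints, and conditional instructions concrete, here is a minimal Python sketch. The `PromptTemplate` class, its fields, and the `audience` parameter are hypothetical names chosen for this illustration. They do not correspond to any particular prompt engineering tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptTemplate:
    """Hypothetical reusable template: a task plus optional context and constraints."""

    task: str
    context: str = ""
    constraints: List[str] = field(default_factory=list)

    def render(self, audience: str = "general") -> str:
        """Assemble the final prompt text, one instruction per line."""
        parts = []
        if self.context:
            parts.append(f"Context: {self.context}")
        parts.append(f"Task: {self.task}")
        for rule in self.constraints:
            parts.append(f"Constraint: {rule}")
        # Conditional instruction: the wording depends on the intended audience.
        if audience == "expert":
            parts.append("Use technical terminology.")
        else:
            parts.append("Avoid jargon.")
        return "\n".join(parts)


template = PromptTemplate(
    task="Summarize the attached policy.",
    context="You are assisting a customer-service team.",
    constraints=["Keep it under 100 words."],
)
prompt = template.render(audience="general")
print(prompt)
```

Keeping the task, context, and constraints as separate fields makes each part easy to change on its own, which suits the iterative refinement and version tracking described earlier.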

Final Thoughts:

Prompt engineers play a crucial role in the development of artificial intelligence, writing carefully crafted prompts that guide the behavior and output of AI models. Their domain knowledge, linguistic skill, and attention to ethical considerations help align AI systems with real-world needs and values. Prompt engineers will become increasingly important as artificial intelligence advances and is harnessed to drive innovation across industries.

Conclusion
