The advent of GPT models, along with other autoregressive (AR) large language models, has ushered in a new epoch in machine learning and artificial intelligence. GPT and other autoregressive models often exhibit a general intelligence and versatility that is considered a significant step towards artificial general intelligence (AGI), despite issues such as hallucinations. At the heart of these large models is a self-supervised learning strategy that trains the model to predict the next token in a sequence, a simple yet effective approach. Recent works have demonstrated the success of these large autoregressive models, highlighting their generalizability and scalability. Scalability is exemplified by the existing scaling laws, which allow researchers to predict the performance of a large model from the performance of smaller models, resulting in better allocation of resources. Generalizability, on the other hand, is often evidenced by zero-shot, one-shot, and few-shot learning, highlighting the ability of models trained without labels to adapt to diverse and unseen tasks. Together, generalizability and scalability reveal the potential of autoregressive models to learn from vast amounts of unlabeled data.

Building on this, in this article we will be talking about Visual AutoRegressive modeling, or the VAR framework, a new generation paradigm that redefines autoregressive learning on images as coarse-to-fine “next-resolution prediction” or “next-scale prediction”. Although simple, the approach is effective: it allows autoregressive transformers to learn visual distributions better and to generalize well. Furthermore, Visual AutoRegressive models enable GPT-style autoregressive models to surpass diffusion transformers in image generation for the first time. Experiments also indicate that the VAR framework improves significantly on autoregressive baselines and outperforms the Diffusion Transformer (DiT) framework in multiple dimensions, including data efficiency, image quality, scalability, and inference speed. Further, scaled-up Visual AutoRegressive models exhibit power-law scaling laws similar to those observed in large language models, and also display zero-shot generalization in downstream tasks including editing, in-painting, and out-painting.

This article aims to cover the Visual AutoRegressive framework in depth: we explore its mechanism, methodology, and architecture, along with its comparison with state-of-the-art frameworks. We will also discuss how the Visual AutoRegressive framework demonstrates two important properties of LLMs: scaling laws and zero-shot generalization. So let’s get started.

Visual AutoRegressive Modeling: Scaling Image Generation

A common pattern among recent large language models is a self-supervised learning strategy, a simple yet effective approach that predicts the next token in the sequence. Thanks to this approach, autoregressive large language models today have demonstrated remarkable scalability as well as generalizability, properties that reveal the potential of autoregressive models to learn from a large pool of unlabeled data, which encapsulates the essence of general artificial intelligence. Meanwhile, researchers in computer vision have been working in parallel to develop large autoregressive or world models that match or surpass this scalability and generalizability, with models like DALL-E and VQGAN already demonstrating the potential of autoregressive models for image generation. These models typically use a visual tokenizer that discretizes or approximates continuous images as a grid of 2D tokens, which are then flattened into a 1D sequence for autoregressive learning, mirroring the sequential language modeling process.

However, the scaling laws of these models have yet to be explored, and, more frustratingly, their performance often falls behind that of diffusion models by a significant margin, as demonstrated in the following image. This performance gap indicates that, compared with large language models, the capabilities of autoregressive models in computer vision remain underexplored.

Traditional autoregressive models require a defined order of data; the Visual AutoRegressive (VAR) model instead reconsiders how an image should be ordered, and this is what distinguishes VAR from existing AR methods. Humans typically create or perceive an image hierarchically, capturing the global structure first and the local details afterwards, a multi-scale, coarse-to-fine approach that naturally suggests an order for the image. Drawing inspiration from multi-scale designs, the VAR framework therefore defines autoregressive learning for images as next-scale prediction, as opposed to conventional approaches that define it as next-token prediction. The VAR approach begins by encoding an image into multi-scale token maps. The framework then starts the autoregressive process from a 1×1 token map and progressively expands in resolution. At every step, the transformer predicts the next higher-resolution token map conditioned on all the previous ones, a methodology the VAR framework refers to as VAR modeling.
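
The loop below is a minimal sketch of this coarse-to-fine process. It assumes a hypothetical `predict_next_map` callable standing in for the real transformer and an assumed 10-step scale schedule that ends at a 16×16 token map. It illustrates why next-scale prediction needs far fewer sequential steps: one model call produces an entire token map, so 10 calls yield 680 tokens, whereas a raster-scan model would need 256 sequential steps for the 256 tokens of the final 16×16 map alone.

```python
from typing import Callable, List, Sequence

TokenMap = List[List[int]]

# Assumed scale schedule: side lengths of the square token maps, coarse to fine.
SCALES: Sequence[int] = (1, 2, 3, 4, 5, 6, 8, 10, 13, 16)


def generate_coarse_to_fine(
    predict_next_map: Callable[[List[TokenMap], int], TokenMap],
    scales: Sequence[int] = SCALES,
) -> List[TokenMap]:
    """Next-scale prediction: one model call per scale, not one per token."""
    maps: List[TokenMap] = []
    for side in scales:
        # The whole side x side map is predicted in a single step,
        # conditioned on every coarser map generated so far.
        next_map = predict_next_map(maps, side)
        assert len(next_map) == side and all(len(row) == side for row in next_map)
        maps.append(next_map)
    return maps


def dummy_predictor(prev_maps: List[TokenMap], side: int) -> TokenMap:
    # Hypothetical stand-in for the transformer: a constant map whose
    # token id equals the number of maps generated so far.
    return [[len(prev_maps)] * side for _ in range(side)]


maps = generate_coarse_to_fine(dummy_predictor)
total_tokens = sum(len(m) * len(m[0]) for m in maps)
print(len(maps), total_tokens)  # 10 680
```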

The VAR framework leverages the GPT-2 transformer architecture for visual autoregressive learning, and the results are evident on the ImageNet benchmark, where the VAR model significantly improves on its AR baseline, achieving an FID of 1.80 and an Inception Score (IS) of 356, along with a 20x improvement in inference speed. More interestingly, the VAR framework surpasses the Diffusion Transformer (DiT) framework in terms of FID and IS, scalability, inference speed, and data efficiency. Furthermore, the Visual AutoRegressive model exhibits strong scaling laws similar to those witnessed in large language models.

To sum it up, the VAR framework attempts to make the following contributions.

- It proposes a new visual generative framework that uses a multi-scale autoregressive approach with next-scale prediction, in contrast to traditional next-token prediction, offering new insights into autoregressive algorithm design for computer vision tasks.

- It validates scaling laws for visual autoregressive models and demonstrates their zero-shot generalization potential, emulating the appealing properties of LLMs.

- It offers a breakthrough in the performance of visual autoregressive models, enabling the GPT-style autoregressive frameworks to surpass existing diffusion models in image synthesis tasks for the first time ever.

It is also worth discussing the existing power-law scaling laws, which mathematically describe the relationship between dataset size, model parameters, performance improvements, and the computational resources of machine learning models. First, these power-law scaling laws make it possible to predict a larger model’s performance as model size, computational cost, and data size are scaled up, avoiding unnecessary costs and providing principles for allocating the training budget. Second, scaling laws have demonstrated a consistent, non-saturating increase in performance. Following the principles of scaling laws in neural language models, several LLMs embody the principle that increasing model scale tends to yield better performance. Zero-shot generalization, on the other hand, refers to the ability of a model, particularly an LLM, to perform tasks it has not been explicitly trained on. Within the computer vision domain, there is growing interest in building zero-shot and in-context learning abilities into foundation models.
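
To make the prediction step concrete: a power law `loss = c * N**(-alpha)` is a straight line in log-log space, so it can be fitted to small models by ordinary least squares and then extrapolated to a larger one. The sketch below uses made-up, noise-free measurements generated from an assumed law with `c = 5` and `alpha = 0.1`; the function names are hypothetical and the numbers are not results from the VAR paper.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(sizes: Sequence[float], losses: Sequence[float]) -> Tuple[float, float]:
    """Fit loss = c * size**(-alpha) by least squares in log-log space.

    Uses log(loss) = log(c) - alpha * log(size) and returns (c, alpha).
    """
    xs = [math.log(n) for n in sizes]
    ys = [math.log(loss) for loss in losses]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


def predict_loss(c: float, alpha: float, size: float) -> float:
    return c * size ** (-alpha)


# Synthetic "small model" measurements from loss = 5 * N**-0.1 (made up).
small_sizes = [1e7, 3e7, 1e8, 3e8]
small_losses = [5 * n ** -0.1 for n in small_sizes]

c, alpha = fit_power_law(small_sizes, small_losses)
# Extrapolate to a 2-billion-parameter model before training it.
print(round(alpha, 3), round(predict_loss(c, alpha, 2e9), 4))
```

With real measurements the points scatter around the line, and the quality of the fit determines how far the extrapolation can be trusted.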

Language models rely on tokenization algorithms such as WordPiece or Byte Pair Encoding (BPE) for text. Visual generation models based on language models likewise rely heavily on encoding 2D images into 1D token sequences. Early works like VQVAE demonstrated the ability to represent images as discrete tokens with moderate reconstruction quality. Its successor, VQGAN, incorporated perceptual and adversarial losses to improve image fidelity, and employed a decoder-only transformer to generate image tokens in a standard raster-scan autoregressive manner. Diffusion models, on the other hand, have long been considered the frontrunners for visual synthesis tasks given their diversity and superior generation quality. Advances in diffusion models have centered on better and faster sampling techniques and on architectural enhancements. Latent diffusion models apply diffusion in a latent space, which improves training and inference efficiency. Diffusion Transformer models replace the traditional U-Net architecture with a transformer-based one, a design that has been deployed in recent image and video synthesis models such as Sora and Stable Diffusion 3.

Visual AutoRegressive: Methodology and Architecture

At its core, the VAR framework has two separate training stages. In the first stage, a multi-scale quantized autoencoder (VQVAE) encodes an image into token maps and is trained with a compound reconstruction loss. In the figure above, “embedding” refers to converting discrete tokens into continuous embedding vectors. In the second stage, the VAR transformer is trained with the next-scale prediction approach by minimizing the cross-entropy loss, which is equivalent to maximizing the likelihood. The VQVAE trained in the first stage produces the ground-truth token maps for this second stage.
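
In the second stage, the tokens of each scale must be predicted only from that scale’s input and from coarser scales. A natural way to enforce this in a single training forward pass, and reportedly the approach VAR takes, is a block-wise causal attention mask. The helper below is a minimal hypothetical sketch of such a mask, not code from the VAR release; it lays tokens out scale by scale and lets each token attend to its own scale and every coarser one.

```python
from typing import List, Sequence


def block_causal_mask(scales: Sequence[int]) -> List[List[bool]]:
    """Return mask[i][j] == True when flattened token i may attend to token j.

    Tokens are ordered scale by scale (the 1x1 map, then the 2x2 map, ...).
    Attention is allowed within a scale and to all coarser scales, never
    to a finer scale.
    """
    # scale_of[t] is the index of the scale that flattened token t belongs to.
    scale_of = [k for k, side in enumerate(scales) for _ in range(side * side)]
    n = len(scale_of)
    return [[scale_of[j] <= scale_of[i] for j in range(n)] for i in range(n)]


mask = block_causal_mask((1, 2, 3))
print(len(mask))  # 14 tokens: 1 + 4 + 9
for row in mask[:5]:
    # "x" marks an allowed attention target, "." a blocked one.
    print("".join("x" if allowed else "." for allowed in row))
```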

Autoregressive Modeling via Next-Token Prediction

Consider a sequence of discrete tokens, where each token is an integer from a vocabulary of size V. The next-token autoregressive model assumes that the probability of observing the current token depends only on its prefix. This unidirectional token dependency allows the likelihood of the sequence to be decomposed into the product of conditional probabilities. Training an autoregressive model involves optimizing these conditional probabilities over a dataset; this optimization process is known as next-token prediction, and the trained model can then generate new sequences. Images, however, are inherently 2D continuous signals, so applying autoregressive modeling to images via next-token prediction has a few prerequisites. First, the image needs to be tokenized into discrete tokens; usually, a quantized autoencoder is used to convert the image feature map into discrete tokens. Second, a 1D order of tokens must be defined for unidirectional modeling.
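
The first prerequisite, tokenization, usually comes down to a nearest-neighbor lookup into a learned codebook. The NumPy sketch below shows only that lookup step; the toy codebook and feature values are made up, and a real quantized autoencoder learns both the encoder that produces the features and the codebook itself.

```python
import numpy as np


def quantize(features: np.ndarray, codebook: np.ndarray) -> np.ndarray:
    """Map each feature vector to the index of its nearest codebook entry.

    features: (H, W, C) continuous feature map from the encoder.
    codebook: (V, C) code vectors; V is the vocabulary size.
    Returns an (H, W) grid of integer token ids in [0, V).
    """
    flat = features.reshape(-1, features.shape[-1])  # (H*W, C)
    # Squared Euclidean distance between every feature and every code.
    dists = ((flat[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    return dists.argmin(axis=1).reshape(features.shape[:2])


codebook = np.array([[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0]])  # V = 3, C = 2
features = np.array([[[0.9, 1.2], [0.1, -0.2]],
                     [[-0.8, 0.7], [0.2, 0.1]]])            # H = W = 2
print(quantize(features, codebook))
# [[1 0]
#  [2 0]]
```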

The discrete image tokens are arranged in a 2D grid, and unlike natural-language sentences, which inherently have a left-to-right ordering, the order of image tokens must be defined explicitly for unidirectional autoregressive learning. Prior autoregressive approaches flattened the 2D grid of discrete tokens into a 1D sequence using methods like row-major raster scan, z-curve, or spiral order. Once the tokens were flattened, these AR models extracted a set of sequences from the dataset and trained an autoregressive model to maximize the likelihood, factorized into the product of T conditional probabilities, using next-token prediction.
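
The three flattening orders mentioned above can be sketched as functions that return grid positions in visiting order. This is a minimal illustration on a toy 4×4 grid; the function names are hypothetical, the z-curve version assumes a power-of-two side length, and the spiral runs clockwise from the top-left corner, which is only one of several possible spiral conventions.

```python
from typing import List, Tuple

Pos = Tuple[int, int]  # (row, col)


def raster_order(n: int) -> List[Pos]:
    # Row-major: left to right, then top to bottom.
    return [(r, c) for r in range(n) for c in range(n)]


def z_curve_order(n: int) -> List[Pos]:
    # Morton order: interleave the bits of col (even bits) and row (odd bits).
    def morton(pos: Pos) -> int:
        r, c = pos
        key = 0
        for bit in range(n.bit_length()):
            key |= ((c >> bit) & 1) << (2 * bit)
            key |= ((r >> bit) & 1) << (2 * bit + 1)
        return key
    return sorted(raster_order(n), key=morton)


def spiral_order(n: int) -> List[Pos]:
    # Clockwise spiral from the top-left corner inwards.
    top, bottom, left, right = 0, n - 1, 0, n - 1
    out: List[Pos] = []
    while top <= bottom and left <= right:
        out += [(top, c) for c in range(left, right + 1)]
        out += [(r, right) for r in range(top + 1, bottom + 1)]
        if top < bottom:
            out += [(bottom, c) for c in range(right - 1, left - 1, -1)]
        if left < right:
            out += [(r, left) for r in range(bottom - 1, top, -1)]
        top, bottom, left, right = top + 1, bottom - 1, left + 1, right - 1
    return out


grid = [[4 * r + c for c in range(4)] for r in range(4)]  # toy token ids
orders = [("raster", raster_order), ("z-curve", z_curve_order),
          ("spiral", spiral_order)]
for name, order in orders:
    print(name, [grid[r][c] for r, c in order(4)])
```

Every order visits each token exactly once; they differ only in which neighbors precede a given token, which is exactly the choice a unidirectional model is forced to make.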

Visual AutoRegressive Modeling via Next-Scale Prediction

The VAR framework reconceptualizes autoregressive modeling on images by shifting from next-token prediction to next-scale prediction, under which the autoregressive unit is an entire token map rather than a single token. The model first quantizes the feature map into multi-scale token maps, each with a higher resolution than the previous one, culminating in a map that matches the resolution of the original feature map. To do so, the VAR framework develops a new multi-scale quantization autoencoder that encodes an image into the multi-scale discrete token maps needed for VAR learning. It employs the same architecture as VQGAN, but with a modified multi-scale quantization layer, with the algorithms demonstrated in the following image.
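
The multi-scale encoding idea can be sketched as residual, coarse-to-fine quantization: at each scale the current residual is downsampled and quantized into a token map, and the looked-up codes are upsampled and subtracted before moving to the next finer scale. This is a simplified NumPy sketch under our own assumptions: power-of-two scales, average-pool downsampling, nearest-neighbor upsampling, a random toy codebook shared across scales that includes a zero vector, and no extra convolution after upsampling (the VAR tokenizer adds K extra convolutions). All function names are hypothetical.

```python
from typing import List, Sequence, Tuple

import numpy as np


def nearest_codes(x: np.ndarray, codebook: np.ndarray) -> np.ndarray:
    # x: (h, w, C) -> (h, w) indices of the closest codebook vectors.
    d = ((x[..., None, :] - codebook) ** 2).sum(axis=-1)
    return d.argmin(axis=-1)


def downsample(x: np.ndarray, side: int) -> np.ndarray:
    # Average-pool an (H, H, C) map to (side, side, C); side must divide H.
    f = x.shape[0] // side
    return x.reshape(side, f, side, f, -1).mean(axis=(1, 3))


def upsample(x: np.ndarray, size: int) -> np.ndarray:
    # Nearest-neighbor upsampling of a (side, side, C) map to (size, size, C).
    f = size // x.shape[0]
    return x.repeat(f, axis=0).repeat(f, axis=1)


def multi_scale_quantize(
    feat: np.ndarray, codebook: np.ndarray, scales: Sequence[int]
) -> Tuple[List[np.ndarray], List[float]]:
    """Quantize a feature map into one token map per scale, coarse to fine.

    Returns the token maps and the squared reconstruction error after each scale.
    """
    size = feat.shape[0]
    residual = feat.copy()
    token_maps: List[np.ndarray] = []
    errors: List[float] = []
    for side in scales:
        tokens = nearest_codes(downsample(residual, side), codebook)
        token_maps.append(tokens)
        # Remove what this scale already explains from the residual.
        residual = residual - upsample(codebook[tokens], size)
        errors.append(float((residual ** 2).sum()))
    return token_maps, errors


rng = np.random.default_rng(0)
feat = rng.normal(size=(16, 16, 4))  # toy encoder output, C = 4
codebook = np.vstack([np.zeros((1, 4)), rng.normal(size=(63, 4))])  # V = 64
maps, errs = multi_scale_quantize(feat, codebook, scales=(1, 2, 4, 8, 16))
print([m.shape for m in maps])  # [(1, 1), (2, 2), (4, 4), (8, 8), (16, 16)]
print([round(e, 2) for e in errs])
```

Because each code is chosen to be nearest to its block mean and the codebook contains a zero vector, the reconstruction error in this sketch can never grow from one scale to the next.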

Visual AutoRegressive: Results and Experiments

The VAR framework uses the vanilla VQVAE architecture with a multi-scale quantization scheme that adds K extra convolutions, and uses a codebook shared across all scales with a latent dimension of 32. Because the primary focus is the VAR algorithm itself, the model architecture is kept simple yet effective. The framework adopts a standard decoder-only transformer architecture similar to that of GPT-2, the only modification being the replacement of traditional layer normalization with adaptive layer normalization (AdaLN). For class-conditional synthesis, the VAR framework uses the class embedding both as the start token and as the condition for the adaptive normalization layers.
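
AdaLN replaces the fixed, learned scale and shift of layer normalization with values computed from a conditioning signal, here the class embedding. The NumPy sketch below uses the `(1 + scale)` and `shift` parameterization popularized by DiT-style models; that parameterization, the single linear projection, and all shapes are our assumptions rather than details confirmed by the VAR source.

```python
import numpy as np


def layer_norm(x: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    # Normalize each token vector to zero mean and unit variance (no affine).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def ada_ln(x: np.ndarray, cond: np.ndarray, w: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Adaptive LayerNorm: scale and shift are predicted from the condition.

    x:    (T, D) token activations.
    cond: (E,)   class embedding.
    w, b: assumed linear projection from E to 2*D values (scale, shift).
    """
    scale, shift = np.split(cond @ w + b, 2)
    return layer_norm(x) * (1.0 + scale) + shift


rng = np.random.default_rng(0)
T, D, E = 5, 8, 16
x = rng.normal(size=(T, D))
class_emb = rng.normal(size=E)
w = rng.normal(scale=0.02, size=(E, 2 * D))
b = np.zeros(2 * D)
print(ada_ln(x, class_emb, w, b).shape)  # (5, 8)
```

With a zero projection the layer reduces to plain layer normalization, so the class embedding only changes activations through the learned projection.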

State of the Art Image Generation Results

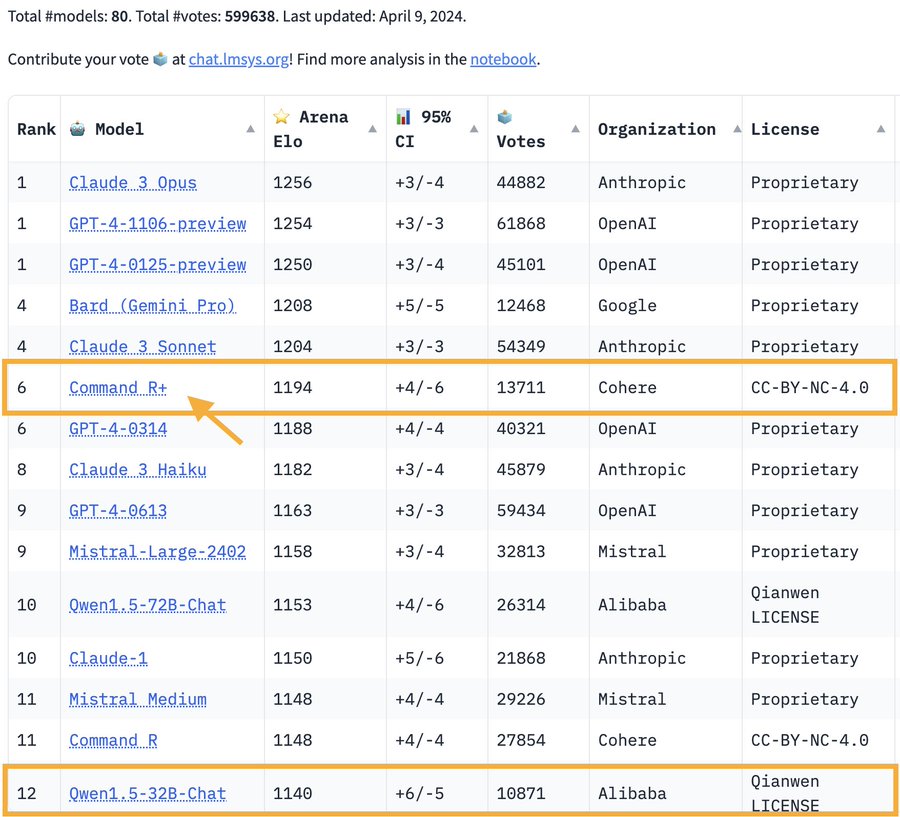

When compared against existing generative frameworks, including GANs (Generative Adversarial Networks), BERT-style masked prediction models, diffusion models, and GPT-style autoregressive models, the Visual AutoRegressive framework shows promising results, summarized in the following table.

As can be observed, the Visual AutoRegressive framework not only achieves the best FID and IS scores, but also demonstrates remarkable image generation speed, comparable to state-of-the-art models. The VAR framework also maintains satisfactory precision and recall scores, which confirms its semantic consistency. But the real surprise is the leap in performance the VAR framework delivers over traditional AR models, making it the first autoregressive model to outperform a Diffusion Transformer model, as demonstrated in the following table.

Zero-Shot Task Generalization Result

For in-painting and out-painting tasks, the VAR framework teacher-forces the ground-truth tokens outside the mask and lets the model generate only the tokens within the mask, with no class label information injected into the model. The results are demonstrated in the following image; as can be seen, the VAR model achieves acceptable results on these downstream tasks without tuning parameters or modifying the network architecture, demonstrating the generalizability of the VAR framework.
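
The masking logic can be sketched as a small change to the coarse-to-fine loop: at every scale, model predictions are kept only inside the mask and ground-truth tokens are forced everywhere else, so later scales condition on the merged map. The predictor, the per-scale mask layout, and the merge step below are our own illustrative assumptions; the source only states that tokens outside the mask are teacher-forced and that no class label is used, which is why the hypothetical predictor takes no class input.

```python
from typing import Callable, List, Sequence

Grid = List[List[int]]
Mask = List[List[bool]]


def inpaint_coarse_to_fine(
    gt_maps: Sequence[Grid],
    masks: Sequence[Mask],
    predict_next_map: Callable[[List[Grid], int], Grid],
) -> List[Grid]:
    """Zero-shot in-painting sketch for next-scale prediction.

    masks[k][i][j] is True where scale k must be generated; everywhere else
    the ground-truth token is teacher-forced into the map.
    """
    maps: List[Grid] = []
    for gt, mask in zip(gt_maps, masks):
        side = len(gt)
        pred = predict_next_map(maps, side)
        merged = [[pred[i][j] if mask[i][j] else gt[i][j] for j in range(side)]
                  for i in range(side)]
        maps.append(merged)  # later scales condition on the merged map
    return maps


def dummy_predictor(prev_maps: List[Grid], side: int) -> Grid:
    # Hypothetical stand-in for the transformer: always predicts token id -1.
    return [[-1] * side for _ in range(side)]


gt = [[[7]], [[1, 2], [3, 4]]]
masks = [[[False]], [[False, True], [False, False]]]  # hide one 2x2 token
print(inpaint_coarse_to_fine(gt, masks, dummy_predictor))
# [[[7]], [[1, -1], [3, 4]]]
```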

Final Thoughts

In this article, we have talked about Visual AutoRegressive modeling (VAR), a new visual generative framework that 1) theoretically addresses some issues inherent in standard image autoregressive (AR) models, and 2) makes language-model-based AR models surpass strong diffusion models for the first time in terms of image quality, diversity, data efficiency, and inference speed. Whereas traditional autoregressive models require a predefined order of data, VAR reconsiders how to order an image, and this is what distinguishes it from existing AR methods. Upon scaling VAR to 2 billion parameters, the developers of the VAR framework observed a clear power-law relationship between test performance and model parameters or training compute, with Pearson coefficients nearing −0.998, indicating a robust framework for performance prediction. These scaling laws and the capacity for zero-shot task generalization, both hallmarks of LLMs, have now been initially verified in VAR transformer models.
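
The Pearson coefficient quoted above measures how well a straight line fits the measurements in log-log space, where a power law appears as a line; a value near −1 means loss falls almost perfectly as a power of model size. A minimal sketch, using made-up (parameters, loss) pairs rather than the VAR results:

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def loglog_pearson(params: Sequence[float], losses: Sequence[float]) -> float:
    # The closer this is to -1, the better a decreasing power law
    # explains the data.
    return pearson([math.log(p) for p in params],
                   [math.log(loss) for loss in losses])


# Made-up measurements: an exact power law perturbed by about 1% noise.
params = [1e8, 3e8, 6e8, 1e9, 2e9]
noise = [1.00, 0.99, 1.01, 1.00, 0.99]
losses = [3.0 * p ** -0.15 * e for p, e in zip(params, noise)]
print(round(loglog_pearson(params, losses), 3))
```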
