Image generated with Ideogram.ai

Who hasn’t heard about OpenAI? The AI research laboratory has changed the world because of its famous product, ChatGPT.

It literally changed the landscape of AI implementation, and many companies now rush to become the next big thing.

Despite growing competition, OpenAI remains a go-to company for Generative AI business needs because it offers some of the best models along with continuous support. The company provides many state-of-the-art Generative AI models for various tasks: text generation, image generation, Text-to-Speech, and many more.

All of the models OpenAI offers are available via API calls. With a few lines of Python code, you can start using them.

In this article, we will explore how to use the OpenAI API with Python and the various tasks you can perform with it. I hope you learn a lot from this article.

OpenAI API Setup

To follow this article, there are a few things you need to prepare.

The most important thing you need is an API key from OpenAI, as you cannot access the OpenAI models without one. To get a key, register for an OpenAI account and create an API key on the account page. After you receive the key, save it somewhere safe, as it will not be shown again in the OpenAI interface.

Next, you need to buy pre-paid credit to use the OpenAI API. OpenAI recently changed how its billing works: instead of paying at the end of the month, we need to purchase pre-paid credit for API calls. You can visit the OpenAI pricing page to estimate the credit you need, and check the model page to understand which model you require.

Lastly, you need to install the OpenAI Python package in your environment. You can do that using the following code.

pip install openai

Then, you need to set your OpenAI Key Environment variable using the code below.

    import os

    os.environ['OPENAI_API_KEY'] = 'YOUR API KEY'
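Hard-coding the key in a script makes it easy to leak by accident. As a minimal sketch, assuming the key was exported in your shell beforehand, the helper below reads `OPENAI_API_KEY` and fails early with a clear message when it is missing. The name `require_api_key` is hypothetical and is not part of the openai package.

```python
import os


def require_api_key(env=None, name="OPENAI_API_KEY"):
    """Return the API key from the environment or raise a clear error.

    Hypothetical helper for illustration; not an OpenAI function.
    """
    env = os.environ if env is None else env
    key = env.get(name, "").strip()
    if not key:
        raise RuntimeError(f"{name} is not set; export it before running the script")
    return key
```

Calling `require_api_key()` at the top of a script surfaces a missing key before any API request is made.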

With everything set, let’s start exploring the API of the OpenAI models with Python.

OpenAI API Text Generations

The star of the OpenAI API is its Text Generation models. This family of Large Language Models produces text output from a text input called a prompt. Prompts are basically instructions on what we expect from the model, such as analyzing text, drafting documents, and many more.

Let’s start by executing a simple Text Generation API call. We will use the GPT-3.5-Turbo model from OpenAI as the base model. It’s not the most advanced model, but the cheaper models are often enough to perform text-related tasks.

    from openai import OpenAI

    client = OpenAI()

    completion = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": "Generate me 3 Jargons that I can use for my Social Media content as a Data Scientist content creator"}
        ]
    )

    print(completion.choices[0].message.content)

- "Unleashing the power of predictive analytics to drive data-driven decisions!"

- "Diving deep into the data ocean to uncover valuable insights."

- "Transforming raw data into actionable intelligence through advanced algorithms."

The API Call for the Text Generation model uses the API Endpoint chat.completions to create the text response from our prompt.

There are two required parameters for text Generation: model and messages.

For the model, you can check the list of models that you can use on the related model page.

As for the messages, we pass a list of dictionaries, each with two keys: role and content. The role key specifies who sent the message in the conversation. There are three roles: system, user, and assistant.

With these roles, we can set the model's behavior (system) and show an example of how the model should answer our prompt (assistant).
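To make the structure of messages concrete, here is a small sketch that assembles the list from a system prompt and a sequence of turns. `build_messages` and `VALID_ROLES` are hypothetical names for illustration, and the role check covers only the three roles described in this article.

```python
VALID_ROLES = {"system", "user", "assistant"}


def build_messages(system_prompt, turns):
    """Build a messages list from a system prompt and (role, content) turns."""
    messages = [{"role": "system", "content": system_prompt}]
    for role, content in turns:
        if role not in VALID_ROLES:
            raise ValueError(f"unknown role: {role!r}")
        messages.append({"role": role, "content": content})
    return messages


messages = build_messages(
    "You are a helpful assistant.",
    [
        ("user", "Generate me 3 jargons for my data science content."),
        ("assistant", "Sure, here are three jargons: ..."),
        ("user", "Great, can you also provide 3 content ideas based on them?"),
    ],
)
print([m["role"] for m in messages])  # ['system', 'user', 'assistant', 'user']
```

The resulting list can be passed directly as the messages argument of a chat.completions call.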

Let’s extend the previous code example with the assistant role to guide the model. Additionally, we will explore some parameters of the Text Generation model that can improve its results.

    completion = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": "Generate me 3 jargons that I can use for my Social Media content as a Data Scientist content creator."},
            {"role": "assistant", "content": "Sure, here are three jargons: Data Wrangling is the key, Predictive Analytics is the future, and Feature Engineering help your model."},
            {"role": "user", "content": "Great, can you also provide me with 3 content ideas based on these jargons?"}
        ],
        max_tokens=150,
        temperature=0.7,
        top_p=1,
        frequency_penalty=0
    )

    print(completion.choices[0].message.content)

Of course! Here are three content ideas based on the jargons provided:

- "Unleashing the Power of Data Wrangling: A Step-by-Step Guide for Data Scientists" — Create a blog post or video tutorial showcasing best practices and tools for data wrangling in a real-world data science project.

- "The Future of Predictive Analytics: Trends and Innovations in Data Science" — Write a thought leadership piece discussing emerging trends and technologies in predictive analytics and how they are shaping the future of data science.

- "Mastering Feature Engineering: Techniques to Boost Model Performance" — Develop an infographic or social media series highlighting different feature engineering techniques and their impact on improving the accuracy and efficiency of machine learning models.

The resulting output follows the example that we provided to the model. Using the role assistant is useful if we have a certain style or result we want the model to follow.
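The call above caps the reply with max_tokens=150. When a reply hits that cap, the response reports a finish_reason of "length" on the choice, which is a useful signal that the text was cut off. The sketch below shows one way to check it. `extract_text` is a hypothetical helper, and a stand-in object replaces the real API response so the example runs offline.

```python
from types import SimpleNamespace


def extract_text(completion):
    """Return (text, truncated) from a chat-completion-shaped object."""
    choice = completion.choices[0]
    truncated = choice.finish_reason == "length"
    return choice.message.content, truncated


# Stand-in shaped like the API response (an assumption for offline use).
fake_completion = SimpleNamespace(
    choices=[
        SimpleNamespace(
            finish_reason="length",
            message=SimpleNamespace(content="Of course! Here are three content"),
        )
    ]
)

text, truncated = extract_text(fake_completion)
print(truncated)  # True
```

If truncated is True, raising max_tokens or asking for a shorter answer are the usual remedies.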

As for the parameters, here are simple explanations of each parameter that we used:

- max_tokens: This parameter sets the maximum number of tokens the model can generate. Tokens are pieces of words, so the limit does not map one-to-one to a word count.

- temperature: This parameter controls the unpredictability of the model's output. A higher temperature results in outputs that are more varied and imaginative, while lower values make the output more focused and deterministic. The API accepts values from 0 to 2.

- top_p: Also known as nucleus sampling, this parameter determines the subset of the probability distribution from which the model draws its output. For instance, a top_p value of 0.1 means that the model samples only from the most likely tokens that together make up the top 10% of the probability mass. Its values range from 0 to 1, with higher values allowing for greater output diversity.

- frequency_penalty: This penalizes repeated tokens in the model's output. The penalty value can range from -2 to 2, where positive values discourage the repetition of tokens, and negative values do the opposite, encouraging repeated word use. A value of 0 indicates that no penalty is applied for repetition.
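To build intuition for temperature and top_p, the toy sketch below applies both ideas to three made-up token scores. It illustrates the general sampling technique only; it is not OpenAI's implementation, and the function names and scores are assumptions chosen for the example.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities; temperature must be > 0."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def nucleus(probs, top_p):
    """Return indices of the smallest token set whose mass reaches top_p."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in ranked:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    return kept


logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
cool = softmax_with_temperature(logits, 0.5)
warm = softmax_with_temperature(logits, 2.0)
print(max(cool) > max(warm))  # True: a low temperature sharpens the distribution

probs = softmax_with_temperature(logits, 1.0)
print(nucleus(probs, 0.5))  # [0]
print(nucleus(probs, 0.9))  # [0, 1]
```

A lower top_p leaves fewer candidate tokens to sample from, which is why it reduces output diversity.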

Lastly, you can change the model output to the JSON format with the following code.

    completion = client.chat.completions.create(
        model="gpt-3.5-turbo",
        response_format={"type": "json_object"},
        messages=[
            {"role": "system", "content": "You are a helpful assistant designed to output JSON."},
            {"role": "user", "content": "Generate me 3 Jargons that I can use for my Social Media content as a Data Scientist content creator"}
        ]
    )

    print(completion.choices[0].message.content)

    {
      "jargons": [
        "Leveraging predictive analytics to unlock valuable insights",
        "Delving into the intricacies of advanced machine learning algorithms",
        "Harnessing the power of big data to drive data-driven decisions"
      ]
    }

The result is in JSON format and adheres to the prompt we input into the model.
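The reply still arrives as a string, so it needs to be parsed before use. Here is a minimal sketch using the standard json module on the sample output above. The "jargons" key was chosen by the model in this run, so treat it as an assumption; `parse_jargons` is a hypothetical helper.

```python
import json

# Sample reply copied from the output above; in practice this would be
# completion.choices[0].message.content.
raw = """
{
  "jargons": [
    "Leveraging predictive analytics to unlock valuable insights",
    "Delving into the intricacies of advanced machine learning algorithms",
    "Harnessing the power of big data to drive data-driven decisions"
  ]
}
"""


def parse_jargons(raw_reply):
    """Parse the JSON reply and return the list under the "jargons" key."""
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError as exc:
        raise ValueError("model reply was not valid JSON") from exc
    return data.get("jargons", [])


jargons = parse_jargons(raw)
print(len(jargons))  # 3
```

Naming the expected keys in the prompt makes it more likely that the reply has the structure your parsing code expects.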

For the complete Text Generation API documentation, you can check out their dedicated page.

OpenAI Image Generations

OpenAI models are not limited to text generation use cases; the API can also be called for image generation.

Using the DALL·E model, we can generate an image from a text prompt. The simplest way to do it is with the following code.

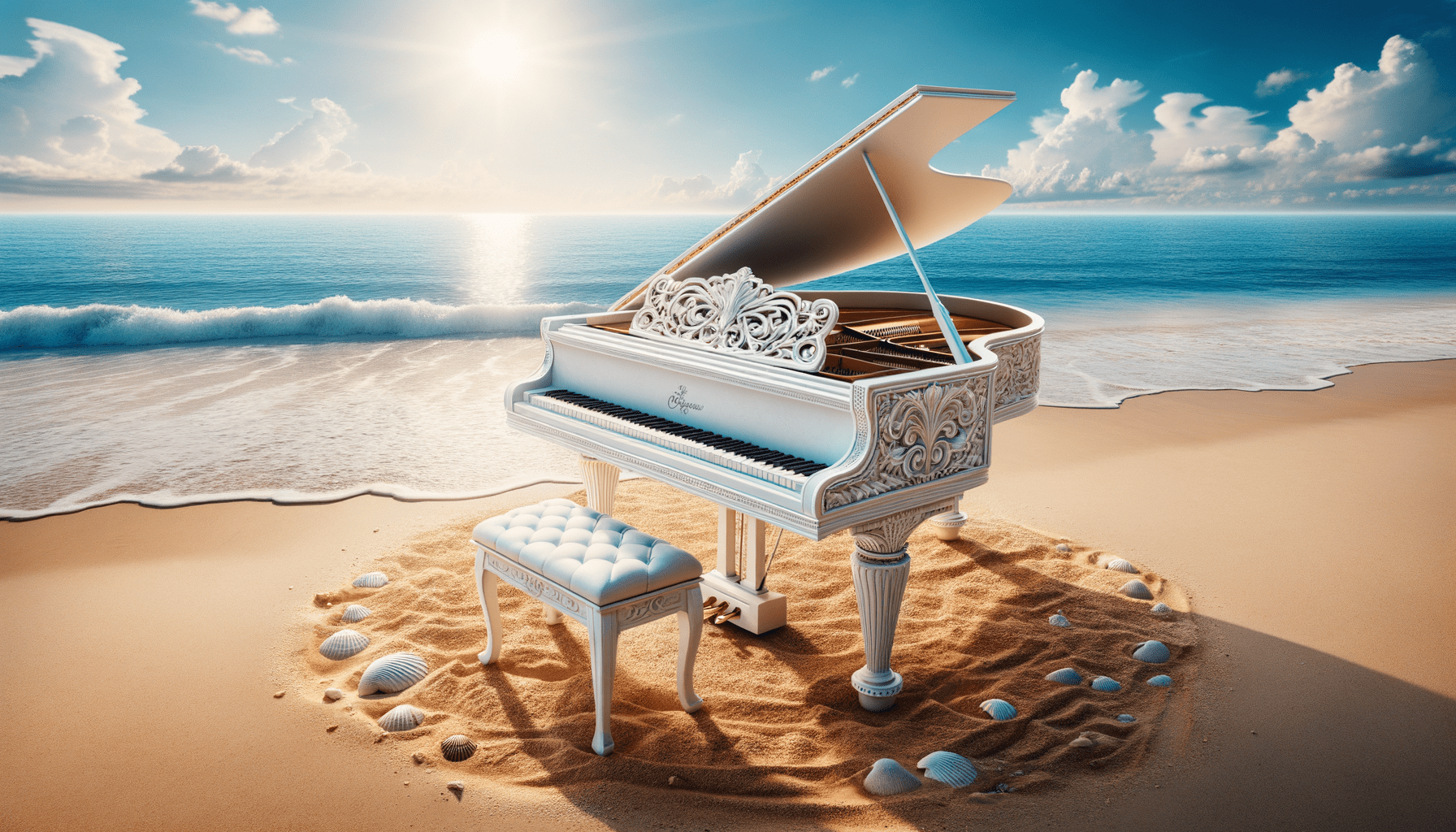

    from openai import OpenAI
    from IPython.display import Image

    client = OpenAI()

    response = client.images.generate(
        model="dall-e-3",
        prompt="White Piano on the Beach",
        size="1792x1024",
        quality="hd",
        n=1,
    )

    image_url = response.data[0].url
    Image(url=image_url)

Image generated with DALL·E 3

For the parameters, here are the explanations:

- model: The image generation model to use. Currently, the API only supports DALL·E 3 and DALL·E 2 models.

- prompt: This is the textual description based on which the model will generate an image.

- size: Determines the resolution of the generated image. There are three choices for the DALL·E 3 model (1024×1024, 1024×1792 or 1792×1024).

- quality: This parameter influences the quality of the generated image. If generation time matters, “standard” is faster than “hd.”

- n: Specifies the number of images to generate based on the prompt. DALL·E 3 can only generate one image at a time. DALL·E 2 can generate up to 10 at a time.
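The size and n constraints above are easy to check before sending a request. The sketch below is one way to do it. `check_image_request` is a hypothetical helper, and the DALL·E 2 sizes (256x256, 512x512, 1024x1024) come from the API reference rather than from this article, so verify them against the current documentation.

```python
ALLOWED_SIZES = {
    "dall-e-3": {"1024x1024", "1024x1792", "1792x1024"},
    # Assumption: DALL-E 2 sizes as listed in the API reference.
    "dall-e-2": {"256x256", "512x512", "1024x1024"},
}
MAX_IMAGES = {"dall-e-3": 1, "dall-e-2": 10}


def check_image_request(model, size, n=1):
    """Validate model, size, and n; return them as keyword arguments."""
    if model not in ALLOWED_SIZES:
        raise ValueError(f"unsupported model: {model!r}")
    if size not in ALLOWED_SIZES[model]:
        raise ValueError(f"{model} does not support size {size!r}")
    if not 1 <= n <= MAX_IMAGES[model]:
        raise ValueError(f"{model} supports n from 1 to {MAX_IMAGES[model]}")
    return {"model": model, "size": size, "n": n}


print(check_image_request("dall-e-3", "1792x1024"))
```

The returned dictionary can be unpacked into the images.generate call together with the prompt.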

It is also possible to generate a variation image from the existing image, although it’s only available using the DALL·E 2 model. The API only accepts square PNG images below 4 MB as well.

    from openai import OpenAI
    from IPython.display import Image

    client = OpenAI()

    with open("white_piano_ori.png", "rb") as image_file:
        response = client.images.create_variation(
            image=image_file,
            n=2,
            size="1024x1024"
        )

    image_url = response.data[0].url
    Image(url=image_url)

The image might not be as good as the DALL·E 3 generations as it is using the older model.
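Because the variation endpoint only accepts square PNG images below 4 MB, it can help to check a file locally before uploading it. The sketch below reads the width and height from the PNG header using only the standard library. `check_variation_input` is a hypothetical helper, and it inspects only the first 24 bytes rather than validating the whole file.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
MAX_BYTES = 4 * 1024 * 1024  # the 4 MB limit mentioned above


def check_variation_input(data):
    """Validate raw image bytes: PNG, square, and below 4 MB."""
    if len(data) >= MAX_BYTES:
        raise ValueError("image must be below 4 MB")
    # A PNG starts with an 8-byte signature followed by the IHDR chunk.
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("image must be a PNG file")
    # IHDR stores width and height as big-endian 32-bit integers.
    width, height = struct.unpack(">II", data[16:24])
    if width != height:
        raise ValueError(f"image must be square, got {width}x{height}")
    return width, height
```

For example, `check_variation_input(open("white_piano_ori.png", "rb").read())` returns the image dimensions when the file passes all three checks.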

OpenAI Vision

OpenAI also provides a model that can understand image input. This model is called the Vision model, sometimes called GPT-4V. It can answer questions about the images we provide.

Let’s try out the Vision model API. In this example, I will use the white piano image we generated with the DALL·E 3 model and stored locally. I will also create a function that takes the image path and returns the image description text. Don’t forget to change the api_key variable to your API Key.

    import base64
    import requests

    def provide_image_description(img_path):
        api_key = 'YOUR-API-KEY'

        # Encode the image file as a base64 string
        def encode_image(image_path):
            with open(image_path, "rb") as image_file:
                return base64.b64encode(image_file.read()).decode('utf-8')

        base64_image = encode_image(img_path)

        headers = {
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}"
        }

        # The message content combines a text prompt with the encoded image
        payload = {
            "model": "gpt-4-vision-preview",
            "messages": [
                {
                    "role": "user",
                    "content": [
                        {
                            "type": "text",
                            "text": """Can you describe this image? """
                        },
                        {
                            "type": "image_url",
                            "image_url": {
                                "url": f"data:image/jpeg;base64,{base64_image}"
                            }
                        }
                    ]
                }
            ],
            "max_tokens": 300
        }

        response = requests.post("https://api.openai.com/v1/chat/completions", headers=headers, json=payload)

        return response.json()['choices'][0]['message']['content']

This image features a grand piano placed on a serene beach setting. The piano is white, indicating a finish that is often associated with elegance. The instrument is situated right at the edge of the shoreline, where the gentle waves lightly caress the sand, creating a foam that just touches the base of the piano and the matching stool. The beach surroundings imply a sense of tranquility and isolation with clear blue skies, fluffy clouds in the distance, and a calm sea expanding to the horizon. Scattered around the piano on the sand are numerous seashells of various sizes and shapes, highlighting the natural beauty and serene atmosphere of the setting. The juxtaposition of a classical music instrument in a natural beach environment creates a surreal and visually poetic composition.

You can tweak the text values in the dictionary above to match your Vision model requirements.
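The function above labels every image as image/jpeg in the data URL, even when the file is a PNG. As a small sketch, the helper below derives the MIME type from the file extension using the standard mimetypes module. `image_to_data_url` is a hypothetical name, and guessing from the extension is an assumption that the file is named correctly.

```python
import base64
import mimetypes


def image_to_data_url(image_path):
    """Encode an image file as a base64 data URL with a matching MIME type."""
    mime_type, _ = mimetypes.guess_type(image_path)
    if mime_type is None or not mime_type.startswith("image/"):
        raise ValueError(f"cannot determine image type for {image_path!r}")
    with open(image_path, "rb") as image_file:
        encoded = base64.b64encode(image_file.read()).decode("utf-8")
    return f"data:{mime_type};base64,{encoded}"
```

The returned string can be used as the url value inside the image_url entry of the payload.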

OpenAI Audio Generation

OpenAI also provides a Text-to-Speech model to generate audio from text. It’s very easy to use, although the voice narration styles are limited. The model also supports many languages, which you can see on their language support page.

To generate the audio, you can use the code below.

    from openai import OpenAI

    client = OpenAI()

    speech_file_path = "speech.mp3"

    response = client.audio.speech.create(
        model="tts-1",
        voice="alloy",
        input="I love data science and machine learning"
    )

    response.stream_to_file(speech_file_path)

You should see the audio file in your directory. Try to play it and see if it’s up to your standard.

Currently, there are only a few parameters you can use for the Text-to-Speech model:

- model: The Text-to-Speech model to use. Only two models are available: tts-1, which is optimized for speed, and tts-1-hd, which is optimized for quality.

- voice: The voice style to use. All the voices are optimized for English. The available voices are alloy, echo, fable, onyx, nova, and shimmer.

- response_format: The audio format file. Currently, the supported formats are mp3, opus, aac, flac, wav, and pcm.

- speed: The generated audio speed. You can select values from 0.25 to 4.

- input: The text to create the audio. Currently, the model only supports up to 4096 characters.
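Since input is limited to 4096 characters, longer texts have to be split before they are sent. The sketch below splits on whitespace so that words stay intact. `split_for_tts` is a hypothetical helper, and splitting on word boundaries rather than sentences is a simplifying assumption.

```python
def split_for_tts(text, limit=4096):
    """Split text into chunks of at most `limit` characters on word boundaries."""
    chunks, current = [], ""
    for word in text.split():
        # Hard-split a single word that is longer than the limit.
        while len(word) > limit:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(word[:limit])
            word = word[limit:]
        candidate = f"{current} {word}" if current else word
        if len(candidate) <= limit:
            current = candidate
        else:
            chunks.append(current)
            current = word
    if current:
        chunks.append(current)
    return chunks


long_text = "I love data science and machine learning. " * 300
chunks = split_for_tts(long_text)
print(all(len(chunk) <= 4096 for chunk in chunks))  # True
```

Each chunk can then be passed as input to a separate audio.speech.create call, producing one audio file per chunk.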

OpenAI Speech-to-Text

OpenAI provides models to transcribe and translate audio data. Using the Whisper model, we can transcribe audio in any supported language into text and translate it into English.

Let’s try a simple transcription from the audio file we generated previously.

    from openai import OpenAI

    client = OpenAI()

    audio_file = open("speech.mp3", "rb")

    transcription = client.audio.transcriptions.create(
        model="whisper-1",
        file=audio_file
    )

    print(transcription.text)

I love data science and machine learning.
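Before uploading your own recordings, it may be worth checking the file locally. The sketch below assumes the limits stated in the OpenAI speech-to-text guide (uploads up to 25 MB, in mp3, mp4, mpeg, mpga, m4a, wav, or webm format); these values are not from this article, so verify them against the current documentation. `check_audio_upload` is a hypothetical helper.

```python
import os

# Assumptions taken from the OpenAI speech-to-text guide; verify before use.
SUPPORTED_EXTENSIONS = {".mp3", ".mp4", ".mpeg", ".mpga", ".m4a", ".wav", ".webm"}
MAX_UPLOAD_BYTES = 25 * 1024 * 1024


def check_audio_upload(path):
    """Check the extension and size of an audio file; return its size in bytes."""
    extension = os.path.splitext(path)[1].lower()
    if extension not in SUPPORTED_EXTENSIONS:
        raise ValueError(f"unsupported audio format: {extension or 'none'}")
    size = os.path.getsize(path)
    if size > MAX_UPLOAD_BYTES:
        raise ValueError(f"file is {size} bytes; the limit is {MAX_UPLOAD_BYTES}")
    return size
```

Files above the limit would need to be compressed or split into shorter segments before transcription.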

It’s also possible to translate audio files into English. The model cannot yet translate into other languages.

    from openai import OpenAI

    client = OpenAI()

    audio_file = open("speech.mp3", "rb")

    translate = client.audio.translations.create(
        model="whisper-1",
        file=audio_file
    )

    print(translate.text)

Conclusion

We have explored several model services that OpenAI provides: Text Generation, Image Generation, Vision, Audio Generation (Text-to-Speech), and Speech-to-Text. Each model has its own API parameters and specifications that you need to learn before using it.

Cornellius Yudha Wijaya is a data science assistant manager and data writer. While working full-time at Allianz Indonesia, he loves to share Python and data tips via social media and writing media. Cornellius writes on a variety of AI and machine learning topics.
