Image by Author

Building simple projects is a great way to learn Python, or any programming language for that matter. You can learn the syntax to write for loops, use built-in functions, read files, and much more. But it’s only when you start building something that you actually “learn”.

Following the “learning by building” approach, let’s code a simple TO-DO list app that we can run at the command line. Along the way, we’ll explore concepts like parsing command-line arguments and working with files and file paths. We’ll also revisit basics like defining custom functions.

So let’s get started!

What You’ll Build

By coding along with this tutorial, you’ll build a TO-DO list app that you can run at the command line. Okay, so what would you like the app to do?

Just like a TO-DO list on paper, you need to be able to add tasks, look up all tasks, and remove tasks (yeah, strike them through or mark them done on paper) once you’ve completed them, yes? So we’ll build an app that lets us do the following.

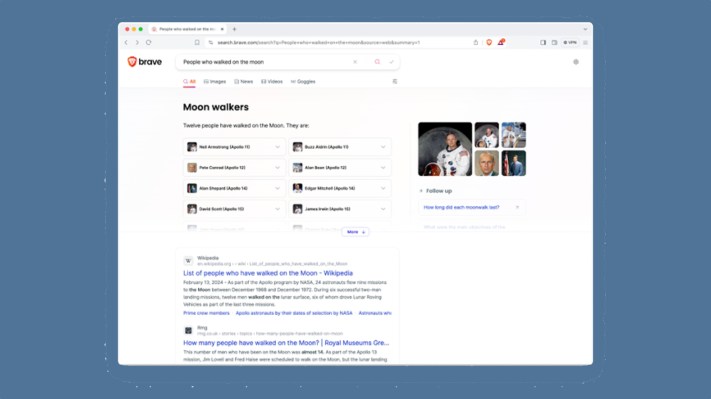

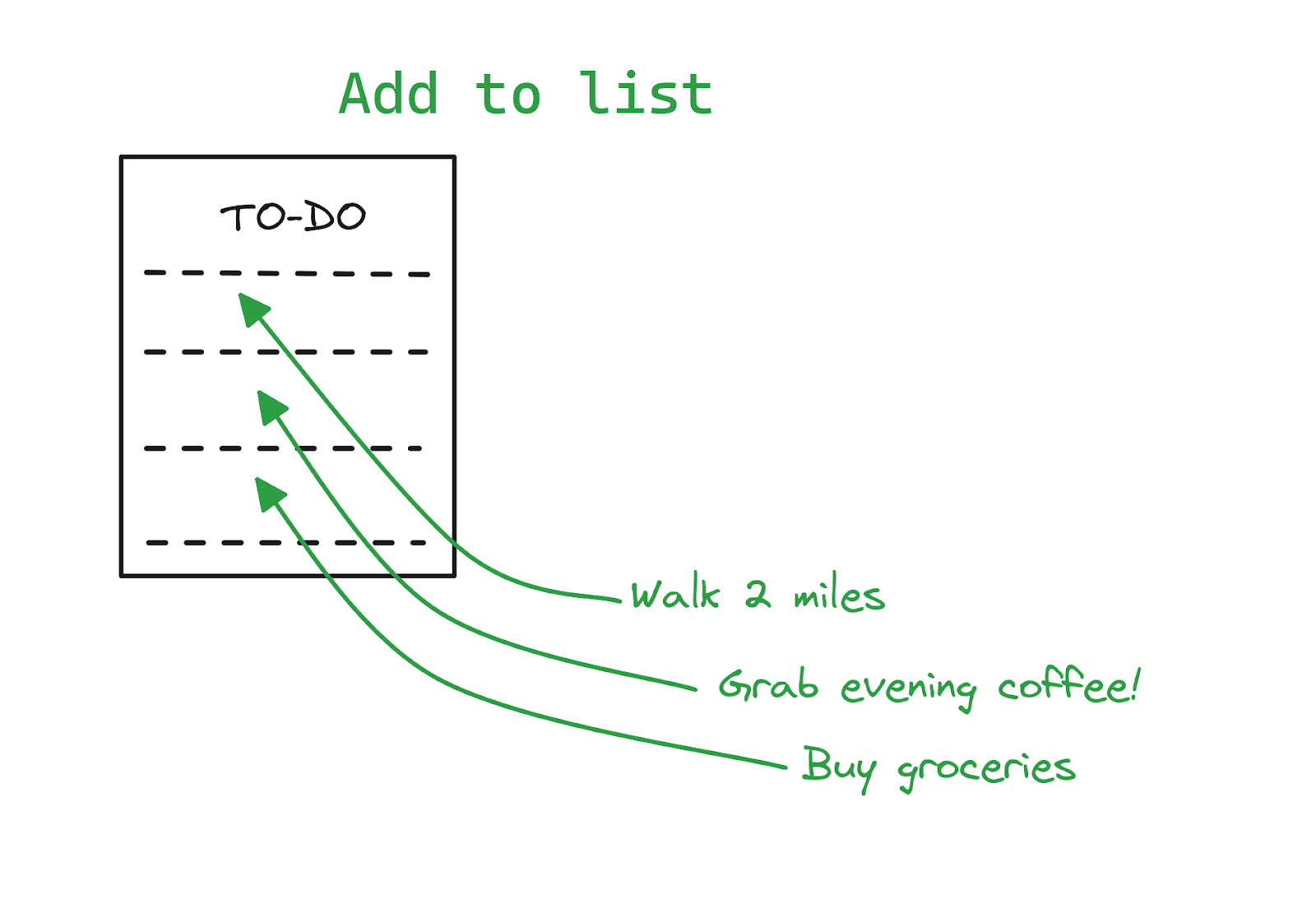

Add tasks to the list:

Image by Author

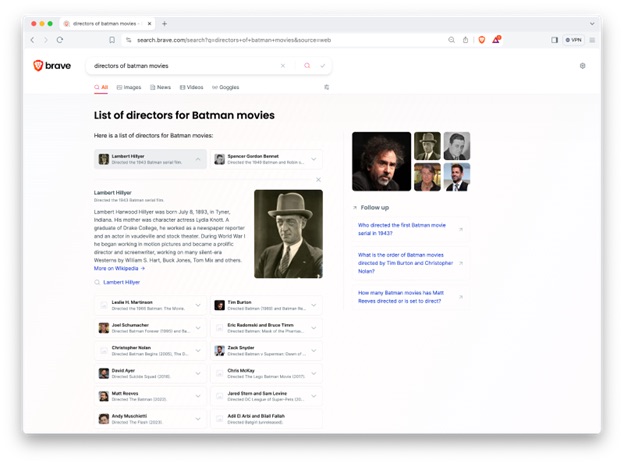

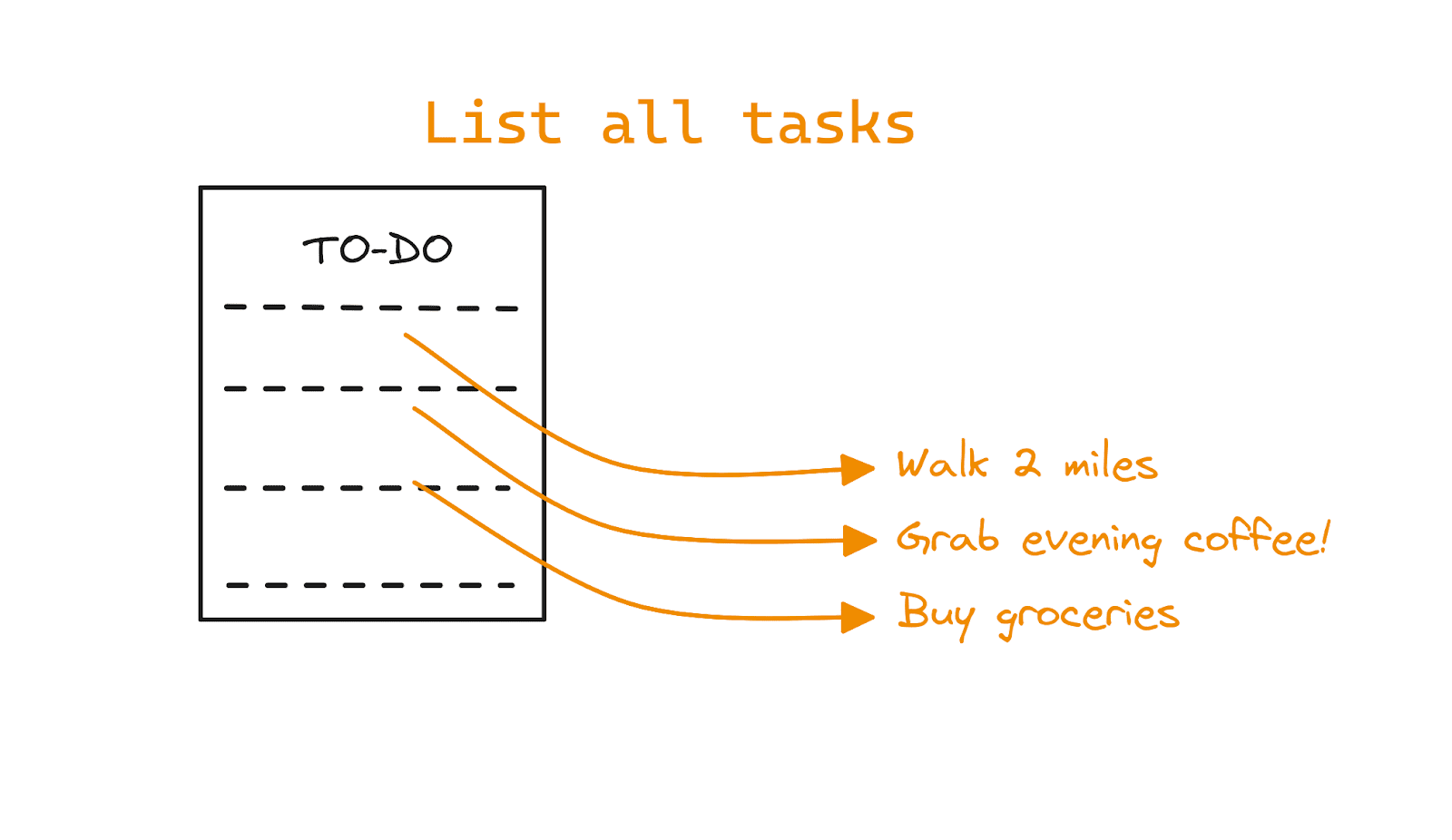

Get a list of all tasks on the list:

Image by Author

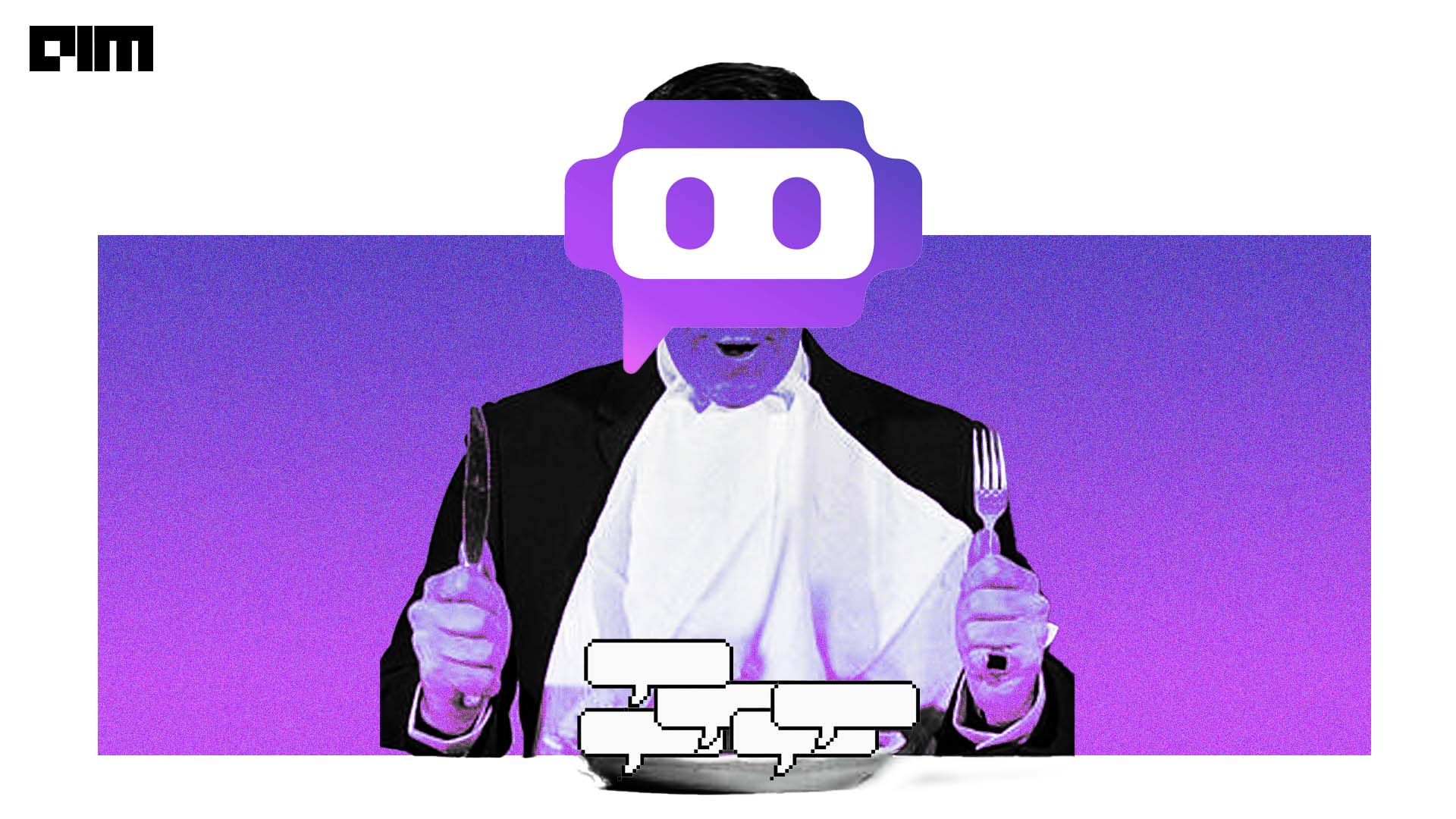

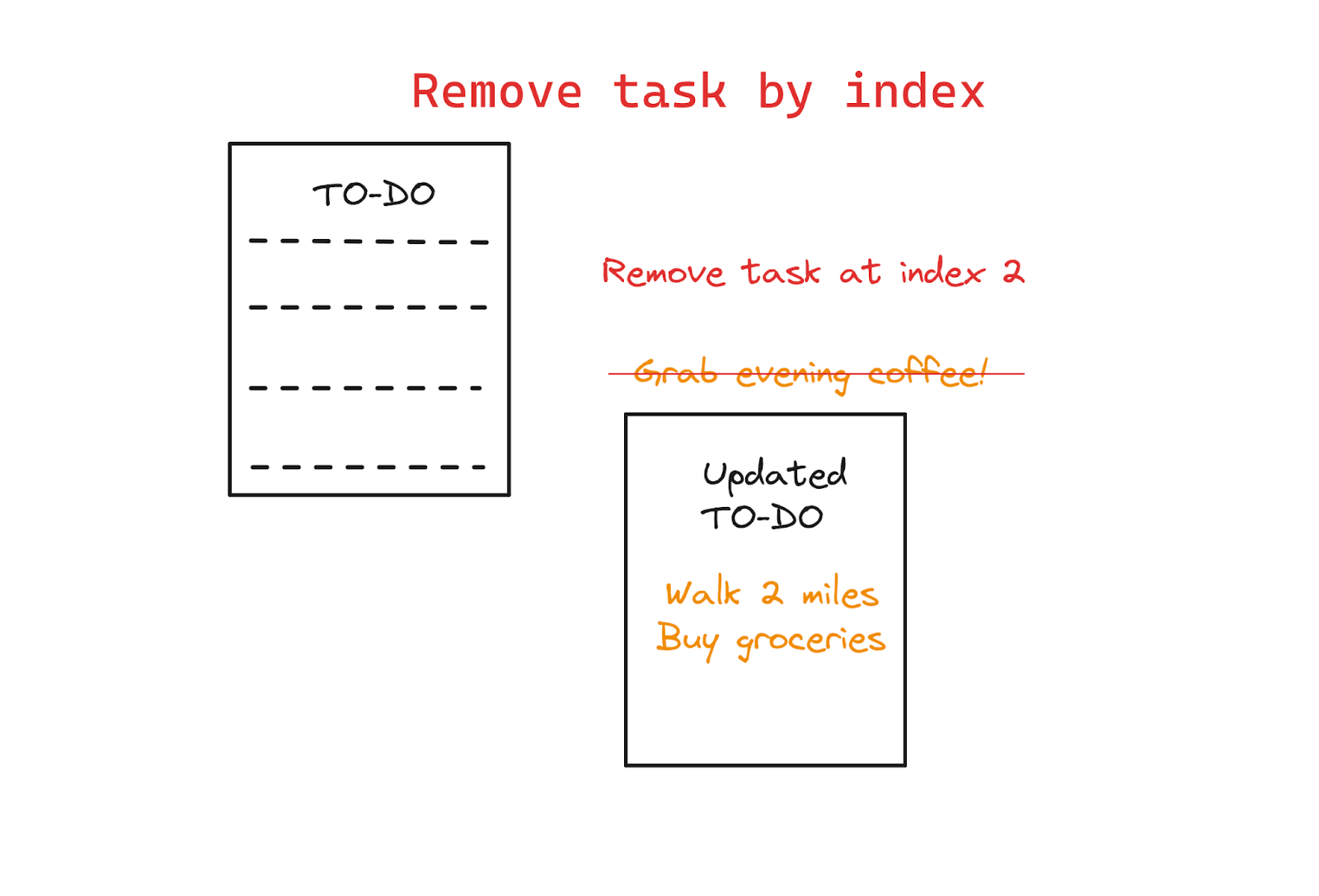

And also remove a task (using its index) after you’ve finished it:

Image by Author

Now let’s start coding!

Step 1: Get Started

First, create a directory for your project. Inside it, create a Python script named todo.py. This will be the main file for our to-do list app.

You don't need any third-party libraries for this project, so just make sure you’re using a recent version of Python. This tutorial uses Python 3.11.

Step 2: Import Necessary Modules

In the todo.py file, start by importing the required modules. For our simple to-do list app, we'll need the following:

- argparse for command-line argument parsing

- os for file operations

So let’s import them both:

```python
import argparse
import os
```

Step 3: Set Up the Argument Parser

Recall that we’ll use command-line flags to add, list, and remove tasks. We can use both short and long options for each argument. For our app, let’s use the following:

- -a or --add to add a task

- -l or --list to list all tasks

- -r or --remove to remove a task by its index

Here’s where we’ll use the argparse module to parse the arguments provided at the command line. We define the create_parser() function that does the following:

- Initializes an ArgumentParser object (let’s call it parser).

- Adds arguments for adding, listing, and removing tasks by calling the add_argument() method on the parser object.

When adding arguments, we specify both the short and long options as well as the corresponding help message. So here’s the create_parser() function:

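```python
def create_parser():
    parser = argparse.ArgumentParser(description="Command-line Todo List App")
    # -a/--add takes the task text as its value
    parser.add_argument("-a", "--add", metavar="", help="Add a new task")
    # -l/--list is a flag: True when present, False otherwise
    parser.add_argument("-l", "--list", action="store_true", help="List all tasks")
    # -r/--remove takes the (1-based) index of the task to remove
    parser.add_argument("-r", "--remove", metavar="", help="Remove a task by index")
    return parser
```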
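To see what this parser produces without switching to a terminal, you can pass parse_args() an explicit list of strings instead of letting it read sys.argv. The minimal sketch below repeats create_parser() so it runs on its own; the sample task text "Buy milk" is made up for illustration.

```python
import argparse


def create_parser():
    # Same parser as above: -a/--add, -l/--list, -r/--remove
    parser = argparse.ArgumentParser(description="Command-line Todo List App")
    parser.add_argument("-a", "--add", metavar="", help="Add a new task")
    parser.add_argument("-l", "--list", action="store_true", help="List all tasks")
    parser.add_argument("-r", "--remove", metavar="", help="Remove a task by index")
    return parser


parser = create_parser()

# parse_args() accepts an explicit argument list, handy for experiments and tests
add_args = parser.parse_args(["-a", "Buy milk"])
print(add_args.add, add_args.list, add_args.remove)  # Buy milk False None

# The long option behaves the same way; the value of --remove is kept as a string
remove_args = parser.parse_args(["--remove", "2"])
print(repr(remove_args.remove))  # '2'
```

Because --remove is stored as a string, main() converts it with int() before calling remove_task().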

Step 4: Add Task Management Functions

We now need to define functions to perform the following task management operations:

- Adding a task

- Listing all tasks

- Removing a task by its index

The following add_task() function uses a plain text file, tasks.txt, to store the items on the TO-DO list. It opens the file in append mode, which creates the file if it doesn’t exist yet, and adds the task to the end of the file:

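```python
def add_task(task):
    # Append mode ("a") creates tasks.txt if needed and writes at the end of the file
    with open("tasks.txt", "a") as file:
        file.write(task + "\n")  # one task per line
```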

Notice how we’ve used the with statement to manage the file. It guarantees that the file is closed once the block finishes, even if an error occurs inside it, which prevents resource leaks.
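If you’d like to see this for yourself, here is a small sketch that raises a deliberate (simulated) error inside a with block and then checks that the file was closed anyway. It writes to a temporary directory, an assumption made so the experiment doesn’t touch your real tasks.txt.

```python
import os
import tempfile

# Work in a throwaway directory so the real tasks.txt is left alone
tmp_dir = tempfile.mkdtemp()
path = os.path.join(tmp_dir, "tasks.txt")

try:
    with open(path, "a") as file:
        file.write("Walk 2 miles\n")
        # Simulate something going wrong halfway through the operation
        raise RuntimeError("simulated failure")
except RuntimeError as exc:
    print(f"Caught: {exc}")

# The with statement closed the file even though the block raised
print(file.closed)  # True

# Closing also flushed the line written before the error
with open(path) as f:
    print(repr(f.read()))  # 'Walk 2 miles\n'
```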

To learn more, read the section on context managers for efficient resource handling in this tutorial on writing efficient Python code.

The list_tasks() function prints every task along with its index. Because tasks.txt is only created when you add the first task, the function first checks whether the file exists. If it does, it reads and prints the tasks; if it doesn’t, it prints a helpful message instead:

```python
def list_tasks():
    if os.path.exists("tasks.txt"):
        with open("tasks.txt", "r") as file:
            tasks = file.readlines()
        # Number tasks from 1 and strip the trailing newline from each line
        for index, task in enumerate(tasks, start=1):
            print(f"{index}. {task.strip()}")
    else:
        print("No tasks found.")
```
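One edge case worth knowing about: once you remove every task, tasks.txt still exists but is empty, so list_tasks() prints nothing at all. Below is a minimal sketch of a variant that treats an empty file like a missing one. The path parameter is a hypothetical addition (the original always uses tasks.txt in the current directory) so the function can be pointed at a temporary file.

```python
import os
import tempfile


def list_tasks(path="tasks.txt"):
    """Print each task with a 1-based index, or a message if there are none.

    The path parameter is a hypothetical addition that makes the function easy to test.
    """
    tasks = []
    if os.path.exists(path):
        with open(path, "r") as file:
            tasks = file.readlines()
    # A missing file and an empty file (e.g. after removing every task) are treated alike
    if not tasks:
        print("No tasks found.")
        return
    for index, task in enumerate(tasks, start=1):
        print(f"{index}. {task.strip()}")


# Try it on an empty file, like the one left behind after removing the last task
path = os.path.join(tempfile.mkdtemp(), "tasks.txt")
with open(path, "w"):
    pass
list_tasks(path)  # No tasks found.
```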

We also implement a remove_task() function to remove a task by its index. It first reads all the tasks into memory. Opening the file in write mode then truncates it, so we write back every task except the one at the given index:

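```python
def remove_task(index):
    if os.path.exists("tasks.txt"):
        # Read every task into memory before the file is truncated
        with open("tasks.txt", "r") as file:
            tasks = file.readlines()
        # Write mode ("w") truncates the file; rewrite all tasks except the one at index
        with open("tasks.txt", "w") as file:
            for i, task in enumerate(tasks, start=1):
                if i != index:
                    file.write(task)
        print("Task removed successfully.")
    else:
        print("No tasks found.")
```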
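As written, remove_task() reports success even when the index doesn’t match any task (say, -r 10 on a three-item list): it rewrites the file unchanged and prints the message anyway. Here is a minimal sketch of a stricter version that checks the index first. The path parameter, the True/False return value, and the error wording are all hypothetical additions, not part of the original app.

```python
import os
import tempfile


def remove_task(index, path="tasks.txt"):
    """Remove the task at a 1-based index; return True on success, False otherwise.

    The path parameter and the return value are hypothetical additions for testing.
    """
    tasks = []
    if os.path.exists(path):
        with open(path, "r") as file:
            tasks = file.readlines()
    if not tasks:
        print("No tasks found.")
        return False

    # Valid indices run from 1 to len(tasks), matching the numbering list_tasks() prints
    if not 1 <= index <= len(tasks):
        print(f"Invalid index: {index}. Use a number between 1 and {len(tasks)}.")
        return False

    # Drop the selected task (converting to a 0-based index) and write the rest back
    del tasks[index - 1]
    with open(path, "w") as file:
        file.writelines(tasks)
    print("Task removed successfully.")
    return True


# Usage on a temporary copy of the three tasks from Step 6
path = os.path.join(tempfile.mkdtemp(), "tasks.txt")
with open(path, "w") as file:
    file.writelines(["Walk 2 miles\n", "Grab evening coffee!\n", "Buy groceries\n"])

remove_task(10, path)  # Invalid index: 10. Use a number between 1 and 3.
remove_task(2, path)   # Task removed successfully.
```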

Step 5: Parse Command-Line Arguments

We’ve set up the parser to parse command-line arguments. And we’ve also defined the functions to perform the tasks of adding, listing, and removing tasks. So what’s next?

You probably guessed it. We only need to call the correct function based on the command-line argument received. Let’s define a main() function to parse the command-line arguments using the ArgumentParser object we’ve created in step 3.

Based on the provided arguments, call the appropriate task management functions. This can be done using a simple if-elif-else ladder like so:

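```python
def main():
    parser = create_parser()
    args = parser.parse_args()

    # Dispatch to the task function that matches the option passed
    if args.add:
        add_task(args.add)
    elif args.list:
        list_tasks()
    elif args.remove:
        remove_task(int(args.remove))  # --remove is parsed as a string
    else:
        # No option given: show the usage information
        parser.print_help()


if __name__ == "__main__":
    main()
```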
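One fragile spot in main() is int(args.remove): if someone types a non-number, such as -r two, int() raises a ValueError and the script exits with a traceback. Here’s a minimal sketch of guarding that conversion with try-except; parse_index() is a hypothetical helper name, not part of the original app.

```python
def parse_index(raw):
    """Convert a --remove value to an int, or return None if it isn't a whole number.

    parse_index is a hypothetical helper, not part of the original app.
    """
    try:
        return int(raw)
    except ValueError:
        print(f"Invalid index: {raw!r}. Please pass a whole number, e.g. -r 2.")
        return None


# Inside main(), the remove branch could then become:
#     elif args.remove:
#         index = parse_index(args.remove)
#         if index is not None:
#             remove_task(index)

print(parse_index("2"))    # 2
print(parse_index("two"))  # prints the error message, then None
```

Alternatively, passing type=int to add_argument() for --remove lets argparse do the conversion: it then rejects a non-integer value with an "invalid int value" usage error and exits, so the int() call in main() is no longer needed.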

Step 6: Run the App

You can now run the TO-DO list app from the command line. Use the short option -h or the long option --help to get usage information:

```
$ python3 todo.py --help
usage: todo.py [-h] [-a] [-l] [-r]

Command-line Todo List App

options:
  -h, --help      show this help message and exit
  -a , --add      Add a new task
  -l, --list      List all tasks
  -r , --remove   Remove a task by index
```

Initially, there are no tasks in the list, so using --list to list all tasks prints “No tasks found.”:

```
$ python3 todo.py --list
No tasks found.
```

Now we add an item to the TO-DO list like so:

```
$ python3 todo.py -a "Walk 2 miles"
```

When you list the items now, you should be able to see the task added:

```
$ python3 todo.py --list
1. Walk 2 miles
```

Adding the first item also created the tasks.txt file, because opening a file in append mode creates it if it doesn’t exist (refer to the definition of the add_task function in Step 4):

```
$ ls
tasks.txt  todo.py
```

Let's add another task to the list:

```
$ python3 todo.py -a "Grab evening coffee!"
```

And another:

```
$ python3 todo.py -a "Buy groceries"
```

And now let’s get the list of all tasks:

```
$ python3 todo.py -l
1. Walk 2 miles
2. Grab evening coffee!
3. Buy groceries
```

Now let's remove a task by its index. Say we’re done with evening coffee (and hopefully for the day), so we remove it as shown:

```
$ python3 todo.py -r 2
Task removed successfully.
```

The modified TO-DO list is as follows:

```
$ python3 todo.py --list
1. Walk 2 miles
2. Buy groceries
```

Step 7: Test, Improve, and Repeat

Okay, the simplest version of our app is ready. So how do we take this further? Here are a few things you can try:

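- What happens when you use an invalid command-line option (say -w or --wrong)? argparse rejects it before the if-elif-else ladder ever runs: it prints a short usage line and an “unrecognized arguments” error, then exits with status 2. (The else branch, which prints the full help message, only runs when no option is given.) A non-numeric index such as -r two, on the other hand, makes int() raise a ValueError. Try handling such errors with try-except blocks.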

- Test your app by defining test cases that include edge cases. To start, you can use the built-in unittest module.

- Improve the existing version by adding an option to specify the priority for each task. Also try to sort and retrieve tasks by priority.
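If you want a starting point for the testing suggestion, here’s a minimal unittest sketch. It assumes a version of add_task() with a hypothetical path parameter (the original always writes to tasks.txt in the current directory), so each test can use its own temporary file. The test names and cases are illustrative, not taken from the tutorial’s repository.

```python
import os
import tempfile
import unittest


def add_task(task, path="tasks.txt"):
    # Same logic as add_task() above, plus a hypothetical path parameter for testing
    with open(path, "a") as file:
        file.write(task + "\n")


class AddTaskTests(unittest.TestCase):
    def setUp(self):
        # A fresh temporary directory per test keeps the tests independent
        self.tmp = tempfile.TemporaryDirectory()
        self.path = os.path.join(self.tmp.name, "tasks.txt")

    def tearDown(self):
        self.tmp.cleanup()

    def test_first_task_creates_file(self):
        self.assertFalse(os.path.exists(self.path))
        add_task("Walk 2 miles", self.path)
        self.assertTrue(os.path.exists(self.path))

    def test_tasks_are_appended_in_order(self):
        add_task("Walk 2 miles", self.path)
        add_task("Buy groceries", self.path)
        with open(self.path) as file:
            self.assertEqual(file.read().splitlines(), ["Walk 2 miles", "Buy groceries"])

    def test_empty_task_is_stored_as_blank_line(self):
        # Edge case: add_task() doesn't validate its input, so "" becomes a blank line
        # (main() never passes one, since an empty --add value is falsy)
        add_task("", self.path)
        with open(self.path) as file:
            self.assertEqual(file.read(), "\n")


if __name__ == "__main__":
    # exit=False keeps the interpreter running after the tests (handy in a REPL or notebook)
    unittest.main(exit=False)
```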

▶️ The code for this tutorial is on GitHub.

Wrapping Up

In this tutorial, we built a simple command-line TO-DO list app. In doing so, we learned how to use the built-in argparse module to parse command-line arguments. We also used the command-line inputs to perform corresponding operations on a simple text file under the hood.

So where do we go next? Well, Python libraries like Typer make building command-line apps a breeze. And we’ll build one using Typer in an upcoming Python tutorial. Until then, keep coding!

Bala Priya C is a developer and technical writer from India. She likes working at the intersection of math, programming, data science, and content creation. Her areas of interest and expertise include DevOps, data science, and natural language processing. She enjoys reading, writing, coding, and coffee! Currently, she's working on learning and sharing her knowledge with the developer community by authoring tutorials, how-to guides, opinion pieces, and more. Bala also creates engaging resource overviews and coding tutorials.
