GenAI research startup Bud Ecosystem has launched Bud Runtime, a pioneering solution that enables generative AI deployment on CPU-based infrastructure, lowering costs and improving accessibility.

Aimed at tackling the rising financial and environmental costs of generative AI, Bud Runtime lets organisations deploy AI models on their existing hardware, bypassing expensive and often scarce GPUs.

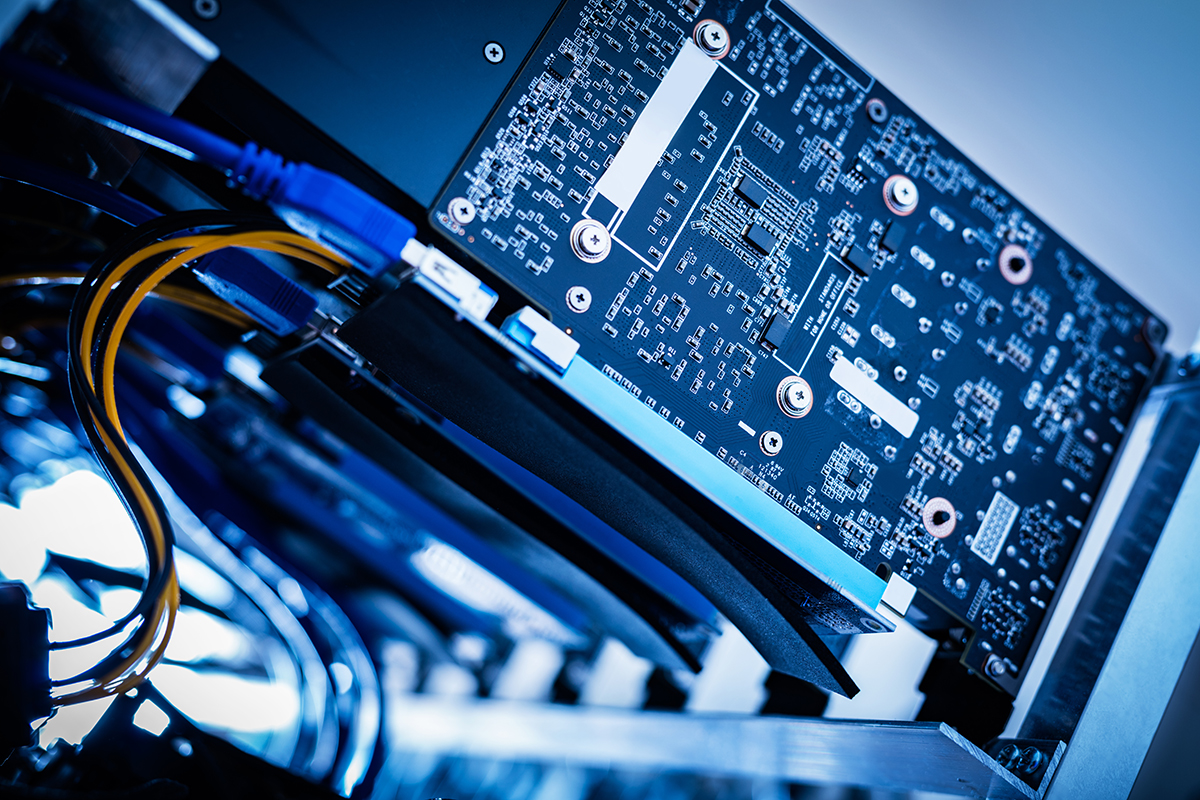

The platform supports CPU inference alongside GPUs, HPUs, TPUs, and NPUs from leading vendors such as Nvidia, Intel, AMD, and Huawei. Its standout feature, heterogeneous cluster parallelism, lets companies deploy AI workloads across mixed hardware environments, enabling smoother scalability and easing GPU supply constraints.

“We started our GenAI journey in early 2023 and quickly encountered the high cost of GPUs,” said Jithin VG, CEO, Bud Ecosystem.

To address the challenge, the team developed the initial version of Bud Runtime, designed to run smaller models on their existing infrastructure. They also added support for mid-sized models on CPUs and ensured compatibility with hardware from various manufacturers, including Nvidia, AMD, Intel, and Huawei.

With Bud Runtime, companies can kickstart generative AI projects for as little as $200 per month, a fraction of the traditional cost. This makes it particularly valuable for startups and research institutions that are often priced out of the AI landscape.

The launch builds on Bud Ecosystem’s ongoing collaboration with major technology players, including Intel, Microsoft, Infosys, and LTIM. For the past 18 months, the company has been working with Intel to optimise generative AI inference on Intel Xeon CPUs and Gaudi accelerators.

“Our mission is to democratise GenAI at scale by commoditising it,” said Linson Joseph, chief strategy officer, Bud Ecosystem. “This is only possible if we use commodity hardware for GenAI at scale.”

Founded with a focus on fundamental AI research, Bud Ecosystem has made significant strides in transformer architectures for low-resource environments, hybrid inference models, and decentralised AI systems.

The company has published several research papers and released over 20 open-source models. Notably, it remains the only Indian startup to have topped the Hugging Face LLM leaderboard with a model on par with GPT-3.5.