Over the past six decades, operating systems have evolved from basic systems into the complex, interactive operating systems that power today's devices. Initially, operating systems served as a bridge between the binary functionality of computer hardware, such as gate manipulation, and user-level tasks. Over the years, they progressed from simple batch job processing to more sophisticated process management techniques, including multitasking and time-sharing. These advancements have enabled modern operating systems to manage a wide array of complex tasks. The introduction of graphical user interfaces (GUIs) in systems like Windows and macOS has made modern operating systems more user-friendly and interactive, while also expanding the OS ecosystem with runtime libraries and a comprehensive suite of developer tools.

Recent innovations include the integration and deployment of Large Language Models (LLMs), which have opened up new possibilities across various industries. Building on them, LLM-based intelligent agents have shown remarkable capabilities, achieving human-like performance on a broad range of tasks. However, these agents are still in the early stages of development, and current techniques face several challenges that affect their efficiency and effectiveness. Common issues include sub-optimal scheduling of agent requests over the large language model, complexities in integrating agents with different specializations, and maintaining context during interactions between the LLM and the agent. The rapid development and increasing complexity of LLM-based agents often lead to bottlenecks and sub-optimal resource use.

To address these challenges, this article will discuss AIOS, an LLM agent operating system designed to integrate large language models as the 'brain' of the operating system, effectively giving it a 'soul'. Specifically, the AIOS framework aims to facilitate context switching across agents, optimize resource allocation, provide tool services for agents, maintain access control, and enable concurrent execution of agents. We will delve deep into the AIOS framework, exploring its mechanisms, methodology, and architecture, and compare it with state-of-the-art frameworks. Let's dive in.

After achieving remarkable success with large language models, the next focus of the AI and ML industry is to develop autonomous AI agents that can operate independently, make decisions on their own, and perform tasks with minimal or no human intervention. These intelligent agents are designed to understand human instructions, process information, make decisions, and take appropriate actions to reach an autonomous state, and the advent of large language models has brought new possibilities to their development. Current large language models such as GPT have shown remarkable abilities in understanding human instructions, reasoning, problem solving, and interacting with human users as well as external environments. Built on top of these capable models, LLM-based agents show strong task fulfillment abilities in diverse environments, ranging from virtual assistants to more sophisticated systems involving creative problem solving, reasoning, planning, and execution.

The above figure gives a compelling example of how an LLM-based autonomous agent can solve real-world tasks. The user asks the system for trip information, and the travel agent breaks the task down into executable steps. The agent then carries out the steps sequentially: booking flights, reserving hotels, processing payments, and more. What sets these agents apart from traditional software applications is their ability to make decisions and incorporate reasoning while executing the steps. As the quality of these autonomous agents has grown rapidly, so has the strain on large language models and operating systems. For example, prioritizing and scheduling agent requests over a limited number of large language model instances poses a significant challenge. Furthermore, because generation becomes time consuming when a large language model deals with lengthy contexts, the scheduler may suspend a generation that is still in progress. This raises the problem of devising a mechanism to snapshot the current generation result of the language model, so that pause/resume behavior is possible even when the model has not finished generating the response for the current request.

To address the challenges mentioned above, AIOS, a large language model operating system, provides aggregation and module isolation of LLM and OS functionalities. The AIOS framework proposes an LLM-specific kernel design to avoid potential conflicts between tasks that are associated with the large language model and tasks that are not. The proposed kernel segregates the operating-system-like duties, especially those that oversee the LLM agents, development toolkits, and their corresponding resources. Through this segregation, the LLM kernel aims to enhance the coordination and management of LLM-related activities.

AIOS : Methodology and Architecture

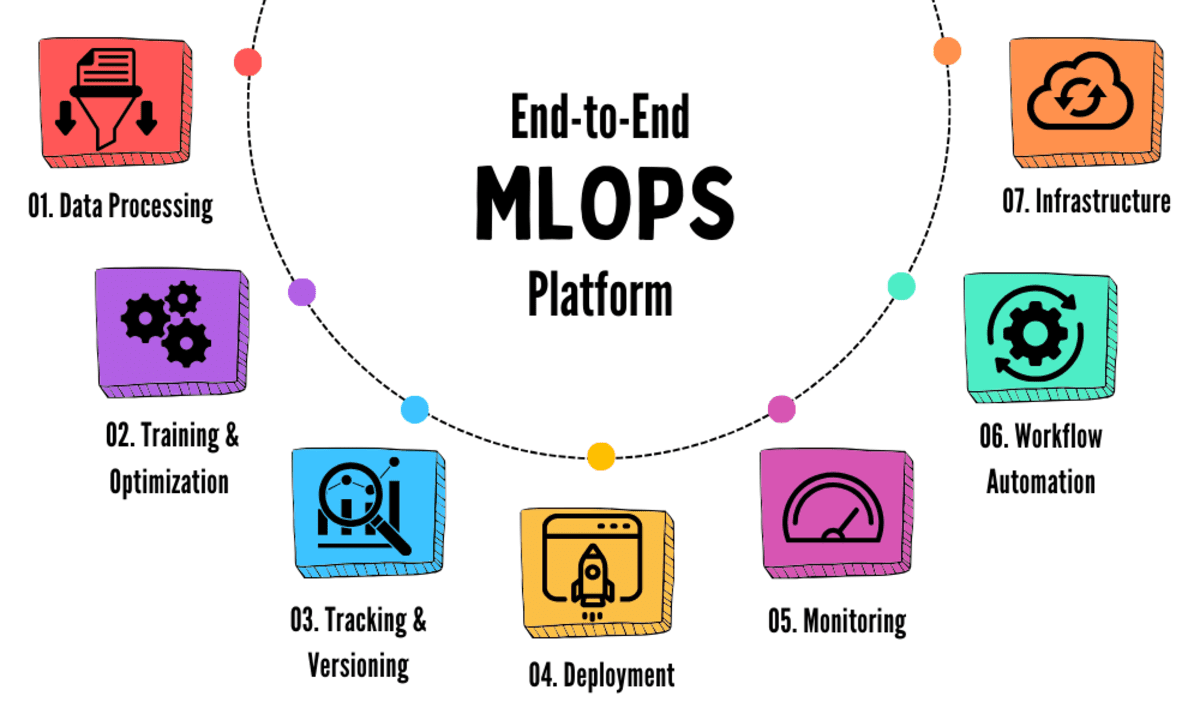

As you can observe, there are six major mechanisms involved in the working of the AIOS framework.

- Agent Scheduler: The task assigned to the agent scheduler is to schedule and prioritize agent requests in an attempt to optimize the utilization of the large language model.

- Context Manager: The task assigned to the context manager is to support snapshotting and restoring the intermediate generation status of the large language model, and to manage the context window of the large language model.

- Memory Manager: The primary responsibility of the memory manager is to provide short term memory for the interaction log for each agent.

- Storage Manager: The storage manager is responsible for persisting the interaction logs of agents to long-term storage for future retrieval.

- Tool Manager: The tool manager mechanism manages the call of agents to external API tools.

- Access Manager: The access manager enforces privacy and access control policies between agents.

In addition to the above-mentioned mechanisms, the AIOS framework features a layered architecture split into three distinct layers: the application layer, the kernel layer, and the hardware layer. This layering distributes responsibilities evenly across the system. Higher layers abstract the complexities of the layers below them and interact with them only through specific modules or interfaces, which enhances modularity and simplifies interactions between the layers.

Starting off with the application layer, this layer is used for developing and deploying application agents such as math or travel agents. In the application layer, the AIOS framework provides the AIOS software development kit (AIOS SDK), which offers a higher-level abstraction of system calls and simplifies the development process for agent developers. The SDK provides a rich toolkit that abstracts away the complexities of lower-level system functions, allowing developers to focus on the functionality and essential logic of their agents, resulting in a more efficient development process.

Moving on, the kernel layer is further divided into two components: the LLM kernel and the OS kernel. The OS kernel serves non-LLM operations, while the LLM kernel serves LLM-specific operations. This distinction allows the LLM kernel to focus on tasks specific to large language models, including agent scheduling and context management. The AIOS framework concentrates primarily on enhancing the LLM kernel without significantly altering the structure of the existing OS kernel. The LLM kernel comes equipped with several key modules: the agent scheduler, memory manager, context manager, storage manager, access manager, tool manager, and the LLM system call interface. The components within the kernel layer are designed to address the diverse execution needs of agent applications, ensuring effective execution and management within the AIOS framework.

Finally, we have the hardware layer, which comprises the physical components of the system including the GPU, CPU, peripheral devices, disk, and memory. It is essential to understand that the system calls of the LLM kernel cannot interact with the hardware directly. Instead, they interface with the system calls of the operating system, which in turn manage the hardware resources. This indirect interaction between the LLM kernel's system calls and the hardware creates a layer of security and abstraction: the LLM kernel can leverage hardware capabilities without managing the hardware directly, which helps maintain the integrity and efficiency of the system.

Implementation

As mentioned above, there are six major mechanisms involved in the working of the AIOS framework. The agent scheduler is designed to manage agent requests efficiently. Each agent's task consists of several execution steps. In a traditional sequential execution paradigm, tasks are processed linearly: all the steps from one agent are processed before moving on to the next agent, which increases waiting times for tasks that appear later in the execution sequence. The agent scheduler instead employs strategies like Round Robin, First In First Out (FIFO), and other scheduling algorithms to optimize the process.
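The difference between running each agent to completion and interleaving agents can be illustrated with a small toy scheduler. This is only a sketch under stated assumptions, not the AIOS implementation: the `AgentRequest` class, the function names, and the idea of measuring work in abstract "steps" are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class AgentRequest:
    """One agent request; `steps` is the remaining work in abstract time slices (hypothetical)."""
    agent: str
    steps: int


def fifo_schedule(requests):
    """Run each request to completion in arrival order and return the execution trace."""
    trace = []
    for req in requests:
        trace.extend([req.agent] * req.steps)
    return trace


def round_robin_schedule(requests, quantum=1):
    """Give each request at most `quantum` steps per turn, then requeue it if unfinished."""
    # Copy the inputs so the caller's request objects are not mutated.
    queue = deque(AgentRequest(r.agent, r.steps) for r in requests)
    trace = []
    while queue:
        req = queue.popleft()
        run = min(quantum, req.steps)
        trace.extend([req.agent] * run)
        req.steps -= run
        if req.steps > 0:
            queue.append(req)  # unfinished work goes to the back of the queue
    return trace


requests = [AgentRequest("math", 3), AgentRequest("travel", 1), AgentRequest("rec", 2)]
fifo_trace = fifo_schedule(requests)
rr_trace = round_robin_schedule(requests)
print(fifo_trace)
print(rr_trace)
```

In this toy run, the short `travel` request has to wait behind all three `math` steps under run-to-completion ordering, while Round Robin lets it run on the second time slice.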

The context manager is responsible for managing the context provided to the large language model and the generation process for a given context. It involves two crucial components: context snapshot and restoration, and context window management. The context snapshot and restoration mechanism offered by the AIOS framework helps mitigate situations where the scheduler suspends agent requests, as demonstrated in the following figure.
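The snapshot-and-restore idea can be sketched with a toy decoder. Everything here is an assumption made for illustration: `GenerationState`, `toy_next_token`, and the `ContextManager` methods are hypothetical names, and the "model" simply echoes the prompt word by word so the example stays deterministic. A real system would need to capture the model's actual intermediate decoding state.

```python
from dataclasses import dataclass, field


@dataclass
class GenerationState:
    """Intermediate decoding state for one request (hypothetical structure)."""
    prompt: str
    tokens: list = field(default_factory=list)
    done: bool = False


def toy_next_token(state):
    """Stand-in for one LLM decoding step: echoes the prompt's words in order."""
    words = state.prompt.split()
    return words[len(state.tokens)] if len(state.tokens) < len(words) else None


def generate(state, max_steps):
    """Advance decoding by up to `max_steps` tokens; the result may be unfinished."""
    for _ in range(max_steps):
        token = toy_next_token(state)
        if token is None:
            state.done = True
            break
        state.tokens.append(token)
    return state


class ContextManager:
    """Keeps snapshots of suspended generations, keyed by request id."""

    def __init__(self):
        self._snapshots = {}

    def snapshot(self, request_id, state):
        # Copy the token list so later decoding cannot mutate the saved snapshot.
        self._snapshots[request_id] = GenerationState(state.prompt, list(state.tokens), state.done)

    def restore(self, request_id):
        return self._snapshots.pop(request_id)


ctx = ContextManager()
state = generate(GenerationState("book a flight to Paris"), max_steps=2)  # time slice ends
ctx.snapshot("req-1", state)                                              # scheduler suspends
resumed = generate(ctx.restore("req-1"), max_steps=10)                    # scheduler resumes
uninterrupted = generate(GenerationState("book a flight to Paris"), max_steps=10)
print(resumed.tokens == uninterrupted.tokens)
```

The property worth checking is the one the sketch prints: a generation that was suspended and resumed should produce the same output as one that ran without interruption.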

As demonstrated in the following figure, the memory manager is responsible for managing short-term memory within an agent's lifecycle. It ensures the data is stored and accessible only while the agent is active, either during runtime or when the agent is waiting for execution.

On the other hand, the storage manager is responsible for preserving data in the long run. It oversees the storage of information that needs to be retained indefinitely, beyond the active lifespan of an individual agent. The AIOS framework achieves permanent storage using a variety of durable media including cloud-based solutions, databases, and local files, ensuring data availability and integrity. Furthermore, in the AIOS framework, the tool manager manages a varied array of API tools that enhance the functionality of the large language models. The following table summarizes how the tool manager integrates commonly used tools from various resources and classifies them into different categories.
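The split between short-term memory and long-term storage described above can be sketched as two small classes. This is a minimal illustration, assuming local JSON files as the durable medium. The class and method names (`append`, `release`, `persist`, `load`) are hypothetical and are not taken from the AIOS codebase.

```python
import json
import os
import tempfile


class MemoryManager:
    """Short-term, in-process interaction logs; dropped when the agent terminates."""

    def __init__(self):
        self._logs = {}

    def append(self, agent_id, message):
        self._logs.setdefault(agent_id, []).append(message)

    def read(self, agent_id):
        return list(self._logs.get(agent_id, []))

    def release(self, agent_id):
        """Free the agent's memory and hand back its log for optional persistence."""
        return self._logs.pop(agent_id, [])


class StorageManager:
    """Long-term storage; here one JSON file per agent in a local directory."""

    def __init__(self, root):
        self._root = root

    def _path(self, agent_id):
        return os.path.join(self._root, f"{agent_id}.json")

    def persist(self, agent_id, log):
        with open(self._path(agent_id), "w", encoding="utf-8") as f:
            json.dump(log, f)

    def load(self, agent_id):
        with open(self._path(agent_id), encoding="utf-8") as f:
            return json.load(f)


memory = MemoryManager()
storage = StorageManager(tempfile.mkdtemp())  # temporary directory, for the demo only
memory.append("travel", "user: find flights to Paris")
memory.append("travel", "agent: found 3 options")
storage.persist("travel", memory.release("travel"))  # agent terminates; log is persisted
print(memory.read("travel"), storage.load("travel"))
```

After the agent terminates, its short-term memory is empty, but the interaction log can still be retrieved from storage.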

The access manager organizes access control between agents by administering a dedicated privilege group for each agent, and denies other agents access to an agent's resources if they are excluded from that agent's privilege group. Additionally, the access manager is responsible for compiling and maintaining audit logs, which further enhance the transparency of the system.
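A privilege-group check with an audit trail can be sketched in a few lines. This is an illustrative sketch only: the `AccessManager` class, its method names, and the tuple format of the audit entries are assumptions, not the AIOS API.

```python
class AccessManager:
    """Per-agent privilege groups plus an append-only audit log (hypothetical design)."""

    def __init__(self):
        # Maps each owner agent to the set of agents allowed to access its resources.
        self._privilege_groups = {}
        self.audit_log = []

    def register(self, agent_id):
        # An agent can always access its own resources.
        self._privilege_groups[agent_id] = {agent_id}

    def grant(self, owner, other):
        self._privilege_groups[owner].add(other)

    def check_access(self, requester, owner):
        """Return True if `requester` is in `owner`'s privilege group; record the decision."""
        allowed = requester in self._privilege_groups.get(owner, set())
        self.audit_log.append((requester, owner, "allow" if allowed else "deny"))
        return allowed


access = AccessManager()
access.register("travel")
access.register("math")
denied = access.check_access("math", "travel")   # math is not in travel's group yet
access.grant("travel", "math")
allowed = access.check_access("math", "travel")  # now permitted
print(denied, allowed, access.audit_log)
```

Recording denied attempts as well as granted ones is a design choice of this sketch. It keeps the audit log useful for reviewing what agents tried to do, not only what they were permitted to do.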

AIOS : Experiments and Results

The evaluation of the AIOS framework is guided by two research questions: first, how well does AIOS scheduling balance waiting time and turnaround time, and second, is the response of the LLM to agent requests consistent after agent suspension?

To answer the consistency question, the developers run each of the three agents individually, then execute the agents in parallel, and capture their outputs at each stage. As demonstrated in the following table, the BERT and BLEU scores reach a value of 1.0, indicating perfect alignment between the outputs generated in single-agent and multi-agent configurations.

To answer the efficiency question, the developers conduct a comparative analysis between the AIOS framework employing FIFO (First In First Out) scheduling and a non-scheduled approach, wherein the agents run concurrently. In the non-scheduled setting, the agents are executed in a predefined sequential order: Math agent, Narrating agent, and Rec agent. To assess temporal efficiency, the evaluation uses two metrics: waiting time and turnaround time. Since the agents send multiple requests to the large language model, the waiting time and turnaround time for an individual agent are calculated as averages over all of its requests. As demonstrated in the following table, the non-scheduled approach performs satisfactorily for agents earlier in the sequence, but suffers from extended waiting and turnaround times for agents later in the sequence. On the other hand, the scheduling approach implemented by the AIOS framework regulates both the waiting and turnaround times effectively.
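The per-agent averaging described above can be made concrete with a short calculation. The sketch uses the standard scheduling definitions, which the article itself does not spell out: waiting time is the interval from request submission to the start of processing, and turnaround time is the interval from submission to completion. The timestamps are made-up illustrative numbers, not results from the AIOS evaluation.

```python
from collections import defaultdict
from statistics import mean

# (agent, submitted_at, started_at, finished_at) in seconds.
# Made-up illustrative numbers, not measurements from the AIOS experiments.
records = [
    ("math", 0.0, 0.0, 2.0),
    ("math", 2.0, 3.0, 5.0),
    ("rec", 0.0, 2.0, 3.0),
    ("rec", 3.0, 5.0, 9.0),
]


def per_agent_averages(records):
    """Return {agent: (average waiting time, average turnaround time)}."""
    waits, turnarounds = defaultdict(list), defaultdict(list)
    for agent, submitted, started, finished in records:
        waits[agent].append(started - submitted)         # time spent queued
        turnarounds[agent].append(finished - submitted)  # queued time plus run time
    return {agent: (mean(waits[agent]), mean(turnarounds[agent])) for agent in waits}


averages = per_agent_averages(records)
print(averages)
```

Averaging over all of an agent's requests, rather than reporting a single request, keeps one unusually slow or fast request from dominating the agent's reported waiting and turnaround times.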

Final Thoughts

In this article, we have talked about AIOS, an LLM agent operating system designed to embed large language models into the OS as its brain, enabling an operating system with a soul. More specifically, the AIOS framework is designed to facilitate context switching across agents, optimize resource allocation, provide tool services for agents, maintain access control for agents, and enable concurrent execution of agents. The AIOS architecture demonstrates the potential to facilitate the development and deployment of large language model based autonomous agents, resulting in a more effective, cohesive, and efficient AIOS-Agent ecosystem.