India’s most exciting hackathon, Bhasha Techathon, is organised by MachineHack in collaboration with the Digital India Bhashini Division and Google Cloud to build technology solutions for Indian languages.

India, a land of vibrant cultures and diverse tongues, deserves to have its rich linguistic heritage reflected in the technological landscape.

Bhashini aims to develop robust AI models that understand and process Indian languages effectively. This paves the way for a more inclusive digital world where everyone, regardless of their primary language, can access information, engage with technology, and participate in the digital economy.

Here are five reasons why you should participate in this hackathon.

[Continue reading until the end, where you’ll find our cheat sheet]

Addressing Crucial Language Challenges

Bhasha Techathon addresses six critical problem statements in NLP, ranging from voice-to-text applications to video-to-text conversions and the categorisation of complaints.

These challenges are not only technical but also highly relevant to real-world applications, providing participants with the opportunity to work on projects that have direct societal impacts, particularly in enhancing accessibility and understanding across India’s multitude of languages.

Open to All

One of the most compelling reasons to participate in the Bhasha Techathon is its inclusivity. Whether you are a student, a professional, or simply an AI enthusiast, the techathon welcomes individuals from all backgrounds. This inclusivity fosters a diverse environment where different perspectives and skills come together to innovate and solve complex problems.

Collaboration and Networking

Participants can either compete individually or as part of a team. This setup not only enhances collaboration, allowing individuals to learn from each other, but also provides a fantastic networking opportunity. Engaging with peers and industry leaders can open doors to future collaborations and career opportunities, especially as participants are invited to present their solutions to a jury of experts.

Career Advancement

The techathon is not just about winning; it’s about building and showcasing your capabilities. Participants gain hands-on experience with the latest technologies in AI and NLP, guided by the expertise of leaders from Google Cloud and MachineHack. This experience is invaluable and can significantly boost one’s career, providing exposure to practical applications of theoretical knowledge.

Recognition and Rewards

The rewards at Bhasha Techathon are substantial, with prize money offered to the top performers. However, beyond the financial incentives, participants gain recognition for their skills and innovations. This recognition can enhance their professional profile and open up further opportunities in the tech industry.

[Click here to participate now!]

[End Date: 15th May 2024]

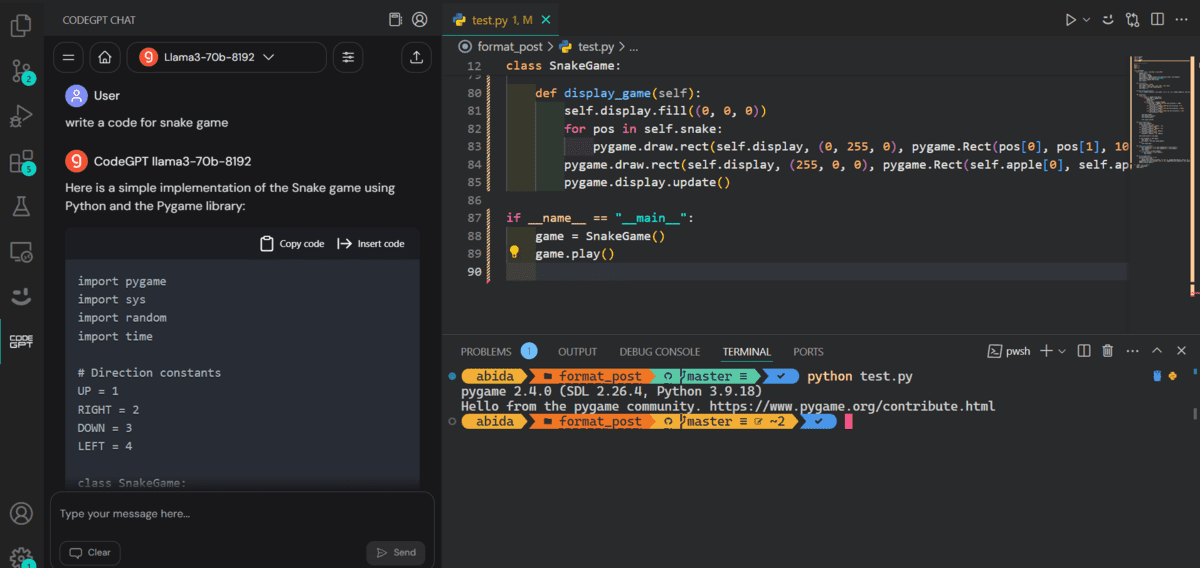

A Cheatsheet for Bhasha Techathon Participants

Let’s break down each problem statement and provide key pointers on how to approach them:

Chatbot Assistance in Regional Languages for MOPR Users

- Language Support: Integrate all 22 scheduled Indian languages and prioritize language selection functionality for user convenience.

- NLP Integration: Train NLP models extensively on diverse datasets to ensure accurate understanding of queries in different regional languages.

- Contextual Understanding: Develop algorithms that analyze user queries considering specific Panchayati Raj terminology and nuances.

- Database Integration: Establish APIs to retrieve relevant information from Ministry of Panchayati Raj databases seamlessly.

- User Interface Design: Design an intuitive chatbot interface with clear language selection options and instructions for users.

- Testing and Evaluation: Conduct rigorous testing across languages and gather user feedback for continuous improvement.
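As a rough illustration of the language-selection pointer above, here is a minimal Python sketch. The codes are ISO 639 codes for the 22 scheduled languages; the function name `resolve_language` and the Hindi fallback are assumptions made for this example, not part of any Bhashini or MoPR API.

```python
# ISO 639 codes for the 22 scheduled languages of India.
SCHEDULED_LANGUAGES = {
    "as": "Assamese", "bn": "Bengali", "brx": "Bodo", "doi": "Dogri",
    "gu": "Gujarati", "hi": "Hindi", "kn": "Kannada", "ks": "Kashmiri",
    "kok": "Konkani", "mai": "Maithili", "ml": "Malayalam", "mni": "Manipuri",
    "mr": "Marathi", "ne": "Nepali", "or": "Odia", "pa": "Punjabi",
    "sa": "Sanskrit", "sat": "Santali", "sd": "Sindhi", "ta": "Tamil",
    "te": "Telugu", "ur": "Urdu",
}


def resolve_language(requested, default="hi"):
    """Return a supported language code, falling back to `default`.

    `default="hi"` is an assumption for this sketch; a real chatbot might
    instead ask the user to pick a language when the request is unknown.
    """
    code = (requested or "").strip().lower()
    return code if code in SCHEDULED_LANGUAGES else default
```

Calling `resolve_language(" TA ")` yields `"ta"`, while an unsupported code such as `"fr"` falls back to the default.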

Conversion of FAQs Section on the Website

- Multilingual Support: Enable access to FAQs in all 22 Indian languages with a language selector for user preference.

- Translation and Transliteration: Ensure accurate presentation of FAQ content using translation and transliteration techniques.

- Interactive Chatbot: Implement language-specific interactive chatbots for real-time engagement.

- NLP Capabilities: Integrate NLP for conversational understanding and response to user queries.

- Search Functionality: Include language-specific search features for quick access to relevant information.

- Multimedia Integration: Add multimedia elements to the FAQs for a better user experience.
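The language-specific search pointer can be sketched with a small in-memory index. This is only an illustration under stated assumptions: the `FAQS` entries are made-up sample data, and ranking by shared words is a simple stand-in for whatever retrieval method a real submission would use.

```python
import unicodedata

# Hypothetical sample data: FAQ (question, answer) pairs keyed by language code.
FAQS = {
    "en": [
        ("How do I register a complaint?", "Use the complaint form on the portal."),
        ("How do I reset my password?", "Use the 'Forgot password' link."),
    ],
    "hi": [
        ("शिकायत कैसे दर्ज करें?", "पोर्टल पर शिकायत फ़ॉर्म का उपयोग करें।"),
    ],
}


def tokens(text):
    """Lowercase, normalise to NFC, and split into a set of words.

    Combining marks (Unicode category M*) are kept so that Indic vowel
    signs stay attached to their words; punctuation becomes whitespace.
    """
    text = unicodedata.normalize("NFC", text).lower()
    cleaned = "".join(
        ch if ch.isalnum() or unicodedata.category(ch).startswith("M") else " "
        for ch in text
    )
    return set(cleaned.split())


def search(lang, query, faqs=FAQS):
    """Return FAQ entries in `lang`, ranked by words shared with the query."""
    query_tokens = tokens(query)
    scored = []
    for question, answer in faqs.get(lang, []):
        overlap = len(query_tokens & tokens(question))
        if overlap:
            scored.append((overlap, question, answer))
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(question, answer) for _, question, answer in scored]
```

Searching only within the selected language keeps results in the user's own script; a language with no FAQ entries simply returns an empty list.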

Voice-to-Text and Complaint Categorization through AI/ML

- Voice-to-Text Conversion: Develop accurate voice message transcription in 22 Indian languages.

- Text Embedding: Use techniques like Word2Vec for efficient complaint categorisation based on word relationships.

- NLP Processing: Employ NLP for text preprocessing and feature extraction to improve complaint analysis accuracy.

- Integration with CMS: Seamlessly integrate categorised complaints into existing systems for analysis and reporting.
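To make the embedding pointer concrete, here is a minimal sketch of embedding-based categorisation. It assumes hand-written three-dimensional toy vectors in place of a trained Word2Vec model, and the category names and seed words are invented for illustration. The idea is the same either way: average the word vectors of a complaint and pick the category whose centroid is closest by cosine similarity.

```python
import math

# Toy vectors standing in for trained Word2Vec embeddings (assumption).
TOY_VECTORS = {
    "water": (1.0, 0.0, 0.0),
    "pipe": (0.9, 0.1, 0.0),
    "leak": (0.8, 0.2, 0.0),
    "road": (0.0, 1.0, 0.0),
    "pothole": (0.1, 0.9, 0.0),
    "electricity": (0.0, 0.0, 1.0),
    "power": (0.1, 0.0, 0.9),
    "streetlight": (0.0, 0.1, 1.0),
}

# Hypothetical categories, each described by a few seed words.
CATEGORY_SEEDS = {
    "water_supply": ["water", "pipe", "leak"],
    "roads": ["road", "pothole"],
    "electricity": ["electricity", "power", "streetlight"],
}


def embed(words):
    """Average the vectors of known words; return None if none are known."""
    vectors = [TOY_VECTORS[w] for w in words if w in TOY_VECTORS]
    if not vectors:
        return None
    return tuple(sum(dim) / len(vectors) for dim in zip(*vectors))


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


CENTROIDS = {name: embed(words) for name, words in CATEGORY_SEEDS.items()}


def categorise(text):
    """Assign a complaint to the category with the most similar centroid."""
    vector = embed(text.lower().split())
    if vector is None:
        return "uncategorised"
    return max(CENTROIDS, key=lambda name: cosine(vector, CENTROIDS[name]))
```

In practice the toy table would be replaced by vectors trained on complaint text in each target language, and the "uncategorised" bucket would be routed for manual review.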

Video-to-Text and Complaint Categorization through AI/ML

- Video-to-Text Conversion: Develop systems for accurate video transcription and consider multi-modal analysis for complaint understanding.

- Complaint Categorization: Train AI/ML models to categorise transcribed text from videos using NLP techniques.

- Embedding and NLP Processing: Use models like BERT to embed the transcribed text for semantic understanding and sentiment analysis.

- Integration with CMS: Ensure seamless integration of categorised complaints into existing systems for efficient processing.
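The steps above (transcribe, categorise, hand over to the complaint system) can be sketched as a small pipeline. Everything named here is hypothetical: `ComplaintRecord`, `process_complaint`, and `to_cms_payload` are illustrative names, the transcription and categorisation steps are passed in as plain functions, and the JSON layout is an assumption rather than any real CMS schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable


@dataclass
class ComplaintRecord:
    source: str       # "voice" or "video"
    language: str     # language code of the recording
    transcript: str   # text produced by the speech-to-text step
    category: str     # label produced by the categorisation step


def process_complaint(
    media_path: str,
    language: str,
    transcribe: Callable[[str, str], str],
    categorise: Callable[[str], str],
    source: str = "video",
) -> ComplaintRecord:
    """Run transcription and categorisation, then bundle the result."""
    transcript = transcribe(media_path, language)
    category = categorise(transcript)
    return ComplaintRecord(source, language, transcript, category)


def to_cms_payload(record: ComplaintRecord) -> str:
    """Serialise a record as JSON, keeping Indic scripts readable."""
    return json.dumps(asdict(record), ensure_ascii=False)
```

Keeping the model calls behind plain function arguments means the same pipeline can serve both the voice and the video problem statements, and the models can be swapped without touching the integration code.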

CDSS in Multiple Indian Languages

- CDSS Development: Create a comprehensive Clinical Decision Support System (CDSS) with multilingual support and adaptive recommendations.

- Interface Design: Develop a user-friendly interface supporting all 22 Indian languages with customisation options.

- Medical Terminology: Incorporate accurate medical terminology in each Indian language for precision.

- Language-Adaptive Recommendations: Train the CDSS to deliver recommendations in chosen languages considering linguistic nuances.

- Compliance: Ensure adherence to regulatory guidelines and standards for healthcare technologies in India.

What are you waiting for?

The Bhasha Techathon isn’t just a competition; it’s a call to action. It’s a chance to leverage your tech skills for the greater good while propelling yourself to the forefront of AI innovation. Imagine developing a language translation tool that empowers rural communities or a virtual assistant that speaks your native tongue. The possibilities are boundless!

The post Top 5 Reasons Why You Must Participate in Bhasha Techathon appeared first on Analytics India Magazine.
