When we said that AI will replace your toxic manager, we weren’t joking.

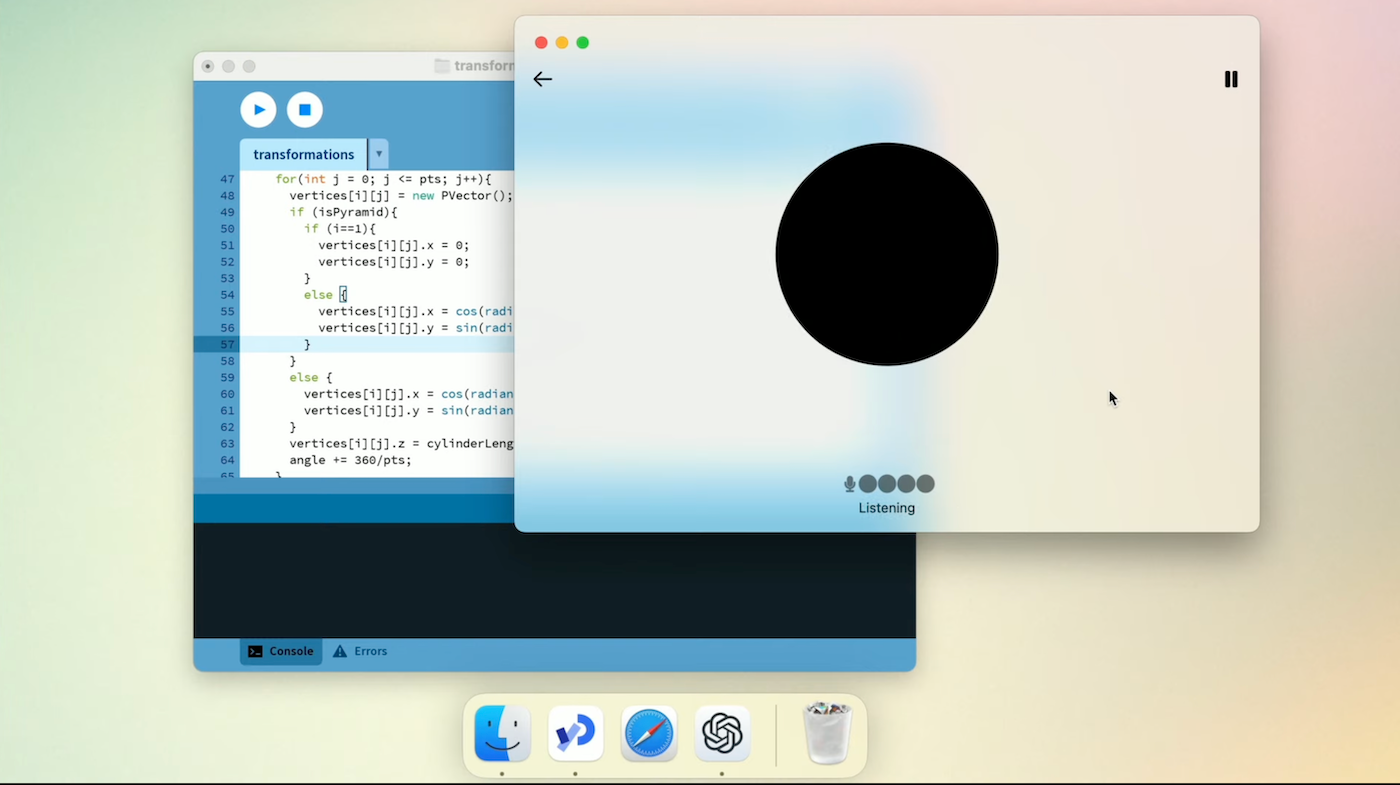

OpenAI’s recent ChatGPT desktop app, powered by the latest GPT-4o, changes everything. Among its many superpowers is the ability to read users’ screens in real time, acting as your friendly, go-to colleague in times of crisis.

During the launch of GPT-4o, OpenAI gave us a glimpse of how it works, and the general consensus is that it is going to change how people work.

Last year, the WEF predicted that LLMs would impact about 40% of working hours. Now, many anticipate that the figure might even reach 90%, making employees either super lazy or more productive than ever before.

The latter seems more likely.

The ChatGPT desktop app just became the best coding assistant on the planet.

Simply select the code, and GPT-4o will take care of it.

Combine this with audio/video capability, and you get your own engineer teammate. pic.twitter.com/g4fWcbhXy2 (Pietro Schirano, @skirano, May 13, 2024)

Coincidentally, in his recent interview on the All-In Podcast, OpenAI CEO Sam Altman expressed a similar view: AI systems should act like a “senior employee” who engages with users much as a trusted employee would with a CEO. This includes the ability to push back, reason, and access emails within specified constraints.

Borriss explains this perfectly:

The AI Senior Employee (AISE)

In the latest @theallinpod pod, @sama explained the next possible iteration of an AI assistant as something that is similar to a "Senior Employee"

I agree.

Here is how I see it in practice:

You work on your computer, and the AISE sees your screen… pic.twitter.com/ADqE47gtry (Borriss, @_Borriss_, May 11, 2024)

Altman emphasised that an AI assistant shouldn’t be like an “agent” but like a “senior employee”. He pointed out that while an agent will unquestioningly follow commands, a senior employee will question requests that appear illogical.

This brings us to Marshall Brain’s 2003 science fiction novel Manna: Two Visions of Humanity’s Future. The narrative warns that emerging technologies such as inexpensive computer vision systems could displace millions of jobs, mostly in middle management.

Or, if you are a Marvel fan, OpenAI’s ChatGPT (GPT-4o) upgrade is certainly the baby version of J.A.R.V.I.S, or most likely the start of a pre-AGI era.

OpenAI co-founder and president Greg Brockman certainly gave us a glimpse into the future, and it is nothing short of astonishing.

Introducing GPT-4o, our new model which can reason across text, audio, and video in real time.

It's extremely versatile, fun to play with, and is a step towards a much more natural form of human-computer interaction (and even human-computer-computer interaction): pic.twitter.com/VLG7TJ1JQx (Greg Brockman, @gdb, May 13, 2024)

Companies are Already Replacing Employees with AI

According to a McKinsey report, investment in AI has surged along with its growing adoption. For instance, five years ago, 40% of the respondents from organisations using AI reported that over 5% of their digital budgets went to AI, but now more than half of the respondents report that level of investment.

Going forward, 63% of respondents expect their organisations’ AI investments to increase over the next three years.

The transition to AI-powered solutions is evident in recent corporate decisions. In July 2023, Bengaluru-based e-commerce company Dukaan replaced 90% of its customer support staff with an in-house chatbot.

Similarly, Turnitin CEO Chris Caren’s announcement at the 2023 ASU+GSV Summit signalled a strategic shift towards AI-driven solutions.

Caren revealed that the company, which currently employs a few hundred engineers, anticipates a significant reduction in staffing needs within 18 months. “We will need 20% of the current engineering staff,” Caren stated.

His remarks also point to a shift towards hiring people straight out of high school rather than from four-year colleges, a trend likely to extend to sales and marketing functions.

AI Senior Manager or AI Monitoring?

Amidst the buzz surrounding AI’s role in the workplace, another perspective emerges. While AI holds promise in replacing certain job functions, its current application appears more focused on monitoring.

In many organisations, AI is primarily utilised for employee monitoring, encompassing tasks such as job application screening and productivity tracking.

Some people think that AI will replace some jobs and create new ones. For now, though, the story of how AI is actually being used is largely one of monitoring.

How to Survive the AI Wave?

As generative AI becomes increasingly efficient, its applications will likely expand, surpassing initial expectations and challenging conventional notions of job displacement.

Instead of simply replacing human roles, AI is fundamentally altering the nature of work by reshaping how tasks are executed.

This shift underscores the urgency for individuals to adapt and reskill as AI technology evolves. Continuous learning becomes imperative to stay competitive and relevant in an era increasingly defined by AI-driven innovations.

The post Soon, ChatGPT (Powered by GPT-4o) will Replace Your ‘Senior Employees’ appeared first on Analytics India Magazine.

https://t.co/PLQh78BJjl pic.twitter.com/EypCONNhCh


#OpenAI pic.twitter.com/joxgml3RXU
