At Microsoft Build, the company’s annual developer conference, the tech giant was quite clear about what it wanted to achieve while Google was busy playing catch-up.

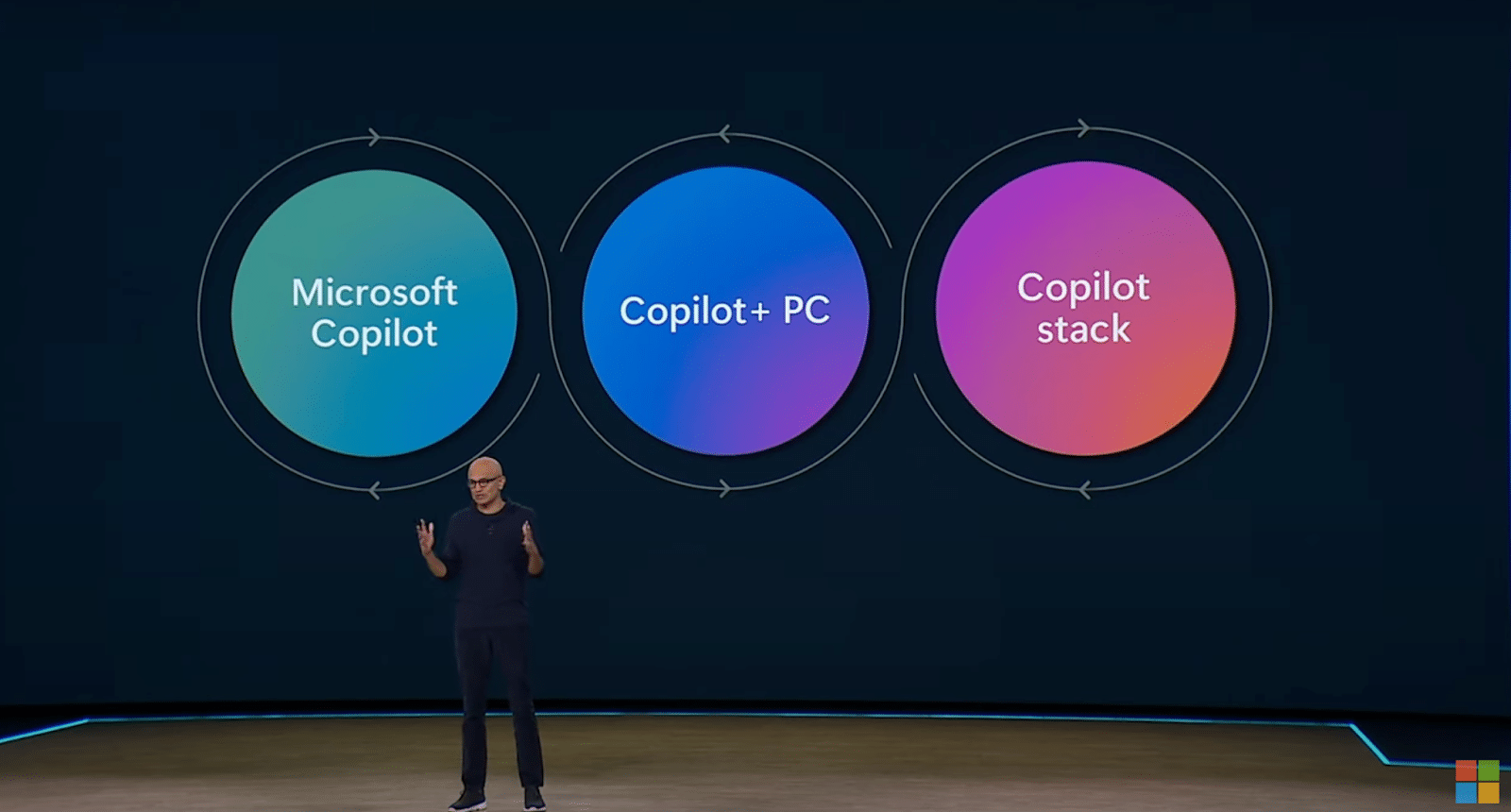

“For us, it’s never about celebrating tech for tech’s sake. It’s about celebrating what we can do with technology to create magical experiences that make a real difference in our countries, in our companies, in our communities,” said Microsoft chief Satya Nadella, in his keynote speech.

Responding to an old statement of Nadella where he said that he wants “people to know that we made them [Google] dance”, Google CEO Sundar Pichai gave a cheeky response in a recent interview with Bloomberg’s Emily Chang.

“I think one of the ways you can do the wrong thing is by listening to the noise out there and playing someone else’s dance music,” laughed Pichai, extremely sure that they are indeed listening to their own music.

Ironically, Google’s I/O event looked like they were playing to OpenAI’s tune. Products such as Veo (text-to-video), Project Astra and few others seem to be a direct response to OpenAI’s products.

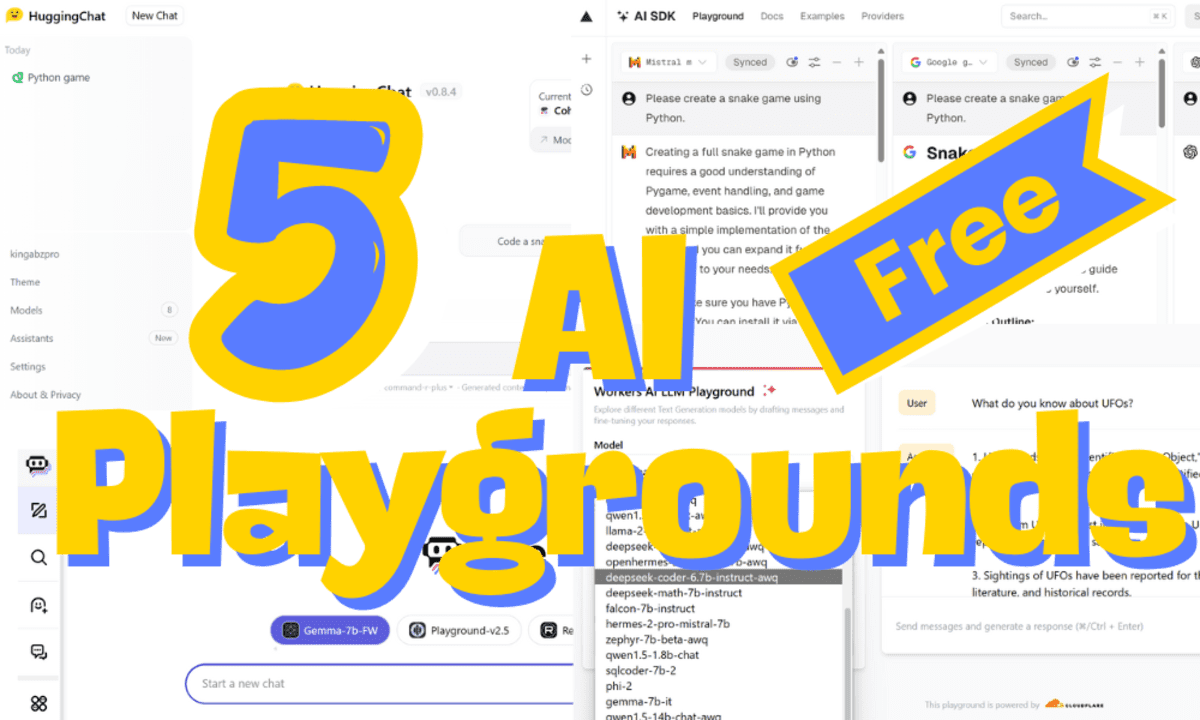

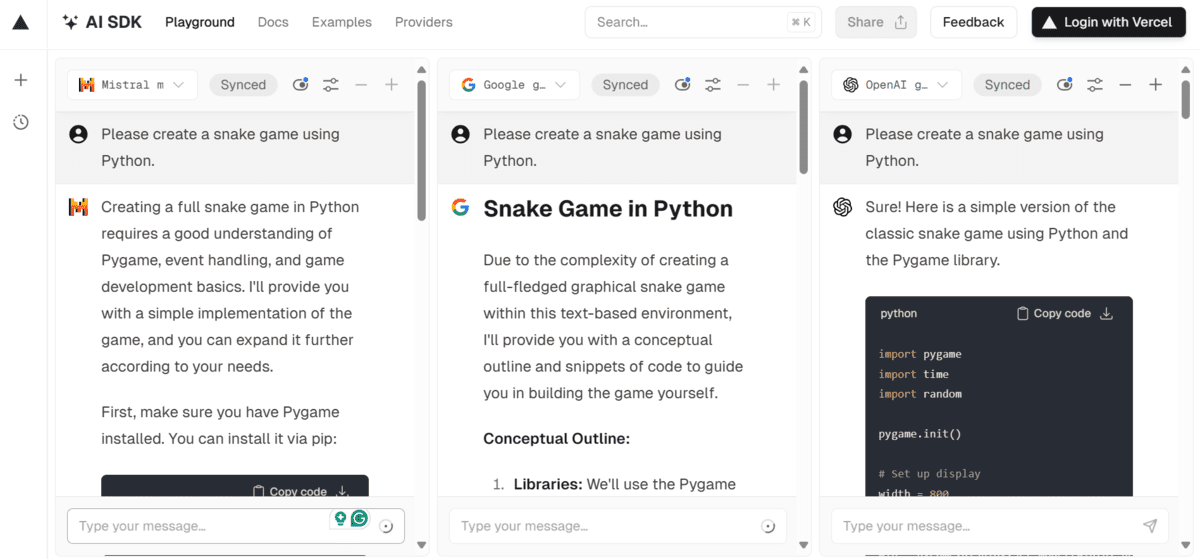

Build 2024

While the 2023 edition released a few Copilot plugins and a Copilot assistance for Windows, this year saw the company focus heavily on Copilot developments.

Source: Microsoft Build 2024

The new range of Copilot+ PC is not only poised to beat Apple’s MacBook Air M3, it also comes with a unique function called ‘Recall’ that allows one to find anything that they have searched for or done on their computer. How concerning this is, might be a topic for another time, but Microsoft has really gone all in to cater to enterprise and consumers alike.

But, so has Google!

AI for Everyone

At Google’s annual developer conference, Google I/O that took place last week (exactly a day after OpenAI’s Spring Update event), Pichai mentioned that despite investing in AI for over a decade at every layer of the stack, the company is still in the “early days of the AI platform shift”.

However, he then went on to release a string of AI products, which would cater to everyone, “not just him or her”.

If Copilot was Microsoft’s shield, Gemini was Google’s.

The company’s integration of Gemini into their existing suite of products continued in this event. The company even launched Gemini 1.5 Flash, a lighter version of Gemini 1.5 Pro, and Gemma 2, the next version of Google’s open models.

Small models seems to be the fad this tech season with Microsoft also releasing Phi-3-vision, which is the new multimodal small language model from the Phi-3 family. The model is set to be cost-effective and optimised for personal devices.

The small language model is in line with Microsoft’s AI tech prediction for the year where SLMs have been touted to gain traction. Abu Dhabi’s Technology Innovation Institute also recently launched their small model, Falcon-2 11B.

AI Agents are the Way

Source: X

“Soon you’ll be able to mix and match inputs and outputs. This is what we mean when we say it’s an I/O for a new generation, and I can see you all out there thinking about the possibilities. But what if we could go even further? That’s one of the opportunities we see with AI agents,” said Pichai.

Project Astra, defined as a universal AI agent helpful in everyday life, can process multimodal information, and respond naturally in conversation. Interestingly, at the OpenAI Spring Update, the company released GPT-4o, which pretty much does the same.

Microsoft was not far behind on its AI agent agenda either. The company announced its partnership with Cognition AI, the makers of the autonomous software AI agent Devin that caused quite a storm when it was released a few months ago. Devin will be powered by Azure.

However, Cognition AI was not the only major partnership announced.

Together, We Stand Strong

Microsoft announced a number of strategic alliances with partners from various industries. On the education front, Microsoft announced its partnership with Khan Academy, where the tech giant will provide free access to Khan Academy’s AI-powered teaching assistant for all K-12 educators. This will be supported by Azure OpenAI service.

Unsurprisingly, Khan Academy also demonstrated its ChatGPT and AI-powered tool usage via demo videos during OpenAI’s event.

Like every big-tech event, Build 2024 made a special mention of NVIDIA too. The company is planning to roll out a range of RTX-powered Copilot+ PCs. “We’re bringing the latest H200s to Azure later this year, and will be among the first cloud providers to offer NVIDIA’s Blackwell GPUs in B100 as well as GB200 configurations,” said Pichai.

Source: Microsoft Build 2024

While NVIDIA is for everyone, the biggest trump card for Microsoft obviously is OpenAI, and Altman made his brief appearance here, despite skipping his OpenAI event. Though he did not reveal anything new, he indirectly hinted at the next version of GPT and mentioned the obvious that “models are getting smarter”.

While both Microsoft Build and Google I/O saw the release of new products, Google’s approach seemed to take on OpenAI alone. On the other hand, Microsoft’s event showcased a change in the company’s outlook emphasising a futuristic vision with strategic partnerships.

“Microsoft is doing one thing that other cloud providers are either too scared or too complacent to try. They’re willing to cannibalise old products for the sake of a true AI-first strategy,” said AI advisor and entrepreneur, Allie K Miller.

Compared to Microsoft’s array of products, Google’s future strategy seems obscure.

The post ‘For Us, it’s Never About Celebrating Tech for Tech’s Sake,’ says Microsoft chief Satya Nadella appeared first on AIM.