In my previous blog, ‘AI Dividend, Universal Basic Income, and Economic Multiplier Effect,’ I explored how Artificial Intelligence (AI) can create an AI Dividend that yields staggering economic benefits by intelligently automating routine tasks, optimizing decision-making processes and fostering innovation across all sectors of society. This blog expands on that discussion by providing a ‘How To’ guide for activating and realizing the AI Dividend[1].

The “AI Dividend” encapsulates the substantial and recurring economic benefits generated by advancements in artificial intelligence (AI), emphasizing the importance of harnessing these gains to improve societal well-being and economic equity.

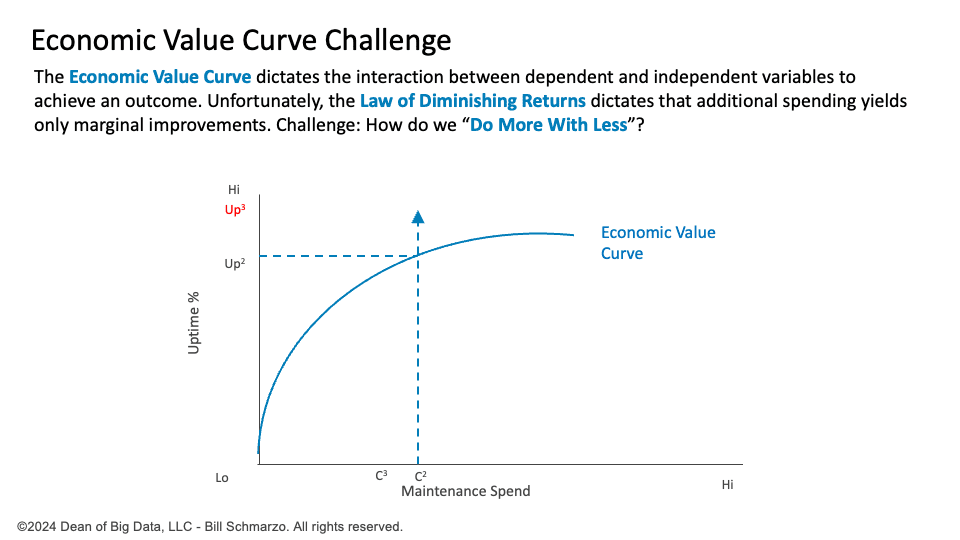

Step 1: Understanding The Economic Value Challenge

The economic value curve quantifies the relationship between the resources an organization invests and the value those resources generate. The curve illustrates how initial investments in capital, labor, and technology yield significant increases in value and productivity (Figure 1).

Figure 1: Economic Value Curve Challenge

However, as investments continue to rise, the incremental gains diminish, reflecting the Law of Diminishing Returns. After a certain point, each additional input unit contributes less to the output than the previous unit. This phenomenon imposes a critical challenge on economic growth, as it limits the potential for sustained increases in efficiency and productivity.
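
The shape of that curve can be sketched in a few lines of Python. This is only an illustration: the logarithmic `value_generated` function and its `scale` parameter are assumptions chosen because they produce a concave curve, not a model taken from real data.

```python
import math


def value_generated(investment: float, scale: float = 100.0) -> float:
    """Hypothetical concave value curve: value grows with the log of investment."""
    return scale * math.log1p(investment)


def marginal_gains(investments: list[float]) -> list[float]:
    """Extra value produced by each successive step up in investment."""
    values = [value_generated(x) for x in investments]
    return [later - earlier for earlier, later in zip(values, values[1:])]


levels = [0, 1, 2, 3, 4, 5]  # equal one-unit steps of investment
gains = marginal_gains(levels)
for level, gain in zip(levels[1:], gains):
    print(f"unit {level}: +{gain:.1f} value")
```

Each printed gain is smaller than the one before it, which is the Law of Diminishing Returns in miniature.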

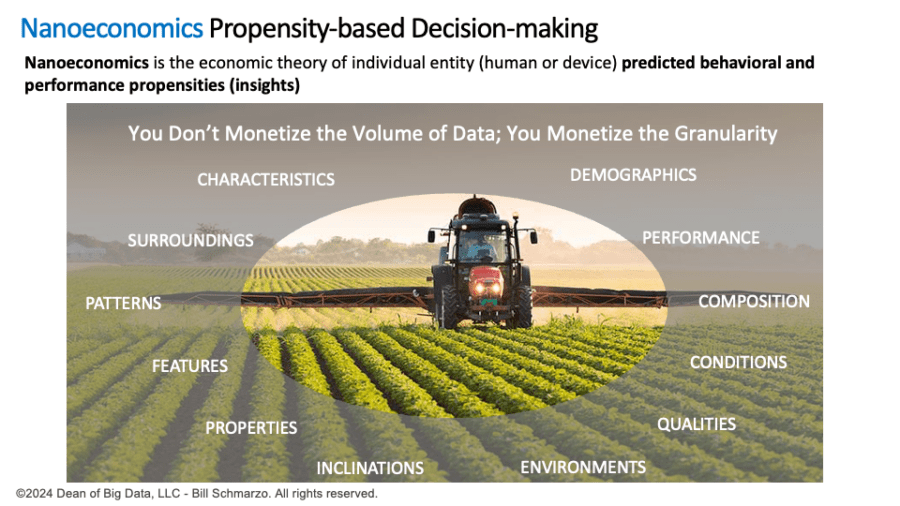

Step 2: Mastering Nanoeconomics

Nanoeconomics is the economic theory of the predicted behavioral and performance propensities (insights) of individual entities, whether human or device.

Nanoeconomics is a growing area of study within economics that focuses on measuring and codifying the behaviors and performance of individual entities such as consumers, patients, students, operators, and devices like compressors and motors. Unlike traditional macroeconomic and microeconomic approaches that deal with broader trends, nanoeconomics derives and drives value from the predicted propensities of the individual entities (Figure 2).

Figure 2: Nanoeconomics

The critical difference between Nanoeconomics and traditional economic analysis lies in the scope and granularity of the data. While conventional economics often relies on averages and general trends, Nanoeconomics captures individual entities’ specific behaviors and performance characteristics, enabling precise actions that increase efficiency in resource allocation and operational execution.

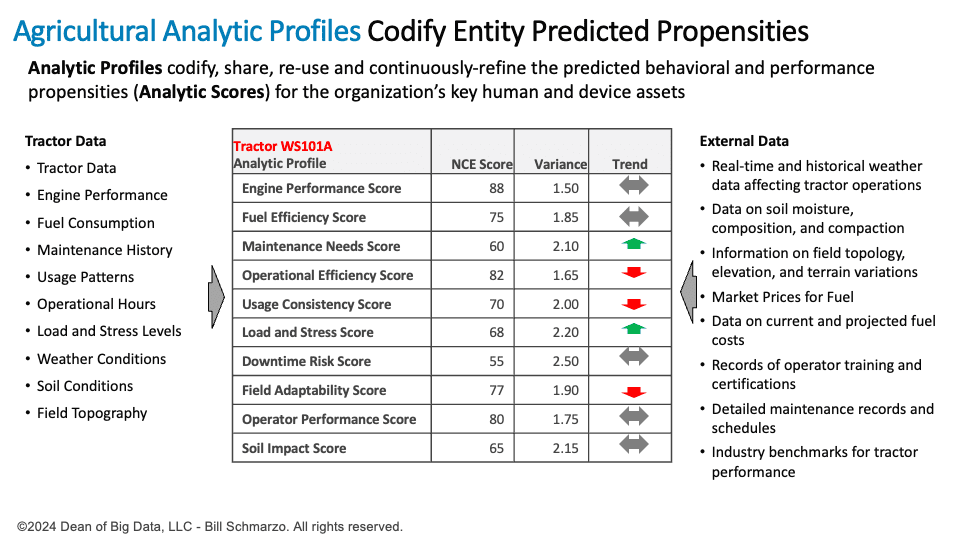

Step 3: Leveraging AI to Create Entity-based Analytic Profiles

An Analytic Profile is an analytics-driven representation of an entity’s (human or device) behaviors, preferences, and interactions, enabling more accurate, relevant, and meaningful predictions and personalized decision-making.

Using Generative AI (GenAI), we can create individual-entity Analytic Profiles by analyzing vast amounts of data to uncover and codify individual-level behaviors, preferences, interactions, patterns, and correlations. These insights create highly accurate models that predict future behaviors and preferences, facilitating more targeted and effective decision-making. For instance, a retailer could use GenAI to develop detailed customer profiles that predict purchasing habits and tailor marketing efforts to individual preferences, enhancing customer engagement and satisfaction (Figure 3).

Figure 3: Analytic Profiles
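
To make the idea concrete, here is a minimal sketch of what an Analytic Profile could look like as a data structure, using the retailer example. The `AnalyticProfile` class, `build_profile`, and `best_offer` are hypothetical names, and a simple purchase-frequency count stands in for the GenAI-driven model described above.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AnalyticProfile:
    """Hypothetical per-entity profile: an ID plus propensity scores in [0, 1]."""
    entity_id: str
    propensities: dict[str, float] = field(default_factory=dict)


def build_profile(entity_id: str, purchases: list[str]) -> AnalyticProfile:
    """Score each category by its share of the entity's past purchases."""
    counts = Counter(purchases)
    total = sum(counts.values())
    scores = {category: n / total for category, n in counts.items()}
    return AnalyticProfile(entity_id, scores)


def best_offer(profile: AnalyticProfile) -> Optional[str]:
    """Return the category with the highest propensity, or None if no history."""
    if not profile.propensities:
        return None
    return max(profile.propensities, key=profile.propensities.get)


profile = build_profile("customer-42", ["coffee", "coffee", "tea", "coffee"])
print(profile.propensities)  # per-category share of past purchases
print(best_offer(profile))   # category to feature in the next offer
```

The point of the structure is that every decision downstream (which offer, which price, which service interval) can read from one profile per entity rather than from a segment average.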

Analytic Profiles are instrumental in transforming the economic value curve and enabling organizations to “do more with less.” For example:

- Finance: Investor and financial instrument analytic profiles enable highly individualized financial products and personalized investment advice, delivering tailored financial solutions that maximize returns with minimized risk.

- Healthcare: Patient and treatment analytic profiles enable personalized treatment plans and more accurate health outcome predictions, which improve the quality of care while significantly reducing overall healthcare costs by optimizing resource utilization and minimizing unnecessary treatments.

- Retail: Customer and product analytic profiles optimize inventory management and pricing strategies, reducing inventory costs and enhancing customer satisfaction through better availability and pricing accuracy.

- Manufacturing: Analytic profiles of machinery and production lines facilitate granular predictive maintenance, optimize production schedules, and minimize downtime, which increases operational efficiency, lowers costs, and extends equipment lifespan.

- Hospitality: Guest analytic profiles enable personalized services that enhance guest experiences while reducing maintenance, management, and inventory costs.

- Transportation: Vehicle and driver analytic profiles enable optimized routing, predictive maintenance, and enhanced safety measures while reducing fuel consumption, lowering emissions, and enhancing service reliability.

- Energy: Energy production analytic profiles optimize grid management, improve demand forecasting, and offer personalized energy-saving recommendations to consumers, contributing to more efficient and sustainable energy use while reducing waste and operational costs.

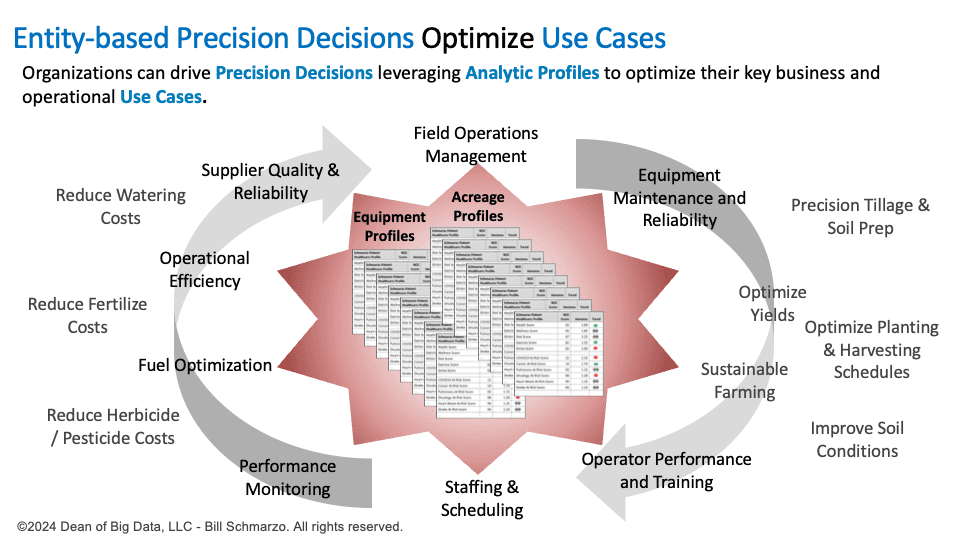

- Agriculture: Analytic profiles for crops and soil conditions enable farmers to customize irrigation schedules, fertilization usage, and pest control measures, resulting in higher crop yields and more sustainable farming practices while reducing costs associated with fertilizers, herbicides, pesticides, and water (Figure 4).

Figure 4: Entity-based Precision Decisions to Optimize Use Cases

These industry examples highlight how GenAI-generated analytic profiles can drive significant efficiency gains and value creation while reducing costs and operational risks. They do so by applying the predicted propensities of individual entities to improve operational effectiveness and resource optimization.

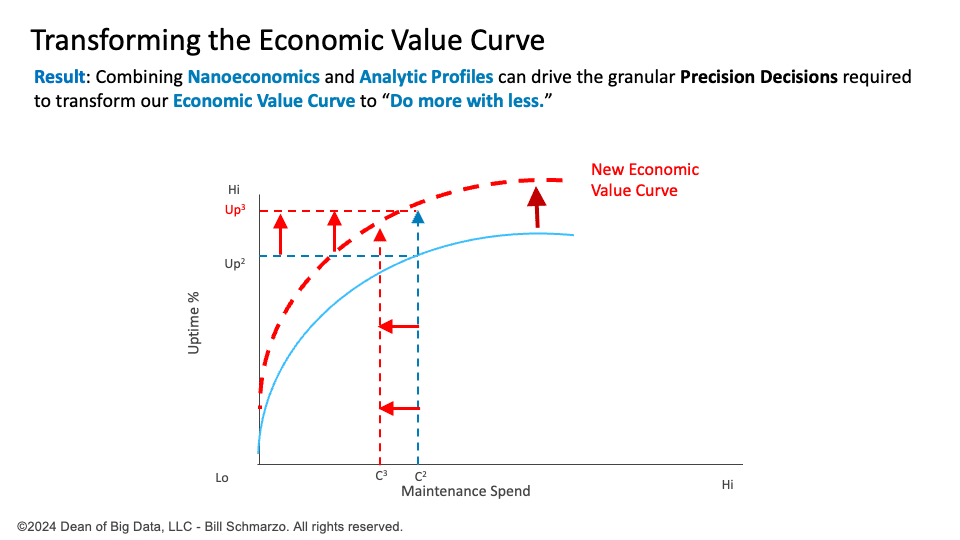

Step 4: Transforming the Economic Value Curve

Organizations can significantly improve operational effectiveness and resource optimization by embracing Nanoeconomics and creating granular entity-level Analytic Profiles. This approach reshapes the traditional Economic Value Curve, often constrained by the Law of Diminishing Returns, into a more dynamic and flexible model that continuously adapts based on real-time data (Figure 5).

Figure 5: Transforming the Economic Value Curve

Operationalizing and scaling AI, Nanoeconomics, and analytic profiles can empower organizations to achieve “Do More with Less” through granular, entity-based actions and decisions, including:

Resource Optimization:

- Prioritize resources to high-value activities and opportunities: Allocate time, money, and staffing to the most impactful projects and initiatives.

- Eliminate low-value activities and opportunities: Identify and remove tasks and processes that do not contribute to organizational goals.
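
As a rough sketch of these two rules working together, the snippet below ranks initiatives by expected value per unit of cost, drops those below a minimum ratio, and funds the rest until the budget runs out. The `Initiative` class, the `prioritize` function, the `min_ratio` cutoff, and all figures are hypothetical, and the greedy ranking is a simple heuristic rather than an optimal allocation.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    expected_value: float  # assumed estimate, e.g. from analytic profiles
    cost: float            # must be greater than zero


def prioritize(initiatives: list[Initiative], budget: float,
               min_ratio: float = 1.0) -> list[Initiative]:
    """Fund initiatives in order of value per unit cost, skipping low-value ones."""
    ranked = sorted(initiatives, key=lambda i: i.expected_value / i.cost, reverse=True)
    funded, remaining = [], budget
    for item in ranked:
        if item.expected_value / item.cost < min_ratio:
            continue  # low-value activity: eliminate it
        if item.cost <= remaining:
            funded.append(item)
            remaining -= item.cost
    return funded


portfolio = [
    Initiative("claims-automation", expected_value=90, cost=30),
    Initiative("legacy-report", expected_value=40, cost=50),
    Initiative("loyalty-redesign", expected_value=60, cost=40),
    Initiative("churn-outreach", expected_value=50, cost=20),
]
funded = prioritize(portfolio, budget=60)
print([item.name for item in funded])
```

With these made-up numbers, the two highest-ratio initiatives consume the budget, and the one whose expected value is below its cost is never considered.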

Process Efficiency:

- Reengineer to create dynamic operational processes: Develop intelligent workflows that automate or eliminate ineffective steps, ensuring streamlined and efficient processes.

- Eliminate re-work: Implement systems and checks to complete tasks correctly the first time, reducing the need for corrections.

Quality and Reliability:

- Optimize matching specific offers and treatments to particular individuals: Use analytic profiles to tailor products and services to individual needs, improving satisfaction and engagement.

- Replace or fix parts only when they need to be fixed: Implement predictive maintenance to ensure repairs are made only when necessary.

- Fix products right the first time: Enhance quality control processes to ensure products meet rigorous performance standards before being deployed.

- Improve supplier and vendor quality and reliability: Collaborate closely with suppliers to ensure they meet quality standards and delivery timelines.
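
The "replace or fix parts only when they need to be fixed" rule can be illustrated with a simple expected-cost comparison. This is a sketch under stated assumptions: the per-device failure probabilities would come from each device's analytic profile, and the function name, device names, and cost figures here are all hypothetical.

```python
def devices_to_service(failure_probability: dict[str, float],
                       failure_cost: float,
                       service_cost: float) -> list[str]:
    """Service a device only when its expected failure loss exceeds the service cost."""
    expected_loss = {
        device: p * failure_cost for device, p in failure_probability.items()
    }
    due = [device for device, loss in expected_loss.items() if loss > service_cost]
    # Most urgent first: highest expected loss at the top of the work queue.
    return sorted(due, key=lambda device: expected_loss[device], reverse=True)


# Assumed predicted probability of failure before the next maintenance window.
fleet = {"compressor-1": 0.02, "motor-7": 0.40, "pump-3": 0.10}
work_queue = devices_to_service(fleet, failure_cost=10_000, service_cost=800)
print(work_queue)
```

A fixed calendar schedule would service all three devices. Here the compressor is left alone because its expected loss is well below the cost of servicing it.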

Waste Reduction:

- Eliminate waste, shrinkage, and fraud: Detect behavioral and performance anomalies to prevent inefficiencies and dishonest activities.

- Optimize just-in-time fulfillment of products and services: Align production and delivery schedules closely with customer demand to minimize excess inventory and reduce storage costs.
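
One simple way to detect the behavioral and performance anomalies mentioned above is a z-score check against an entity's own history. The sketch below uses only Python's standard `statistics` module. The function name, the threshold of 2.0, and the sample data are assumptions for illustration, and a production system would likely use a more robust method.

```python
import statistics


def flag_anomalies(readings: list[float], z_threshold: float = 2.0) -> list[int]:
    """Return indexes of readings more than z_threshold standard deviations from the mean."""
    if len(readings) < 2:
        return []
    mean = statistics.mean(readings)
    spread = statistics.stdev(readings)
    if spread == 0:
        return []  # all readings identical, nothing stands out
    return [i for i, x in enumerate(readings) if abs(x - mean) / spread > z_threshold]


# Hypothetical daily shrinkage (units lost) for one store.
daily_shrinkage = [10, 12, 11, 9, 10, 11, 10, 45]
flagged = flag_anomalies(daily_shrinkage)
print(flagged)
```

Only the last day is flagged for review, because it is the only reading far outside that store's usual range.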

Personalization and Adaptation:

- Expand hyper-personalization to drive more effective engagements: Utilize detailed customer profiles to provide highly personalized experiences that increase customer loyalty, product sales, and profits.

- Learn and adapt more quickly to economic, market, cultural, political, technological, and societal changes: Use real-time analytics that can continuously learn and adapt to stay agile and responsive to changes in the external environment.

Summary: “Doing More with Less”

The “Doing More with Less” principle encapsulates how AI, nanoeconomics, and entity-level analytic profiles can drive greater efficiency and productivity, ultimately making the AI Dividend a tangible reality. AI technologies can uncover critical entity-level insights that lead to more effective decision-making and streamlined operations. Those insights make it possible to optimize resource allocation, re-engineer critical operational tasks, fine-tune resource utilization, minimize wasted effort, and scale operational effectiveness. Coupling the AI Dividend with the Economic Multiplier Effect[2] can unlock unprecedented levels of value that can be used to promote a more efficient, sustainable, and prosperous future for all.

The only barrier hindering our ability to realize and reallocate the AI Dividend will be leadership’s fortitude to ensure that the focus is not just on amassing unprecedented levels of personal wealth but on improving the quality of life for everyone.

Remember, what we do in life echoes in eternity.

[1] The “AI Dividend” concept is inspired by the economic benefits of the “Peace Dividend.” https://www.linkedin.com/events/shouldleadersinvestmoreindatama7204498853482348545/theater/

[2] The Economic Multiplier Effect occurs when an initial injection of spending (such as government investment or consumer spending) leads to a more significant overall increase in economic activity and national income due to successive rounds of re-spending by businesses and consumers.
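
The successive rounds of re-spending can be written as a geometric series. In the simplest textbook version, assume each recipient re-spends a constant fraction $c$ of what they receive (the symbols $c$, $\Delta S$, and $\Delta Y$ are introduced here for illustration). An initial injection $\Delta S$ then raises total economic activity by:

$$\Delta Y = \Delta S \,(1 + c + c^2 + c^3 + \cdots) = \frac{\Delta S}{1 - c}, \qquad 0 \le c < 1$$

For example, if $c = 0.8$, the multiplier is $1 / (1 - 0.8) = 5$, so every dollar injected supports five dollars of total activity under these simplified assumptions.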
