Image by Author

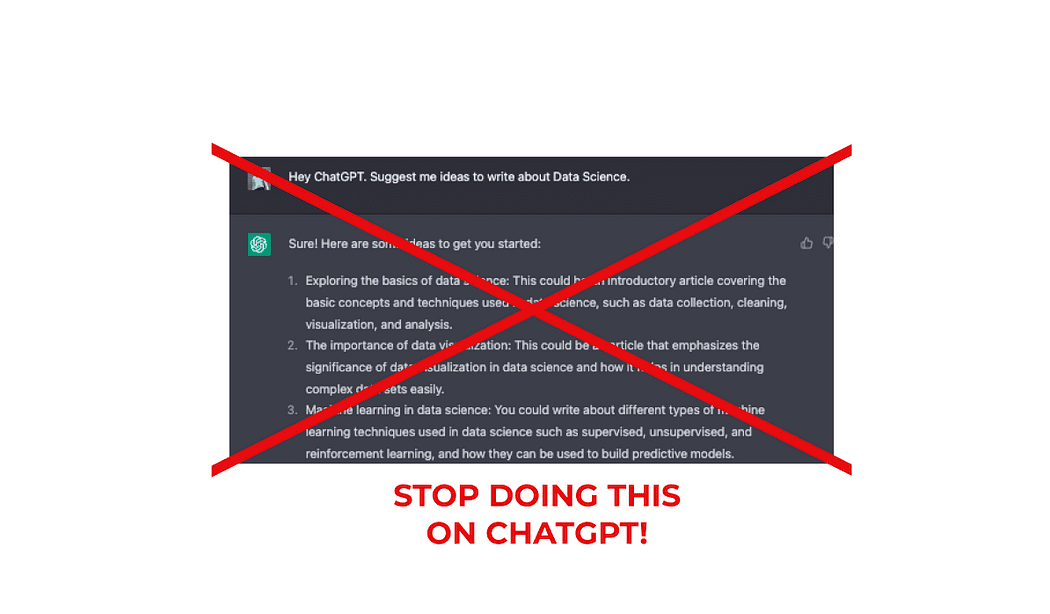

Have you ever felt frustrated with AI-generated content? Maybe you think ChatGPT’s output is completely horrible and doesn’t live up to your expectations.

However, the truth is, getting quality output from AI writing tools like ChatGPT depends heavily on the quality of your prompts.

By training ChatGPT, you can get a personal writing assistant for free!

It’s time to discover the art of crafting powerful prompts to make the most of this cutting-edge technology.

Let’s discover it all together!👇🏻

The problem often lies not with the AI itself, but with the limitations and vagueness of the input provided.

Instead of expecting the AI to think for you, you should be the one doing the thinking and guiding the AI to perform the tasks you need.

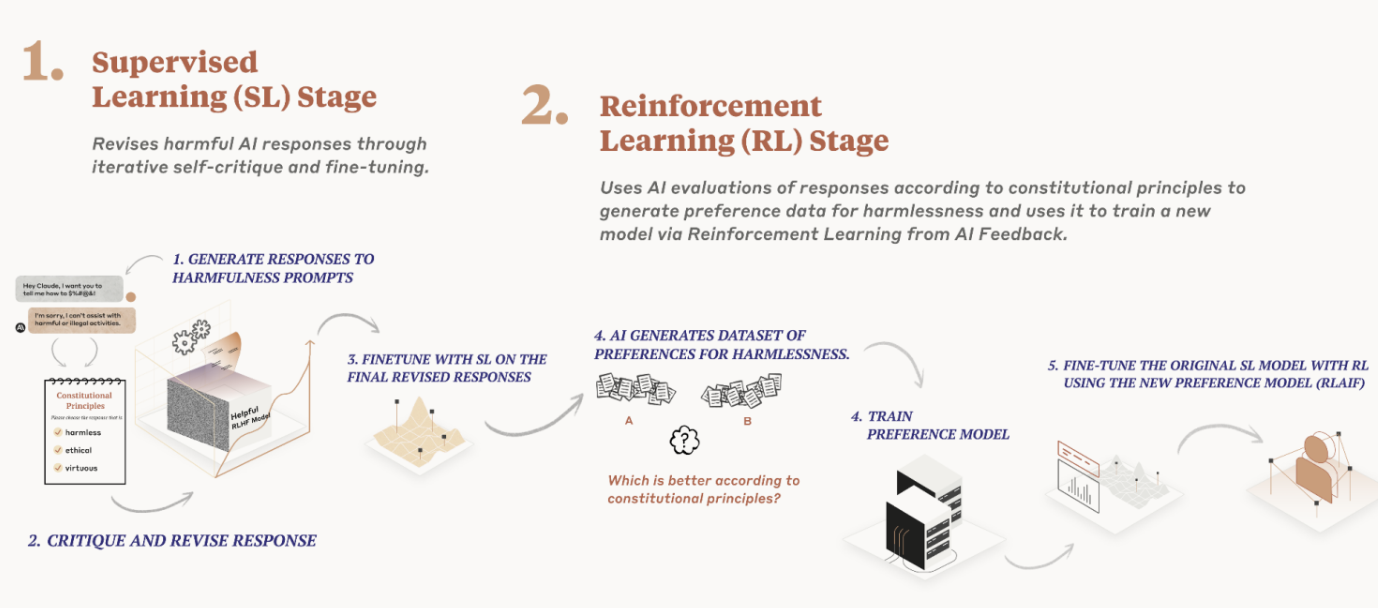

Image by Author

Do you get bad results?

If so, you are probably feeding ChatGPT short, poorly written prompts and expecting some magical output to happen.

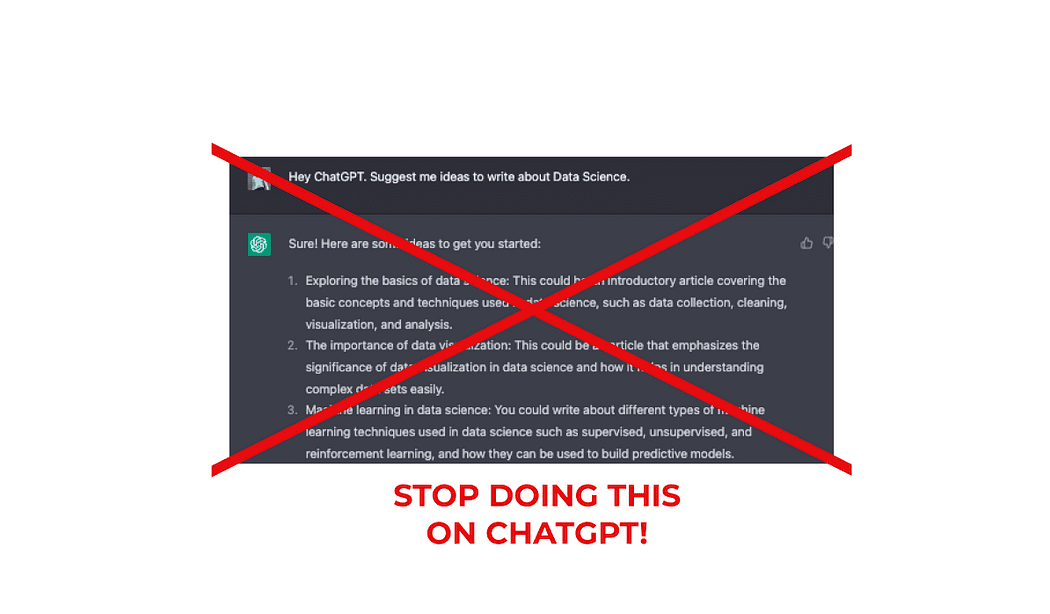

To put it simply, ChatGPT is not good at coming up with things from scratch. So if you are still feeding ChatGPT prompts like these:

Generate a LinkedIn post about AI for my account.

Write me a Twitter thread on Data Science

Suggest me some ideas to write about programming

YOU SHOULD STOP!

When you give prompts like these, ChatGPT has to make too many decisions on its own, which leads to poor output.

So always remember.

Poor instructions = Poor results.

So what should you do?

There are 4 main steps to address this. Let’s break them down.

#1. Understand Your Needs and Requirements

To order something, you first need to know what you want.

Right?

So you need to know what you want from the AI, why you want it, and how you want it delivered. This clarity will help you create better prompts and enhance the quality of the output.

So first of all, standardize all the types of output you need from AI.

Image by Author

Let’s look at some examples.

- I am starting to be active on Twitter, so I would like both tweet ideas and Twitter thread structures.

- I am really active on Medium, so I would like inspiration for what to write and skeletons for my articles.

Nice!

So from this exercise, I have realized I need 4 different types of outputs.

- Ideas to write tweets for Twitter.

- Thread structures for Twitter.

- Ideas to write on Medium.

- Article skeletons for Medium.

Let’s use the Twitter thread structure as our example.

To learn more about improving your writing with ChatGPT, I recommend the article “5 Features to Maximize Your Writing Potential on Medium with ChatGPT (and how to use it to leverage your writing)” 🙂

#2. Treat AI like a Digital Intern

Imagine you’ve hired an intern. You wouldn’t give them a single brief explanation and expect them to do everything perfectly on the first try, right?

Image by unDraw.

Let’s imagine I want to post a Twitter thread about using Google Cloud Platform (GCP). It makes no sense to just tell my intern I want a Twitter thread about GCP by tomorrow and leave it at that.

If you do so… maybe you should change your approach, my friend 😉

ChatGPT, or any other AI tool, is just the same.

Always provide your AI with a detailed checklist, explain the purpose behind the task, and be open to clarifying any doubts the AI might have.

This means I cannot simply say:

Hey ChatGPT. Write me a Twitter thread about the Google Cloud Platform.

The previous prompt is way too vague.

- How many tweets do you want?

- What writing style?

- What subtopics should ChatGPT emphasize?

- What tone should it use: friendly, professional…?

You are leaving too many decisions up to the AI, and that’s why its output is going to be a mess.

⚠️ Always use AI tools to leverage your work — not to substitute you.

And this brings us to the following step…

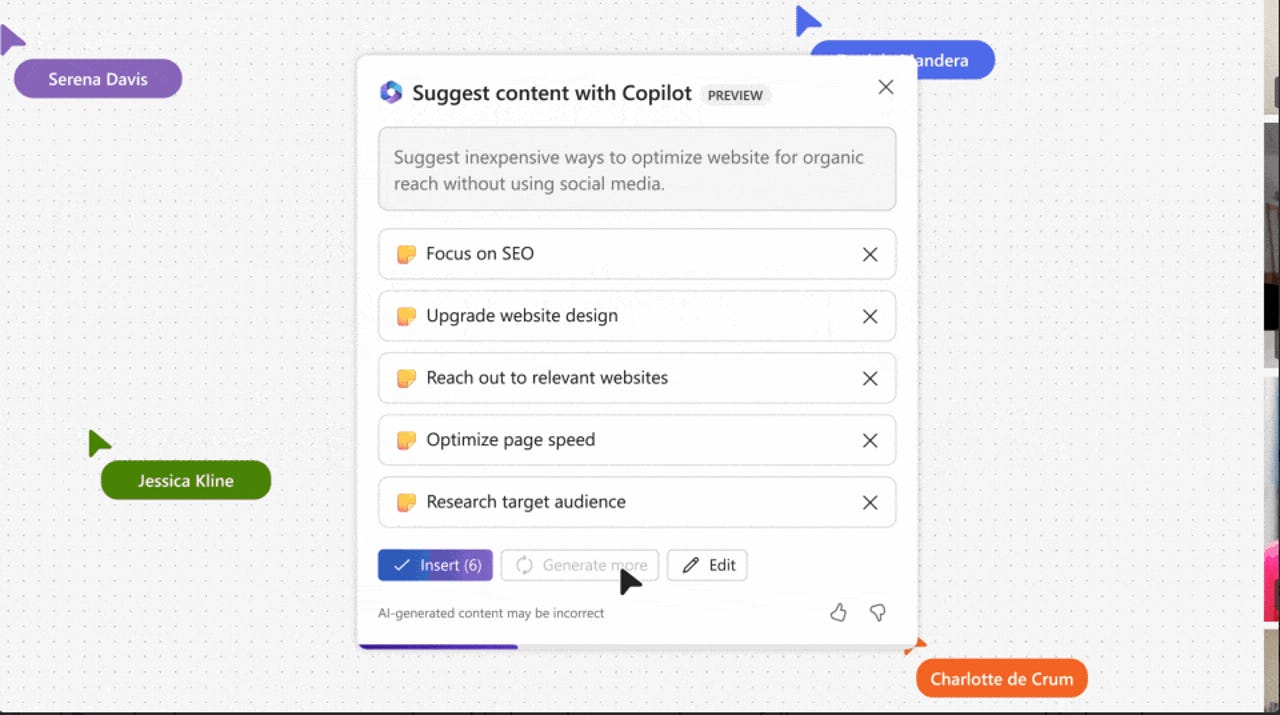

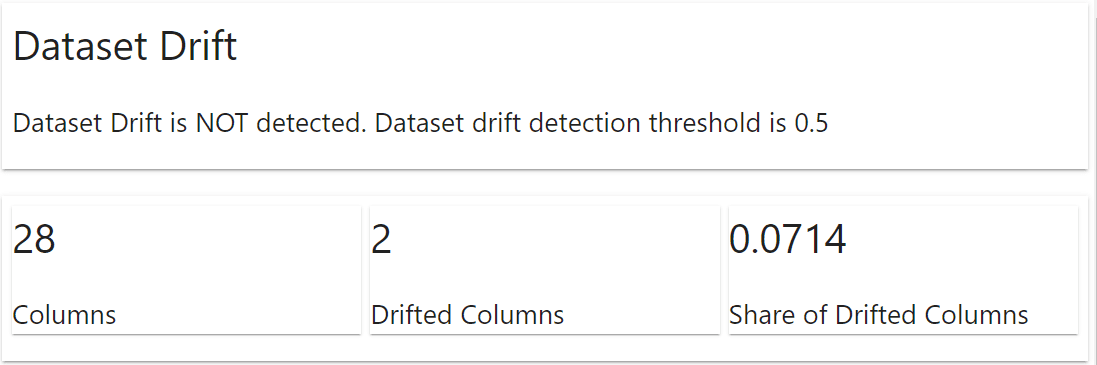

#3. Create Constraints and Avoid Assumptions

This is the key to the process. To get specific and accurate output, provide your AI with clear and well-defined information. When you give vague or broad prompts, you can’t expect the AI to deliver precise results.

Instead, tell the AI exactly what you want to get:

- A good contextualization — what kind of output do you want?

- A specific topic — with subtopics to emphasize.

- A specific structure — like how many tweets, words…

- A specific format for the output — what writing style to use, what tone…

- A specific list of things to avoid — what you do not want to mention.
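The five ingredients above work like a checklist. As a minimal sketch (the `PromptSpec` class and its field names are hypothetical, not part of ChatGPT or any library), you could represent them as a small Python dataclass and flag any component you forgot to fill in before sending the prompt. It assumes Python 3.9 or later for the `list[str]` annotation.

```python
from dataclasses import dataclass, field, fields


@dataclass
class PromptSpec:
    """Hypothetical container for the five prompt components listed above."""
    context: str = ""        # what kind of output you want
    topic: str = ""          # the topic plus subtopics to emphasize
    structure: str = ""      # how many tweets, words, sections...
    output_format: str = ""  # writing style and tone
    avoid: list[str] = field(default_factory=list)  # things not to mention

    def missing(self) -> list[str]:
        """Return the names of the components that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# The vague prompt from step #2 only sets a topic, so ChatGPT must guess the rest.
vague = PromptSpec(topic="Google Cloud Platform")
print(vague.missing())  # ['context', 'structure', 'output_format', 'avoid']
```

Any component that shows up in `missing()` is a decision you are leaving to the AI.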

So let’s start creating our own prompt to generate Twitter threads.

1. Add some good contextualization

I want ChatGPT to generate a Twitter thread for me. However, what is a Twitter thread?

I first need to make sure ChatGPT understands what I mean by a Twitter thread.

This is why any good prompt needs to start with a good contextualization.

[ 🧑🏼🏫 First I let ChatGPT know I will train it to get some specific output]

Hey ChatGPT. I am going to train you to create Twitter threads.

[ 🐦 Then I explain what this specific output consists of]

Twitter threads are a series of tweets that outline and highlight the most important ideas of a longer text or some specific topic.

2. Add a specific topic

I want ChatGPT to write a Twitter thread about a specific topic, so now is the moment to explain that topic in more detail.

In this case, I want this thread to talk about Google Cloud Platform free tiers.

[ ☁️ I explain the main topic to ChatGPT]

The Twitter thread will talk about Google Cloud Platform free tier services.

[ ⚙️ I outline what I want it to mention for sure and what to emphasize]

I want you to talk about the Google Cloud Platform environment, all its services and their utility for data science. However, you need to emphasize that all these advantages are free forever as long as your usage stays within certain tiers. Mention Google BigQuery and Cloud Functions, which are two of the most important services for analytics.

3. Add a specific structure

Now it’s time to tell ChatGPT what structure the output should have. This part can be more generic or more detailed depending on your needs. I usually add as much detail as possible, so I end up with a good draft to start from.

[ 📝 I specify the whole structure I want to receive from ChatGPT]

A first tweet with a short, concise message letting people know what the thread is about. It is important that it is no more than 30 words long, uses key hashtags and convinces people to read the whole thread. Emphasize the utility of the thread for them.

A second tweet with a short intro that gives the reader context and explains why they should keep reading the thread. It is important to keep the reader reading.

4 or 5 tweets that outline and describe the most important points. These should summarise the main ideas of the topic I explained to you before.

A second-to-last tweet with some conclusions, letting people know why your thread is worth it.

A final tweet inviting them to retweet your thread and follow you.

4. Add a specific format for the output

Next, I add a comment about the format of the output. Usually, I specify how ChatGPT should behave and what kind of writing style it should use.

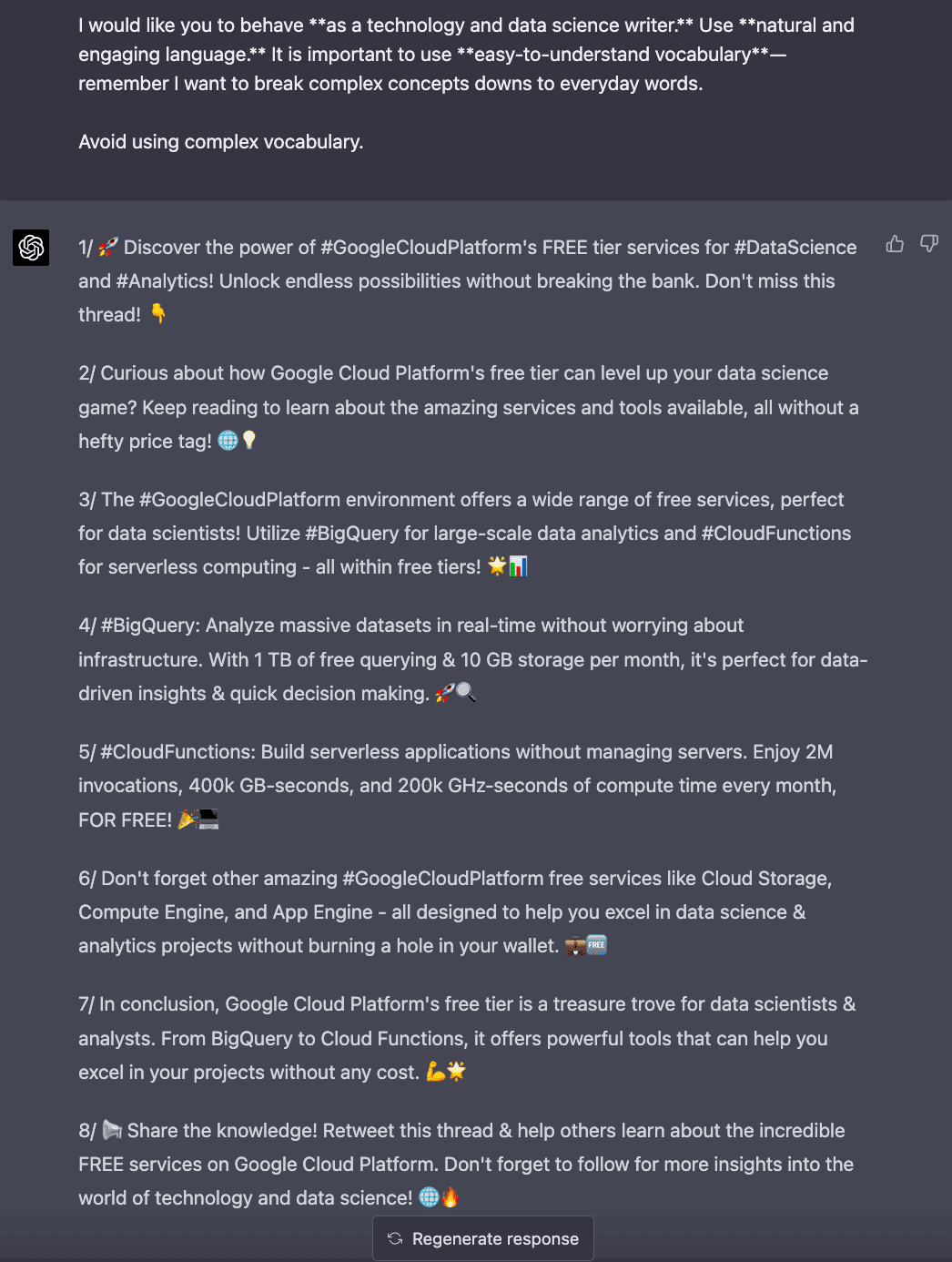

[ 🔖 I specify the format of the output I want]

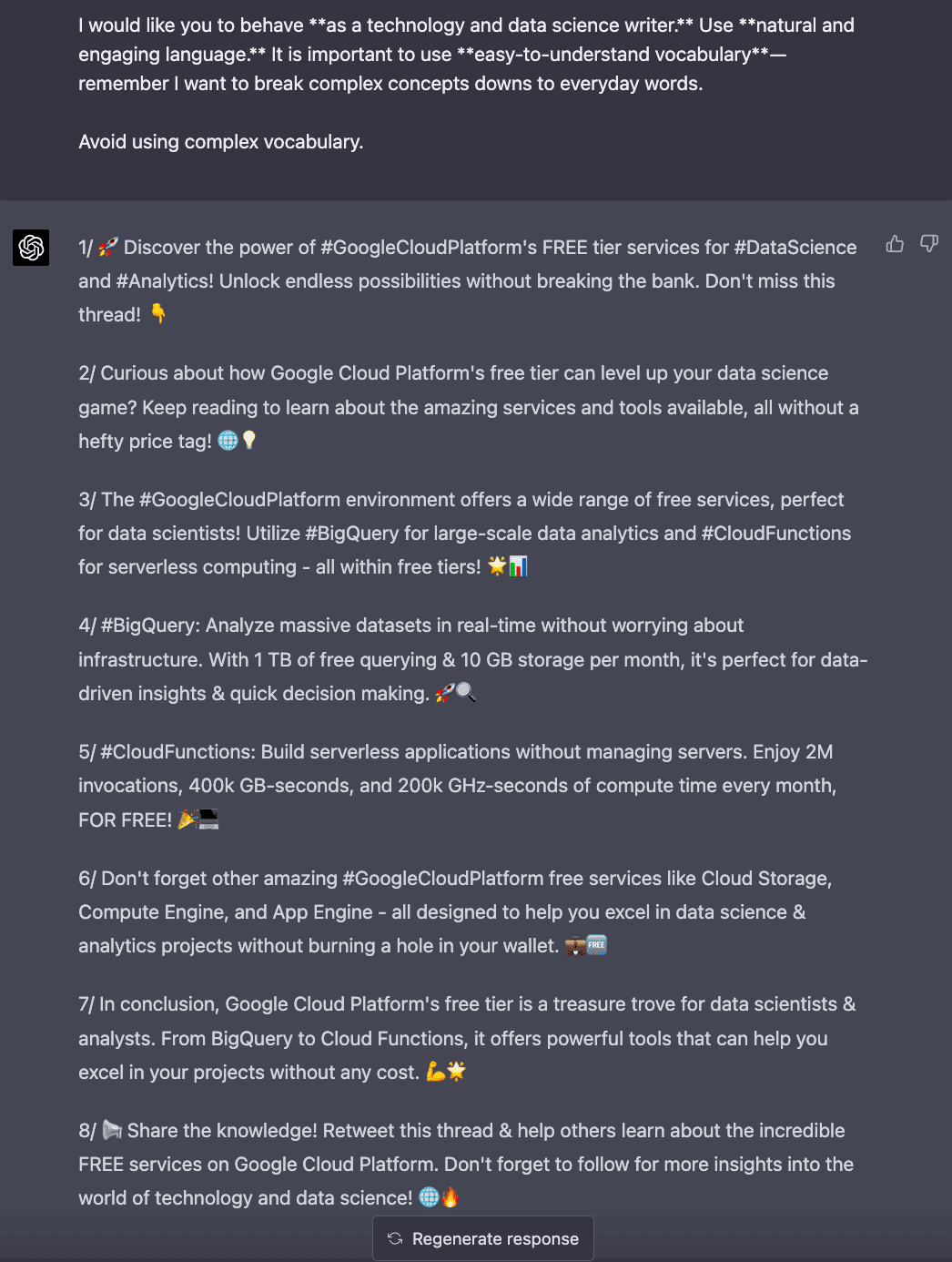

I would like you to behave as a technology and data science writer. Use natural and engaging language. It is important to use easy-to-understand vocabulary; remember that I want to break complex concepts down into everyday words.

5. Add a specific list of things to avoid

If there’s something you do not want ChatGPT to mention, let it know. In my case, I don’t want it to use complicated vocabulary.

[ ❌ I always tell ChatGPT to avoid complex language]

Avoid using complex vocabulary.
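Put together, these five pieces form the single message you paste into ChatGPT. As a minimal sketch, the Python snippet below joins them in the same order as the steps, separated by blank lines. `build_prompt` is a hypothetical helper, and the strings are abridged versions of the prompt text above, shortened to keep the example compact.

```python
def build_prompt(sections: list[str]) -> str:
    """Join prompt sections with blank lines, skipping empty ones."""
    return "\n\n".join(s.strip() for s in sections if s.strip())


# Abridged versions of the pieces written in steps 1 to 5.
context = (
    "Hey ChatGPT. I am going to train you to create Twitter threads. "
    "Twitter threads are a series of tweets that outline and highlight "
    "the most important ideas of a longer text or some specific topic."
)
topic = "The Twitter thread will talk about Google Cloud Platform free tier services."
structure = (
    "A first tweet of no more than 30 words, a short intro tweet, "
    "4 or 5 tweets with the main ideas, a tweet with conclusions, "
    "and a final tweet inviting readers to retweet and follow."
)
output_format = (
    "Behave as a technology and data science writer. "
    "Use natural and engaging language."
)
avoid = "Avoid using complex vocabulary."

prompt = build_prompt([context, topic, structure, output_format, avoid])
print(prompt)
```

Keeping the pieces separate makes it easy to tweak one part (say, the tone) without rewriting the whole prompt.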

#4. Iterate and Refine Your Input

If the AI generates incorrect output, it’s probably due to an issue with your input. Don’t be afraid to rework your prompts multiple times.

Remember, even though you’re using natural language to talk with a machine, you should think of it as writing code for the AI.

Prompt writing is an iterative game — you will not get it right on the first try. But like training an employee, the upfront time investment is worth it. Because once you have a working, reliable prompt, you can use it forever

— By Dickie Bush
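One way to “use it forever” is to turn a prompt that works into a template. Here is a minimal sketch using Python’s standard `string.Template`; the placeholders `$topic`, `$emphasis` and `$tweets` are hypothetical choices for illustration, and the wording is an abridged version of the prompt built above.

```python
from string import Template

# Hypothetical reusable template: the parts that worked stay fixed,
# and only the placeholders change from one thread to the next.
THREAD_PROMPT = Template(
    "Hey ChatGPT. I am going to train you to create Twitter threads.\n"
    "The Twitter thread will talk about $topic. Emphasize $emphasis.\n"
    "Write a hook tweet of no more than 30 words, a short intro tweet, "
    "$tweets tweets with the main ideas, a conclusion tweet, and a final "
    "tweet inviting readers to retweet and follow.\n"
    "Avoid using complex vocabulary."
)

gcp_prompt = THREAD_PROMPT.substitute(
    topic="Google Cloud Platform free tier services",
    emphasis="Google BigQuery and Cloud Functions",
    tweets="4 or 5",
)
print(gcp_prompt)
```

Because `substitute` raises a `KeyError` when a placeholder is left unfilled, a forgotten detail surfaces as an error instead of silently reaching ChatGPT as a vaguer prompt.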

⚠️ Keep in mind that none of the tasks we give ChatGPT should require it to do any thinking. We think, and ChatGPT executes.

So if I use the prompt I have just created to get a Twitter thread from ChatGPT, it replies directly with the following output.

Screenshot of the ChatGPT interface. ChatGPT giving me a Twitter thread output.

You can regenerate the response as many times as you want until you get a good result. I always use ChatGPT’s output as a first draft, from which I work toward a good Twitter thread for my account.

My final result can be found below.

Main Conclusions

In conclusion, it’s not the AI that’s falling short, but rather the way we interact with it. To make the most of ChatGPT and similar tools, we must refine our approach and become the thinkers who guide the AI as it executes.

By following these tips and taking responsibility for the input, you’ll find that AI-generated content can be a valuable asset in your content creation arsenal.

So, let’s start crafting effective prompts and unlock the full potential of AI writing!

Josep Ferrer is an analytics engineer from Barcelona. He graduated in physics engineering and is currently working in the Data Science field applied to human mobility. He is a part-time content creator focused on data science and technology. You can contact him on LinkedIn, Twitter or Medium.

Original. Reposted with permission.
