Introduction: Understanding Quantum Machine Learning

Quantum Machine Learning (QML) is an emerging field that combines two revolutionary technologies: quantum computing and machine learning. By harnessing the unique properties of quantum mechanics, this intersection could transform artificial intelligence, computing, and data analysis.

By combining the principles of quantum computing with those of traditional machine learning, QML could deliver significant gains in computational power and problem-solving capability on certain classes of problems. QML uses quantum bits (qubits) to represent and process data, exploiting superposition, entanglement, and interference to work with many candidate solutions at once.

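Quantum superposition allows a qubit to exist in a combination of the 0 and 1 states until it is measured, while entanglement creates strong correlations between qubits that persist even when they are separated by large distances. Quantum interference, in which amplitudes reinforce or cancel one another, lets an algorithm amplify correct answers and suppress wrong ones, which makes it critical in designing and implementing quantum algorithms for machine learning tasks.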
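
To make these three properties concrete, here is a minimal sketch of a tiny state-vector simulator in plain Python; no quantum SDK is assumed. The helper names `apply_single`, `apply_cnot`, and `probabilities` are hypothetical, and treating qubit 0 as the most significant bit of the basis index is a labeling choice of this sketch. It shows superposition (a Hadamard gate on |0>), interference (a second Hadamard cancelling the |1> amplitude), and entanglement (a Hadamard plus a CNOT producing a Bell state whose two qubits are always measured with equal values).

```python
import math

# An n-qubit state is a list of 2**n complex amplitudes.
# Assumption for this sketch: qubit 0 is the most significant bit of the index.

def apply_single(state, gate, target, n):
    """Apply a 2x2 gate (list of rows) to qubit `target` of an n-qubit state."""
    new = [0j] * len(state)
    shift = n - 1 - target  # bit position of the target qubit in the index
    for i, amp in enumerate(state):
        if amp == 0:
            continue
        bit = (i >> shift) & 1
        for out_bit in (0, 1):
            j = (i & ~(1 << shift)) | (out_bit << shift)
            new[j] += gate[out_bit][bit] * amp
    return new

def apply_cnot(state, control, target, n):
    """Flip the target qubit wherever the control qubit is 1."""
    new = [0j] * len(state)
    cs, ts = n - 1 - control, n - 1 - target
    for i, amp in enumerate(state):
        j = i ^ (1 << ts) if (i >> cs) & 1 else i
        new[j] += amp
    return new

def probabilities(state):
    """Measurement probabilities are the squared magnitudes of the amplitudes."""
    return [abs(a) ** 2 for a in state]

r = 1 / math.sqrt(2)
H = [[r, r], [r, -r]]  # Hadamard gate

# Superposition: H on |0> gives equal amplitudes for |0> and |1>.
one = apply_single([1 + 0j, 0j], H, 0, 1)
print(probabilities(one))   # each outcome has probability ~0.5

# Interference: a second H makes the two paths to |1> cancel, restoring |0>.
back = apply_single(one, H, 0, 1)
print(probabilities(back))  # ~1.0 for |0>, 0.0 for |1>

# Entanglement: H then CNOT on |00> yields (|00> + |11>)/sqrt(2).
bell = apply_cnot(apply_single([1 + 0j, 0j, 0j, 0j], H, 0, 2), 0, 1, 2)
print(probabilities(bell))  # ~0.5 for |00> and |11>, 0.0 for |01> and |10>
```

Storing every amplitude explicitly costs memory exponential in the number of qubits, which is exactly why such simulations stop scaling and why real quantum hardware is of interest.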

Though the field is still developing and many applications are in their infancy, QML holds great promise for overcoming current limitations in classical machine learning.

The future of Quantum Machine Learning is certainly promising, but what exactly does it hold for us?

Envisioning the Future of Quantum Machine Learning

Key areas set to benefit from Quantum Machine Learning (QML) include personalized medicine, drug discovery, logistics optimization, materials science, artificial intelligence, cryptography, and secure communications. By enabling more accurate modeling and prediction, QML could redefine competitive advantage, alter commercial operating models, and reshape entire sectors.

However, realizing the full potential of QML depends on overcoming challenges such as developing more advanced quantum hardware and efficient algorithms tailored for specific applications.

Organizations that adopt these emerging technologies can drive innovation, create value, make data-driven decisions that are not possible with traditional computing, and tackle complex global challenges like climate change and resource scarcity.

A significant point to consider is that quantum computing has a steep learning curve. Consequently, delaying adoption may be risky: organizations that start building expertise early are better placed to gain a significant edge over rivals.

The benefits of QML are numerous, especially when considering its potential applications and role in achieving sustainability goals.

QML Applications and Their Role in Achieving Sustainability Goals

In healthcare, QML could expedite drug discovery and personalized treatments. In finance, it could optimize trading algorithms and risk assessment. Moreover, QML could contribute to the fight against climate change by enhancing renewable energy technologies, accelerating materials discovery, and optimizing resource management.

The transformative potential of QML extends to various applications, including smart cities, traffic management, and supply chain optimization. One urgent challenge is tripling energy storage capacity by 2050 to help limit global warming to two degrees Celsius. With its powerful computational abilities, QML could be crucial in designing and optimizing next-generation technologies, such as more powerful, durable, and affordable energy storage systems.

These advancements can drive market share gains and higher profits for forward-thinking businesses. If QML can accelerate complex simulations, it could speed up the testing, comparison, refinement, and deployment of goods and services, further catalyzing innovation across industries.

To fully grasp the implications and potential applications of QML, we need to understand the quantum algorithms and techniques that power it.

Quantum Algorithms and Techniques

Quantum algorithms like Quantum Support Vector Machines (QSVM), Quantum Neural Networks (QNN), and Grover’s and Shor’s algorithms are central to the advancement of Quantum Machine Learning (QML). QSVM and QNN target data classification, pattern recognition, and optimization, and could outperform traditional machine learning techniques on certain problems.

Separately, Grover’s algorithm, which offers a quadratic speedup for unstructured search problems, and Shor’s algorithm, which factors large numbers efficiently and therefore has major implications for cryptography (e.g., RSA), highlight the immense power of quantum computing and inspire new techniques in QML.
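
The mechanism behind Grover’s speedup can be sketched with a short classical simulation, shown here as a minimal illustration rather than a real quantum implementation. The function name `grover_search` and the choice of 8 items with item 5 marked are assumptions made for this sketch. Each iteration applies an oracle that flips the sign of the marked item’s amplitude, followed by a diffusion step that reflects every amplitude about the mean; all amplitudes stay real because both steps are real operations.

```python
import math

def grover_search(n_items, marked):
    """Hypothetical helper: simulate Grover's algorithm on n_items amplitudes.

    Returns the final measurement probabilities and the iteration count.
    """
    amps = [1 / math.sqrt(n_items)] * n_items  # uniform superposition
    # Near-optimal number of iterations is about (pi/4) * sqrt(N).
    iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
    for _ in range(iterations):
        amps[marked] = -amps[marked]         # oracle: phase-flip the marked item
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]  # diffusion: inversion about the mean
    return [a * a for a in amps], iterations

probs, k = grover_search(8, marked=5)
print(k, round(probs[5], 3))  # prints: 2 0.945
```

With N = 8, two iterations raise the marked item’s probability from 1/8 to 121/128 (about 0.945). In general the algorithm needs roughly √N oracle calls, whereas a classical search needs about N/2 guesses on average; this gap is the quadratic speedup.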

Despite this progress, QML is still in its early stages. Continued research and development are needed to fully unlock its potential, including the creation of new algorithms tailored explicitly to diverse QML applications.

Given the complexities and potential of QML, organizations must gear up to meet the challenges and seize the opportunities it offers.

Gearing Up for Quantum Machine Learning

Organizations must prioritize developing in-house quantum expertise, collaborating with quantum startups, partnering with quantum hardware providers, and creating quantum-ready software. Investment in research and development is also essential.

It is crucial to foster a culture of innovation within these organizations. Promoting collaboration between quantum and classical ML experts will help harness the potential of quantum technology and gain a competitive advantage.

Additionally, understanding the unique challenges and limitations of quantum computing is important. Issues such as short qubit coherence times and high error rates add complexity to this emerging field. Gaining a firm grasp of these challenges will help organizations navigate them and make significant strides in quantum machine learning. Beyond these preparations, organizations must also be ready to confront several broader challenges in this field.

Unmasking the Challenges of Quantum Machine Learning

Key challenges and limitations facing Quantum Machine Learning (QML) include hardware constraints, short qubit coherence times, error correction, and talent shortages. There is also a need for more practical, large-scale use cases.

Addressing these challenges requires a multi-faceted approach. Investment in next-generation quantum hardware and quantum error correction codes is necessary. There is also a need for standardized tools, programming languages, and training and education programs. Moreover, developing efficient quantum algorithms tailored to specific applications is essential.

Cybersecurity and privacy concerns present another challenge that must be addressed to ensure successful QML integration. Policymakers, researchers, and businesses must collaborate to create an enabling environment for developing and deploying QML. This collaboration fosters innovation while mitigating potential risks.

Beyond these technical and practical challenges, ethical considerations also play a major role in the wide adoption of technologies like QML.

Ethical Considerations

As quantum computing and quantum machine learning (QML) advance, they raise significant data security concerns; for example, a sufficiently large quantum computer running Shor’s algorithm could break widely used cryptographic schemes like RSA. Beyond security, ethical considerations surrounding QML are diverse, encompassing data privacy, algorithmic bias, and equitable access to quantum technologies.

For example, improperly designed QML applications might inadvertently exacerbate existing biases, resulting in unfair consequences for certain groups. Business leaders and policymakers must prioritize the responsible development and deployment of QML technologies to address these concerns. Their goal should be to foster innovation while ensuring that benefits are broadly shared and potential risks mitigated.

Regulatory frameworks and guidelines must be established to promote fairness, accountability, transparency, and privacy. These measures will help protect users’ rights and build trust in these cutting-edge systems. By working together, stakeholders can harness the power of QML while effectively addressing its complex ethical challenges.

To ensure the ethical use and continued development of QML, attracting and retaining skilled professionals in the field is crucial.

Talent Acquisition and Workforce Development

Companies and educational institutions must adopt strategies to attract, retain, and develop top talent in QML. This includes introducing specialized training and education programs and establishing collaborations with research organizations and universities.

Encouraging interdisciplinary collaboration, particularly among physics, computer science, and mathematics, is another critical aspect of workforce development and a key driver of progress in QML.

In this tight talent market, offering competitive compensation and benefits is essential for attracting and retaining skilled professionals. By fostering a culture of innovation and collaboration, organizations can ensure they have a skilled workforce well prepared to navigate the complexities of quantum technologies.

Addressing these challenges and capitalizing on the opportunities provided by QML requires more than individual talent; it demands fostering global cooperation.

Fostering Global Cooperation

International cooperation between academia, industry, and governments is vital for propelling research, innovation, and the responsible development of Quantum Machine Learning (QML). Stakeholders must establish international research centers, public-private partnerships, and regulatory frameworks that foster knowledge sharing and collaboration. Developing ethical guidelines on a global scale is also crucial to ensure the responsible deployment of QML applications. Noteworthy initiatives and organizations, such as the Quantum Economic Development Consortium (QED-C), can fast-track the development and implementation of quantum technologies. This coordination can help maximize societal benefits while mitigating risks and unintended consequences.

Conclusion

Quantum Machine Learning (QML) has immense potential to transform industries and aid environmental sustainability. However, as we unlock its potential, significant challenges must be addressed, including developing advanced quantum hardware, talent acquisition, and privacy protection.

The limits of traditional computing power could constrain the future of Machine Learning (ML). QML may provide a pathway to overcome these constraints and accelerate our digital transition, opening new horizons for ML.

To responsibly leverage QML, we must foster innovation and collaboration across businesses, academia, and governments, ensuring ethical considerations are at the forefront. By navigating these complexities, we can ensure that Quantum Machine Learning does not just become a part of our future but shapes it, driving us towards a more sustainable and technologically advanced society.

“Quantum Machine Learning is our North Star in the vast cosmos of technology. It stands at the unique intersection of quantum physics and machine learning, illuminating our path beyond the limits of classical computing. Like a guiding light piercing through complexity, it promises advancement and a radical transformation of our world. Yet as we navigate this uncharted universe, we must be the astronomers, explorers, and ethicists, ensuring our journey brings us to a sustainable, inclusive, and profoundly human future. Quantum Machine Learning is not just the next chapter in our story—it’s a whole new epic waiting to unfold.” – Amitkumar Shrivastava.

This article is written by a member of the AIM Leaders Council. AIM Leaders Council is an invitation-only forum of senior executives in the Data Science and Analytics industry.

The post Council Post: Shaping Tomorrow – The Transformative Potential Of Quantum Machine Learning appeared first on Analytics India Magazine.

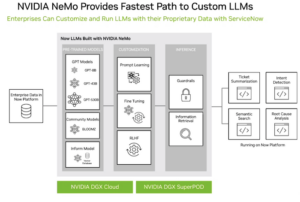

With their tendency to hallucinate incorrect information, foundation models like GPT-4 that have been trained with data from the public domain are not necessarily ready for enterprise use cases, specifically those requiring a high level of accuracy.
