
If you are working in the data industry or aspire to do so, you might be wondering if it’s time for a career change.

Will generative models like ChatGPT be the end of data scientists?

As someone who has worked in data science for three years, I’d like to provide my take on this.

In an article I wrote some time back, I strongly disagreed with the notion that automated AI software could ever replace data scientists. My argument was that these tools would improve organizational efficiency to some extent, but lacked customizability and required human involvement at every stage.

But that was back in February 2022, way before ChatGPT, OpenAI’s revolutionary language model, was released.

When ChatGPT was first made public, it was based on GPT-3.5, a model capable of understanding natural language and code.

Then, in March 2023, GPT-4 was released. This model outperforms its predecessor at problems that require logic, creativity, and reasoning.

Here are some facts about GPT-4:

- It can write code (like, really well)

- It passed the bar exam

- It outperformed most state-of-the-art models on machine learning benchmarks

This model can turn a sketch into a fully-fledged website and acts as a great assistant for programming and data science tasks.

And it is already being used by organizations to improve efficiency.

The CEO of Freshworks, Girish Mathrubootham, says that programming tasks that once took his employees 9 weeks to complete are now being done in a few days with ChatGPT.

With generative AI, coding workflows in this company are being completed approximately 20 times faster than usual. This will lead to a massive decrease in turnaround time, which means that companies can get more done faster.
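As a quick sanity check, the two figures quoted above are consistent with each other. The short calculation below divides the original 9 weeks by the approximate 20x speedup; the 5-day working week is an assumption added here, not a figure from Freshworks.

```python
# Sanity check of the quoted speedup. "9 weeks" and "approximately 20 times"
# come from the article; the 5-day working week is an assumption.
WEEKS_BEFORE = 9
SPEEDUP = 20

calendar_days_before = WEEKS_BEFORE * 7  # 63 calendar days
working_days_before = WEEKS_BEFORE * 5   # 45 working days

calendar_days_after = calendar_days_before / SPEEDUP
working_days_after = working_days_before / SPEEDUP

print(f"{calendar_days_after:.2f} calendar days")  # 3.15 calendar days
print(f"{working_days_after:.2f} working days")    # 2.25 working days
```

Either way, the result lands in the "few days" range the CEO describes.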

The Bad — Why Your Job Is At Risk

Product Integrations

So far, we’ve just talked about programming.

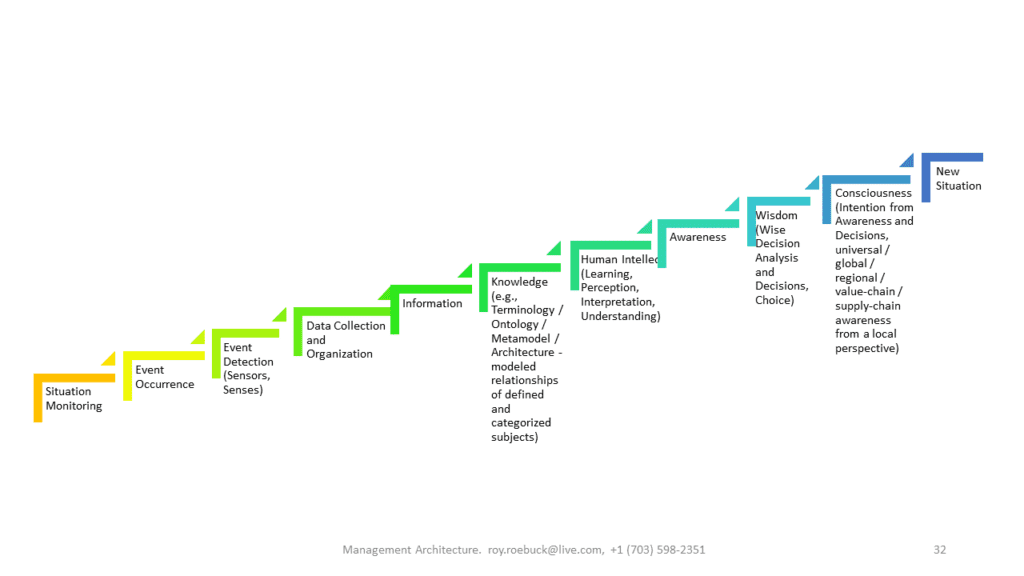

There are other aspects to a data scientist’s job — such as data preparation, analysis, visualization, and model building.

In my experience, data scientists are currently in high demand because of the wide variety of skills they are expected to have.

Apart from building statistical models and learning to code, these professionals also need to use SQL for data extraction, work with software like Tableau and PowerBI for visualization, and effectively communicate insights to stakeholders.

With LLMs like ChatGPT, however, the barrier to entering a field like data science or analytics will fall dramatically. Candidates no longer need expertise in a range of software tools, and can instead harness the power of LLMs to accomplish in minutes what would typically take hours.

For example, in a company I once worked with, I was asked to complete a timed Excel assessment since a majority of the organization’s database resided in spreadsheets. They wanted to hire someone who was able to quickly extract and analyze this data.

This requirement to hire candidates with expertise in using specific tools, however, will disappear as LLM adoption increases.

For instance, with a ChatGPT-Excel integration, you could simply highlight the cells you want to analyze and ask the LLM questions such as “What is the trend of these sales numbers over the last quarter?” or “Can you perform regression analysis?”

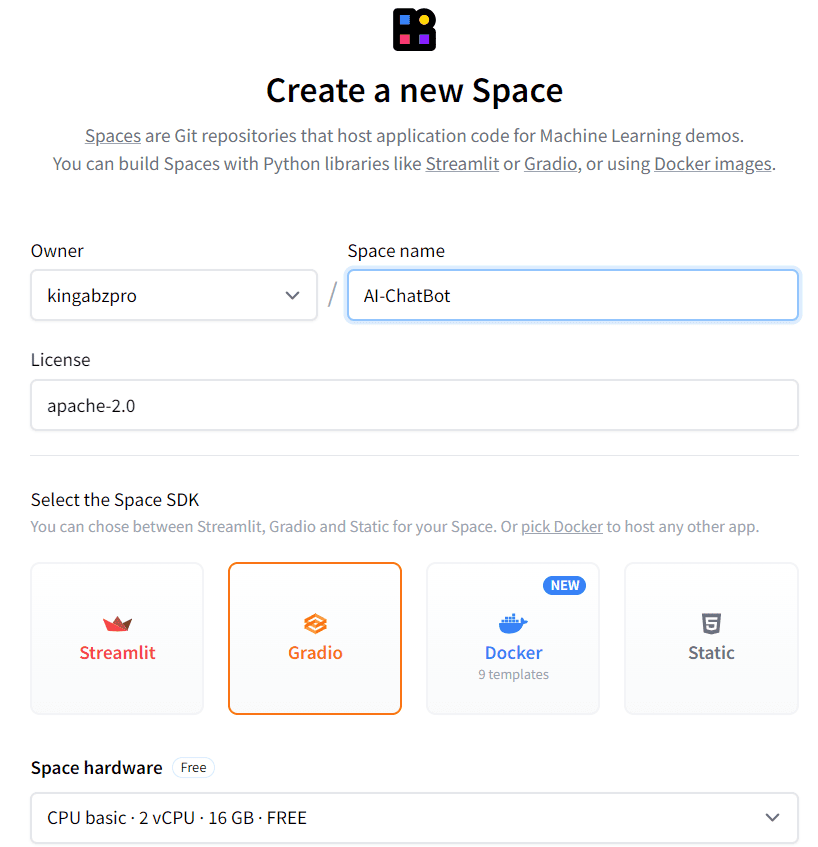

ChatGPT’s response to what an Excel integration would look like
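To make the two example questions concrete, here is a minimal sketch of the analysis such an integration would have to run behind the scenes: an ordinary least squares line fitted to the highlighted cells. The sales figures, the function names `fit_line` and `describe_trend`, and the `flat_band` threshold are all hypothetical and chosen only for illustration; this is not how any actual Excel integration is implemented.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def describe_trend(slope, flat_band=0.5):
    """Label the slope; flat_band is an arbitrary threshold for this sketch."""
    if slope > flat_band:
        return "upward"
    if slope < -flat_band:
        return "downward"
    return "flat"

# Hypothetical weekly sales for one 13-week quarter (made-up values).
weekly_sales = [120, 125, 123, 130, 134, 133, 140, 142, 145, 149, 151, 155, 160]
weeks = list(range(1, len(weekly_sales) + 1))

slope, intercept = fit_line(weeks, weekly_sales)
print(f"Trend: {describe_trend(slope)}, about {slope:.1f} units per week")
```

The point is that the underlying computation is small; what the integration removes is the need to know where it lives in the tool's menus.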

Product integrations like this will make Excel and similar software accessible to people who don’t typically use them, and the demand for experts in these tools will fall.

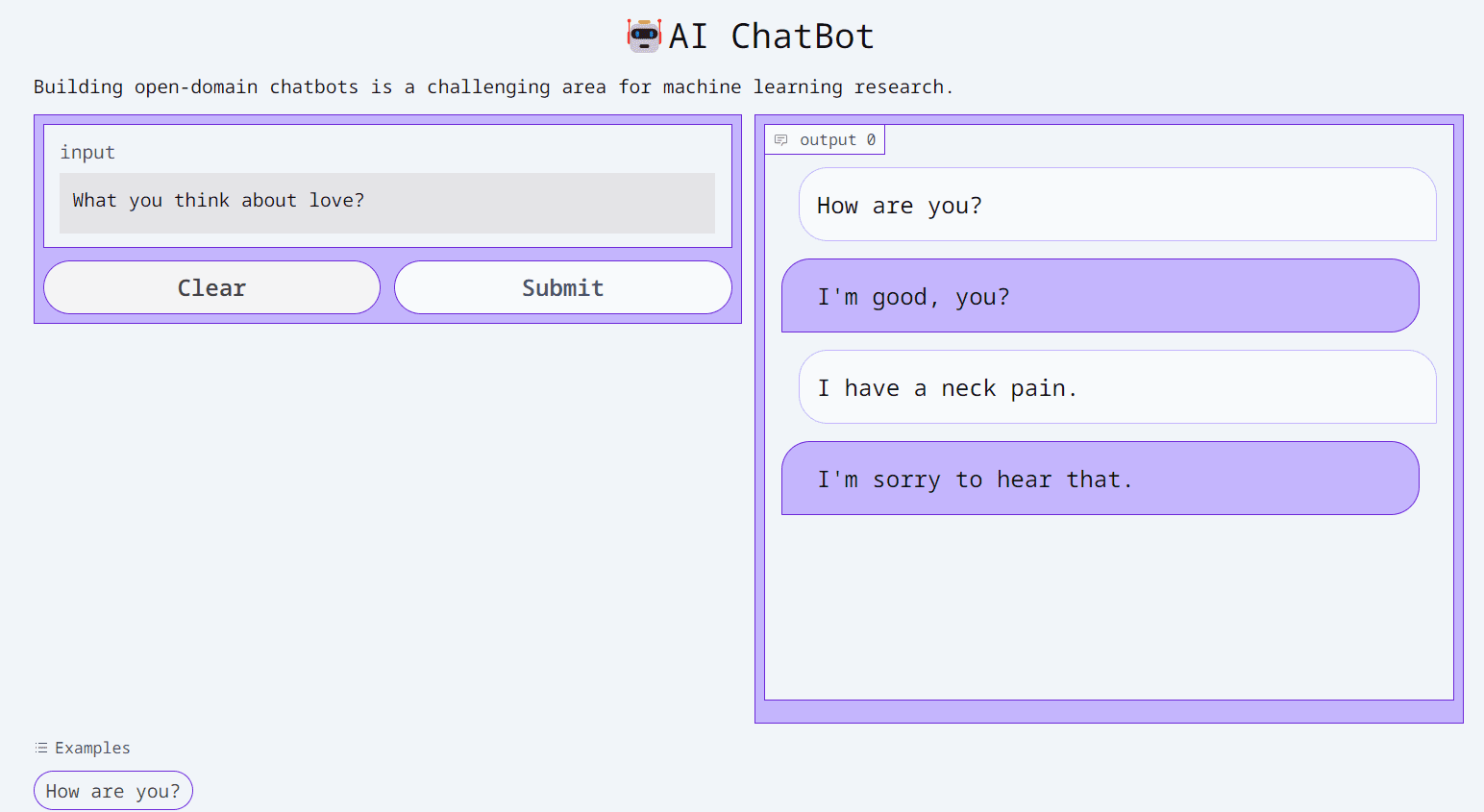

Code Plugins

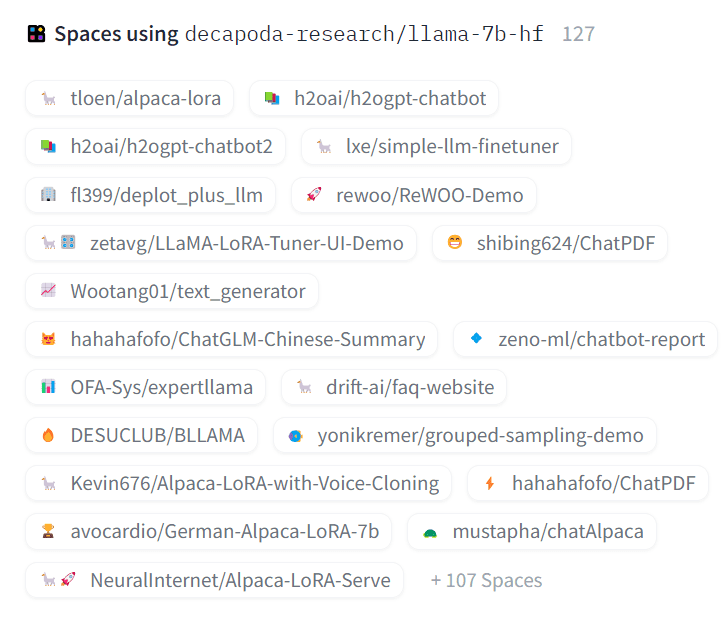

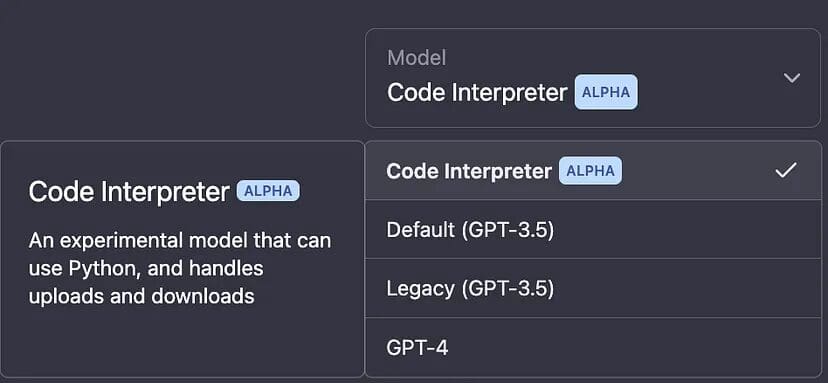

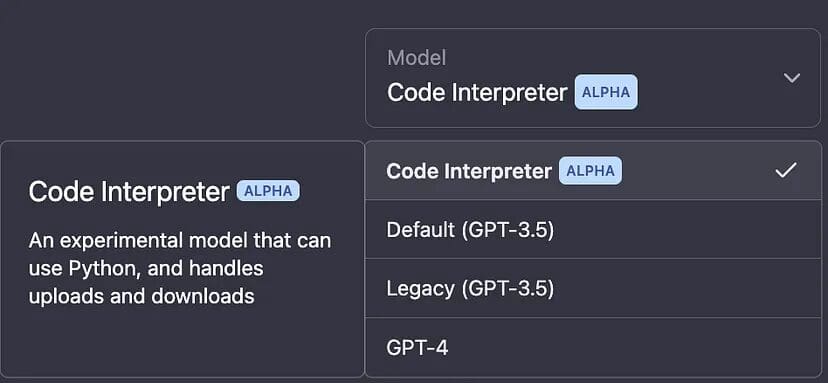

The ChatGPT code interpreter plugin is another example of how data science workflows are becoming democratized. It allows you to run Python code and analyze data in the chat.

Image by “The Latest Now” on Medium

You can upload CSV files and get ChatGPT to help you clean, analyze, and build statistical models on them.

Once you upload the data and tell it what you want to do (for instance, forecast sales numbers for the next quarter), ChatGPT will tell you the steps you can take to achieve the final outcome.

It will then proceed to do the actual analysis and modeling for you, and explain the output at each stage of the process.

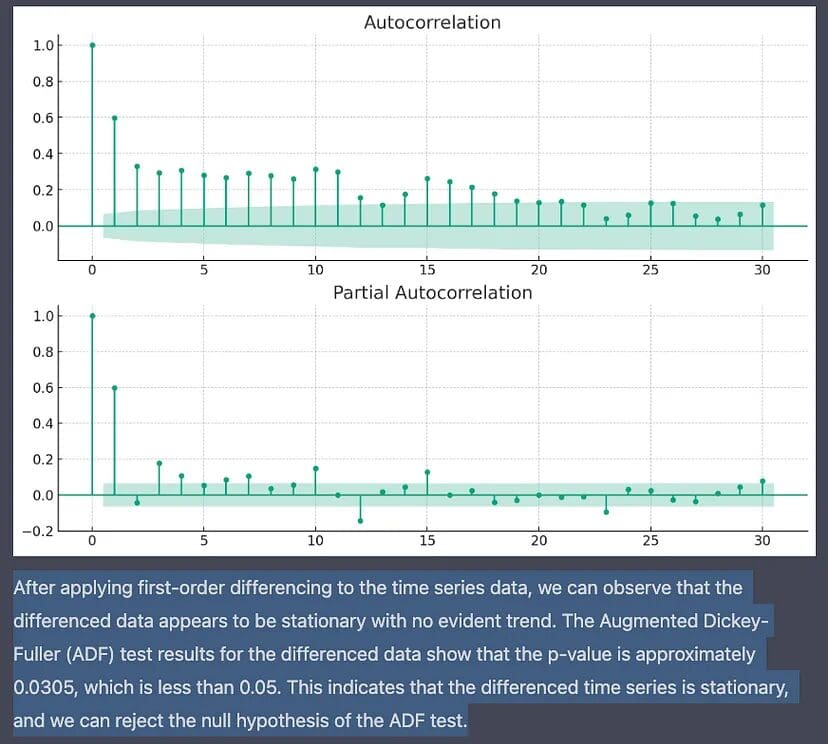

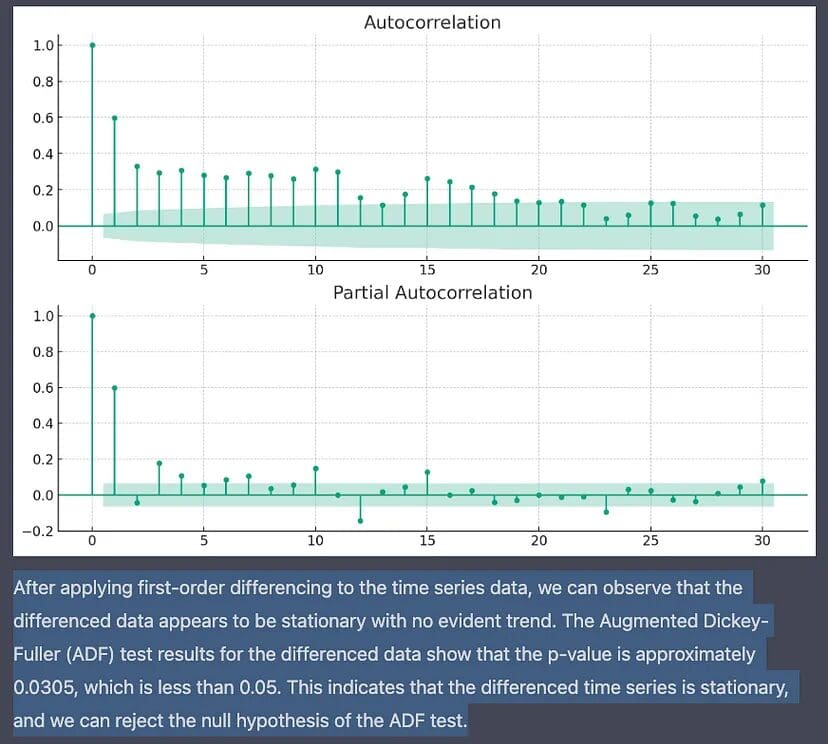

In an article by “The Latest Now” on Medium, the author asks ChatGPT’s code interpreter to predict future inflationary trends using Federal Reserve Economic Data (FRED). The model started by visualizing the current trend in the data.

It then checked the data for stationarity, transformed it, and decided to use ARIMA to perform the modeling. It was even able to find the optimal parameters to use to generate forecasts with ARIMA:

Image by “The Latest Now” on Medium

These are steps that would typically take a data scientist around 3 to 4 hours to perform, and ChatGPT was able to do them in minutes by simply ingesting the data that was uploaded by the user.

This is an impressive feat, and will dramatically reduce the amount of expertise required to build models.
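For readers unfamiliar with the workflow described above, the following is a simplified, standard-library-only sketch of its core steps: difference the series to remove the trend, fit autoregressive models of several orders, pick the order with the lowest AIC, and forecast. It is a stand-in, not the code ChatGPT generated in the article. It assumes one round of differencing is enough instead of running a formal stationarity test, it fits only the autoregressive part (no moving-average terms) using the Yule-Walker equations, and it runs on simulated data, not the FRED series. All function names are hypothetical.

```python
import math
import random

def difference(series):
    """First differences: the 'I' step of ARIMA with d=1."""
    return [b - a for a, b in zip(series, series[1:])]

def autocovariance(x, max_lag):
    """Sample autocovariances at lags 0..max_lag."""
    n = len(x)
    mu = sum(x) / n
    return [sum((x[t] - mu) * (x[t + k] - mu) for t in range(n - k)) / n
            for k in range(max_lag + 1)]

def fit_ar_by_aic(x, max_order=3):
    """Fit AR(1)..AR(max_order) via Levinson-Durbin; return the lowest-AIC fit.

    Assumes x is noisy (non-constant), so the variances below stay positive.
    """
    r = autocovariance(x, max_order)
    phi, sigma2, best = [], r[0], None
    for k in range(1, max_order + 1):
        acc = r[k] - sum(phi[j] * r[k - 1 - j] for j in range(k - 1))
        kappa = acc / sigma2  # partial autocorrelation at lag k
        phi = [phi[j] - kappa * phi[k - 2 - j] for j in range(k - 1)] + [kappa]
        sigma2 *= 1 - kappa ** 2  # innovation variance for order k
        aic = len(x) * math.log(sigma2) + 2 * k
        if best is None or aic < best[0]:
            best = (aic, k, list(phi))
    return best[1], best[2]

def forecast(series, steps, max_order=3):
    """Forecast `steps` values ahead with an AR model on the differences."""
    diffs = difference(series)
    mu = sum(diffs) / len(diffs)
    order, phi = fit_ar_by_aic(diffs, max_order)
    centered = [d - mu for d in diffs]
    level, out = series[-1], []
    for _ in range(steps):
        nxt = sum(phi[j] * centered[-1 - j] for j in range(order))
        centered.append(nxt)
        level += nxt + mu  # undo the differencing
        out.append(level)
    return order, phi, out

# Simulated data (an assumption for this sketch): the differences follow
# an AR(1) process with coefficient 0.6, so the series itself trends.
random.seed(42)
sim_diffs, prev = [], 0.0
for _ in range(500):
    prev = 0.6 * prev + random.gauss(0, 1)
    sim_diffs.append(prev)
series = [100.0]
for d in sim_diffs:
    series.append(series[-1] + d)

order, phi, preds = forecast(series, steps=4)
print("chosen AR order:", order)
print("coefficients:", [round(c, 3) for c in phi])
print("next 4 values:", [round(p, 2) for p in preds])
```

In practice a data scientist would reach for a library such as statsmodels, add a stationarity test, and validate the forecasts on held-out data; the sketch only shows that the steps themselves are mechanical enough for a tool to automate.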

So…Is Human Expertise Still Required?

Of course, regardless of how good AI gets at coding and model building, human experts are still required to oversee the process.

ChatGPT often generates incorrect code and makes wrong decisions when building statistical models. Companies still need employees who are good at statistics and programming to oversee the data science process and ensure that the model is prompted correctly.

LLMs cannot create full-fledged data products, as humans still need to perform tasks like requirement gathering, debugging, and validating the model’s output.

However, companies will not need as many people to perform these tasks as they did before.

Significant efficiency gains like the ones driven by LLMs would mean that teams can start downsizing.

Instead of getting 10 data scientists to do the job, for instance, companies can simply hire 5.

I believe that entry-level data science jobs will be the first ones to get impacted by this development since LLMs can already perform intermediate-level coding and analytical workflows.

Hiring freezes due to AI are already taking place in big tech, and we might be witnessing a scenario in which the supply of data scientists surpasses the demand for this skill.

How to AI-Proof Your Career in the Age of ChatGPT

Fortunately, it’s not all doom and gloom for us tech and data science professionals. Although LLMs are rapidly improving at tasks like programming and data analysis, they cannot replace human creativity and decision-making.

Here are some ways to AI-proof your career in the age of LLMs:

Gain Business Expertise

Organizations will continue to hire people who generate revenue for the business.

If you have domain expertise in a specific area and understand the intricacies of the company’s operations and customer needs, you are in a unique position to identify opportunities for growth.

The last thing you want is to be in competition with AI. You don’t want to be the guy managing a spreadsheet, or the person everyone approaches to create a quarterly performance report. These jobs can easily be automated and will be the first to go in the ChatGPT age.

I would argue that instead of focusing your effort on learning specific software that LLMs can master a lot faster than you can, you should learn to look at the bigger picture. Develop leadership and managerial skills, and understand how AI can be leveraged to achieve the company’s goals with data.

Embrace AI

According to Pew Research Center, only 14% of U.S. adults have actually tried ChatGPT. If you are reading this article, using ChatGPT to learn new things, and staying on top of AI advancements, then you are an early adopter.

I suggest incorporating LLMs into your workflows, using products that are integrated with AI, and learning best practices for maximizing efficiency with these models.

This way, you can stay ahead of the curve, and will better understand which parts of your job can be automated, and which ones require human intervention.

Not only will this make you a better data scientist, but when organizations do start incorporating AI into different business areas, you will be in the best position to advise on how it can be used to increase productivity.

In fact, a new role called prompt engineering has emerged recently, commanding salaries of up to $335,000. A prompt engineer is an expert at getting generative AI applications to produce the output the user wants.

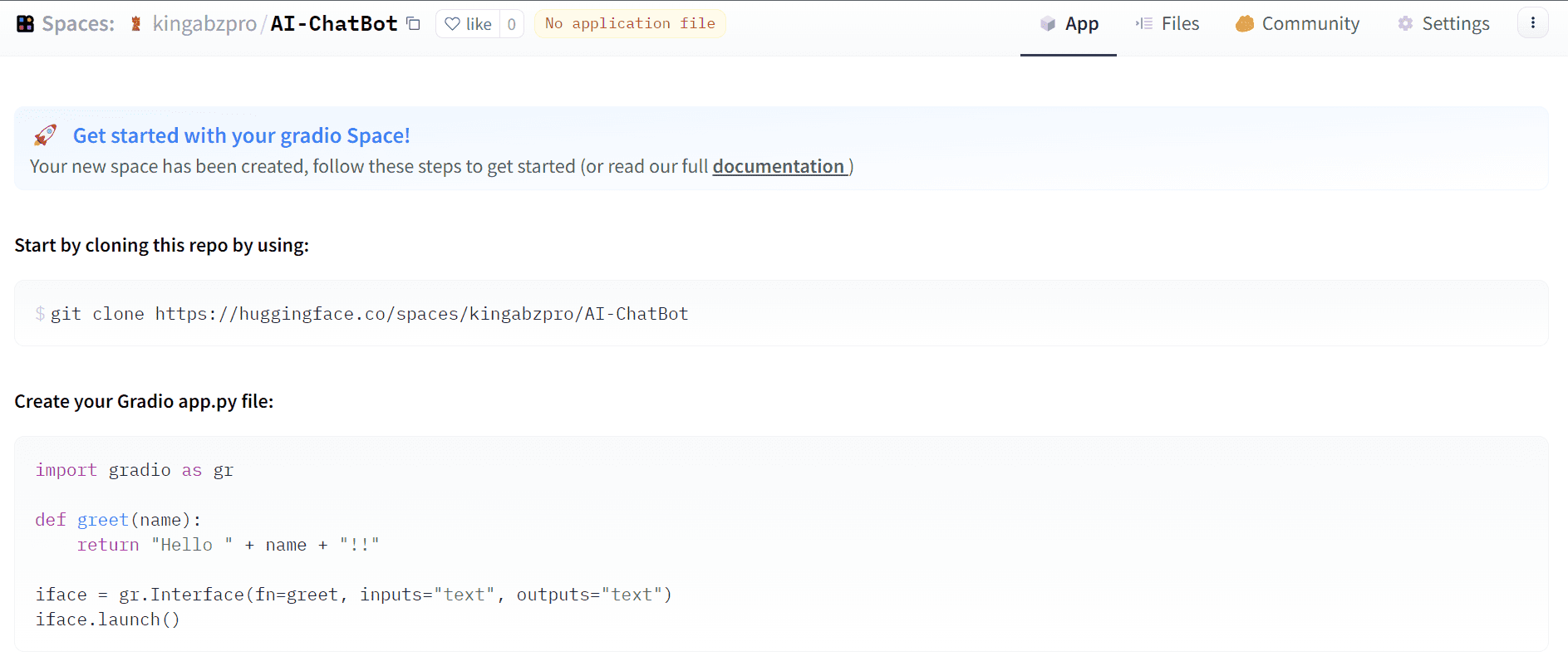

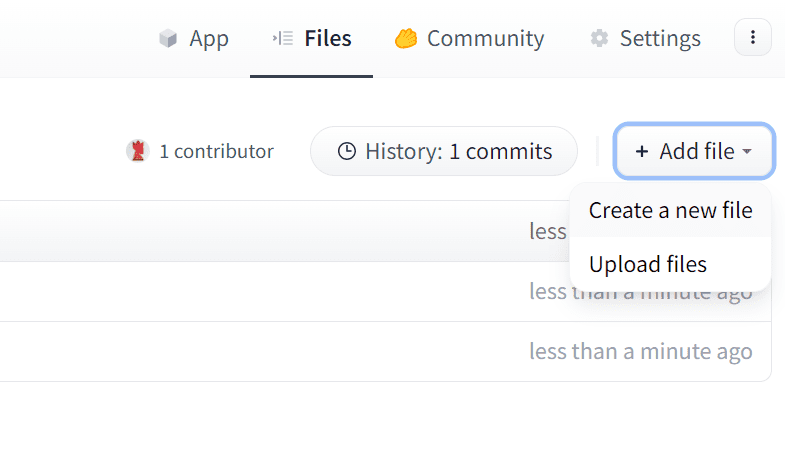

A good prompt engineer is someone who can “project manage” AI into accomplishing tasks like designing web applications.

Regardless of whether you’d like to pursue a job as a prompt engineer, incorporating AI into your existing workflows will give you a competitive edge over people who aren’t currently doing so.

Diversify Your Income

Organizations are going to start restructuring soon, as they start developing new business strategies that incorporate AI.

If this results in mass layoffs, the only way to protect yourself is to have various streams of income that do not rely solely on your full-time job.

I suggest creating a freelance portfolio — working for more than one organization and getting passive income will ensure that your future isn’t dependent on the decisions made by a single employer.

Create a Personal Brand

Finally, Harvard Business Review suggests creating a personal brand to set yourself apart from the crowd.

Medium writers like Tim Denning and Jessica Wildfire, for example, will still have a devoted base of followers and people who consume their products, even if AI is able to emulate their writing style.

This is because at the end of the day, humans enjoy real stories and want to feel connected to other individuals, and this is something that AI simply cannot provide.

Similarly, organizations will continue to hire industry leaders who are recognized in the field, as a statement of quality and branding. Some ways to build a personal brand include building a data science portfolio, creating content, and constantly upskilling.

Takeaways

Generative models are going to transform the job landscape, and fields like data science, analytics, and programming will be impacted due to the efficiency gains provided by these tools.

However, this doesn’t spell the end for data scientists. Following the strategies outlined above can help you stay ahead of the curve and ensure that you aren’t in competition with AI.

Natassha Selvaraj is a self-taught data scientist with a passion for writing. You can connect with her on LinkedIn.
