What does it take to build a company? A good product, a good team, and good funding. The first starts with an idea, and in today's world that idea is probably related to AI or, more specifically, generative AI. As for the latter two, if you look at the startups getting funded lately, there seems to be a secret recipe: a co-founder from Stanford, MIT, or Harvard.

It may not be causation, but there is some correlation between raising huge rounds and being a graduate of a top university. Each year, Crunchbase tallies which US universities produced the most founders of recently funded startups. For 2022-23, Stanford, MIT, UC Berkeley, Harvard, and Cornell had the highest number of funded founders. The 2021-22 list was the same, except that Columbia took Cornell's place. Sadly, no premier Indian institutions such as the IITs or IISc feature on it.

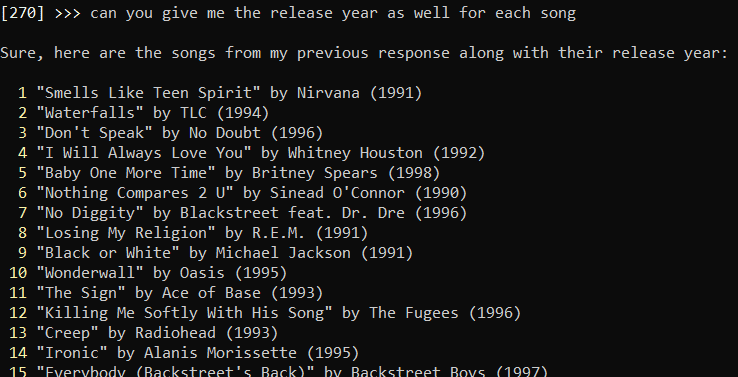

"Who's working on providing startups with a token Stanford grad cofounder?" (Bojan Tunguz, @tunguz, June 25, 2023)

It seems that, more than finding the right product, it is important to have a founder from a top university. That is hardly surprising, given that many of the biggest companies in history were built by graduates or dropouts of these universities: Mark Zuckerberg's Meta, Larry Page's Alphabet, Bill Hewlett's HP, Elon Musk's Tesla, and Bill Gates' Microsoft.

Desperate times, desperate measures

When it comes to generative AI, VCs are ready to fund anyone and anything amid the hype, especially when the founder is a top university graduate. Unfortunately, many founders have now figured this out and are ready to exploit the situation.

In March, Roshan Patel, founder of Walnut, pulled a prank to test this strategy. He created a fake LinkedIn profile named Chad Smith, complete with an AI-generated face. All he had to do was state that Chad was a Stanford dropout working on a stealth AI startup and going through Y Combinator. That was it! Within 24 hours, a VC messaged "him" to say the firm had heard about him from his buddies and wanted to learn more about the startup.

It is funny how VCs funding generative AI startups are also getting fooled by founders made with generative AI. Notably, Stability AI founder Emad Mostaque claimed to hold a master's degree in computer science from Oxford, but verification showed that he held only a bachelor's degree.

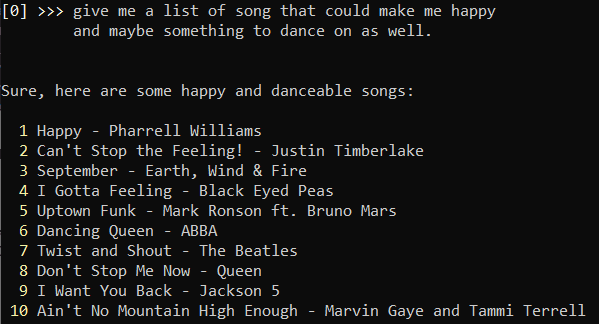

Let's provide startups with founders instead of funding

Big VCs have a chance to provide startups with founders from these top universities. On the flip side, graduates of universities such as Stanford or Harvard can join AI startups, essentially handing those startups one of the most valuable tokens for getting funded; in return, the graduates would get equity in the startup. Sounds like Stanford-as-a-service.

It is not just universities. VCs are also funding companies started by former employees of Meta FAIR, Google Brain, or Microsoft Research. The most recent example is Reka, an AI startup that came out of stealth mode and announced $58 million in funding from a group of investors. Its founders, Yi Tay, Dani Yogatama, Qi Liu, and Cyprien de Masson d'Autume, have all worked on major projects at Google, DeepMind, Meta, and Microsoft.

Similarly, Mistral.AI, the Paris-based startup, raised a record-setting $113 million seed round. That money was a bet on the founders alone: the startup was only four weeks old and had no product when it raised the round. One of the founders previously worked at DeepMind, and the other two were behind LLaMA, arguably Meta's best open-source project. Clearly, VCs see potential, and money, in these founders.

The same goes for Inflection.AI, founded by Mustafa Suleyman, who previously co-founded DeepMind. Anthropic and its CEO Dario Amodei, a former OpenAI employee, tell a similar story. Though both companies are now more than a year old, it is clear that investors see value in the founders, sometimes more than in the actual product they are building.

The biggest beneficiary of all this is NVIDIA. Coincidentally, its CEO Jensen Huang also holds a master's degree from Stanford.

The post Stanford as a Service appeared first on Analytics India Magazine.
