OpenAI recently said that it would solve AI alignment within four years, an idea that seems farfetched. And people have reacted. Meta founder Mark Zuckerberg commented that at a time when we cannot even solve cybercrime, solving superalignment is no less than climbing a mountain peak.

Meta AI chief Yann LeCun said that one cannot solve the AI alignment problem in four years. “One doesn’t just ‘solve’ the safety problem for turbojets, cars, rockets, or human societies, either,” he said, adding that engineering-for-reliability is always a process of continuous and iterative refinement.

But is the AI threat even real?

A few days ago, ‘The Terminator’ star Arnold Schwarzenegger expressed his concerns about AI taking over humanity. He claimed that ‘The Terminator’ franchise, which launched his career in 1984, predicted the future of artificial intelligence, including machines becoming self-aware and taking over. As AI continues to advance, discussions around its responsible development and potential risks are at the forefront. The concerns surrounding AI turning against humans are not unfounded.

In a blog post, Sam Altman, the co-founder and CEO of OpenAI, emphasised the significance of ensuring alignment in the development of superintelligent AGI. He acknowledged that a misaligned AGI could cause significant harm to the world, and that an autocratic regime with superior AI capabilities could cause similar damage. These remarks underscore the importance of cautious and ethical approaches in shaping the future of AI. In another blog post, he went a step further, warning that AI “could lead to the disempowerment of humanity or even human extinction”.

Earlier, in an interview with Lex Fridman, when asked if AI would kill humans, Altman said there was a chance that it would, and that it is important to acknowledge it. “If we don’t treat it as potentially real, we won’t put enough effort into solving it. And I think we have to discover new techniques to be able to solve it.”

It looks like Altman is taking his concerns seriously, as OpenAI has launched ‘Superalignment’. OpenAI plans to invest significant resources in a new research team to ensure its artificial intelligence remains safe for humans. The team will be co-led by Ilya Sutskever and Jan Leike, and the company will dedicate 20% of the compute it has secured to date to this effort.

Should we be worried?

Currently, there is no definitive answer to this question, and various experts and founders hold differing viewpoints on the matter. Altman, for instance, emphasises the importance of acknowledging the issue and actively addressing it. In contrast, Meta’s founder, Zuckerberg, takes a different stance, suggesting there is no immediate need for excessive concern. These divergent perspectives reflect the ongoing discourse surrounding the appropriate approach to handling this complex issue.

In an interview with Lex Fridman, Zuckerberg said that we are quite a few steps away from superintelligence, so the existential threat is much further out. There are more near-term risks, such as people using AI for fraud or scams, that need to be tackled now, and these are going to be a pretty big set of challenges. He is worried that people are focused on the tail risk of an existential threat instead of doing a good job with the risks that are more certain in the near term.

Along the same lines, LeCun said, “I think that the magnitude of the AI alignment problem has been ridiculously overblown and our ability to solve it is widely underestimated.” LeCun argues that for machines to take control, they would first have to “want to take control”, and that the reflexive assumption that they will inevitably dominate humans is drawn purely from science fiction.

Meanwhile, Pedro Domingos, the inventor of Markov logic networks, believes that OpenAI is going to waste billions of dollars on an unworkable solution to a fake problem.

In conclusion

Apart from OpenAI, no one else has taken the lead in addressing the issue of alignment. Many authors have expressed concerns about alignment in their columns, but most of them take their inspiration from fiction. OpenAI said that it currently doesn’t have a solution for steering or controlling a potentially superintelligent AI and preventing it from going rogue.

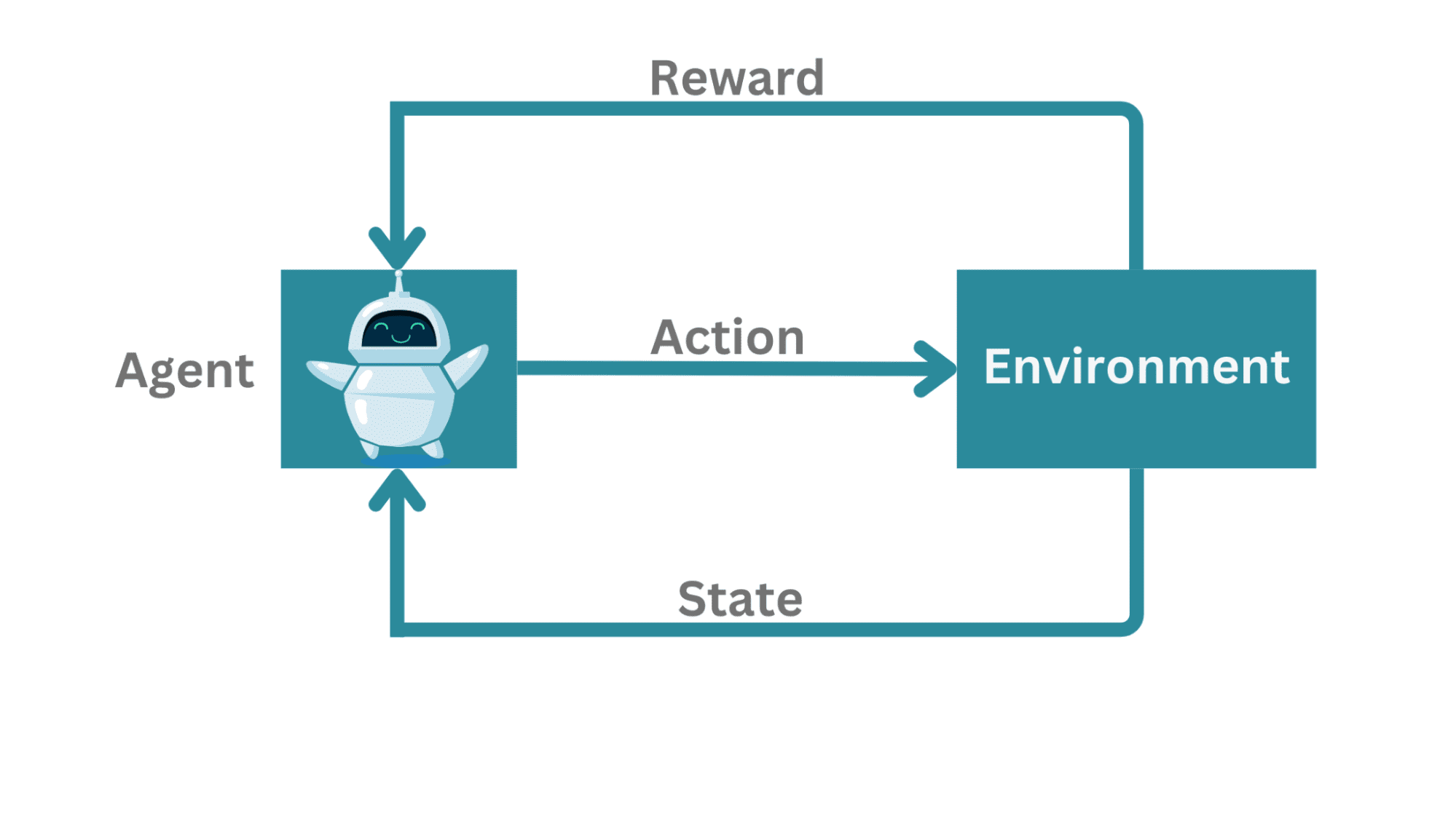

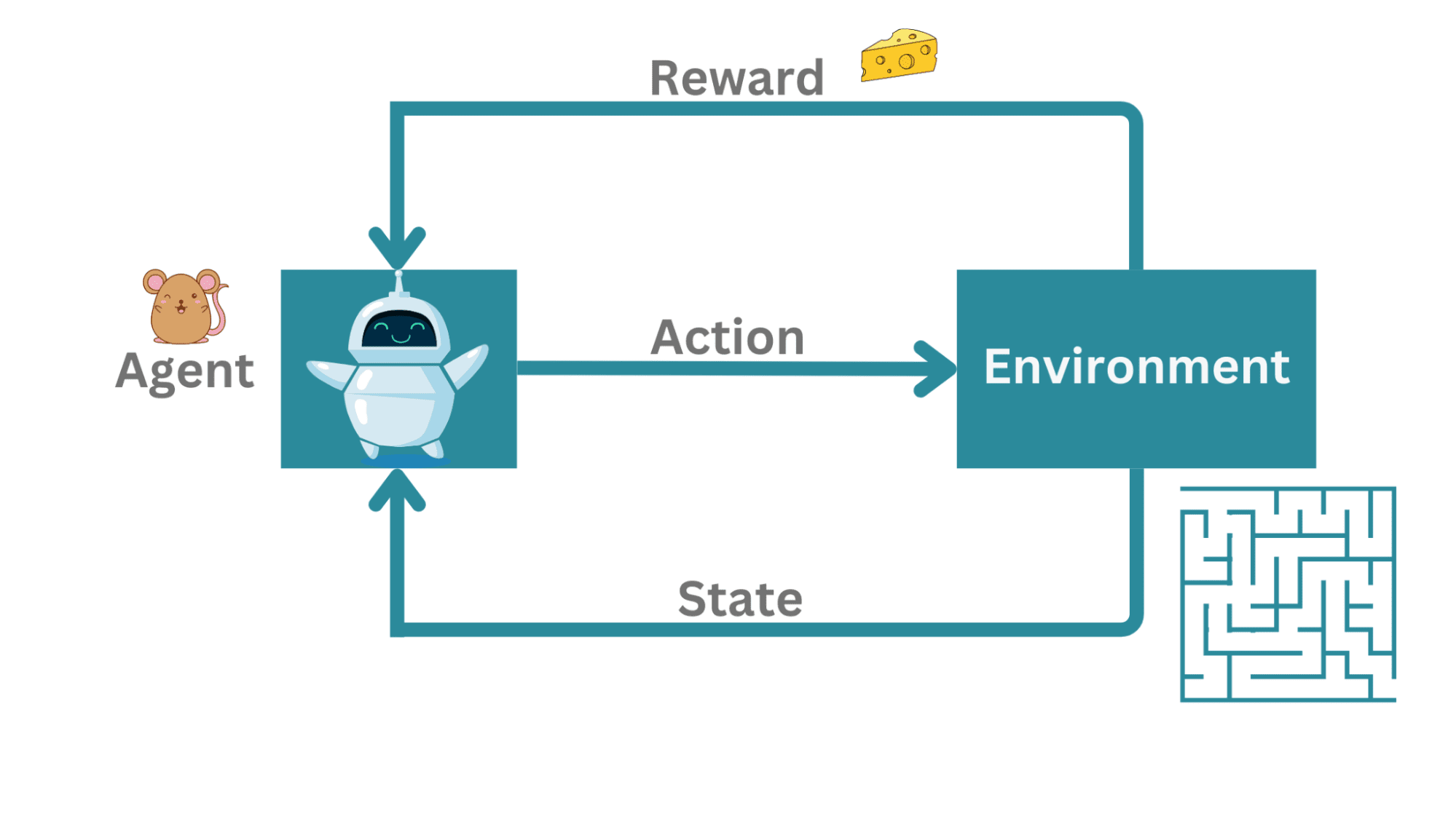

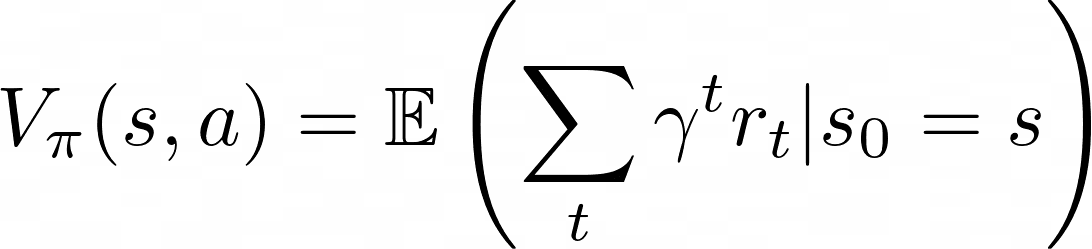

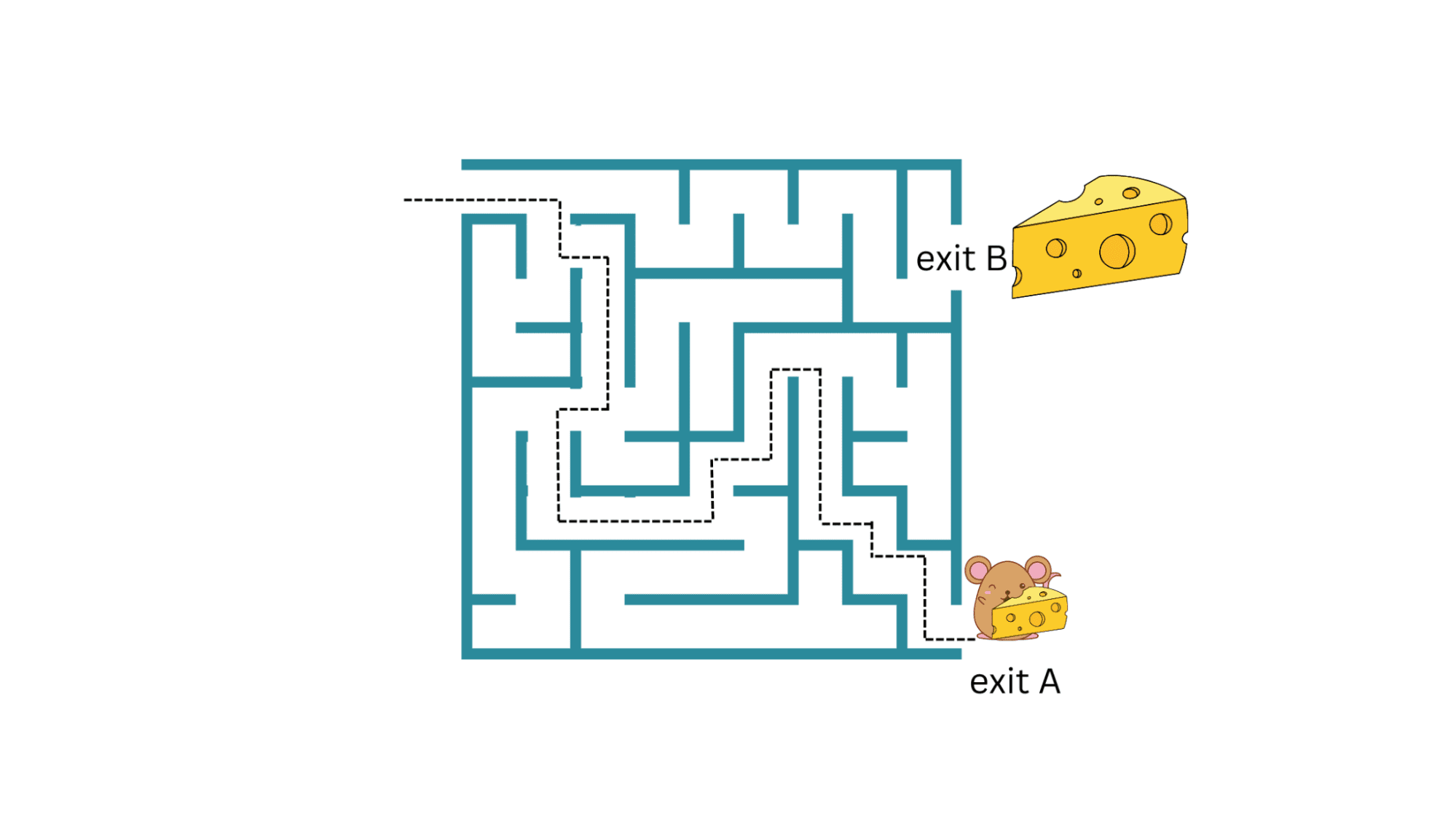

OpenAI’s present techniques for aligning AI, such as reinforcement learning from human feedback, rely on humans’ ability to supervise AI. But humans won’t be able to reliably supervise AI systems much smarter than us, so the company says it needs new scientific and technical breakthroughs.

The post OpenAI’s Pursuit of AI Alignment is Farfetched appeared first on Analytics India Magazine.