The conversation around the use cases of LLMs has been rapidly growing, supercharged by the release of Llama 2, Meta’s new open source model. Even as Meta’s Llama has been released to the public, there is still a huge barrier for entry in terms of being able to run it on local hardware. To remedy this issue and truly open up the power of Llama 2 to everyone, Meta has partnered with Qualcomm to enable the chipmaker to optimise the model for running on-device, powered by the chips’ AI capabilities.

Industry experts are predicting that open LLMs could create a new generation of AI-powered content generation, smart assistants, productivity applications, and more. Adding the capability to natively run LLMs on-device is sure to create a strong new ecosystem of AI-powered applications, akin to the app store explosion that happened with iPhones.

This move will not only democratise access to the model, but also unlock a bunch of possibilities for on-device AI processing. It also comes at a time where the consumer hardware and software industries are waking up to the possibility of AI capabilities at the edge. First spearheaded by Apple’s inclusion of a neural engine in the M1 chip, the addition of a new type of processor on personal computers will finally give developers the tools to create truly democratic AI.

What the Qualcomm-Meta partnership entails

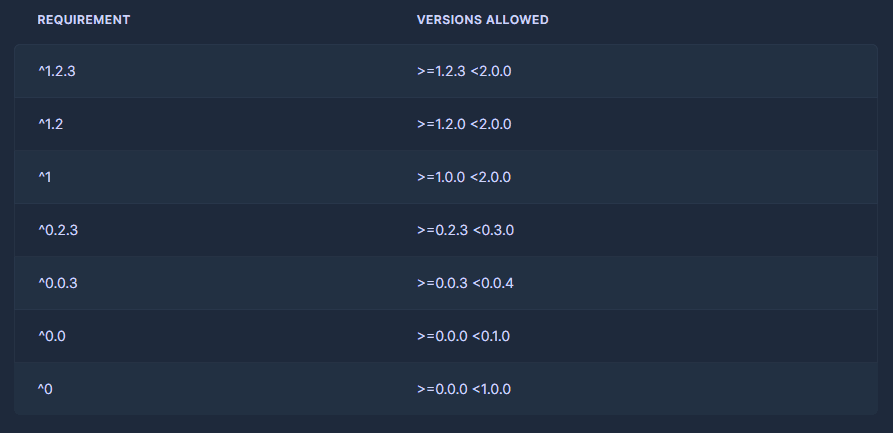

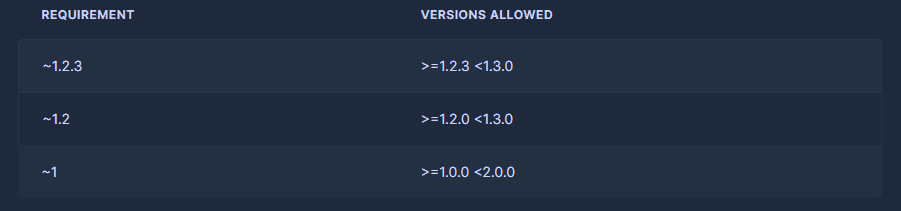

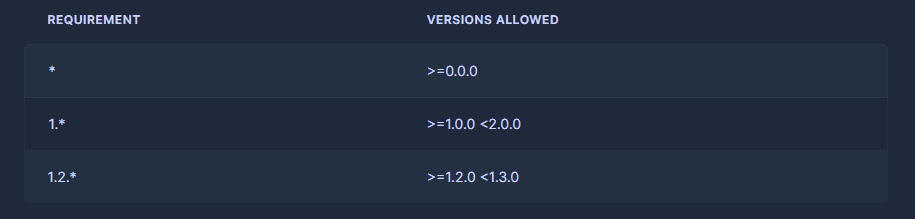

For context, Qualcomm is currently creating a new set of AI-enabled chips under the Snapdragon platform. Using what it calls the Hexagon processor, the chipmaker equips its chips with various AI capabilities. Using an approach called micro tile inferencing, Qualcomm is able to integrate tensor cores, dedicated processing for SegNet, scalar, and vector workloads into an AI processor, which is then integrated into a Snapdragon mobile chip.

As part of its partnership with Meta, Qualcomm will make Llama 2 implementations available on-device, harnessing the capabilities of the new AI-enabled Snapdragon chips. Since the model will be running on-device, developers can not only cut down on cloud computing costs for their applications, but also bring a higher degree of privacy to users, as no data is in transit to servers off-device.

Running the models on the device also brings the additional benefits of being able to use generative AI without connection to the Internet. Moreover, the models can also be personalised to the users’ preferences as it ‘lives’ on the device. Llama 2 will also fit neatly into the Qualcomm AI Stack, a set of developer tools made to further optimise running AI models on-device.

“We applaud Meta’s approach to open and responsible AI and are committed to driving innovation and reducing barriers-to-entry for developers of any size by bringing generative AI on-device,” said Durga Malladi, Qualcomm’s senior vice president and general manager of technology, planning and edge solutions businesses.

Qualcomm has also worked closely with Meta in the past, mainly to make chips for its Oculus Quest VR headsets. The company has also tied up with Microsoft to help scale on-device AI workloads. As part of a partnership with Qualcomm and other chipmakers like Intel, AMD, and NVIDIA, Microsoft introduced the new Hybrid AI Loop toolkit to support AI development at the edge. Taking a zoomed out look at the edge AI hardware and software ecosystem, it is clear that the industry is moving towards AI at the edge, and Llama 2 might have a bigger role to play than anyone thinks.

Setting the open source world on fire

It seems that Meta has learnt a lot from the leak of the first LLaMA model. While the first iteration of this LLM was only available to researchers and academic institutions, the model and its weights were leaked on the Internet through 4chan. This resulted in an explosion of open source LLM innovation using LlaMa as the base model.

In just under a month of its launch, the open source community had already bettered LLaMA in every way possible. Researchers at Stanford University created a version of LLaMA that could be trained for a cost of $600, which then led to the development of many other faster and lighter versions. Most, if not all of these versions, could be run on-device, giving the world access to their very own LLMs.

One developer ported the LLM model to C++, which then resulted in a version of the model that could be run on a phone. The project, dubbed LLaMA.cpp, was fueled by the open source community, resulting in the model’s weights being quantised. This innovation allowed it to run on a Google Pixel 5, albeit generating only 1 token per second.

As part of the latest partnership with Meta, Snapdragon could receive information about the inner workings of the model. This would enable the chipmaker to bake in certain optimisations, allowing Llama 2 to run better than other models. Considering the 2024 release window, it is also likely that Qualcomm will likely explore other partnerships to coincide with the launch of its Snapdragon 8 Gen 3 chip.

The open source community is also sure to contribute its fair share to the (almost) completely open Llama 2. When combined with huge industry momentum for on-device AI, this move is the first of many to support a vibrant on-device AI ecosystem.

The post Meta-Qualcomm Partnership will Bring Llama 2 to the Masses appeared first on Analytics India Magazine.