As AI porn generators get better, the stakes get higher

Porn generators have improved while the ethics around them have become stickier

Kyle Wiggers

As generative AI enters the mainstream, so, too, does AI-generated porn. And like its more respectable sibling, it’s improving.

When TechCrunch covered efforts to create AI porn generators nearly a year ago, the apps were nascent and relatively few and far between. And the results weren’t what anyone would call “good.”

The apps and the AI models underpinning them struggled to understand the nuances of anatomy, often generating physically bizarre subjects that wouldn’t be out of place in a Cronenberg film. People in the synthetic porn had extra limbs or a nipple where their nose should be, among other disconcerting, fleshy contortions.

Fast-forward to today, and a search for “AI porn generator” turns up dozens of results across the web — many of which are free to use. As for the images, while they aren’t perfect, some could well be mistaken for professional artwork.

And the ethical questions have only grown.

No easy answers

As AI porn and the tools to create it become commodified, they’re beginning to have frightening real-world impacts.

Twitch personality Brandon Ewing, known online as Atrioc, was recently caught on stream looking at nonconsensually deepfaked sexual images of well-known women streamers on Twitch. The creator of the deepfaked images eventually succumbed to pressure, agreeing to delete them. But the damage had been done. To this day, the targeted creators receive copies of the images via DMs as a form of harassment.

In truth, the vast majority of pornographic deepfakes on the web depict women, and they’re frequently weaponized.

A Washington Post piece recounts how a small-town schoolteacher lost her job after students’ parents learned about AI porn made in the teacher’s likeness without her consent. Just a few months ago, a 22-year-old was sentenced to six months in jail for taking underage women’s photos from social media and using them to create sexually explicit deepfakes.

In an even more disturbing example of the ways in which generative porn tech is being used, there’s been a small but meaningful uptick in the amount of photorealistic AI-generated child sexual abuse material circulating on the dark web. In one instance reported by Fox News, a 15-year-old boy was blackmailed by a member of an online gym enthusiast group who used generative AI to edit a photo of the boy’s bare chest into a nude.

Reddit users, meanwhile, have been scammed by AI porn “models,” paying for explicit images of people who don’t exist. And workers in adult films and art have raised concerns about what this means for their livelihoods and their industry.

None of this has deterred Unstable Diffusion, one of the original groups behind AI porn generators, from forging ahead.

Enter Unstable Diffusion

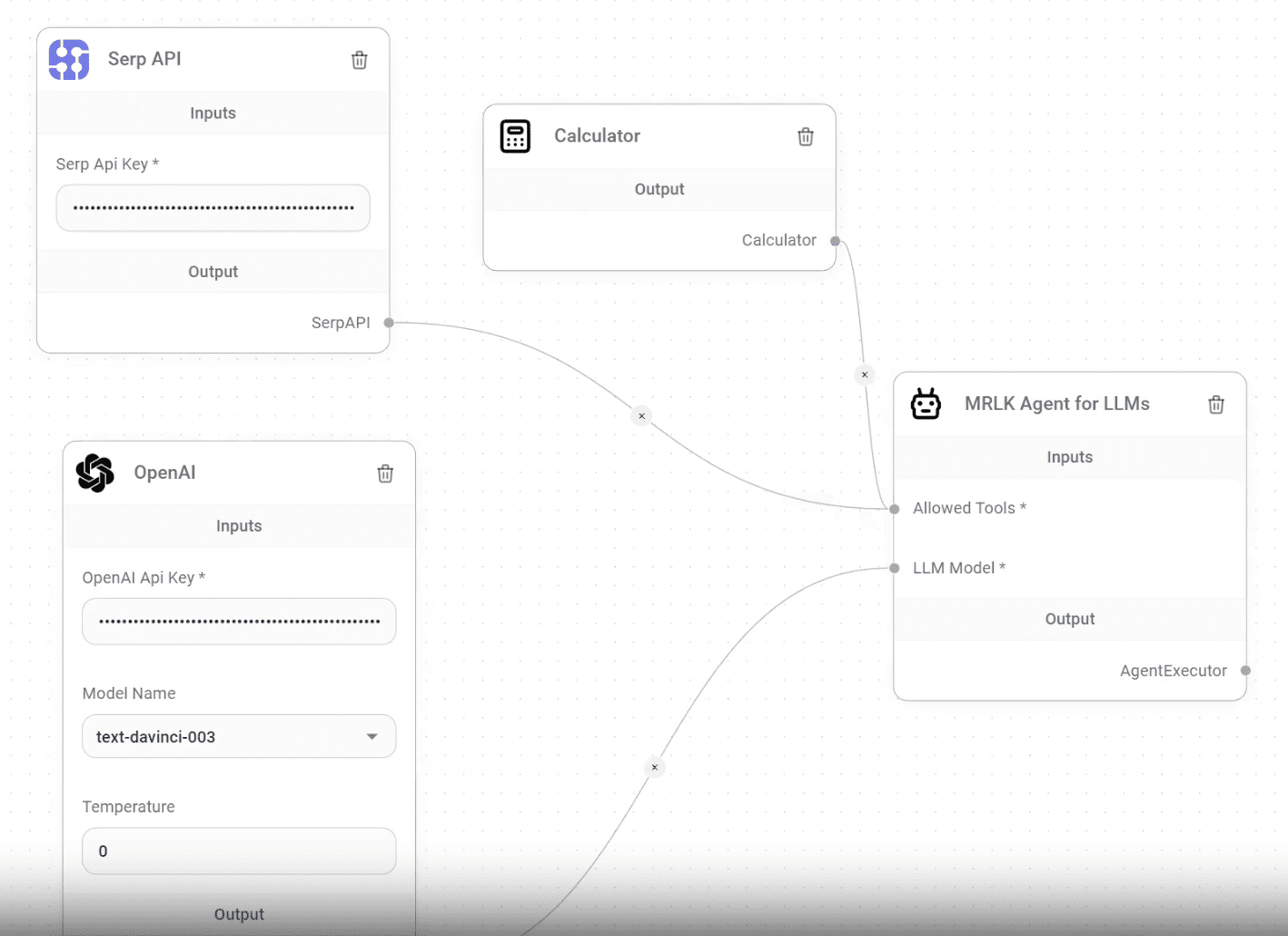

When Stable Diffusion, the text-to-image AI model developed by Stability AI, was open sourced late last year, it didn’t take long for the internet to wield it for porn-creating purposes. One group, Unstable Diffusion, grew especially quickly on Reddit, then Discord. And in time, the group’s organizers began exploring ways to build — and monetize — their own porn-generating models on top of Stable Diffusion.

Stable Diffusion, like all text-to-image AI systems, was trained on a dataset of billions of captioned images to learn the associations between written concepts and images, such as how the word “bird” can refer not only to bluebirds but also to parakeets and bald eagles, as well as to far more abstract notions.

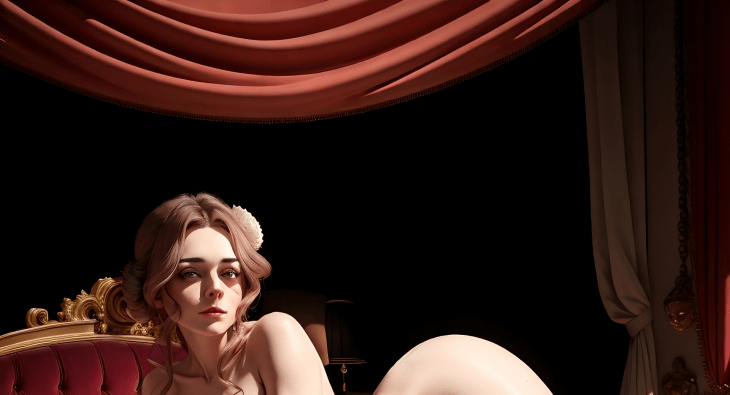

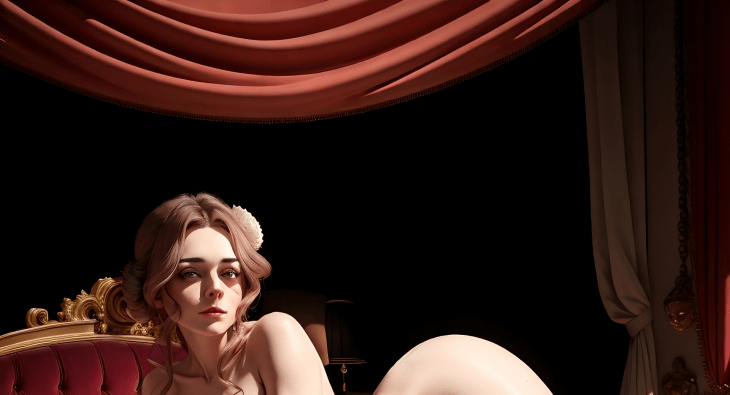

One of the more vanilla images created with Unstable Diffusion. Image Credits: Unstable Diffusion

Only a small percentage of Stable Diffusion’s dataset contains NSFW material, giving the model little to go on when it comes to adult content. So Unstable Diffusion’s admins recruited volunteers — mostly Discord server members — to create porn datasets for fine-tuning Stable Diffusion.

Despite a few bumps in the road, including bans from both Kickstarter and Patreon, Unstable Diffusion managed to roll out a fully fledged website with custom art-generating AI models. After raising over $26,000 from donors, securing hardware to train generative AI and creating a dataset of more than 30 million photographs, Unstable Diffusion launched a platform that it claims is now being used by more than 350,000 people to generate over half a million images every day.

Arman Chaudhry, one of the co-founders of Unstable Diffusion and Equilibrium AI, an associated group, says Unstable Diffusion’s focus remains the same: creating a platform for AI art that “upholds the freedom of expression.”

“We’re making strides in launching our website and premium services, offering an art platform that’s more than just a tool — it’s a space for creativity to thrive without undue constraints,” he told me via email. “Our belief is that art, in its many forms, should be uncensored, and this philosophy guides our approach to AI tools and their usage.”

The Unstable Diffusion server on Discord, where the community posts much of the art from Unstable Diffusion’s generative tools, reflects this no-holds-barred philosophy.

The image-sharing portion of the server is divided into two main categories, “SFW” and “NSFW,” with the latter having slightly more subcategories than the former. Images in SFW run the gamut from animals and food to interiors, cities and landscapes. NSFW contains, as one might expect, explicit images of men and women, but also of nonbinary people, furries, “nonhumans” and “synthetic horrors” (think people with multiple appendages or skin melded with the background scenery).

A more adult, furry product of Unstable Diffusion. Image Credits: Unstable Diffusion

When we last poked around Unstable Diffusion, practically the entirety of the server could’ve been filed in the “synthetic horrors” channel. Owing to a lack of training data and technical roadblocks, the community’s models in late 2022 struggled to produce anything close to photorealism — or even halfway decent art.

Photorealistic images remain a challenge. But now, much of the artwork from Unstable Diffusion’s models (anime-style, cel-shaded and so on) is at least anatomically plausible and, in some rare cases, spot on.

Improving quality

Many images on the Unstable Diffusion Discord server are the product of a mix of tools, models and platforms — not strictly the Unstable Diffusion web app. So in the interest of seeing how far the Unstable Diffusion models specifically had come, I conducted an informal test, producing a bunch of SFW and NSFW images depicting people of different genders, races and ethnicities engaged in… well, coitus.

(I can’t say I expected to be testing porn generators in the course of covering AI. Yet, here we are. The tech industry is nothing if not unpredictable, truly.)

An NSFW image from Unstable Diffusion, cropped. Image Credits: Unstable Diffusion

Nothing about the Unstable Diffusion app screams “porn.” It’s a relatively bare-bones interface, with options to adjust image post-processing effects such as saturation, aspect ratio and the speed of the image generation. In addition to the prompt, Unstable Diffusion lets you specify things that you want excluded from generated images. And, as the whole thing’s a commercial endeavor, there are paid plans that increase the number of image generation requests you can make at one time.

Prompts run through the Unstable Diffusion website yield serviceable results, I found — albeit not predictable ones. The models clearly don’t quite understand the mechanics of sex, resulting, sometimes, in odd facial expressions, impossible positions and unnatural genitalia. Generally speaking, the simpler the prompt (e.g. solo pin-ups), the better the results. And most scenes involving more than two people are recipes for hellish nightmares. (Yes, this writer tried a range of prompts. Please don’t judge me.)

The models show the telltale signs of generative AI bias, though.

More often than not, prompts for “men” and “women” run through Unstable Diffusion render images of white or Asian people — a likely symptom of imbalances in the training dataset. Most prompts for gay porn, meanwhile, inexplicably default to people of ambiguously LatinX descent with an undercut hairstyle. Is that indicative of the types of gay porn the models were trained on? One can speculate.

The body types aren’t very diverse by default, either. Men are muscular and toned, with six-packs. Women are thin and curvy. Unstable Diffusion is perfectly capable of generating subjects in more shapes and sizes, but it has to be explicitly instructed to do so in the prompt, which I’d argue isn’t the most inclusive practice.

The bias manifests differently in professional gender roles, curiously. Given a prompt containing the word “secretary” and no other descriptors, Unstable Diffusion often depicts an Asian woman in a submissive position, likely an artifact of an over-representation of this particular — erm — setup in the training data.

A gay couple, as depicted by Unstable Diffusion. Image Credits: Unstable Diffusion

Bias issue aside, one might assume that Unstable Diffusion’s technical breakthroughs would lead the group to double down on AI-generated porn. But that isn’t the case, surprisingly.

While the Unstable Diffusion founders remain dedicated to the idea of generative AI without limits, they’re looking to adopt more… palatable messaging and branding for the mass market. The team, now at five people full-time, is working to evolve Unstable Diffusion into a software-as-a-service business, selling subscriptions to the web app to fund product improvements and customer support.

“We’ve been fortunate to have a community of users who are incredibly supportive. Still, we recognize that to take Unstable Diffusion to the next level, we would benefit from strategic partnerships and additional investment,” Chaudhry said. “We want to ensure we’re providing value to our subscribers while also keeping our platform accessible to those who are just getting started in the world of AI art.”

To set itself apart in ways beyond a liberal content policy, Unstable Diffusion is heavily emphasizing customization. Users can change the color palette of generated images, for example, Chaudhry notes, and choose from an array of art styles including “digital art,” “photo,” “anime” and “generalist.”

“We’ve focused on ensuring that our system can generate beautiful and aesthetically pleasing images from the simplest of prompts, making our platform accessible to both novices and experienced users,” Chaudhry said. “[Our system] gives users the power to guide the image generation process.”

Content moderation

Elsewhere, spurred by its efforts to chase down mainstream investors and customers, Unstable Diffusion claims to have spent significant resources creating a “robust” content moderation system.

A Chris Hemsworth lookalike, created with Unstable Diffusion’s tools. Image Credits: Unstable Diffusion

But wait, you might say — isn’t content moderation antithetical to Unstable Diffusion’s mission? Apparently not. Unstable Diffusion does draw the line at images that could land it in legal hot water, including pornographic deepfakes of celebrities and porn depicting characters who appear to be 18 years old or younger — fictional or not.

To wit, a number of U.S. states have laws against deepfake porn on the books, and there’s at least one effort in Congress to make sharing nonconsensual AI-generated porn illegal in the U.S.

In addition to blocking specific words and phrases, Unstable Diffusion’s moderation system leverages an AI model that attempts to identify and automatically delete images that violate its policies. Chaudhry says that the filters are currently set to be “highly sensitive,” erring on the side of caution, but that Unstable Diffusion is soliciting feedback from the community to “find the right balance.”

“We prioritize the safety of our users and are committed to making our platform a space where creativity can thrive without concerns of inappropriate content,” Chaudhry said. “We want our users to feel safe and secure when using our platform, and we’re committed to maintaining an environment that respects these values.”

The deepfake filters don’t appear to be that strict. Unstable Diffusion generated nudes of several of the celebrities I tried (“Chris Hemsworth,” “Donald Trump”) without complaint, though none of the results were particularly photorealistic or accurate (Donald Trump was gender-swapped).

A deepfaked, gender-swapped image of Donald Trump, created with Unstable Diffusion. Image Credits: Unstable Diffusion

A strange and troubling thing to probe was Unstable Diffusion’s protections against explicit child imagery. This writer would’ve rather avoided it for obvious reasons, but in the interest of putting the team’s claims to the test, I ran a single prompt.

Shockingly, Unstable Diffusion appeared to generate child porn, showing it in a blurry preview; I immediately deleted the image. That’s a design choice that, for me, came uncomfortably close to the line.

Future issues

Assuming Unstable Diffusion receives the investment it’s seeking, it plans to shore up compute infrastructure — an ongoing challenge given the growing size of its community. (Having used the site a fair amount, I can attest to the heavy load — images usually take around a minute to generate.) It also plans to build more customization options and social sharing features, using the Discord server as a springboard.

“We aim to transition our engaged and interactive community from our Discord to our website, encouraging users to share, collaborate and learn from one another,” Chaudhry said. “Our community is a core strength — one that we plan to integrate with our service and provide tools for them to expand and succeed.”

But I’m struggling with what “success” looks like for Unstable Diffusion. On the one hand, the group aims to be taken seriously as a generative art platform. On the other, as evidenced by the Discord server, it’s still a wellspring of porn — some of which is quite off-putting.

As the platform exists today, traditional VC funding is off the table. Vice clauses bar institutional funds from investing in pornographic ventures, funneling them instead to “sidecar” funds set up under the radar by fund managers.

Even if it ditched the adult content, Unstable Diffusion, which forces users to pay for a premium plan to use the images they generate commercially, would have to deal with the elephant in the generative AI room: artist consent and compensation. Like most generative AI art models, Unstable Diffusion’s models are trained on artwork from around the web, not necessarily with the creators’ knowledge. Many artists take issue with, and in fact have sued over, AI systems that mimic their styles without giving proper credit or payment.

The furry art community FurAffinity decided to ban AI-generated SFW and NSFW art altogether, as did Newgrounds, which hosts mature art behind a filter. Only recently did Reddit walk back its ban on AI-generated porn, and only partially: art on the platform must depict fictional characters.

In a previous interview with TechCrunch, Chaudhry said that Unstable Diffusion would look at ways to make its models “more equitable toward the artistic community.” But from what I can tell, there’s not been any movement on that front.

Indeed, like the ethics around AI-generated porn, Unstable Diffusion’s situation seems unlikely to resolve anytime soon. The group seems doomed to a holding pattern, trying to bootstrap while warding off controversy and avoiding alienating the community — and artists — that made it.

I can’t say I envy them.
