Dentsu’s prominent customer experience management (CXM) company Merkle has introduced Merkle GenCX, a new service that employs generative AI to enhance customer experiences significantly. Developed within dentsu’s robust Azure OpenAI framework and a secure private environment, this solution utilises AI to analyse substantial amounts of brands’ internal data. This enables the creation of connected customer experiences by gaining deeper insights into customer interactions, behaviors, sentiments, and engagements.

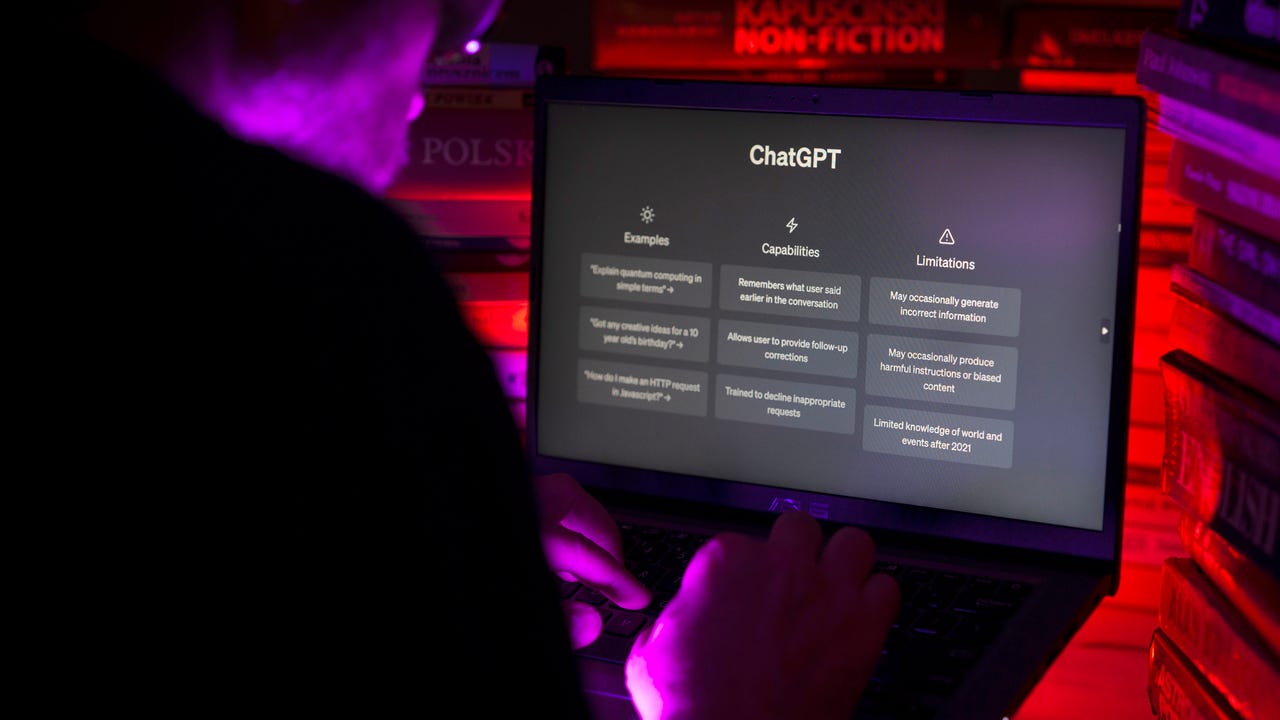

As data continues to expand exponentially and the demand for personalised experiences rises, brands are confronted with the task of effectively utilising their own data. Merkle’s GenCX solution addresses this challenge by constructing extensive knowledge models and harnessing the capabilities of large language models (LLMs) using its clients’ exclusive data resources. The resulting insights drive improvements in targeting, audience segmentation, creative development, and campaign recommendations through an intuitive chat-based interface. This not only enhances the speed and accuracy of results but also helps marketers make decisions more efficiently.

Under the Hood

Generative AI models, like those used by Merkle GenCX, are capable of being trained on extensive sets of performance data. They can effectively analyse the complex relationships among various factors, allowing marketers to extract valuable insights from the data. Through the integration of AI-driven insights and decision-making into every customer interaction, brands can enhance the quality of customer experiences. Merkle GenCX harnesses this potential to swiftly create data-driven target audiences and segments, and it can extract valuable business insights from natural language in real time.

As the influence of AI continues to expand, brands have a significant opportunity to utilise this technology for crafting personalised and relevant experiences on a large scale. By drawing on clients’ own first-party data, Merkle GenCX empowers them to engage with their customers on a more human level, granting them a distinctive competitive edge. Shirli Zelcer, the global head of analytics and data platforms at Merkle, highlights how GenCX enables brands to capitalise on AI, ultimately fostering meaningful connections and gaining a prominent market advantage.

Merkle’s AI & Analytics Play

“Back in the day, we used machine learning methodologies mostly in the predictive modelling space. And now, we use it for natural language processing, image recognition, next-best actions and decisions, and personalised customer experiences. AI has a role in all those different things. It’s not just about modelling anymore, it’s really about taking all of the data from every touch point and being able to process and analyse it and use it in different ways,” said Shirli Zelcer, global head of analytics, Merkle.

According to Zelcer, the ethical aspects of AI are not discussed enough. Before machine learning and AI became prevalent, model-building processes were more transparent: one could easily comprehend the data used, the attributes within the model, their relationships and interactions, and their impact on the final algorithm. With the introduction of newer methodologies like machine learning, she said, these considerations were neglected.

She added that in the present cultural context, ensuring the absence of underlying bias has gained significant importance. In machine learning and AI, however, attributes incorporated into models can inadvertently represent biases, or variables might combine unexpectedly, resulting in unintended bias.

Trusted by Fortune 1000 corporations and global non-profits, Merkle is headquartered in Columbia, Maryland, and operates more than 50 offices spanning the Americas, EMEA, and APAC regions. With a workforce exceeding 14,000 individuals, Merkle combines data, technology, and analytics with consumer insights to craft highly personalised marketing approaches. The company also draws on specialised marketing professionals spanning consulting, creativity, media, analytics, data, identity, CX/commerce, technology, and loyalty and promotions to fine-tune its solutions.


The post Merkle Introduces Generative AI Powered GenCX to Revamp Customer Experiences appeared first on Analytics India Magazine.