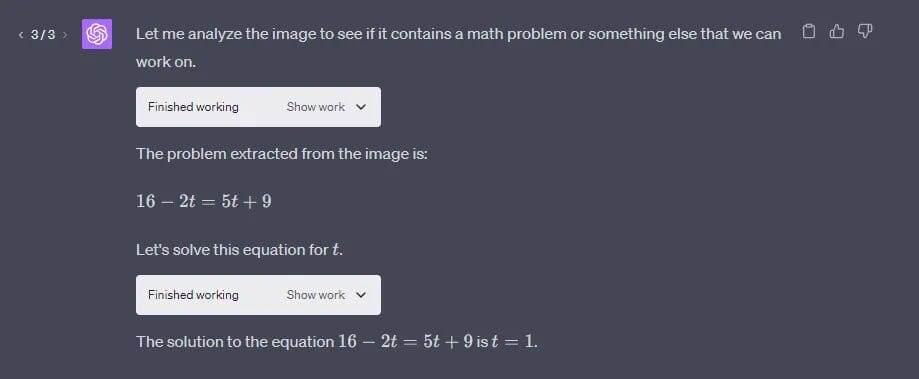

The drama continues. Microsoft seems to be messing with OpenAI. In a not so usual post, Altman clarified on X, saying that OpenAI doesn’t use API-submitted data to train or improve models unless a user explicitly opt-in. But, why?

Update :

Microsoft has deleted the Azure ChatGPT repo. Sam Altman is posting to clarify that the data collected in ChatGPT was safe and is not used to train any new model.

Big confusion among OpenAI users.— zakaria sabti (@SabtiZakaria) August 15, 2023

Microsoft, while announcing Azure ChatGPT, surprisingly had accepted shortcomings of OpenAI’s ChatGPT, in a now deleted post.

“ChatGPT risks exposing confidential intellectual property. One option is to block corporate access to ChatGPT, but people always find workarounds,” said Microsoft.

Further, it said: “ChatGPT on Azure solution accelerator is our enterprise option. This solution provides a similar user experience to ChatGPT but offered as your private ChatGPT.”

When AIM reached out to Microsoft, it denied posting Azure ChatGPT saying GitHub project operates on an open-source model, inviting contributions and suggestions from various members of the developer community. “There is no product known as ‘Azure ChatGPT’ currently being offered by Microsoft”, the company said.

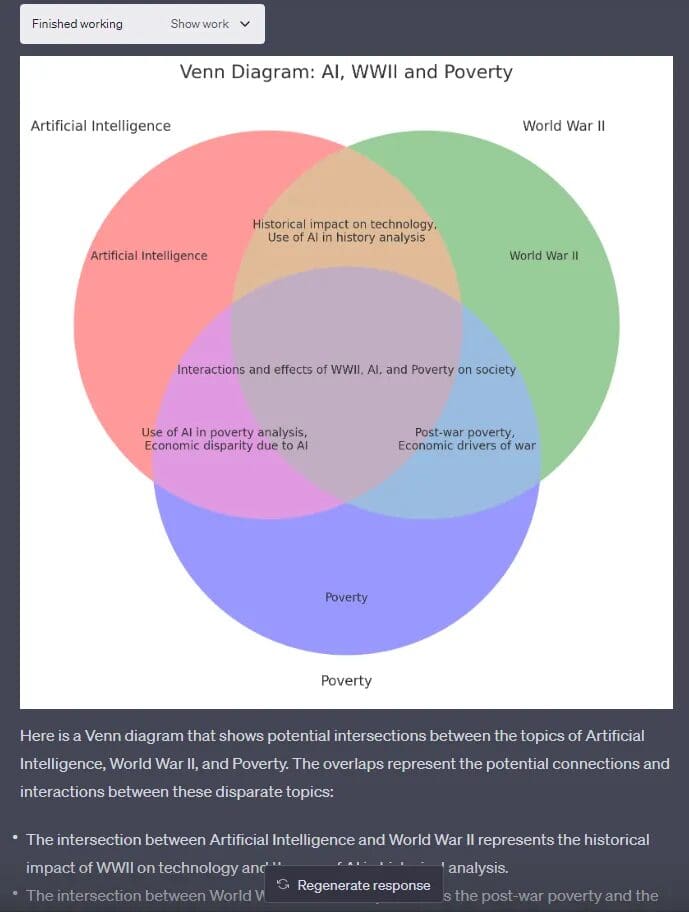

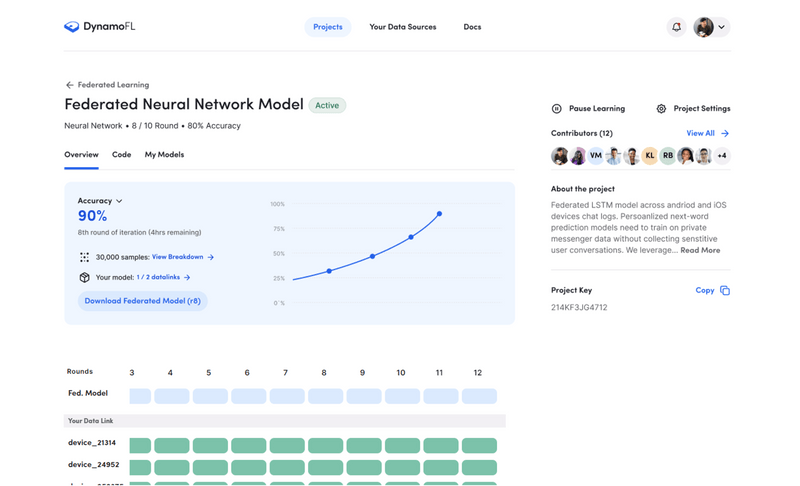

Azure ChatGPT was presented as a secure and private solution tailored for enterprises, assuring data security. However, this announcement created a conflict of interest for OpenAI, casting them in an unfavorable light.

There is definitely confusion between Microsoft and OpenAI and both the parties are not on the same page regarding use of ChatGPT and GPT-4 APIs for enterprise. If Microsoft hadn’t deleted the AzureChatGPT repository, OpenAI would have taken a hit as a brand regarding the trust of their customers.

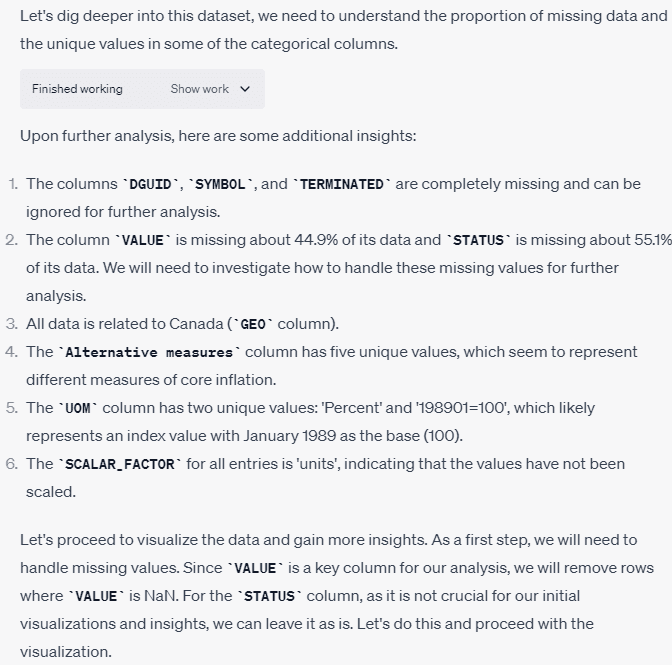

In our previous report, we questioned the data security of the GPT-4 API. Interestingly, OpenAI’s Logan Kilpatrick shared an updated blog on OpenAI’s data privacy measures on Sam Altman’s thread. It laid emphasis on enterprise-grade security which is similar to the purpose of Azure ChatGPT.

“Microsoft, competing with OpenAI,” said uncle Gary, commenting on the Azure ChatGPT launch – which now seems to have mysteriously disappeared from GitHub.

In response, Microsoft’s corporate vice president Peter Lee commented Microsoft has been offering the Azure OpenAI Service since November of 2021 in private preview, and in general availability since January of 2023. “The AOAI Service provides all the same privacy and enterprise compliance features of all our other Azure cloud services, which is important for many uses, such as medical” he said. This, literally, makes no sense whatsoever.

Clearly, Microsoft’s introduction of Azure ChatGPT looks like an attempt to sabotage OpenAI’s reputation. If we check OpenAI’s policies, they clearly have mentioned that they don’t use user’s data in chat history to train their model if the user has turned off the chat history.

Is OpenAI in a toxic relationship?

The partnership between Microsoft and Meta appears to have strained OpenAI’s relationship. Microsoft’s collaboration seems to be creating a competitor for OpenAI’s closed-source models. Microsoft’s move can be seen as a strategic play, allowing them to wield more control. By teaming up with Meta and leveraging Llama 2, Microsoft gained a sense of security and reduced their reliance on OpenAI, altering the dynamics in their favor.

In a recent interaction with AIM, there was a sense of hesitation from the OpenAI spokesperson when asked about the Microsoft-Meta partnership.

Elon Musk also in the past had claimed in an interview with Tucker Carlson in April that “Microsoft has a very strong say, if not directly controls, OpenAI at this point. In response Nadella Microsoft CEO Satya Nadella said it is “factually not correct” to claim that Microsoft controls its partner OpenAI, in an excerpt of a pre-taped interview with CNBC’s Andrew Ross Sorkin.

When Microsoft made multibillion dollar investment in OpenAI at the starting of the year, it made clear that Azure will be OpenAI’s exclusive cloud provider and it will power all OpenAI workloads across research, products and API services. So what was the need to create Azure ChatGPT all of a sudden and then delete it without any notice? Something is clearly not right!

[Updated: 5 p.m, August 16th, 2023] The article has been updated to include Microsoft’s comments.

The post Microsoft is Playing With OpenAI appeared first on Analytics India Magazine.