C-suite executives are more familiar with AI technologies than their IT and security staff, according to a report from the Cloud Security Alliance commissioned by Google Cloud. The report, published on April 3, addressed whether IT and security professionals fear AI will replace their jobs, the benefits and challenges of the rise of generative AI, and more.

Of the IT and security professionals surveyed, 63% believe AI will improve security within their organization. Another 24% are neutral on AI’s impact on security measures, while 12% do not believe AI will improve security within their organization. Only 12% of those surveyed predict AI will replace their jobs.

The survey behind the report was conducted internationally in November 2023, with responses from 2,486 IT and security professionals and C-suite leaders from organizations across the Americas, APAC and EMEA.

Cybersecurity professionals not in leadership are less clear than the C-suite on possible use cases for AI in cybersecurity, with just 14% of staff (compared to 51% of C-levels) saying they are “very clear.”

“The disconnect between the C-suite and staff in understanding and implementing AI highlights the need for a strategic, unified approach to successfully integrate this technology,” said Caleb Sima, chair of Cloud Security Alliance’s AI Safety Initiative, in a press release.

Some questions in the report specified that the answers should relate to generative AI, while other questions used the term “AI” broadly.

The AI knowledge gap in security

C-level professionals face pressure from the top down that may have led them to be more aware of use cases for AI than security professionals.

Many (82%) C-suite professionals say their executive leadership and boards of directors are pushing for AI adoption. However, the report states that this approach might cause implementation problems down the line.

“This may highlight a lack of appreciation for the difficulty and knowledge needed to adopt and implement such a unique and disruptive technology (e.g., prompt engineering),” wrote lead author Hillary Baron, senior technical director of research and analytics at the Cloud Security Alliance, and a team of contributors.

There are a few reasons why this knowledge gap might exist:

- Cybersecurity professionals may not be as well informed about how AI can affect overall strategy.

- Leaders may underestimate how difficult it could be to implement AI strategies within existing cybersecurity practices.

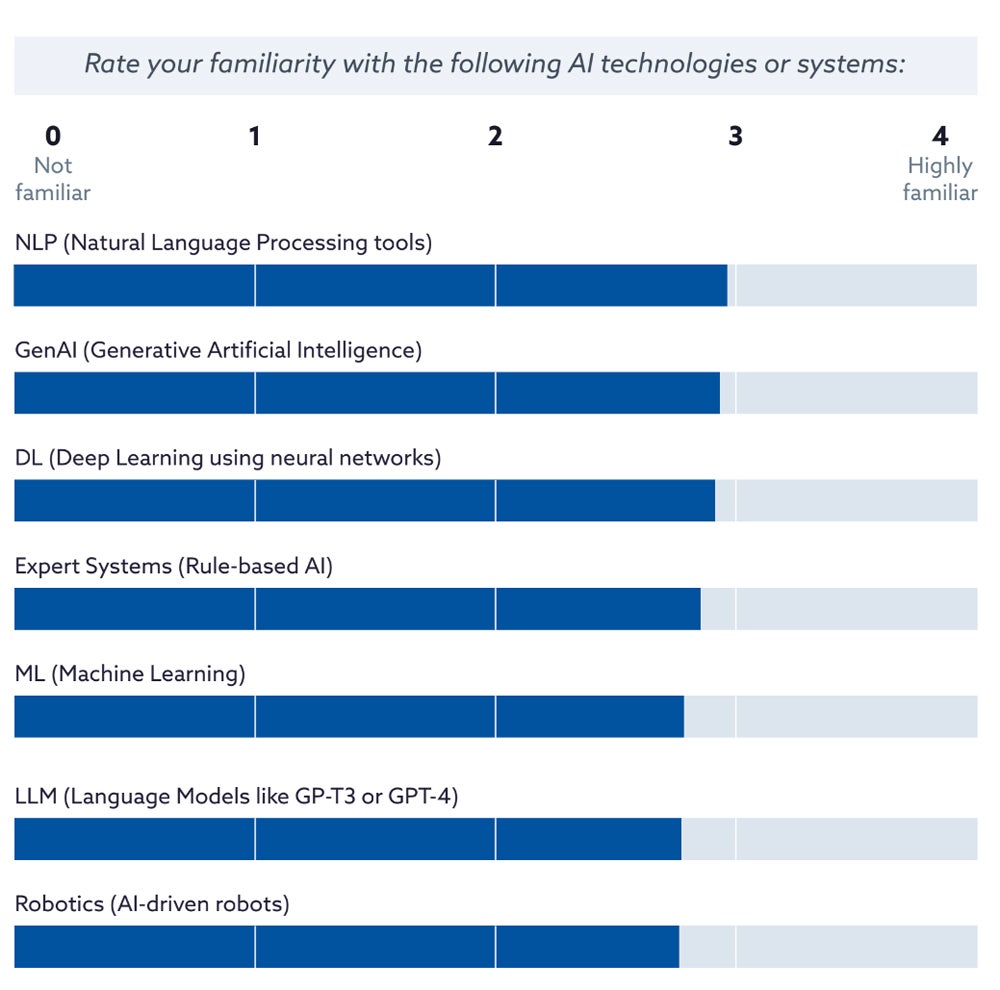

The report authors note that some data (Figure A) indicates respondents are about as familiar with generative AI and large language models as they are with older terms like natural language processing and deep learning.

Figure A

The authors add that the predominance of familiarity with older terms such as natural language processing and deep learning might indicate a conflation between generative AI and popular tools like ChatGPT.

“It’s the difference between being familiar with consumer-grade GenAI tools vs professional/enterprise level which is more important in terms of adoption and implementation,” said Baron in an email to TechRepublic. “That is something we’re seeing generally across the board with security professionals at all levels.”

Will AI replace cybersecurity jobs?

A small group (12%) of security professionals think AI will completely replace their jobs over the next five years. Others expect less drastic changes:

- 30% think AI will help enhance parts of their skillset.

- 28% predict AI will support them overall in their current role.

- 24% think AI will replace a large part of their role.

- 5% expect AI will not impact their role at all.

Organizations’ goals for AI reflect this, with 36% hoping AI will enhance their security teams’ skills and knowledge.

The report points out an interesting discrepancy: although enhancing skills and knowledge is a highly desired outcome, talent comes at the bottom of the list of challenges. This might mean that immediate tasks such as identifying threats take priority in day-to-day operations, while talent is a longer-term concern.

Benefits and challenges of AI in cybersecurity

The group was divided on whether AI would be more beneficial for defenders or attackers:

- 34% see AI as more beneficial for security teams.

- 31% view it as equally advantageous for both defenders and attackers.

- 25% see it as more beneficial for attackers.

Professionals who are concerned about the use of AI in security cite the following reasons:

- Poor data quality leading to unintended bias and other issues (38%).

- Lack of transparency (36%).

- Skills/expertise gaps when it comes to managing complex AI systems (33%).

- Data poisoning (28%).

Respondents were invited to select their top three concerns. The other options were hallucinations, privacy, data leakage or loss, accuracy and misuse; each received under 25% of the votes.

SEE: The UK National Cyber Security Centre found generative AI may enhance attackers’ arsenals. (TechRepublic)

Over half (51%) of respondents said “yes” to the question of whether they are concerned about the potential risks of over-reliance on AI for cybersecurity; another 28% were neutral.

Planned uses for generative AI in cybersecurity

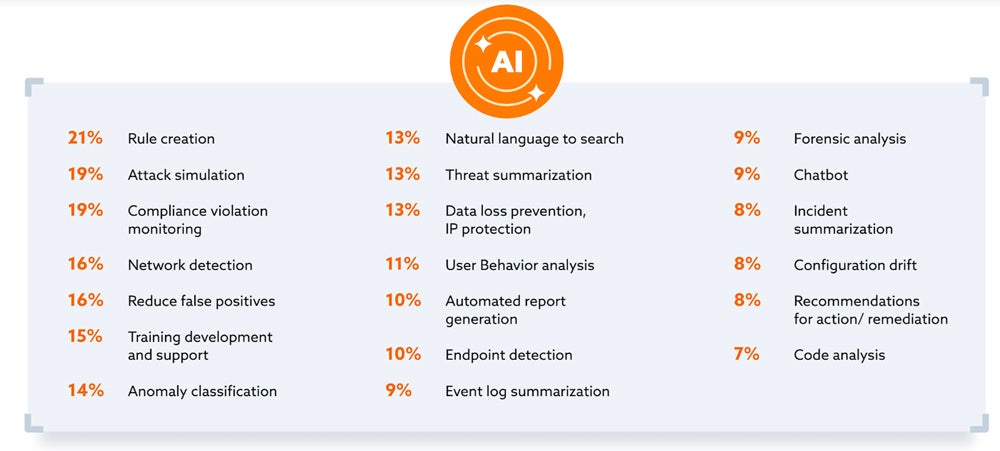

Among organizations planning to use generative AI for cybersecurity, the intended uses vary widely (Figure B). Common uses include:

- Rule creation.

- Attack simulation.

- Compliance violation monitoring.

- Network detection.

- Reducing false positives.

Figure B

How organizations are structuring their teams in the age of AI

Of the people surveyed, 74% say their organizations plan to create new teams to oversee the secure use of AI within the next five years. How those teams are structured can vary.

Today, some organizations working on AI deployment put it in the hands of their security team (24%). Others give primary responsibility for AI deployment to the IT department (21%), the data science/analytics team (16%), a dedicated AI/ML team (13%) or senior management/leadership (9%). In rarer cases, responsibility falls to DevOps (8%), cross-functional teams (6%) or a team that does not fit any of these categories (listed as “other” at 1%).

“It’s evident that AI in cybersecurity is not just transforming existing roles but also paving the way for new specialized positions,” wrote lead author Hillary Baron and the team of contributors.

What kind of positions? Generative AI governance is a growing sub-field, Baron told TechRepublic, as is AI-focused training and upskilling.

“In general, we’re also starting to see job postings that include more AI-specific roles like prompt engineers, AI security architects, and security engineers,” said Baron.