Humane’s Ai Pin considers life beyond the smartphone

Co-founders Bethany Bongiorno and Imran Chaudhri discuss the history and future of the $699 generative AI wearable

Brian Heater @bheater

Nothing lasts forever. Nowhere is the truism more apt than in consumer tech. This is a land inhabited by the eternally restless — always on the make for the next big thing. The smartphone has, by all accounts, had a good run. Seventeen years after the iPhone made its public debut, the devices continue to reign. Over the last several years, however, the cracks have begun to show.

The market plateaued, as sales slowed and ultimately contracted. Last year was punctuated by stories citing the worst demand in a decade, leaving an entire industry asking the same simple question: what’s next? If there were an easy answer, a lot more people would currently be a whole lot richer.

Smartwatches have had a moment, though these devices are largely regarded as accessories, augmenting the smartphone experience. As for AR/VR, the best that can currently be said is that, after a glacial start, the jury is still very much out on products like the Meta Quest and Apple Vision Pro.

When it began to tease its existence through short, mysterious videos in the summer of 2022, Humane promised a glimpse of the future. The company promised an approach every bit as human-centered as its name implied. It was, at the very least, well-funded, to the tune of $100 million+ (now $230 million), and featured an AI element.

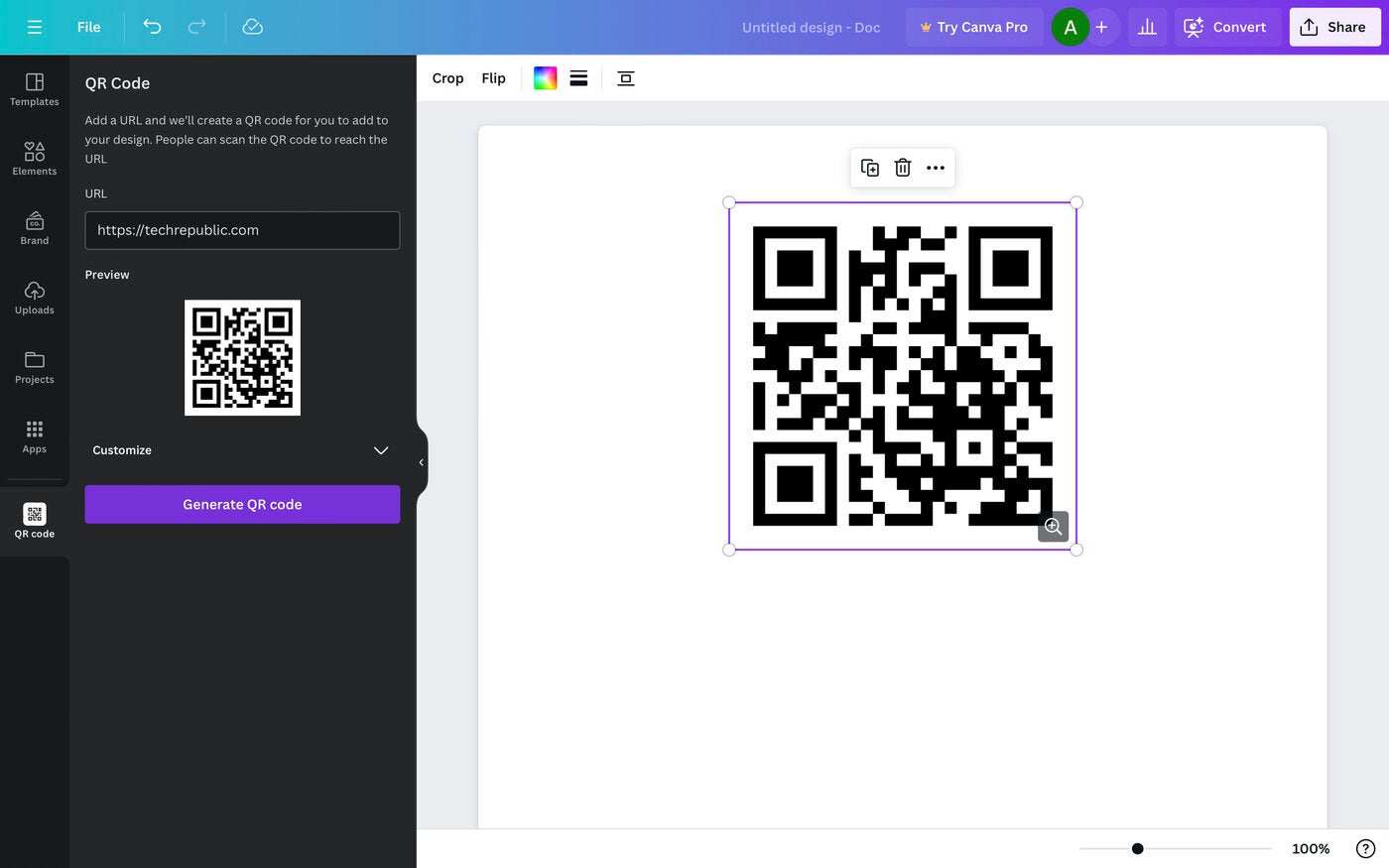

The company’s first product, the Humane Ai Pin, arrives this week. It suggests a world where being plugged in doesn’t require having one’s eyes glued to a screen in every waking moment. It’s largely — but not wholly — hands-free. A tap to the front touch panel wakes up the system. Then it listens — and learns.

Beyond the smartphone

Image Credits: Darrell Etherington/TechCrunch

Humane couldn’t ask for better timing. While the startup has been operating largely in stealth for the past seven years, its market debut comes as the trough of smartphone excitement intersects with the crest of generative AI hype. The company’s bona fides contributed greatly to pre-launch excitement. Founders Bethany Bongiorno and Imran Chaudhri were previously well-placed at Apple. OpenAI’s Sam Altman, meanwhile, was an early and enthusiastic backer.

Excitement around smart assistants like Siri, Alexa and Google Assistant began to ebb in the last few years, but generative AI platforms like OpenAI’s ChatGPT and Google’s Gemini have flooded that vacuum. The world is enraptured with plugging a few prompts into a text field and watching as the black box spits out a shiny new image, song or video. It’s novel enough to feel like magic, and consumers are eager to see what role it will play in our daily lives.

That’s the Ai Pin’s promise. It’s a portal to ChatGPT and its ilk from the comfort of our lapels, and it does this with a meticulous attention to hardware design befitting its founders’ origins.

Press coverage around the startup has centered on the story of two Apple executives having grown weary of the company’s direction — or lack thereof. Sure, post-Steve Jobs Apple has had successes in the form of the Apple Watch and AirPods, but while Tim Cook is well equipped to create wealth, he’s never been painted as a generational creative genius like his predecessor.

If the world needs the next smartphone, perhaps it also needs the next Apple to deliver it. It’s a concept Humane’s founders are happy to play into. The story of the company’s founding, after all, originates inside the $2.6 trillion behemoth.

Start spreading the news

Image Credits: Alexander Spatari / Getty Images

In late March, TechCrunch paid a visit to Humane’s New York office. The feeling was tangibly different from our trip to the company’s San Francisco headquarters in the waning months of 2023. The earlier event buzzed with the manic energy of an Apple Store. It was controlled and curated, beginning with a small presentation from Bongiorno and Chaudhri, and culminating in various stations staffed by Humane employees designed to give a crash course on the product’s feature set and origins.

Things in Manhattan were markedly subdued by comparison. The celebratory buzz that accompanies product launches had dissipated into something more formal, with employees focused on dotting I’s and crossing T’s in the final push before launch. The intervening months provided plenty of confirmation that the Ai Pin wasn’t the only game in town.

January saw the Rabbit R1’s CES launch. The startup opted for a handheld take on generative AI devices. The following month, Samsung welcomed customers to “the era of Mobile AI.” The “era of generative AI” would have been more appropriate, as the hardware giant leveraged a Google Gemini partnership aimed at relegating its bygone smart assistant Bixby to a distant memory. Intel similarly laid claim to the “AI PC,” while in March Apple confidently labeled the MacBook Air the “world’s best consumer laptop for AI.”

At the same time, Humane’s standing in the news stumbled amid reports of a small layoff round and a small delay in preorder fulfillment. Both can be written off as products of the immense difficulties around launching a first-generation hardware product, especially under the kind of intense scrutiny few startups see.

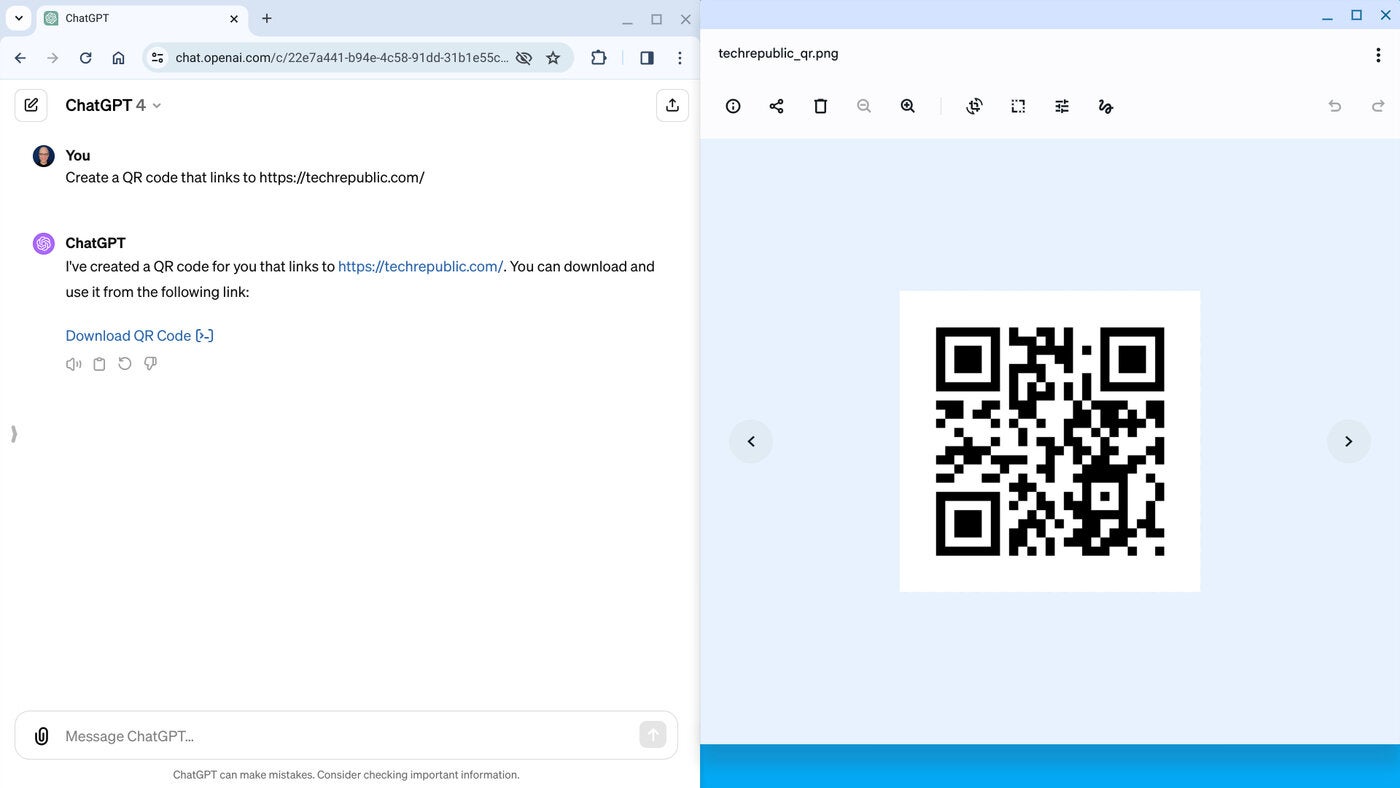

For the second meeting with Bongiorno and Chaudhri, we gathered around a conference table. The first goal was an orientation with the device, ahead of review. I’ve increasingly turned down these sorts of meeting requests post-pandemic, but the Ai Pin represents a novel enough paradigm to justify a sit-down. Humane also sent me home with a 30-minute intro video designed to familiarize users, which is not the sort of thing most folks require when, say, upgrading a phone.

More interesting to me, however, was the prospect of sitting down with the founders for the sort of wide-ranging interview we weren’t able to do during last year’s San Francisco event. Now that most of the mystery is gone, Chaudhri and Bongiorno were more open about discussing the product — and company — in-depth.

Origin story

Humane co-founders Bethany Bongiorno and Imran Chaudhri.

One Infinite Loop is the only place one can reasonably open the Humane origin story. The startup’s founders met on Bongiorno’s first day at Apple in 2008, not long after the launch of the iPhone App Store. Chaudhri had been at the company for 13 years at that point, having joined at the depths of its mid-90s struggles. Jobs returned two years after Chaudhri’s arrival, following Apple’s acquisition of NeXT.

Chaudhri’s 22 years with the company saw him working as director of Design on both the hardware and software sides of projects like the Mac and iPhone. Bongiorno worked as project manager for iOS, macOS and what would eventually become iPadOS. The pair married in 2016 and left Apple the same year.

“We began our new life,” says Bongiorno, “which involves thinking a lot about where the industry was going and what we were passionate about.” The pair started consulting work. However, Bongiorno describes a seemingly mundane encounter that would change their trajectory soon after.

Image Credits: Humane

“We had gone to this dinner, and there was a family sitting next to us,” she says. “There were three kids and a mom and dad, and they were on their phones the entire time. It really started a conversation about the incredible tool we built, but also some of the side effects.”

Bongiorno adds that she arrived home one day in 2017 to see Chaudhri pulling apart electronics. He had also typed out a one-page descriptive vision for the company that would formally be founded as Humane later the same year.

According to Bongiorno, Humane’s first hardware device never strayed too far from Chaudhri’s early mockups. “The vision is the same as what we were pitching in the early days,” she says. That’s down to Ai Pin’s most head-turning feature, a built-in projector that allows one to use the surface of their hand as a kind of makeshift display. It’s a tacit acknowledgement that, for all of the talk about the future of computing, screens are still the best method for accomplishing certain tasks.

Much of the next two years was spent exploring potential technologies and building early prototypes. In 2018, the company began discussing the concept with advisors and friends, before beginning work in earnest the following year.

Staring at the sun

It’s time for change, not more of the same. pic.twitter.com/I6K5FVYzx2

— Humane (@Humane) July 19, 2022

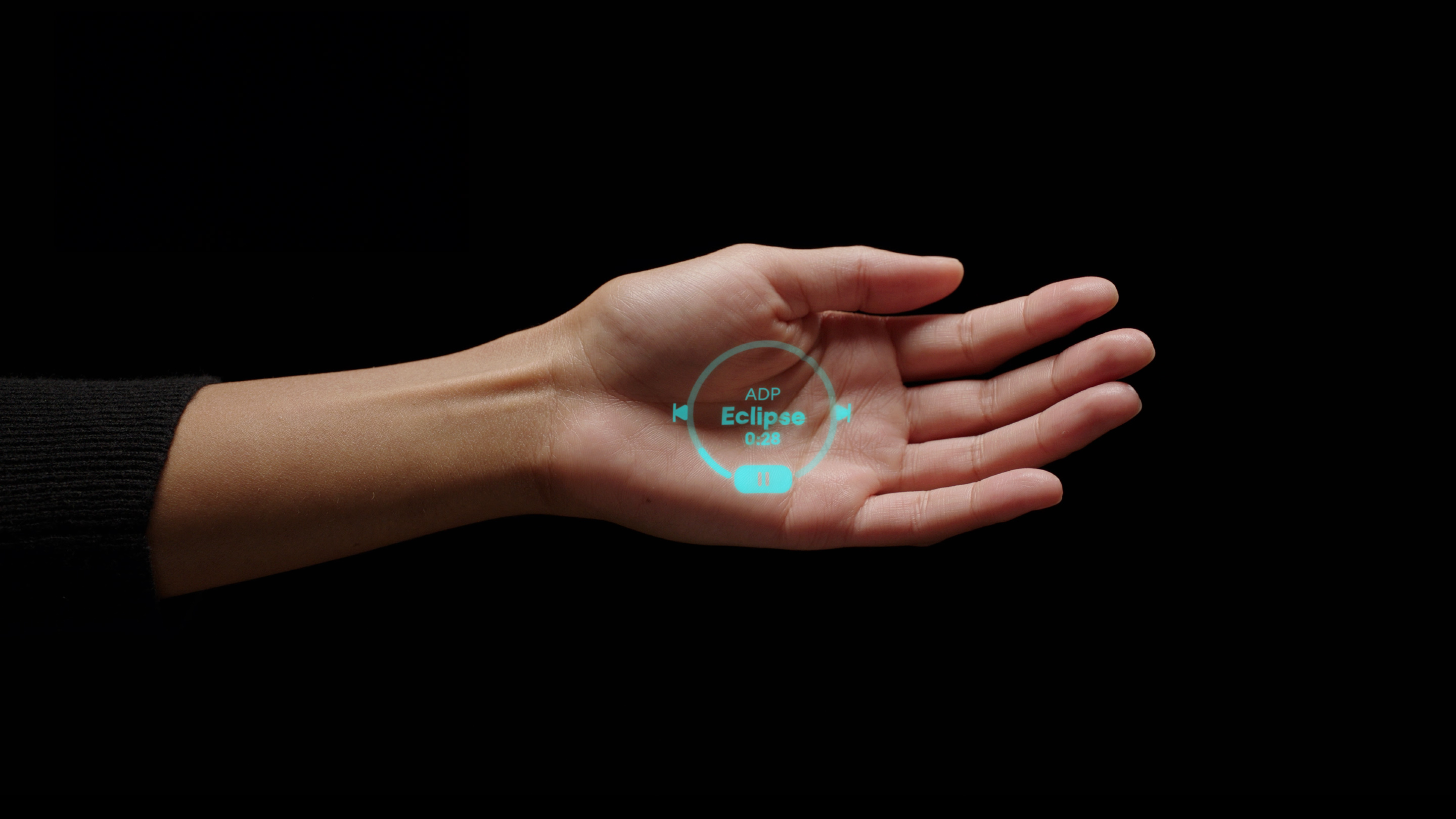

In July 2022, Humane tweeted, “It’s time for change, not more of the same.” The message, which reads as much like a tagline as a mission statement, was accompanied by a minute-long video. It opens in dramatic fashion on a rendering of an eclipse. A choir sings in a bombastic — almost operatic — fashion, as the camera pans down to a crowd. As the moon obscures the sunlight, their faces are illuminated by their phone screens. The message is not subtle.

The crowd opens to reveal a young woman in a tank top. Her head lifts up. She is now staring directly into the eclipse (not advised). There are lyrics now, “If I had everything, I could change anything,” as she pushes forward to the source of the light. She holds her hand to the sky. A green light illuminates her palm in the shape of the eclipse. This last bit is, we’ll soon discover, a reference to the Ai Pin’s projector. The marketing team behind the video is keenly aware that, while it’s something of a secondary feature, it’s the most likely to grab public attention.

As a symbol, the eclipse has become deeply ingrained in the company’s identity. The green eclipse on the woman’s hand is also Humane’s logo. It’s built into the Ai Pin’s design language, as well. A metal version serves as the connection point between the pin and its battery packs.

Image Credits: Brian Heater

The company is so invested in the motif that it held an event on October 14, 2023, to coincide with a solar eclipse. The device comes in three colors: Eclipse, Equinox and Lunar, and it’s almost certainly no coincidence that this current big news push is happening mere days after another North American solar eclipse.

However, it was on the runway of a Paris fashion show in September that the Ai Pin truly broke cover. The world got its first good look at the product as it was magnetically secured to the lapels of models’ suit jackets. It was a statement, to be sure. Though its founders had left Apple a half-dozen years prior, they were still very much invested in industrial design, creating a product designed to be a fashion accessory (your mileage will vary).

The design had evolved somewhat since conception. For one thing, the top of the device, which houses the sensors and projector, is now angled downward, so the Pin’s vantage point is roughly the same as its wearer’s. An earlier version with a flatter surface would unintentionally angle the pin upward when worn on certain chest types. Nailing down a more universal design required a lot of trial and error with a lot of different people in different shapes and sizes.

“There’s an aspect of this particular hardware design that has to be compassionate to who’s using it,” says Chaudhri. “It’s very different when you have a handheld aspect. It feels more like an instrument or a tool […] But when you start to have a more embodied experience, the design of the device has to be really understanding of who’s wearing it. That’s where the compassion comes from.”

Year of the Rabbit?

Image Credits: rabbit

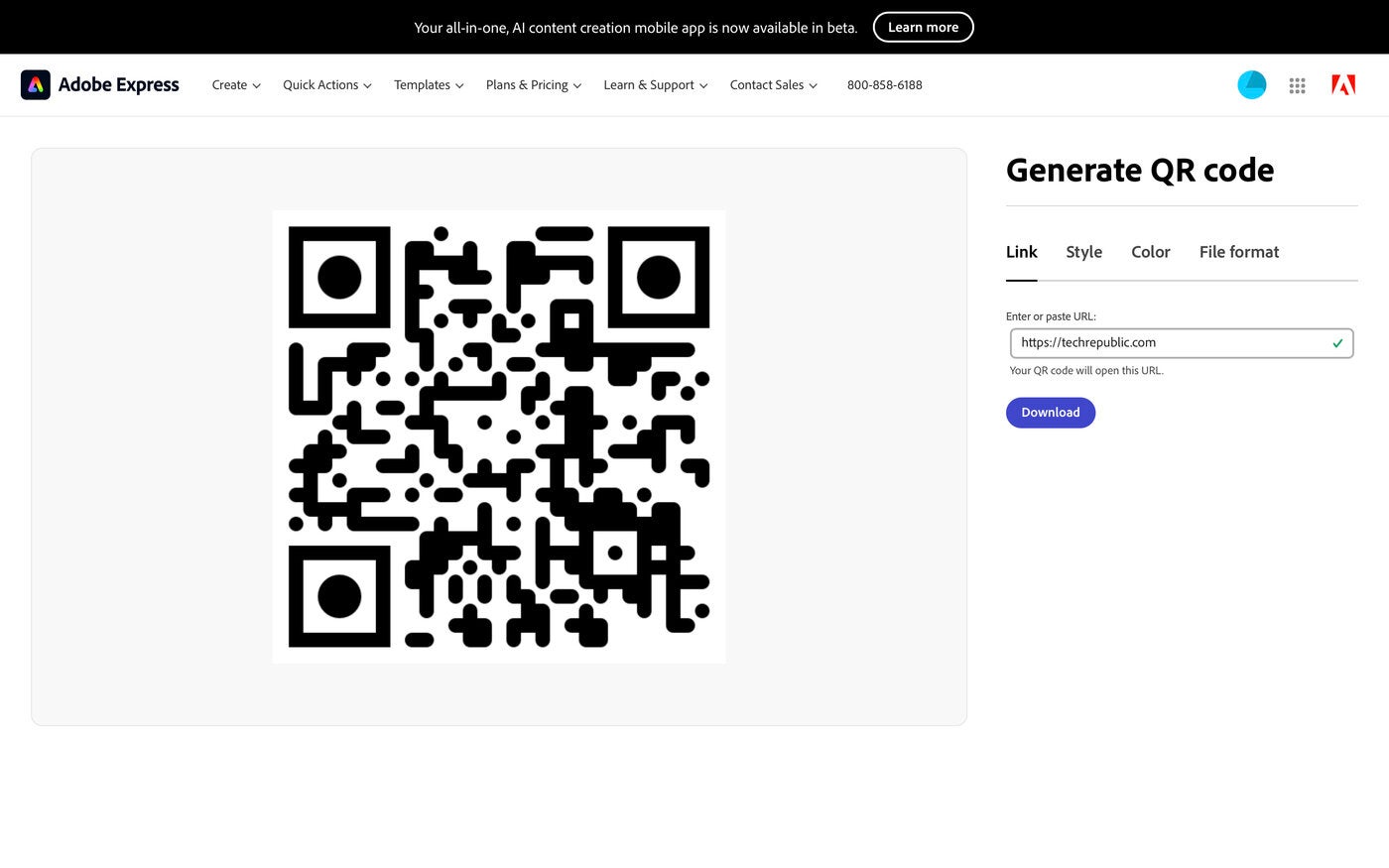

Then came competition. When it was unveiled at CES on January 9, the Rabbit R1 stole the show.

“The phone is an entertainment device, but if you’re trying to get something done it’s not the highest efficiency machine,” CEO and founder Jesse Lyu noted at the time. “To arrange dinner with a colleague we needed four-five different apps to work together. Large language models are a universal solution for natural language, we want a universal solution for these services — they should just be able to understand you.”

While the R1’s product design is novel in its own right, it’s arguably a more traditional piece of consumer electronics than Ai Pin. It’s handheld and has buttons and a screen. At its heart, however, the functionality is similar. Both are designed to supplement smartphone usage and are built around a core of LLM-based AI.

The device’s price point also contributed to its initial buzz. At $200, it’s a fraction of the Ai Pin’s $699 starting price. The more familiar form factor also likely comes with a smaller learning curve than Humane’s product.

Asked about the device, Bongiorno makes the case that another competitor only validates the space. “I think it’s exciting that we kind of sparked this new interest in hardware,” she says. “I think it’s awesome. Fellow builders. More of that, please.”

She adds, however, that the excitement wasn’t necessarily there at Humane from the outset. “We talked about it internally at the company. Of course people were nervous. They were like, ‘what does this mean?’ Imran and I got in front of the company and said, ‘guys, if there weren’t people who followed us, that means we’re not doing the right thing. Then something’s wrong.’”

Bongiorno further suggests that Rabbit is focused on a different use case, as its product requires focus similar to that of a smartphone, though neither Bongiorno nor Chaudhri has yet used the R1.

A day after Rabbit unveiled the product, Humane confirmed that it had laid off 10 employees, amounting to 4% of its workforce. It’s a small fraction of a company with a small headcount, but the timing wasn’t great, a few months ahead of the product’s official launch. The news also found its long-time CTO, Patrick Gates, exiting the C-suite role for an advisory job.

“The honest truth is we’re a company that is constantly going through evolution,” Bongiorno says of the layoffs. “If you think about where we were five years ago, we were in R&D. Now we are a company that’s about to ship to customers, that’s about to have to operate in a different way. Like every growing and evolving company, changes are going to happen. It’s actually really healthy and important to go through that process.”

The following month, the company announced that pins would now be shipping in mid-April. It was a slight delay from the original March ship date, though Chaudhri offers something of a Bill Clinton-style “it depends on what your definition of ‘is’ is” answer. The company, he suggests, defines “shipping” as leaving the factory, rather than the more industry-standard definition of shipping to customers.

“We said we were shipping in March and we are shipping in March,” he says. “The devices leave the factory. The rest is on the U.S. government and how long they take when they hold things in place: tariffs and regulations and other stuff.”

Money moves

Image Credits: Brian Heater

No one invests $230 million in a startup out of the goodness of their heart. Sooner or later, backers will be looking for a return. Integral to Humane’s path to positive cash flow is a subscription service that’s required to use the thing. The $699 price tag comes with 90 days free; after that, you’re on the hook for $24 a month.

That fee brings talk, text and data from T-Mobile, cloud storage and, most critically, access to the Ai Bus, which is foundational to the device’s operation. Humane describes it thus: “An entirely new AI software framework, the Ai Bus, brings Ai Pin to life and removes the need to download, manage, or launch apps. Instead, it quickly understands what you need, connecting you to the right AI experience or service instantly.”

Investors, of course, love to hear about subscriptions. Hell, even Apple relies on service revenue for growth as hardware sales have slowed.

Bongiorno alludes to internal projections for revenue, but won’t go into specifics for the timeline. She adds that the company has also discussed an eventual path to IPO even at this early stage in the process.

“If we weren’t, that would not be responsible for any company,” she says. “These are things that we care deeply about. Our vision for Humane from the beginning was that we wanted to build a company where we could build a lot of things. This is our first product, and we have a large roadmap that Imran is really passionate about of where we want to go.”

Chaudhri adds that the company “graduated beyond sketches” for those early products. “We’ve got some early photos of things that we’re thinking about, some concept pieces and some stuff that’s a lot more refined than those sketches when it was a one-man team. We are pretty passionate about the AI space and what it actually means to productize AI.”