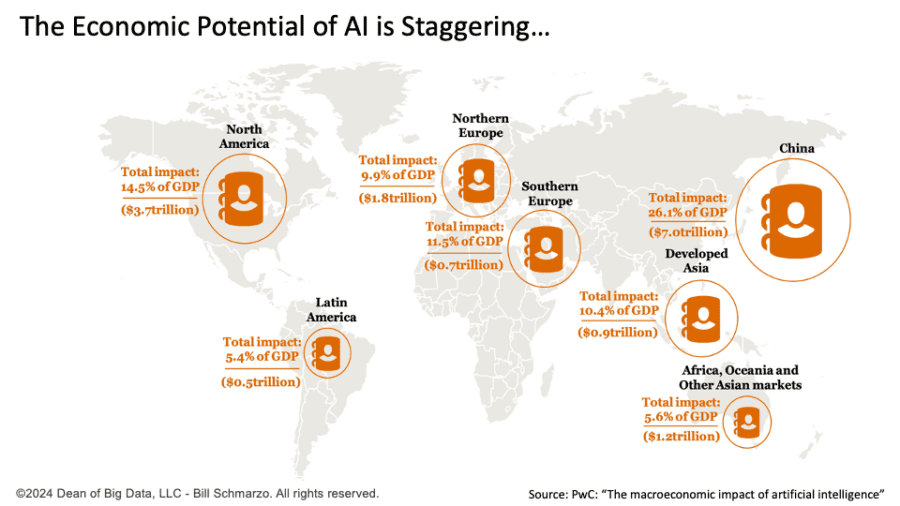

We are truly living in unprecedented times. Artificial Intelligence (AI) is anticipated to transform the global economy by intelligently automating tasks, re-engineering operational processes, and paving the way for new avenues of customer, product, service, and market value creation. According to a report by PwC, AI has the potential to contribute up to $15.7 trillion to the global economy by 2030. This significant figure underscores the transformative influence of AI across all countries, industries, and professions (Figure 1).

Figure 1: The Economic Potential of AI is Staggering…

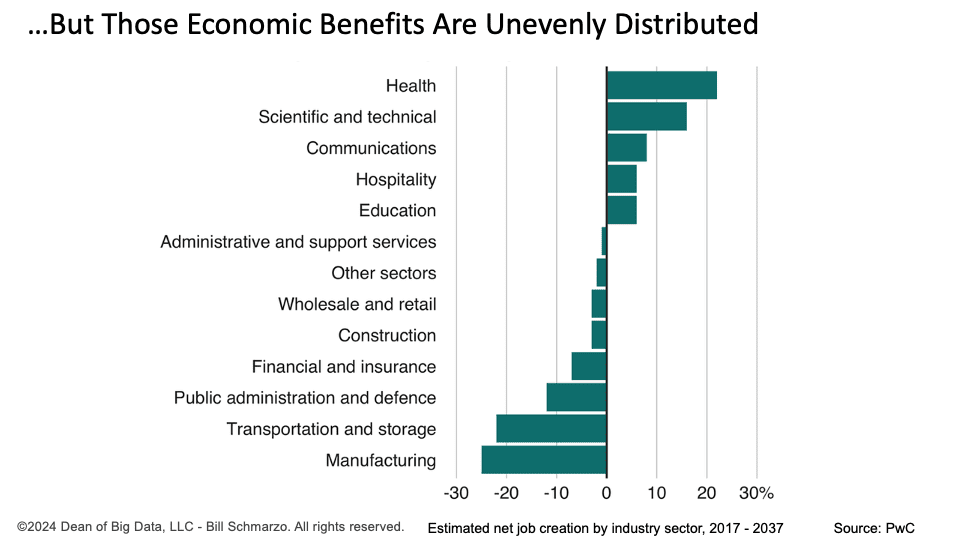

Unfortunately, AI is also likely to displace many workers during that transformation. The World Economic Forum estimates that by 2025, 85 million jobs may be displaced by AI.[1] Many jobs may be eliminated, while others may be downgraded to lower-skilled positions (Figure 2).

Figure 2: …But Those Economic Benefits are Unevenly Distributed

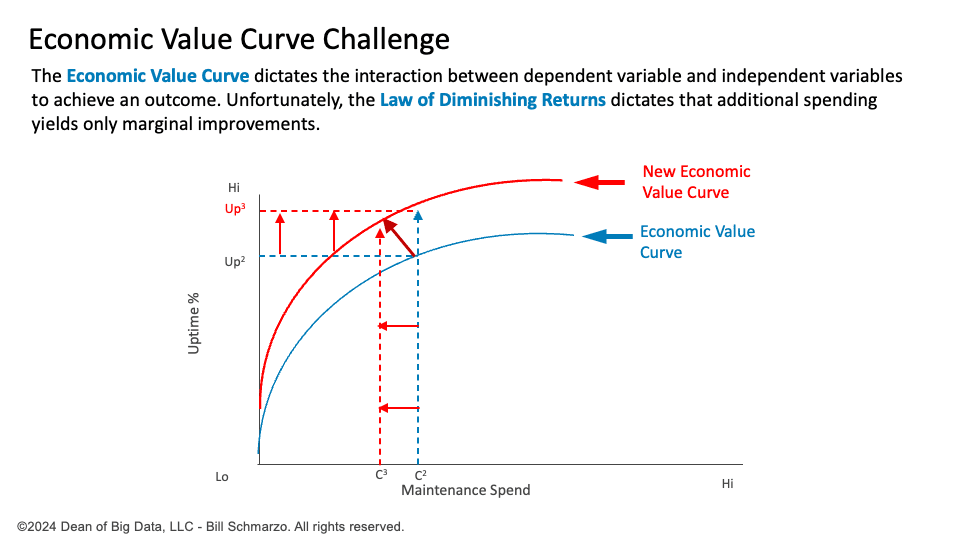

This situation underscores the opportunities and challenges of AI-driven economic transformation. Thankfully, the significant economic advantages of enhanced productivity, improved operational effectiveness, and the facilitation of new customer, product, service, and market innovations will result in financial surpluses as companies become adept at using AI to reshape their economic value curve to “Do more with less” (Figure 3).

Figure 3: Transforming the Economic Value Curve to “Do More With Less”

The challenge lies in capturing and utilizing that financial surplus to benefit all.

Welcome to the “AI Dividend” Opportunity.

The AI Dividend Opportunity

The “AI Dividend” encapsulates the substantial and recurring economic benefits generated by advancements in artificial intelligence (AI), emphasizing the importance of harnessing these gains to improve societal well-being and economic equity.

The AI Dividend marks a generational inflection point for society, driven by increased productivity, efficiency, and innovation across all sectors and professions due to AI. Governments can dramatically enhance overall human, societal, and environmental well-being by utilizing this AI Dividend to ensure that the benefits are widely shared and contribute to social equity and human wellness. This investment can propel human progress by fostering curiosity, exploration, creativity, and innovation. However, these societal benefits can only be realized with government and social policies that ensure the economic gains from AI are distributed equitably rather than solely benefiting the privileged few.

The potential loss of jobs driven by AI has been the focus of many media discussions and government leaders’ pontifications. A Universal Basic Income (UBI) has been proposed as a solution to AI-induced job displacement, with studies indicating that UBI can reduce poverty and provide a safety net. However, UBI will likely have severe unintended consequences, such as disenfranchisement and frustration among recipients who may not feel they have “earned” their income. This lack of purpose or contribution to society could lead to frustration, depression, and social instability[2].

I have a more pragmatic proposal for what we should do with the AI Dividend…

The Schmarzo AI Dividend Recommendation

Governments should use the AI Dividend to significantly redesign our society so that it values and rewards long-term services that enhance everyone’s quality of life. Here are three immediate actions we could take with the upcoming AI Dividend:

- Raising Minimum Wages: Increasing the minimum wage could ensure workers benefit from economic growth while maintaining employment. Research indicates that higher minimum wages can reduce poverty and income inequality without adverse employment effects (Phys.org).

- Subsidizing Critical Jobs: Governments could subsidize essential societal roles that are undervalued by our current economic systems, such as teaching, nursing, healthcare, childcare, public safety, mental health, conservation, public transportation, elderly care, housing, community development, and the arts. These sectors are crucial for societal well-being and often suffer from low wages despite high social value. Public investment in these areas can drive economic growth, improve citizen satisfaction, and enhance service quality, leading to broader social benefits (Center for American Progress).

- Promoting Human Creativity: We can unlock human potential and drive cultural and economic progress by funding initiatives that foster innovation and creativity, such as grants for artistic endeavors, research projects, and entrepreneurial ventures. Investing in education and lifelong learning opportunities will empower individuals to explore new ideas and solutions, leading to a more dynamic and innovative society.

The economic engine behind these recommendations is a well-established modern wonder known as the economic multiplier effect.

Mastering the Economic Multiplier Effect

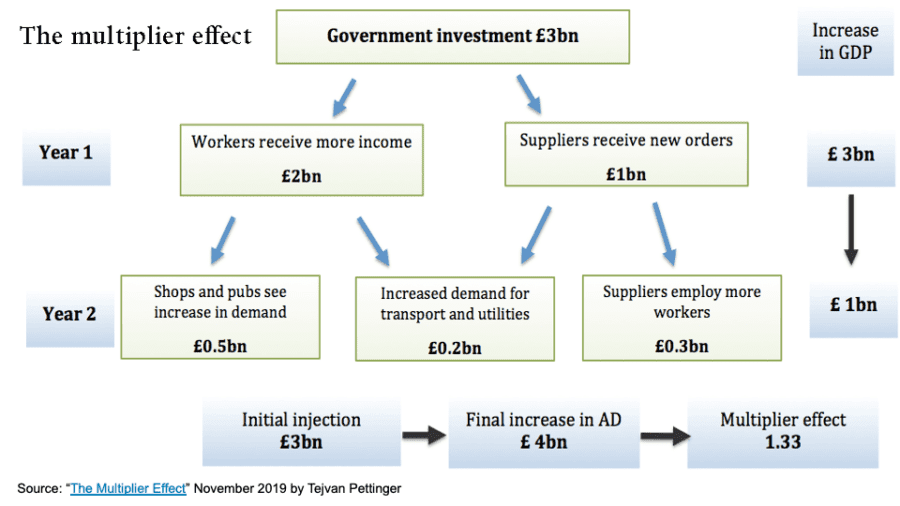

The Economic Multiplier Effect occurs when an initial injection of spending (such as government investment or consumer spending) leads to a larger overall increase in economic activity and national income due to successive rounds of re-spending by businesses and consumers (Figure 4).

Figure 4: Source: “The Multiplier Effect” November 2019 by Tejvan Pettinger

The economic multiplier effect is a powerful engine that drives the growth and advancement of the modern world. It is a crucial concept in economic theory and policy, illustrating how a change in one sector can ripple through the entire economy as money circulates.

However, the size of the multiplier depends heavily on where monetary stimulus is placed in the economy, and in particular on the income level of the recipients. Studies indicate that lower-income recipients create a larger multiplier effect than higher-income recipients.

For example, the Bolsa Família program in Brazil, one of the world’s most extensive cash transfer programs, increased real GDP by R$1.04 for every R$1 spent. Similarly, the GiveDirectly initiative in Kenya, which provided a one-time transfer of $1,000 to poor households, led to a multiplier effect of 2.5, meaning each dollar generated $2.50 in local economic activity. These findings highlight the significant positive impact of cash transfers on low-income households, as they spend more of their additional income, effectively stimulating local economies[3].
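To make the mechanism concrete, here is a minimal sketch of the textbook multiplier model, in which each recipient re-spends a fixed share of what they receive (the marginal propensity to consume, or MPC). Under that simplification, total activity is the sum of a geometric series, and the multiplier approaches 1 / (1 - MPC). The function names and the MPC values of 0.5 and 0.8 are illustrative assumptions, not figures from the studies cited above, and the model ignores taxes, imports, and price effects that real-world estimates such as the Brazil and Kenya results account for.

```python
def total_activity(injection: float, mpc: float, rounds: int = 200) -> float:
    """Sum the spending generated by an initial injection over successive rounds.

    mpc is the assumed share of each received dollar that gets re-spent.
    """
    if not 0 <= mpc < 1:
        raise ValueError("mpc must be in the range [0, 1)")
    total = 0.0
    spending = injection
    for _ in range(rounds):
        total += spending
        spending *= mpc  # only the re-spent share carries into the next round
    return total


def multiplier(mpc: float) -> float:
    """Closed-form limit of the re-spending series: 1 / (1 - mpc)."""
    if not 0 <= mpc < 1:
        raise ValueError("mpc must be in the range [0, 1)")
    return 1.0 / (1.0 - mpc)


if __name__ == "__main__":
    # Hypothetical MPC values chosen only to contrast the two groups.
    scenarios = [
        ("higher-income recipients (assumed MPC 0.5)", 0.5),
        ("lower-income recipients (assumed MPC 0.8)", 0.8),
    ]
    for label, mpc in scenarios:
        activity = total_activity(1000, mpc)
        print(f"{label}: multiplier {multiplier(mpc):.2f}, "
              f"$1,000 injected -> ${activity:,.0f} total activity")
```

In this simplified model, the only thing that changes between the two scenarios is how much of each dollar is re-spent, which is why directing the same injection toward recipients with a higher propensity to spend produces more total activity.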

Additionally, investments in social programs can lift families out of poverty, boost economic growth, and improve overall societal wellness. For instance, Head Start has demonstrated a benefit-cost ratio of more than 7-to-1, showing the economic impact of focused public investment (Center for American Progress).

Coupling social programs with the economic multiplier effect further supports the idea that utilizing the upcoming AI dividend can help reduce poverty, improve economic well-being, increase human development opportunities, drive economic growth, and more.

Summary: Exploiting the AI Dividend For Social Utopia

To fully realize AI’s potential and ensure that everyone benefits, not just a select few, it is important to implement comprehensive and inclusive government and social policies that prioritize investing the economic gains from AI into areas such as healthcare, education, welfare, safety, and other public services. By doing so, we can improve the quality of life for everyone and work towards creating a society with unprecedented prosperity for all.

The emergence of the AI Dividend represents a pivotal moment for society, offering the potential to create a world where everyone, not just the wealthy or privileged, can thrive. However, implementing a Universal Basic Income is not the solution, as it could result in unintended harmful outcomes such as disenfranchisement, frustration, and social unrest among recipients who may feel a lack of purpose or contribution to society.

Instead, we can start with alternative strategies, such as raising minimum wages, subsidizing critical jobs, and promoting human creativity. These measures provide more balanced and sustainable solutions to mitigate job displacement, enhance social equity, and power economic growth.

The time to seize this historic opportunity is now! We cannot allow corporate short-term profits to dictate how the AI Dividend benefits are distributed. We must prioritize long-term prosperity and fulfillment over short-term financial gains. By taking a long-term view and considering the broader impact of the AI Dividend, we can create a more sustainable and equitable future and power unprecedented levels of economic growth that benefit everyone.

[1] Source: BioMed Central

[2] Source: Center for American Progress

[3] Sources: Phys.org and BioMed Central