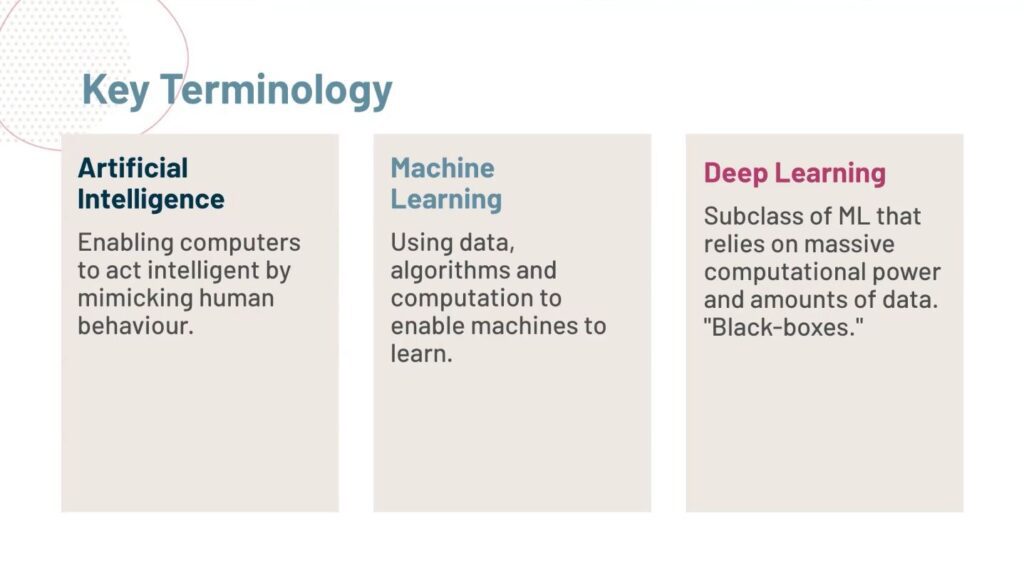

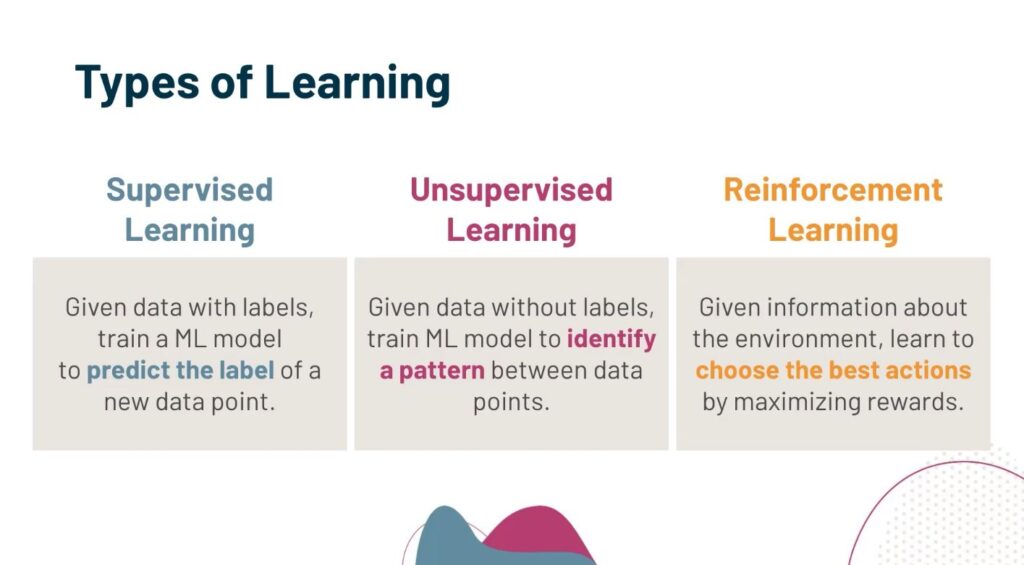

In recent years, Artificial Intelligence has seen numerous innovations. One of the most significant is the introduction of Generative AI (Gen AI). This technology focuses on creating new, original, and valuable content in response to a given prompt.

That is why it has quickly become popular in numerous fields, including education. Gen AI has already transformed the learning environment in educational institutes in several positive ways. In this blog post, we are going to explore the most significant of these in detail, so stick with us till the end.

4 Major ways Gen AI is transforming education

Below, we explain four ways in which Generative AI is changing the field of education for the better.

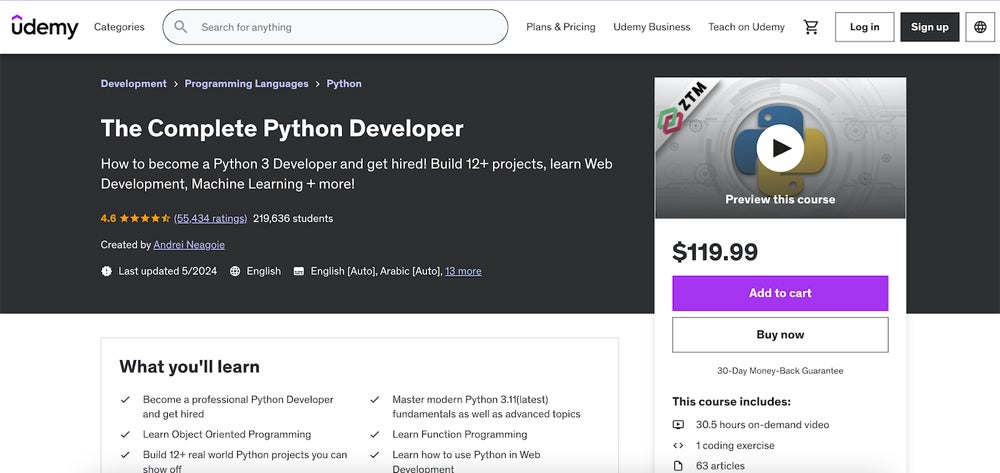

Assistance in better content creation for courses

Creating course content is fundamental for any educational institute, and it contributes greatly to students’ academic performance. For instance, well-crafted course material keeps students interested, which results in better learning.

Previously, creating high-quality course content required a significant amount of time and effort from teachers and staff. Fortunately, that is no longer the case since the introduction of Generative AI.

There are numerous tools available on the internet, such as a paragraph generator. It is built on Gen AI technology and allows teachers to create compelling content on any course topic to support students’ learning. Beyond that, the tool also allows course content to be created in a writing tone or style suited to the students, such as Simple or Formal.

Another useful Gen AI tool for course content is a summary generator. Tools like this allow educational staff to craft high-quality summaries of courses in no time. Students can then use the summaries to quickly get an idea of the entire course.

So, with these types of tools, creating course content becomes not only quicker but also better in quality. This ultimately helps improve students’ academic performance.

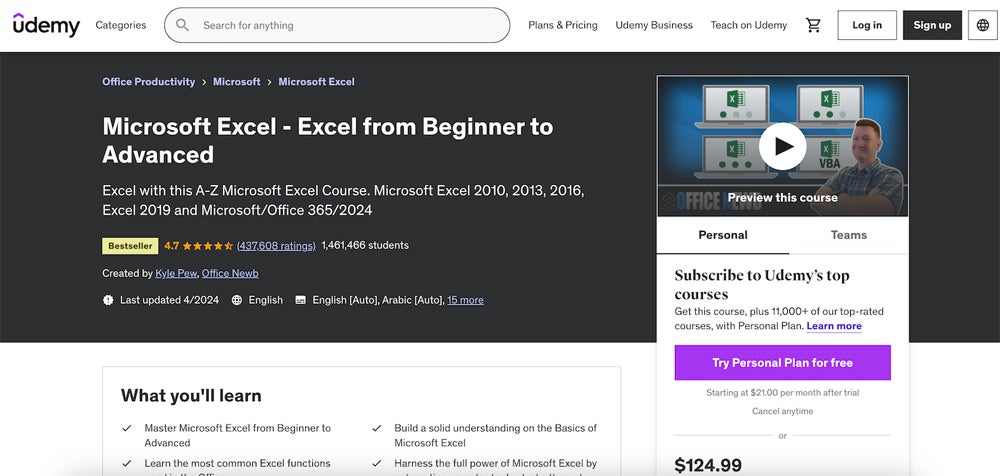

Routine task automation

Just like any other field, education has numerous routine, repetitive tasks. One such task is grading. Every class has many students, so carefully evaluating each student’s work and providing constructive feedback requires a great deal of energy, time, and focus, which may not always be possible for teachers.

In this sort of scenario, Gen AI tools like CoGrader can help. It automatically assigns grades to students’ work. Not only that, it also provides detailed feedback explaining the grading.

This way, professors save a lot of time and effort, and students get accurate feedback for better learning.

Apart from grading, creating timetables for teachers is a routine yet complex task for management. Thankfully, Gen AI tools such as EduTime can generate timetables automatically in no time.

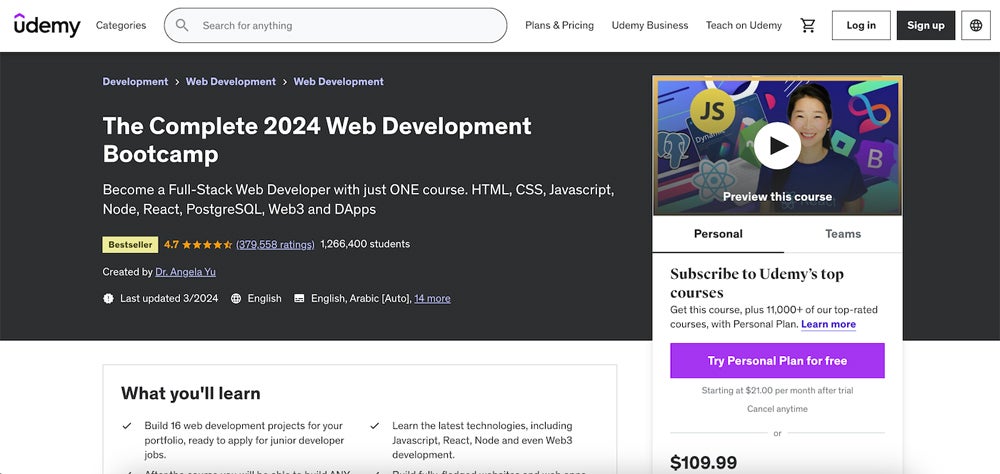

Promoting personalized learning

Did you know that each student naturally has different learning capabilities? This is why personalized learning is being adopted and promoted all around the world. Happily, Gen AI plays a key role here too. Let us explain how.

There are numerous AI-based virtual tutors and platforms available, such as ALEKS by McGraw Hill. It is a learning platform that constantly monitors each student’s learning behavior, capability, and knowledge state, and then provides guidance accordingly for better learning.
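To make the idea of tracking a knowledge state more concrete, here is a minimal Python sketch. It is not how ALEKS or any real platform works; the `TopicState` class, the `recommend_next` function, and the 0.8 mastery threshold are all hypothetical names and values chosen for illustration. It simply keeps a count of correct answers per topic and suggests the topic with the lowest mastery.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class TopicState:
    """Hypothetical record of one student's progress on one topic."""
    name: str
    correct: int = 0
    attempts: int = 0

    @property
    def mastery(self) -> float:
        # Fraction of answers that were correct; 0.0 if nothing attempted yet.
        return self.correct / self.attempts if self.attempts else 0.0


def record_answer(state: TopicState, is_correct: bool) -> None:
    """Update the topic state after the student answers one question."""
    state.attempts += 1
    if is_correct:
        state.correct += 1


def recommend_next(states: List[TopicState], threshold: float = 0.8) -> Optional[str]:
    """Return the weakest topic below the (assumed) mastery threshold, or None."""
    below = [s for s in states if s.mastery < threshold]
    if not below:
        return None
    return min(below, key=lambda s: s.mastery).name


topics = [
    TopicState("fractions", correct=3, attempts=4),
    TopicState("algebra", correct=1, attempts=4),
    TopicState("geometry", correct=5, attempts=5),
]
print(recommend_next(topics))
```

A real platform would use far richer signals than a simple correct/attempt ratio, but the loop is the same in spirit: observe the student, update the state, and adapt what comes next.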

It is also important to note that personalized learning is possible with written content, images, and videos, and research suggests that the human mind learns better from visuals.

For the creation of high-quality visuals, many notable Gen AI tools are available, such as DALL-E 3 by OpenAI. It allows both teachers and students to create fully personalized images to support their overall learning process.

So, by promoting personalized learning through these sorts of tools, both professors and educational institutes can make sure that their students are learning at their full potential.

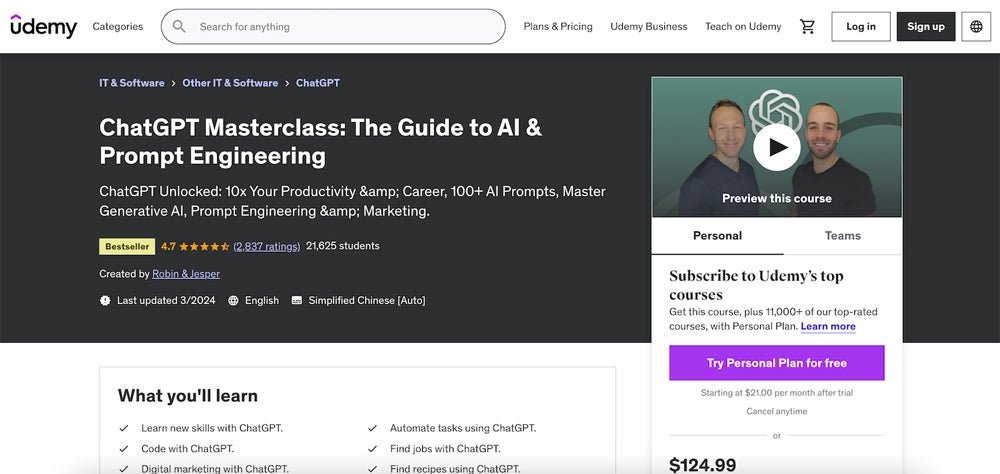

Customized data-based feedback

This is the final way in which Generative AI is transforming the field of education. For maximum academic performance, institutions must provide students with regular performance feedback reports, so that they can see where they are doing well and where they need to improve.

Consistently providing detailed feedback to each student is difficult for a teacher, and Gen AI can help in this regard too. Let us explain how.

There are several advanced tools available on the internet, such as School Analytics by PowerSchool. Tools like this provide teachers and staff with a dedicated dashboard that presents useful information about students’ performance, which can be used in feedback reports.
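As a rough illustration of data-based feedback, the Python sketch below turns a student's scores into a simple report of strengths and areas for improvement. It does not reflect PowerSchool's product or any real dashboard; the `build_feedback` function, the sample scores, and the 80 and 60 cut-offs are assumptions made up for this example.

```python
from statistics import mean
from typing import Dict, List


def build_feedback(
    scores: Dict[str, List[float]],
    strong: float = 80.0,  # assumed cut-off for "doing great"
    weak: float = 60.0,    # assumed cut-off for "needs improvement"
) -> Dict[str, List[str]]:
    """Group subjects by average score into three feedback buckets."""
    report: Dict[str, List[str]] = {
        "strengths": [],
        "on_track": [],
        "needs_improvement": [],
    }
    for subject, marks in scores.items():
        if not marks:
            continue  # skip subjects with no recorded scores
        average = mean(marks)
        if average >= strong:
            report["strengths"].append(subject)
        elif average < weak:
            report["needs_improvement"].append(subject)
        else:
            report["on_track"].append(subject)
    return report


# Hypothetical scores for one student, out of 100.
student_scores = {
    "Math": [90, 85],
    "History": [50, 55],
    "Science": [70, 72],
}
feedback = build_feedback(student_scores)
for bucket, subjects in feedback.items():
    print(f"{bucket}: {', '.join(subjects) or 'none'}")
```

A Gen AI tool could then take a structured summary like this and write it up as a personalized message for each student, which is where the time saving for teachers comes from.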

Final words

Generative AI is a recent development in the field of Artificial Intelligence. It has transformed numerous fields, especially education, in several ways: quicker course content creation, routine task automation, personalized learning, and customized data-based feedback. In this article, we have explained each of these ways in detail.