Indian edtech is warming up to large language models (LLMs) to provide hyper-personalised learning experiences to students. Last year, Mayank Kumar, the co-founder and MD at upGrad, told AIM that the edtech firm was exploring the idea of building its own proprietary LLM.

ChatGPT has presented a significant challenge to edtech firms, forcing stakeholders to either embrace its capabilities or risk falling behind.

While some edtech platforms underestimated the power of ChatGPT and ended up on the cusp of failure, others, like Khan Academy, assessed the potential of generative AI early and embraced it.

Let’s look at some exciting use cases of generative AI in education.

Personalised Learning & Course Design

Personalised lesson plans ensure students receive effective education tailored to their needs and interests. AI-powered algorithms can generate these plans by analysing student data, such as past performance, skills, and feedback.

Khan Academy’s AI tutor, Khanmigo, assists both teachers and students by not only providing answers but also guiding the learners to find the answers for themselves. With Khanmigo, teachers can differentiate instruction, create lesson plans and quiz questions, group students, and more.

Teaching Assistance

Generative AI can assist in creating new teaching materials, such as questions for quizzes and exercises, as well as explanations and summaries of concepts. This can be especially useful for teachers who need to create a large amount and variety of content for their classes.

Furthermore, generative AI can facilitate the generation of additional materials to supplement the main course content, such as reading lists, study guides, discussion questions, flashcards, and summaries.

For instance, with Quizizz, an interactive learning platform, one can create interactive, multimedia-rich quizzes to boost student engagement.

Assess Learning Patterns

AI analyses student performance data and identifies patterns of learning difficulties or gaps in understanding. Adaptive platforms use AI and machine learning (ML) algorithms to assess vast amounts of student performance data, which helps evaluate students’ strengths and weaknesses.

For instance, based on the specific requirements and skills of each student, BYJU’S offers tailored learning experiences through the use of an internal AI model, BADRI.

It implements personalised “forgetting curves” to pinpoint each student’s strengths and weaknesses, providing customised questions and learning videos for areas of improvement.
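The idea of a forgetting curve can be sketched in a few lines of Python. This is only an illustration, not BADRI's actual method, which the source does not describe: it assumes the classic exponential model, where estimated retention is exp(-t / s) for t days since the last review and a per-topic "stability" s. The function names, the 0.5 threshold, and the sample data are all hypothetical.

```python
import math

def retention(days_since_review: float, stability: float) -> float:
    """Estimated recall probability under an exponential forgetting curve.

    Assumption: retention = exp(-t / s); a larger stability means slower forgetting.
    """
    return math.exp(-days_since_review / stability)

def topics_to_review(history, threshold=0.5):
    """Return topics whose estimated retention is below the threshold, weakest first.

    `history` is a list of (topic, days_since_review, stability) tuples.
    """
    scored = [(topic, retention(days, stability)) for topic, days, stability in history]
    due = [(topic, r) for topic, r in scored if r < threshold]
    return [topic for topic, _ in sorted(due, key=lambda pair: pair[1])]

# Hypothetical student record: (topic, days since last review, stability in days)
history = [
    ("fractions", 2.0, 10.0),
    ("trigonometry", 7.0, 3.0),
    ("algebra", 5.0, 5.0),
]

print(topics_to_review(history))  # ['trigonometry', 'algebra']
```

A platform working along these lines could serve practice questions or videos for the topics returned first, and raise a topic's stability each time the student answers correctly.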

Tutoring Powered by AI

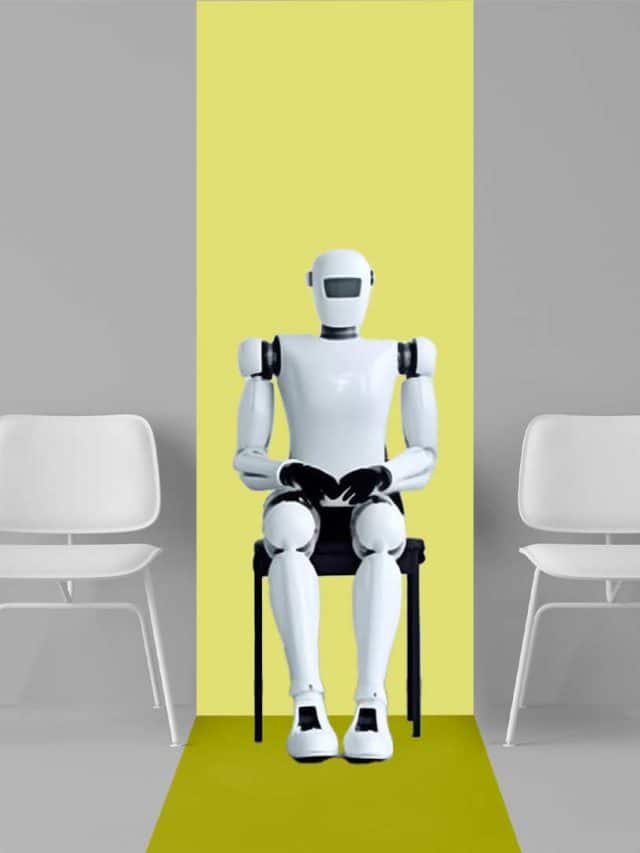

Generative AI can be utilised to create virtual tutoring environments wherein students can interact with a virtual tutor and receive real-time feedback and support. This can be particularly beneficial for students lacking access to in-person tutoring.

For instance, BYJU’S MathGPT model uses advanced machine learning algorithms to generate accurate solutions for complex mathematical challenges, including trigonometric proofs.

Collaborative Learning Platforms

Apart from teaching assistance and content creation, generative AI can support collaborative learning, making it easier for students to brainstorm ideas, engage in group discussions, and work together on projects with classmates worldwide.

This feat is achievable through AI-powered virtual platforms that facilitate the exchange of unique ideas, perspectives, and insights, fostering out-of-the-box thinking.

BYJU’S has partnered with Google to provide a collaborative and personalised digital learning platform named ‘Vidyartha’ for schools. This partnership facilitates access to Google Classroom for seamless classroom management, organisation, and learning tracking.

Restoring Old Learning Material

Generative AI models are trained on large datasets of text to learn patterns and structures of language, allowing them to intelligently reconstruct missing or corrupted portions of text documents such as historical manuscripts, books, or course materials.

Using techniques like GANs, generative AI can upscale and enhance the resolution and quality of old, low-resolution images and photographs used in educational materials.

Engaging Content Creation

Using foundational models enables the creation of diverse educational materials, like interactive stories, immersive simulations, and more. By leveraging captivating visuals generated using AI, learning becomes an engaging adventure for learners.

For instance, teachers who want to produce visuals and materials tailored to their course requirements can turn to NOLEJ, an AI-powered decentralised skill platform, which offers an AI-generated e-learning capsule in just three minutes. This capsule features an interactive video and a glossary.

The post 7 Generative AI Use Cases in Education appeared first on AIM.