
I spent around $30,000 on a 3-year computer science degree to become a data scientist.

This was an expensive and time-consuming process.

After graduating, I realized that I could’ve just learned all the necessary skills online instead. Top-tier universities like Harvard, Stanford, and MIT have released dozens of courses for anyone to consume.

And the best part?

They’re completely free.

Thanks to the Internet, you can now learn from some of the world’s top universities for free, from the comfort of your home.

If I could start over, here are 5 free university courses I would’ve taken to learn coding for data science.

Note: Python and R are two of the most widely used programming languages for data science, and as such, most courses in this list focus on one or both of these languages.

1. Harvard University — CS50’s Introduction to Computer Science

Harvard's CS50 course is one of the most popular entry-level programming courses offered by the university.

It takes you through the fundamentals of computer science, covering both theoretical concepts and practical applications. You will be exposed to an array of programming languages, like Python, C, and SQL.

Think of this course as a mini computer science degree packaged into 24 hours of YouTube content. For comparison, CS50 covered what took me three semesters to learn at my own university.

Here’s what you will learn in CS50:

- Programming Basics

- Data Structures and Algorithms

- Web Design with HTML and CSS

- Software Engineering Concepts

- Memory Management

- Database Management

If you want to become a data scientist, a solid foundation in programming and computer science is required. You will often be expected to extract data from databases, deploy machine learning models in production, and build model pipelines that scale.

Programs like CS50 equip you with the technical foundation needed to progress to the next stage of your learning journey.

Course Link: Harvard CS50

2. MIT — Introduction to Computer Science and Programming

MITx's Introduction to Computer Science and Programming is another introductory course designed to equip you with foundational skills in computer science and programming.

Unlike CS50, however, this course is taught primarily in Python and places a heavy emphasis on computational thinking and problem-solving.

Furthermore, MIT’s Intro to Computer Science course focuses more on data science and the practical applications of Python, making it a solid choice for students whose sole aim is to learn programming for data science.

After taking MIT’s Intro to Computer Science course, you will be familiar with the following concepts:

- Python Programming: Syntax, data types, functions

- Computational Thinking: Problem-solving, algorithm design

- Data Structures: Lists, tuples, dictionaries, sets

- Algorithmic Complexity: Big O notation

- Object-Oriented Programming: Classes, objects, inheritance, polymorphism

- Software Engineering Principles: Debugging, software testing, exception handling

- Mathematics for Computer Science: Statistics and probability, linear regression, data modeling

- Computational Models: Simulation principles and techniques

- Data Science Foundations: Data visualization and analysis
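Here is a minimal Monte Carlo sketch in Python that makes the simulation and probability items above a little more concrete. It does not come from the MITx course materials. The function name `estimate_probability` and the dice scenario are illustrative assumptions. The code estimates the probability that two fair dice sum to 7. The exact value is 6/36 = 1/6, so you can check the simulation against it.

```python
import random


def estimate_probability(trials: int, seed: int = 0) -> float:
    """Estimate P(two fair dice sum to 7) by repeated random simulation.

    Hypothetical example for illustration; not from the MITx course materials.
    """
    rng = random.Random(seed)  # dedicated, seeded generator for reproducible runs
    hits = 0
    for _ in range(trials):
        total = rng.randint(1, 6) + rng.randint(1, 6)  # randint includes both ends
        if total == 7:
            hits += 1
    return hits / trials  # fraction of trials that "succeeded"


if __name__ == "__main__":
    # The exact answer is 6/36 = 1/6 (about 0.1667); the estimate should be close.
    print(estimate_probability(100_000))
```

Seeding a dedicated `random.Random` instance makes each run reproducible. Increasing `trials` tightens the estimate around the exact answer.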

You can audit this course for free on edX.

Course Link: MITx — Introduction to Computer Science

3. MIT — Introduction to Algorithms

Once you’ve completed a foundational computer science course like CS50, you can move on to MIT’s Introduction to Algorithms course.

This program will teach you the design, analysis, and implementation of algorithms and data structures.

As a data scientist, you will often work with large datasets that are computationally expensive to process, and you will need solutions whose performance holds up as those datasets grow.

This course will teach you to optimize data processing tasks and make informed decisions about which algorithms to use based on the available computational resources.
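The sketch below shows why algorithm choice matters as data grows. It compares two ways of checking a list for duplicates, and the function names are hypothetical. The first function compares every pair of items, which takes quadratic time. The second uses a hash set, which takes linear expected time.

```python
import time


def has_duplicates_quadratic(values: list) -> bool:
    """Compare every pair of items: O(n^2) comparisons (hypothetical helper)."""
    for i in range(len(values)):
        for j in range(i + 1, len(values)):
            if values[i] == values[j]:
                return True
    return False


def has_duplicates_linear(values: list) -> bool:
    """Remember seen items in a hash set: O(n) expected time (hypothetical helper)."""
    seen = set()
    for value in values:
        if value in seen:
            return True
        seen.add(value)
    return False


# A list with no duplicates forces both functions to scan everything.
data = list(range(2_000))
for func in (has_duplicates_quadratic, has_duplicates_linear):
    start = time.perf_counter()
    result = func(data)
    elapsed = time.perf_counter() - start
    print(f"{func.__name__}: {result} in {elapsed:.4f}s")
```

The set-based version trades extra memory for speed and only works for hashable values. The course teaches you to reason about exactly this kind of trade-off. Exact timings will vary by machine.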

Here’s what you’ll learn in Introduction to Algorithms:

- Algorithm Analysis

- Data Structures

- Sorting Algorithms

- Graph Algorithms

- Algorithmic Techniques

- Hashing

- Computational Complexity
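Merge sort is a classic example of the sorting and algorithm-analysis topics above. It splits the input in half, sorts each half recursively, and merges the results in O(n log n) time. The following is a minimal sketch for illustration, not code from the MIT course. In everyday Python you would simply call the built-in `sorted()`.

```python
def merge_sort(items: list) -> list:
    """Return a new sorted list using top-down merge sort (O(n log n))."""
    if len(items) <= 1:
        return list(items)  # copy so the caller's list is never modified
    mid = len(items) // 2
    left = merge_sort(items[:mid])
    right = merge_sort(items[mid:])
    return merge(left, right)


def merge(left: list, right: list) -> list:
    """Merge two already-sorted lists into one sorted list."""
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        # Using <= takes equal items from the left first, which keeps the sort stable.
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    # One side is exhausted; append whatever remains of the other.
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


print(merge_sort([5, 2, 9, 1, 5, 6]))  # [1, 2, 5, 5, 6, 9]
```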

You can find all the lectures for Introduction to Algorithms on MIT OpenCourseWare.

Course Link: MIT — Introduction to Algorithms

4. University of Michigan — Python for Everybody

Python for Everybody is an entry-level programming specialization focused on teaching Python.

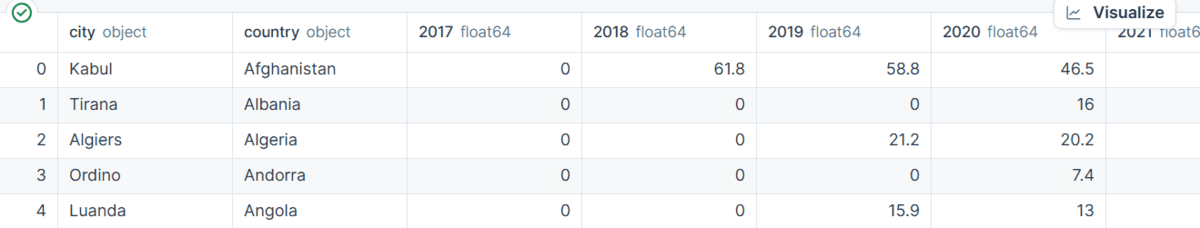

This is a 5-course learning path that covers the basics of Python, data structures, API usage, and accessing databases with Python.

Unlike the previous courses listed, Python for Everybody focuses on practical application rather than on theoretical concepts.

This makes it ideal for those who want to immediately dive into the implementation of real-world projects.

Here are some concepts you’ll be familiar with by the end of this 5-course specialization:

- Python Variables

- Functions and Loops

- Data Structures

- APIs and Accessing Web Data

- Using Databases with Python

- Data Visualization with Python
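The API and database topics above often come together in practice. You receive JSON from a web service and store it somewhere you can query. The sketch below uses a hard-coded JSON string in place of a real API response, so it runs offline. It relies on Python’s built-in `json` and `sqlite3` modules with an in-memory database. The table name, fields, and data are hypothetical, and the specialization’s own exercises may use different data and tools.

```python
import json
import sqlite3

# Hypothetical JSON text standing in for a real API response.
payload = """
[
  {"city": "Boston", "temp_c": 12.5},
  {"city": "Chicago", "temp_c": 8.0},
  {"city": "Austin", "temp_c": 24.1}
]
"""


def load_records(raw: str) -> list[tuple[str, float]]:
    """Parse the JSON text into (city, temp_c) tuples."""
    return [(row["city"], row["temp_c"]) for row in json.loads(raw)]


def store_and_query(records, min_temp: float) -> list[tuple[str, float]]:
    """Insert records into an in-memory SQLite table, then filter them with SQL."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE weather (city TEXT, temp_c REAL)")
        # "?" placeholders let sqlite3 insert values safely, without string building.
        conn.executemany("INSERT INTO weather VALUES (?, ?)", records)
        cursor = conn.execute(
            "SELECT city, temp_c FROM weather WHERE temp_c >= ? ORDER BY temp_c DESC",
            (min_temp,),
        )
        return cursor.fetchall()
    finally:
        conn.close()


print(store_and_query(load_records(payload), min_temp=10))
# [('Austin', 24.1), ('Boston', 12.5)]
```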

You can audit this course for free on Coursera.

Course Link: Python for Everybody

5. Johns Hopkins University — R Programming

You might have noticed that most of the courses so far focus on Python programming.

That’s because I’m a bit of a Python aficionado.

I find the language versatile and user-friendly, and knowledge of Python is transferable to a broad range of fields beyond just data science.

However, there are some benefits to learning R for data science. R was designed specifically for statistical analysis, and it has a wide range of specialized statistical packages, some of which have no direct equivalent in Python.

You should consider learning R if you’re interested in deep statistical analysis, academic research, and advanced data visualization. If you’d like to learn R, the R Programming specialization by Johns Hopkins University is a great place to start.

Here’s what you’ll learn in this specialization:

- Data Types and Functions

- Control Flow

- Reading, Cleaning, and Processing Data in R

- Exploratory Data Analysis

- Data Simulation and Profiling

You can audit this course for free on Coursera.

Course Link: R Programming Specialization

Learn Coding for Data Science: Next Steps

Once you’ve completed one or more courses outlined in this article, you will be equipped with a ton of newfound programming knowledge.

But the journey doesn’t end here.

If your end goal is to build a career in data science, here are some potential next steps you should consider:

1. Practice Your Coding Skills

I suggest visiting coding challenge websites like HackerRank and LeetCode to practice your programming skills.

Since programming is a skill best developed through incremental challenges, I recommend starting with the problems labeled “Easy” on these platforms, such as adding or multiplying two numbers.
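An “Easy” problem of this kind usually comes down to parsing some input and returning a result. The sketch below is a generic example, not the exact format of any particular HackerRank or LeetCode problem, and the function name `solve` is hypothetical. Keeping the logic in a function that takes the raw input text makes it easy to test on your own machine before submitting.

```python
def solve(raw_input: str) -> str:
    """Parse two whitespace-separated integers and return their sum as text.

    Hypothetical helper; real problems define their own input format.
    """
    a, b = map(int, raw_input.split())
    return str(a + b)


# Locally, test with a literal string. If a problem's template reads from
# standard input (check each problem), you would pass sys.stdin.read() instead.
print(solve("2\n3"))  # 5
```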

As your programming skills improve, you can start increasing the level of difficulty and solve harder problems.

When I was starting out in the field of data science, I did HackerRank problems every day for around 2 months and found that my programming skills had dramatically improved by the end of that time frame.

2. Create Personal Projects

Once you’ve spent a few months solving HackerRank challenges, you will find yourself prepared to tackle end-to-end projects.

You can begin by creating a simple calculator app in Python, and progress to more challenging projects like a data visualization dashboard.
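A first version of the calculator idea can be very small. This is a hypothetical sketch that assumes input of the form “number operator number” (for example `3 + 4`). A real app would add an input loop or a graphical interface on top of it.

```python
import operator

# Map each supported symbol to the standard-library function that implements it.
OPERATIONS = {
    "+": operator.add,
    "-": operator.sub,
    "*": operator.mul,
    "/": operator.truediv,
}


def calculate(expression: str) -> float:
    """Evaluate a 'number operator number' expression such as '3 + 4'.

    Hypothetical helper for illustration.
    """
    parts = expression.split()
    if len(parts) != 3:
        raise ValueError("expected input like '3 + 4'")
    left, symbol, right = parts
    if symbol not in OPERATIONS:
        raise ValueError(f"unsupported operator: {symbol}")
    return OPERATIONS[symbol](float(left), float(right))


print(calculate("3 + 4"))   # 7.0
print(calculate("10 / 4"))  # 2.5
```

Looking up the operator in a dictionary keeps the code short, and adding a new operation takes one line. Dividing by zero still raises `ZeroDivisionError`, which a finished app should catch and report to the user.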

If you still don’t know where to start, check out this list of Python project ideas for inspiration.

3. Build a Portfolio Website

After you’ve learned to code and created a few personal projects, you can display your work on a centralized portfolio website.

When potential employers are looking to hire a programmer or a data scientist, they can view all your work (skills, certifications, and projects) in one place.

If you’d like to build a portfolio website of your own, I’ve created a complete video tutorial on how to build a data science portfolio website for free with ChatGPT.

You can check out the tutorial for a step-by-step guide on creating a visually appealing portfolio website.

Natassha Selvaraj is a self-taught data scientist with a passion for writing. Natassha writes on everything data science-related and is a true master of all data topics. You can connect with her on LinkedIn or check out her YouTube channel.
