Microsoft's Bing has struggled for years to gain a foothold among search engines. But the company's recent deep dive into artificial intelligence (AI) is breathing new life into search, with its AI-powered Bing Chat feature.

Often referred to as Bing ChatGPT, the new Bing is very different from its more popular competitor. It uses GPT-4 and performs more like an AI-powered search engine in a conversational format, but that's just the beginning.

Unlike ChatGPT, the new Bing has internet access, giving it the ability to provide more up-to-date responses. ChatGPT, by contrast, is trained only on data up to 2021, so it cannot provide answers on current events.

How to use the new Bing

What you need: Getting started with the new Bing requires you to use Microsoft Edge and to log in to a Microsoft account. When you access Microsoft Bing, you can choose whether to use the search or chat formats.

On the Bing website, there will be a "Chat" option on the top left.

Any time you perform a Bing search, you can switch to Chat by clicking on it below the search bar.

You can see Bing offers a lot of different options to optimize the conversation.

It's time to ask Bing anything you'd like to know.

FAQ

How is Bing Chat different from a search engine?

The biggest difference between an AI chatbot such as Bing Chat and a search engine is the conversational tone in which search results are presented. Intelligently formatting search results into an answer to a specific question makes it easier for anyone who is looking to find something out on the internet.

Beyond search engine capabilities, Bing Chat is a fully fledged AI chatbot and can do many of the things that other chatbots, like ChatGPT, can do. You can now use Microsoft Bing to generate text, such as an essay or a poem, to write code, or to ask complex questions and hold a conversation with follow-up questions.

Does Bing use ChatGPT?

Bing does not use ChatGPT, but it does use GPT-4 to formulate its answers, though without GPT-4's visual input feature. The new Bing is the only way to use GPT-4 for free at this time, and Microsoft claims the integration with the latest language model makes Bing more powerful and accurate than ChatGPT.

Many users prefer one or the other. In my experience, Bing Chat can sometimes be a bit slow to respond and can miss some prompts, but that's typically remedied by asking a follow-up question such as, "Did you search for that?" However, I also believe the new version of Bing offers users more control over their experience and a more intuitive UI.

ChatGPT is powered by GPT-3.5, an earlier version of OpenAI's language model. When GPT-4 becomes widely available through an updated version of ChatGPT, it will be through OpenAI's subscription service, ChatGPT Plus, which costs $20 a month.

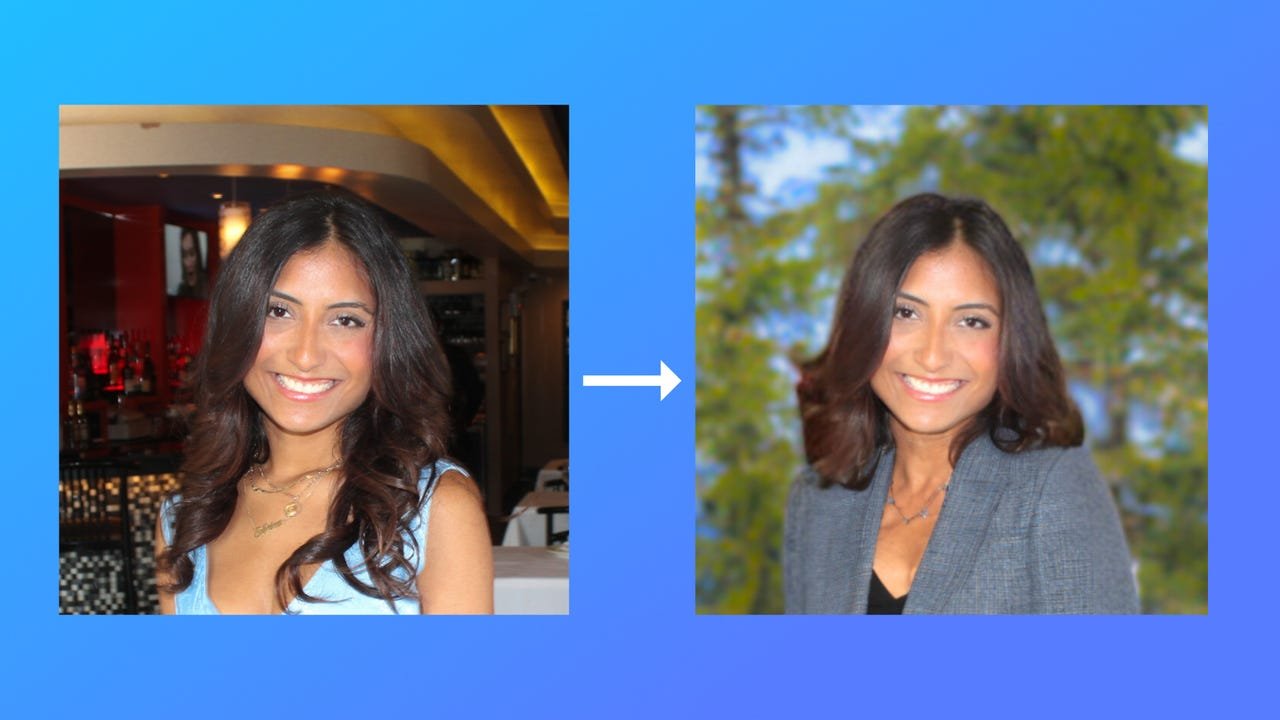

Is there a Bing image creator?

Microsoft recently announced Bing Image Creator, which uses DALL-E, an AI image generator from OpenAI. The tool is built into Bing in Microsoft Edge, so users can give Bing a prompt to create images within an existing chat instead of going to a separate website.

Does Bing Chat give wrong answers?

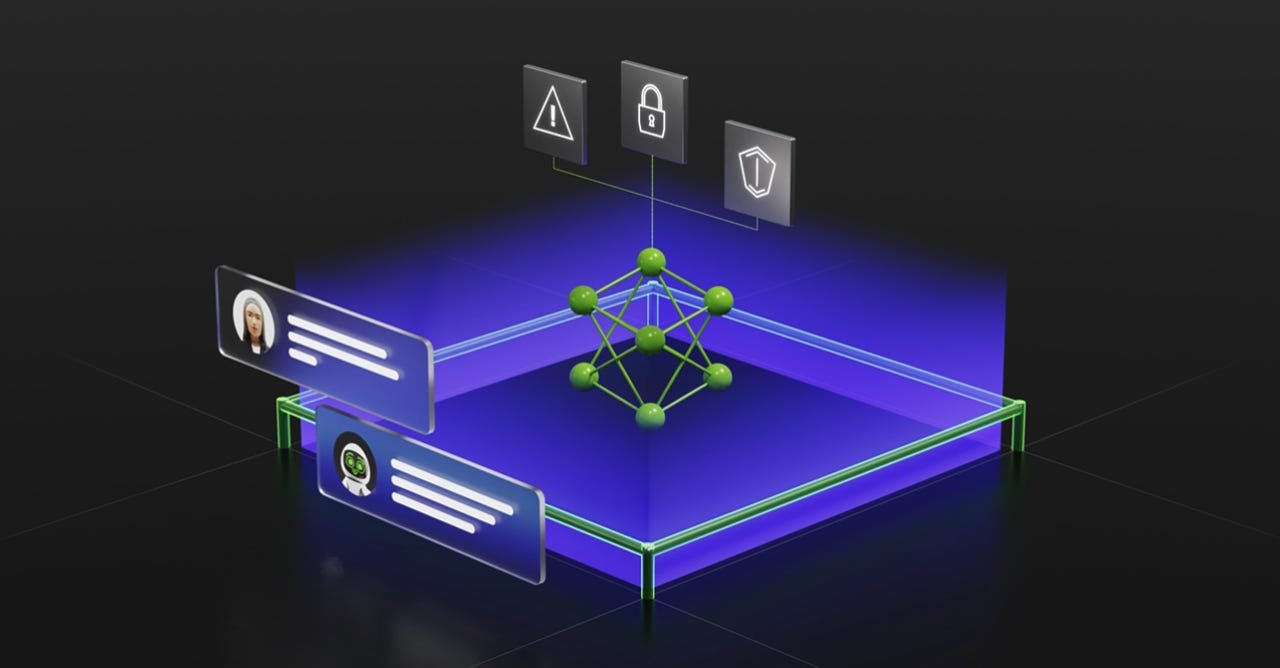

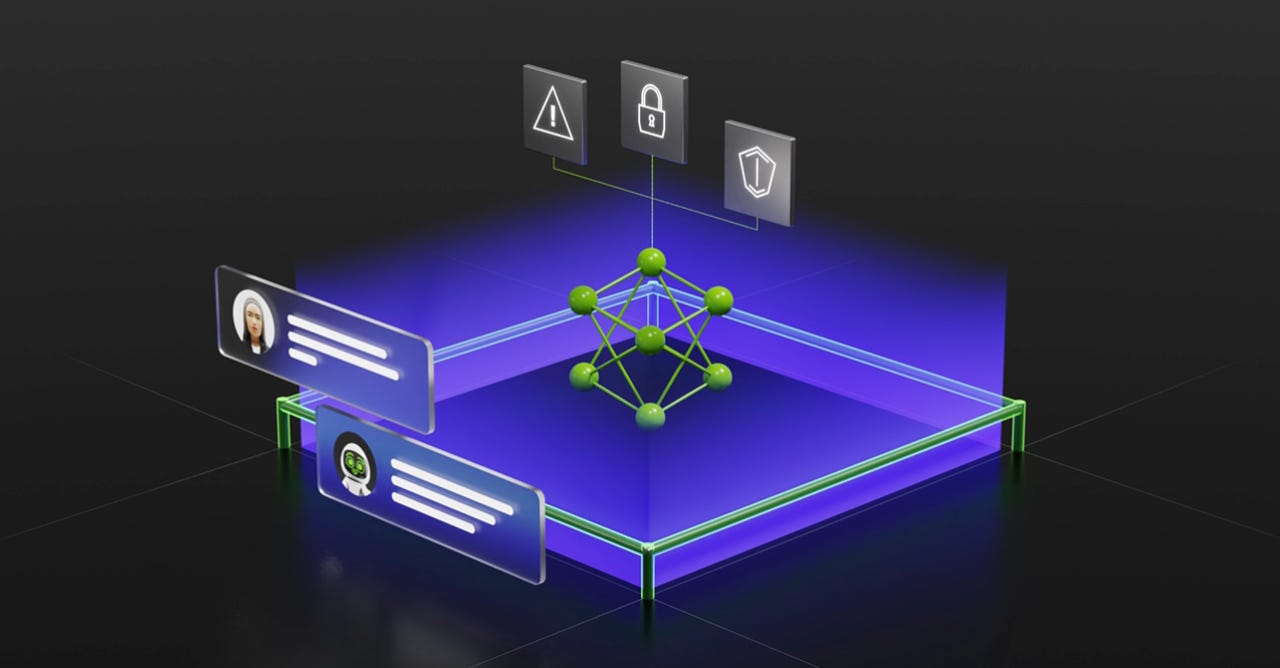

Just like ChatGPT and other large language models, the new AI-powered Bing Chat is prone to giving out misinformation. Most of the output that the new Bing offers as answers is drawn from online sources, and we know we can't believe everything we read on the internet. Similarly, when you use the new Bing in chat mode, it can generate nonsensical answers that are unrelated to the original question.

Is Bing Chat free?

Bing Chat is not only free, it's also the best way to preview GPT-4 for free right now. You can use the new Bing to ask questions, get help with a problem, or seek inspiration, but you are limited to 15 questions per interaction and 150 conversations a day.

Are my conversations with Bing Chat saved?

Microsoft defaults to clearing your conversations when you click the New Topic button, so your conversations aren't saved beyond the duration of each chat. However, search history is saved in your account, depending on your settings.

Is there a waitlist for the new Bing?

At the time of publication, Edge users who log in to their Microsoft account should be able to access Microsoft's new AI-powered Bing right away.

Can you use the new Bing on mobile?

If you have the Edge browser on your mobile device, you can use the new AI-powered Bing search in chat mode, much like you would on your computer. There's also the option of skipping the Edge browser and downloading the Microsoft Bing app from your device's app store. The app provides a direct line to the Bing AI chatbot, so you don't have to open a website whenever you want to use it.

Both the Microsoft Bing app and the Edge browser support voice dictation on mobile, so you can ask your questions without even having to type them in.