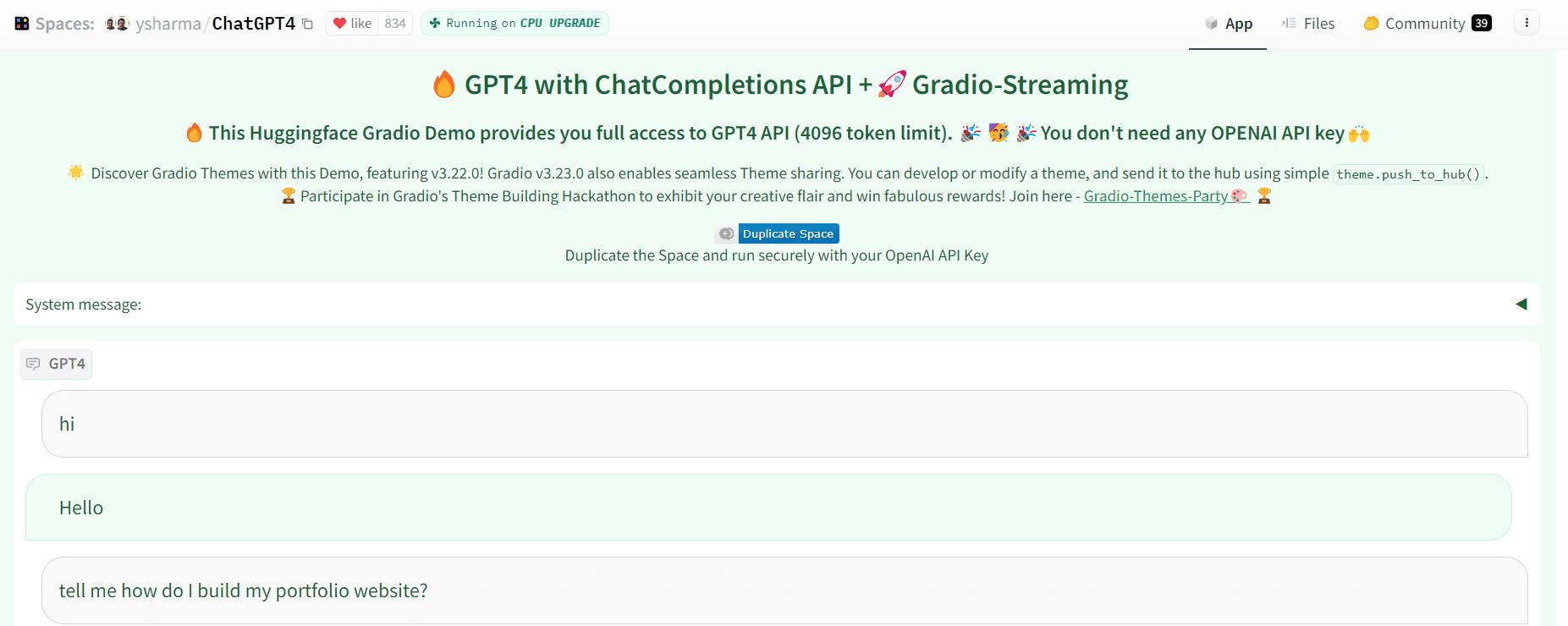

A whole ecosystem, from hardware vendors to application developers, is emerging around generative AI and will help realise its commercial potential.

In the second half of 2022 and the beginning of 2023, tech pioneers unveiled generative AI solutions, astounding investors, business executives, and the general public with the technology’s capacity to generate wholly original and seemingly human-made prose and images.

And the response has been unprecedented.

ChatGPT, a generative AI language model from OpenAI that produces original content in response to user prompts, attracted one million users in just five days. Apple needed more than two months to reach the same level of adoption for the iPhone. Facebook had to wait more than ten months, and Netflix longer still, to reach the same user base.

My role as a leader of Data & Analytics has given me a front-row seat to the benefits and risks of generative AI, and I have spent the past few months evaluating several generative AI start-ups. In my opinion, generative AI is not the omen of doom that detractors claim it to be, but it is a powerful tool that needs human oversight to be used responsibly.

Over the past three years, venture capital firms have invested over $1.7 billion in generative AI solutions, with the most funding going to AI-enabled drug discovery and AI software coding.

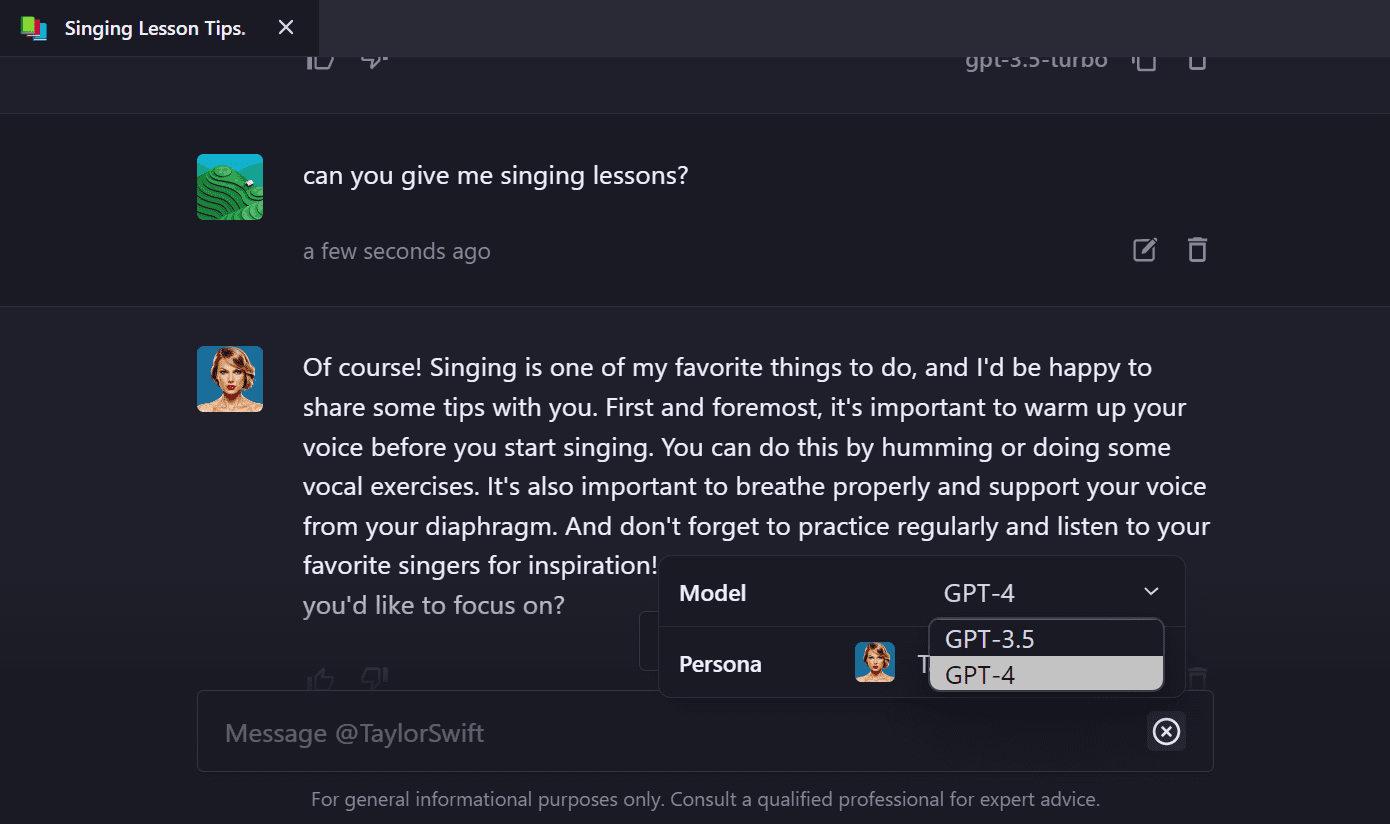

ChatGPT is not the only generative AI product gaining traction, either. Within 90 days of its release, Stability AI’s Stable Diffusion, which creates images from text descriptions, gained more than 30,000 stars on GitHub, eight times faster than any previous package.

A brief explanation of generative AI

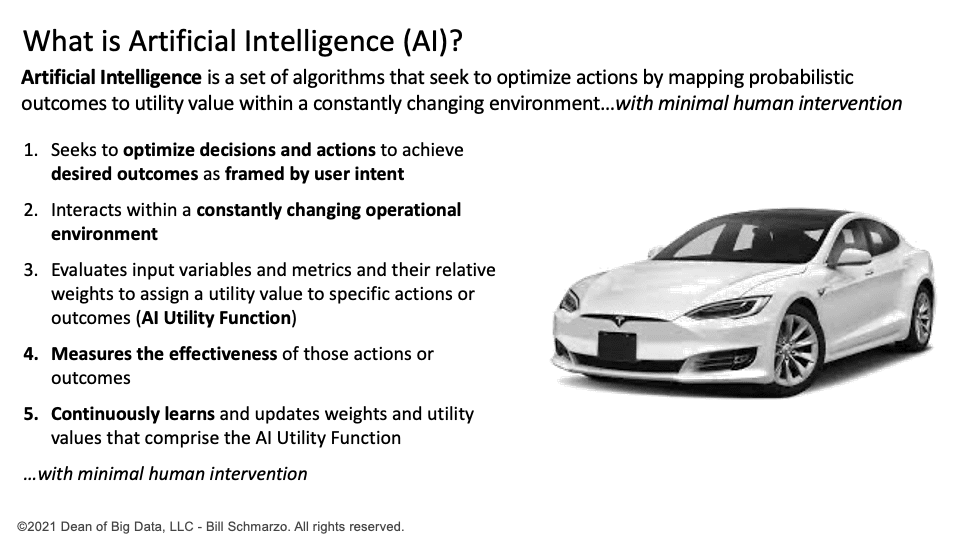

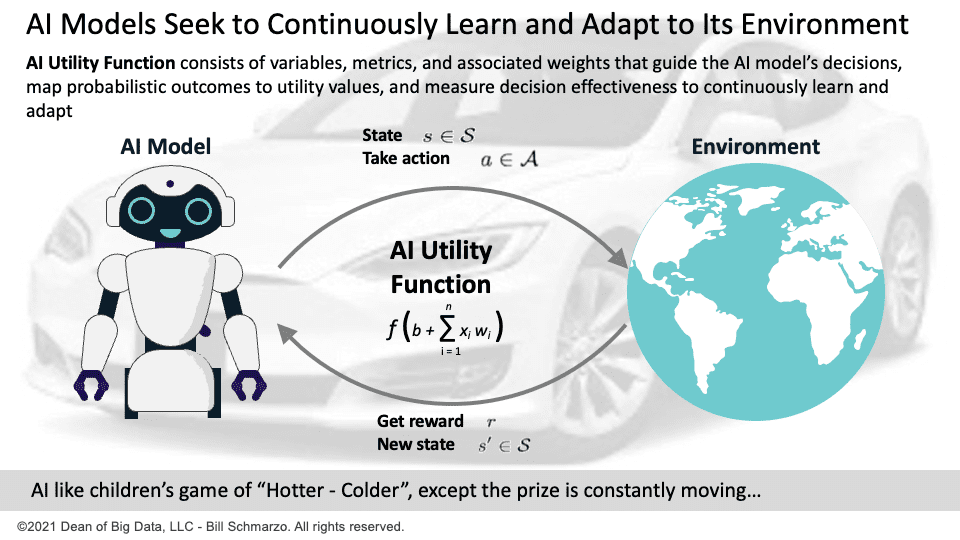

Generative AI is a branch of machine learning that uses algorithms to create new data, such as images, text, or sound. It resembles a virtual author or artist producing creative writing and art. However, it is not a real artist or writer, merely a set of clever algorithms at work behind the scenes.

Transformer-based models and GANs (Generative Adversarial Networks) are the two most widely used types of generative AI model at the moment.

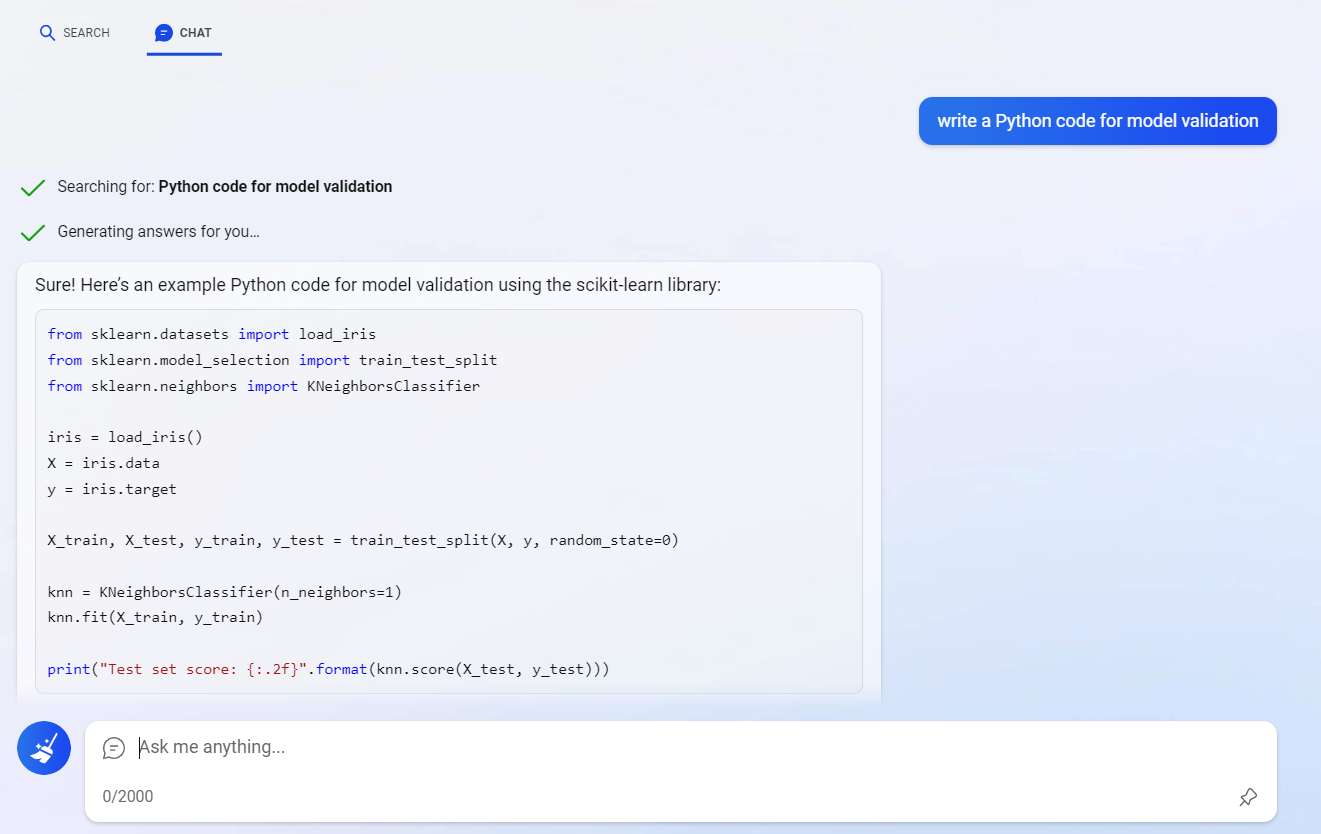

GANs are well suited to generating visual and multimedia content from text and image inputs. Transformer-based models, such as GPT (Generative Pre-trained Transformer) language models, are trained on data from the Internet and can produce a variety of text content, including press releases, whitepapers, and website articles.
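To make the idea of "learning from existing data and then producing new data" concrete, here is a deliberately tiny sketch. It is not a transformer or a GAN: it is a simple bigram (Markov chain) text generator, swapped in because it fits in a few lines. The corpus, the function names, and the seed are all made up for illustration.

```python
import random
from collections import defaultdict


def train_bigram_model(text):
    """Record, for every word, the words that followed it in the training text."""
    words = text.split()
    followers = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return dict(followers)


def generate(model, start, length, seed=None):
    """Build a new word sequence by repeatedly sampling an observed follower."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length - 1):
        options = model.get(output[-1])
        if not options:  # dead end: this word was never followed by anything
            break
        output.append(rng.choice(options))
    return " ".join(output)


# Hypothetical training text; a real model would learn from far more data.
corpus = (
    "generative ai creates new text and generative ai creates new images "
    "and new images need human review"
)
model = train_bigram_model(corpus)
print(generate(model, start="generative", length=8, seed=0))
```

The output is a sentence that may never have appeared in the training text, yet every word transition in it was learned from that text. Modern generative models follow the same learn-then-sample pattern, with vastly richer statistics.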

Why should you care about generative AI?

Well, there are plenty of reasons. The top three are as follows:

- It can create entirely new data that doesn’t already exist. Think about the countless opportunities for investigation and experimentation!

- It can improve existing algorithms by producing training data for new neural networks or by designing better deep learning architectures.

- Basically, it’s a machine that creates better machines.

But that’s not all.

Gartner named generative AI one of the most disruptive and rapidly evolving technologies in its 2022 Emerging Technologies and Trends Impact Radar report.

And get this – they’ve made some pretty bold predictions about its future impact.

By 2025, generative AI is expected to generate 10% of all data (currently, less than 1%) and 20% of all test data for consumer-facing applications. Plus, it’ll be used in 50% of drug discovery and development projects by 2025.

And by 2027, a whopping 30% of manufacturers will be using it to improve their product development process.

Generative AI is making waves. So, pretty important stuff, right?

Industry-specific applications of generative AI

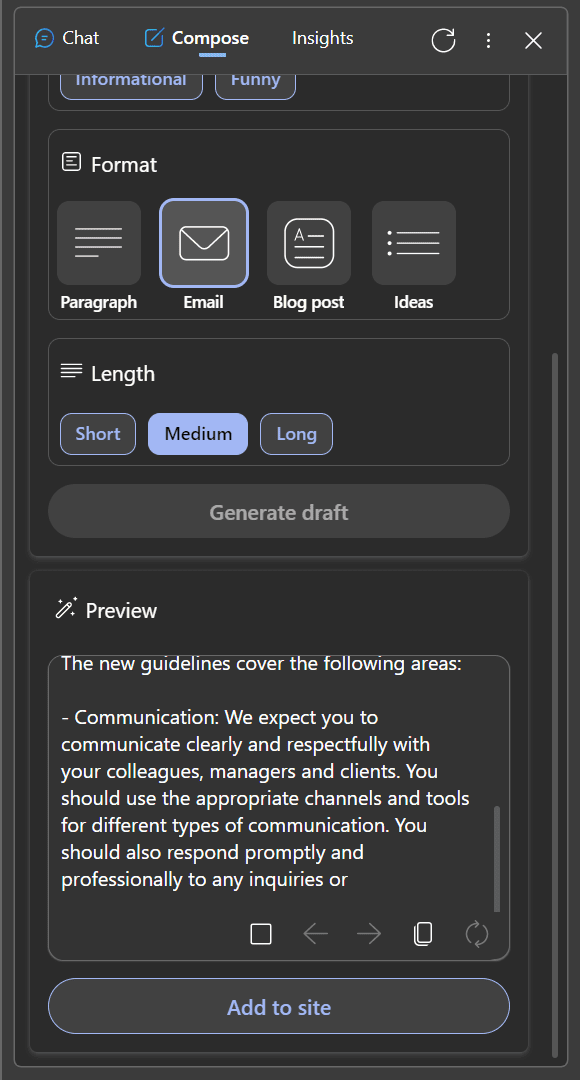

Business models built on generative AI can help businesses not only automate routine work but also increase revenue. One of the most practical uses of generative AI is content production.

Education

Generative AI can produce personalized lesson plans that give students the most effective and tailored education possible. These plans are built by analyzing student data such as past performance, skill set, and any feedback students have given on curriculum content. This helps ensure that each student, especially those with disabilities, receives an individualized experience designed to maximize success.

Logistics and transportation

Generative AI can accurately convert satellite images into map views, which makes it possible to investigate previously unexplored locations. This could be extremely helpful for logistics and transportation businesses looking to move into new areas.

Travel industry

Face identification and verification systems at airports can benefit from generative AI. By assembling a full-face image of a passenger from photos taken at various angles, the technology can make it simpler to identify travellers and confirm their identity.

Banking

Fraud detection is another area where generative AI is proving to be a useful tool. Banks are training ML and AI algorithms on their historical data to suggest risk rules. By exposing the system to past cases of both fraud and non-fraud, they can train it to block or allow specific user actions depending on the likelihood of fraud. This makes fraud detection quicker and more effective than it would be with humans alone.
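The block-or-allow decision can be sketched in a few lines. This is an illustrative toy, not how any particular bank does it: it assumes a single feature (transaction amount), models fraud and non-fraud history as two normal distributions, and applies Bayes' rule. The transaction amounts, the 5% prior, and the 0.5 threshold are all hypothetical.

```python
import math
from statistics import mean, stdev


def fit_gaussian(values):
    """Summarise one class of past transactions by its mean and standard deviation."""
    return mean(values), stdev(values)


def gaussian_pdf(x, mu, sigma):
    """Density of a normal distribution with mean mu and std sigma at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))


def fraud_probability(amount, legit_params, fraud_params, prior_fraud):
    """Posterior probability that a transaction is fraudulent (Bayes' rule)."""
    p_fraud = gaussian_pdf(amount, *fraud_params) * prior_fraud
    p_legit = gaussian_pdf(amount, *legit_params) * (1 - prior_fraud)
    total = p_fraud + p_legit
    if total == 0.0:  # both densities underflowed; fall back to the prior
        return prior_fraud
    return p_fraud / total


def decide(amount, legit_params, fraud_params, prior_fraud=0.05, threshold=0.5):
    """Block the transaction when the estimated fraud likelihood reaches the threshold."""
    score = fraud_probability(amount, legit_params, fraud_params, prior_fraud)
    return "block" if score >= threshold else "allow"


# Hypothetical past transaction amounts, labelled by outcome.
legit_amounts = [20, 35, 50, 42, 18, 60, 75, 30]
fraud_amounts = [900, 1200, 950, 1500, 1100]

legit_params = fit_gaussian(legit_amounts)
fraud_params = fit_gaussian(fraud_amounts)

for amount in (40, 1000):
    print(amount, decide(amount, legit_params, fraud_params))
```

In practice the threshold is a business choice: lowering it blocks more fraud but also more legitimate customers, which is one reason a human still needs to review the outcomes.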

It’s crucial to remember that the labour being automated is typically low-level, tedious, and repetitive, whether it’s fraud detection or product creation. To guarantee the end product’s quality and safety, human intervention is still required. The true benefit of generative AI is as a multiplier for general-purpose productivity and efficiency.

The Future Of Generative AI

We do not yet know the full extent of what generative AI can do. By 2025, more than 30% of new drugs and materials are predicted to be discovered using generative AI, which would result in significant cost savings for the healthcare sector. With its ability to anticipate market trends and investment opportunities and so lower risk, generative AI is poised to be a potent tool in financial forecasting and scenario building. It could also have a significant impact on the entertainment sector, giving businesses the ability to improve visual effects, preserve and colourise movies, and even age or de-age performers’ faces.

Generative AI can analyse enormous volumes of data and patterns, but it cannot take the place of human originality, creativity, and common sense. Human oversight is therefore essential in both its development and its deployment. Businesses and decision-makers should approach generative AI with caution so that they can address ethical issues and make sure it grows the economic pie to the benefit of humanity.

This article is written by a member of the AIM Leaders Council. AIM Leaders Council is an invitation-only forum of senior executives in the Data Science and Analytics industry.

The post Council Post: Beyond ChatGPT – Exploring opportunities in the Generative AI value chain appeared first on Analytics India Magazine.