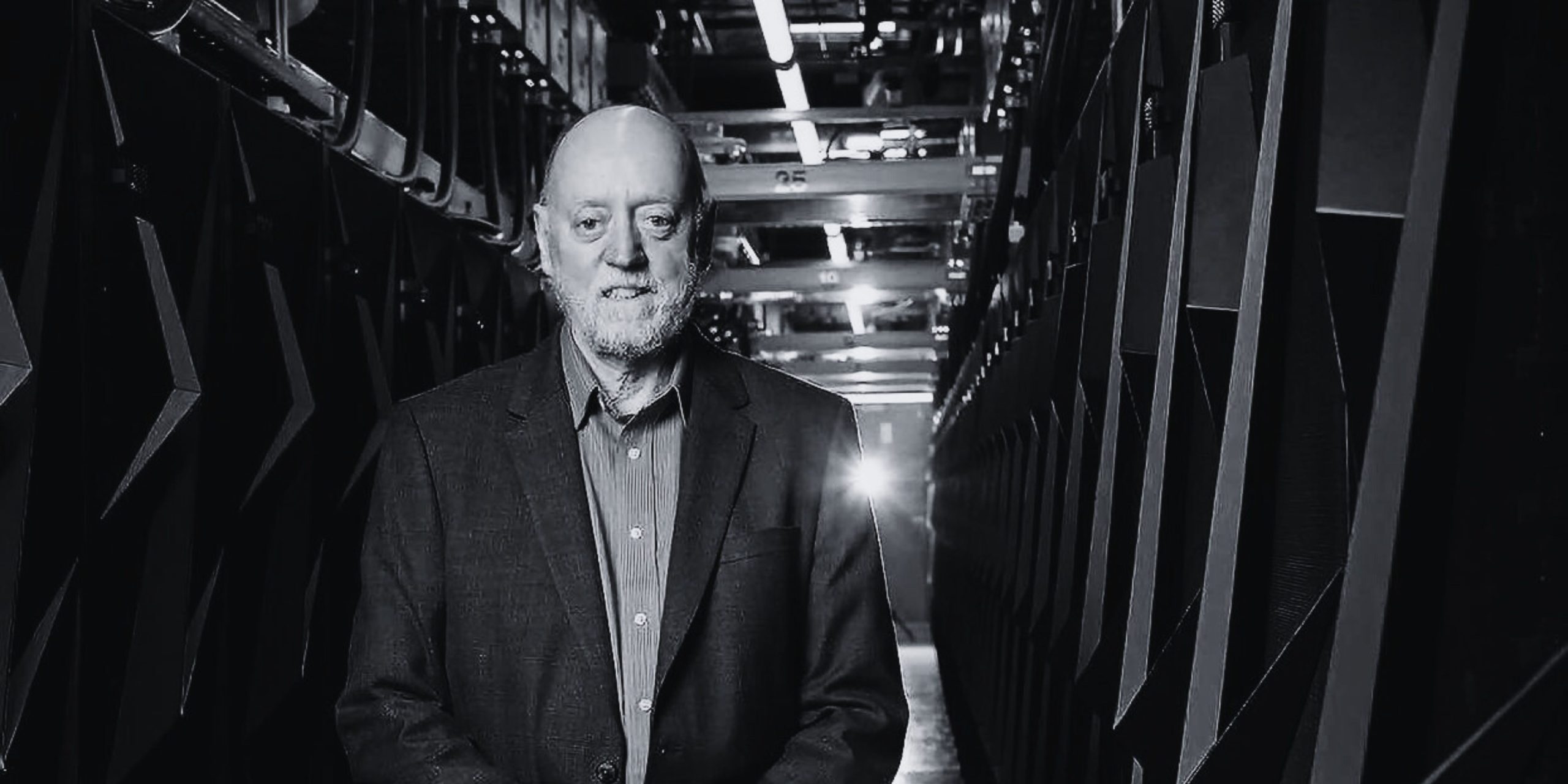

Ask any developer and they will tell you how expensive it is to build or run a GPT model. A primary reason is the reliance on traditional or outdated GPUs. “Hardware for machine learning is being exploited today,” said American computer scientist and Turing Award winner Jack Dongarra.

Dongarra further emphasised the need for a better understanding of utilising these resources effectively. He advocated for identifying the appropriate applications and environments where they can be optimally employed. He also underscored the importance of developing software frameworks that drive computations efficiently and reciprocally support hardware capabilities.

So, what’s the solution then? Looking to the future, Dongarra envisions a multidimensional approach to computing, where various technologies, including CPU, GPU, machine learning, neuromorphic, optical, and quantum computing, converge within high-performance computers.

“Quantum is something that’s on the horizon. So I see each of these devices part of the spectrum by which we might be putting them together in our high performance computer. It’s an interesting array of devices that we will be putting together. Understanding how to use them efficiently will be more challenging,” said the 72-year-old scholar.

Capital vs Innovation

Drawing parallels between capital and innovation, Dongarra said, “Amazon has their own set of hardware resources, Graviton. Google has TPU. Microsoft has their own hardware that they’re deploying in their cloud. They have this incredible richness that they can invest in the hardware to solve specifically the problems they need to address. That’s quite a departure from what we experience in high performance computing where we don’t have that luxury. We don’t have that large amount of resources funding at our disposal to invest in hardware to solve our specific problems.”

Comparing the technological prowess of Apple with that of traditional computer companies such as HP and IBM, Dongarra pointed to a significant difference in market capitalisation: Apple is valued in the trillions of dollars, while the combined market capitalisation of HP and IBM falls short of the trillion-dollar mark. “We have cloud based companies with their amount of revenue they can be innovative and build their own hardware. For instance, iPhones consist of many processors designed specifically to aid in what the iPhone does. They’re replacing software with hardware. They do that because it’s faster and that’s quite different from the high performance computing community in general,” he said.

Supercomputers, on the other hand, are predominantly built from off-the-shelf components from players like Intel and AMD. These processors are often supplemented with GPUs, and the overall system is connected through technologies such as InfiniBand or Ethernet. Dongarra explained that the scientific community, which relies on these supercomputers, faces funding constraints that limit its ability to invest in specialised hardware development.

IBM has been at the forefront of quantum computing, but last week at Think, CEO Arvind Krishna unveiled a vision emphasising the potential of combining hybrid cloud technology and AI alongside quantum computing over the coming decade. Though the company is often considered traditional with its focus on hardware, its recent push into generative AI suggests otherwise.

Story Behind LINPACK

“I wanted to be a high school teacher,” Dongarra said. Computer science was not yet an established discipline when he was a student. His passion for numerical methods and software development was kindled during his time at Argonne National Laboratory near Chicago, where developing software for numerical computations solidified his interest in the field. That experience led him to earn a master’s degree while working full time on designing portable linear algebra software packages that gained global recognition.

Soon after, Dongarra embarked on a journey to the University of New Mexico. During this period, he made a groundbreaking contribution by creating the LINPACK benchmark, which measures the performance of supercomputers. Joined by Hans Meuer, he established the iconic Top500 list in 1993, tracking the progress of high-performance computing and illuminating the fastest computers worldwide.

Today, Dongarra continues to shape the future of computing through his ongoing research at the University of Tennessee, which explores the convergence of supercomputing and AI. As AI and machine learning continue to gain momentum, his work focuses on optimising the performance of algorithms on high-performance systems, enabling faster and more accurate computations.

A ‘2001: A Space Odyssey’ Fan

In the 1980s, a luminary emerged in the realm of computing, where innovation reigns supreme: Jack Dongarra. “At almost every turn, we see computational people, looking for alternative ways to solve problems and they see AI as a way. But AI is not going to solve the problem; it’s going to help them in terms of the solution to the problems,” said the 2021 ACM Turing Award recipient. He received the award for contributions that ensured high-performance computational software kept pace with advances in hardware technology.

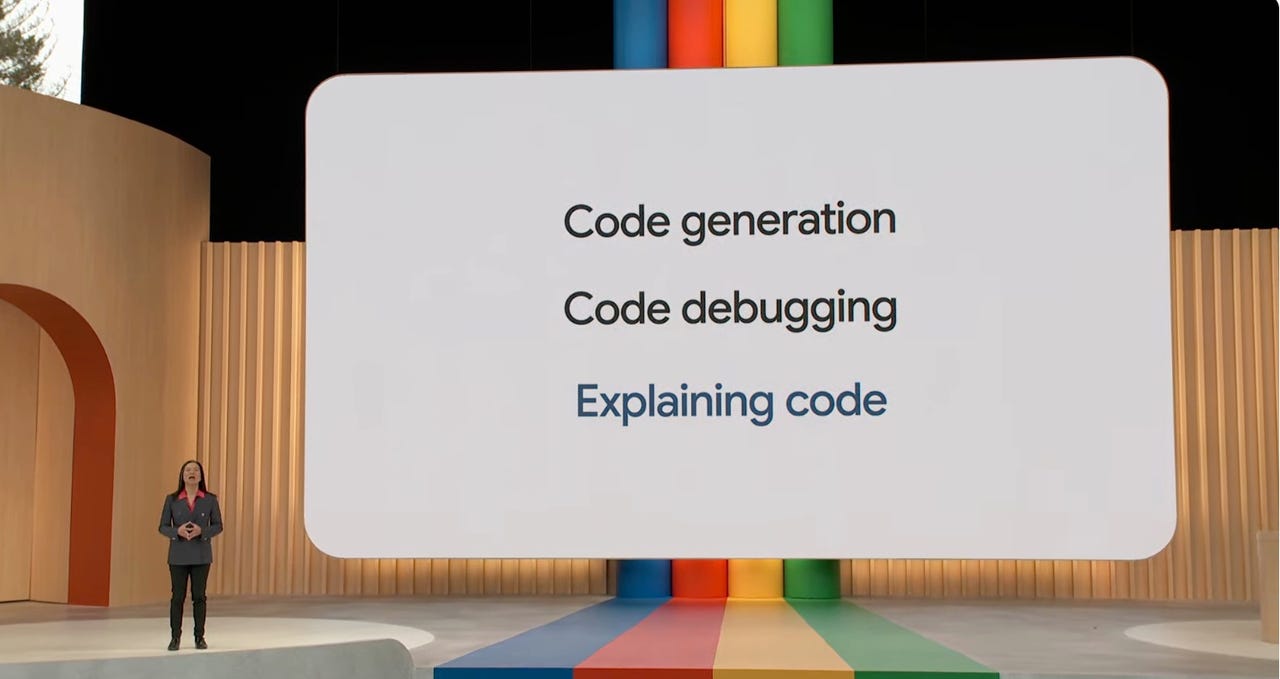

“AI has really taken off recently because of a number of reasons. One is the tremendous amount of data we have today on the internet. Resources that can be mined to help with the training process. So a flood of data that we have available. We have processors that can do the computation at a very fast rate. So we have computing devices which can be optimised and be used very effectively in helping to train,” he said while emphasising the evolving technology.

Dongarra also highlighted the crucial role of linear algebra in AI algorithms, emphasising the importance of efficient matrix multiplication and steepest descent algorithms. “Many things have come into place that allow AI machine learning to really help and be a very useful resource. AI has really had a big impact in many areas of science like drug discovery, climate modelling and biology, in drug discovery in materials and cosmology in high energy physics,” he added.
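To make the linear algebra point concrete, here is a minimal Python sketch of steepest descent on a small quadratic problem. It is an illustration only and not drawn from Dongarra's own software: the helper names (`matvec`, `dot`, `steepest_descent`), the 2x2 matrix, the tolerance and the iteration cap are all assumptions chosen for the example. Each iteration is dominated by matrix-vector products, which is the kind of operation tuned linear algebra libraries are built to accelerate.

```python
# Steepest descent for f(x) = 0.5 * x^T A x - b^T x, with A symmetric
# positive definite. The minimiser of f is the solution of A x = b.
# All names and values here are hypothetical, chosen for illustration.

def matvec(A, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in A]

def dot(u, v):
    """Inner product of two vectors."""
    return sum(u_i * v_i for u_i, v_i in zip(u, v))

def steepest_descent(A, b, x0, tol=1e-10, max_iter=1000):
    x = list(x0)
    for _ in range(max_iter):
        # Gradient of f at x is A x - b.
        g = [ax_i - b_i for ax_i, b_i in zip(matvec(A, x), b)]
        gg = dot(g, g)
        if gg ** 0.5 < tol:
            break
        # Exact line search along the negative gradient direction.
        alpha = gg / dot(g, matvec(A, g))
        x = [x_i - alpha * g_i for x_i, g_i in zip(x, g)]
    return x

A = [[4.0, 1.0], [1.0, 3.0]]
b = [1.0, 2.0]
x = steepest_descent(A, b, [0.0, 0.0])
print(x)
```

For this matrix the iterates approach the exact solution of the linear system, x = (1/11, 7/11). Real training workloads apply the same idea to far larger problems, where the matrix products are handed off to optimised libraries rather than written by hand.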

Regarding artificial general intelligence (AGI), Dongarra believes that machines should be developed to automate mundane tasks and assist in scientific simulations and modelling. However, he underscores the need for caution, as the vast amount of unfiltered information available on the web can be misleading.

“2001: A Space Odyssey is a relevant movie, in the sense that it looks at AI with a computer that is maybe going a little haywire and takes over a mission and does some damage along the way. I found that fascinating when I first saw it back when it came out and I still enjoy the story. It has many things which are relevant today,” he concluded.

The post Turing Award Winner Warns of Machine Learning Hardware Abuse appeared first on Analytics India Magazine.