Microsoft Excel is the original low-code tool, but data and business logic in an Excel spreadsheet are not managed and not necessarily shared with other business users, so they are not something that can be easily reused outside that spreadsheet. Data in the data platform used by Microsoft’s Power Platform, Dataverse, is richer: There’s metadata that tags business objects like email addresses, invoices and order numbers with details of what should be in them and what business do with them, plus support for business logic, authorization, intelligence and analytics.

Jump to:

- Tool gives Excel data a new home in Dataverse

- New AI-powered tools in Dataverse

- How low-code developers can use Power Fx with Dataverse

- Using SQL with Dataverse

- Securing data via Microsoft Dataverse

Tool gives Excel data a new home in Dataverse

The generative AI Power Apps Copilot can already be used to build applications in Microsoft Dataverse by describing in natural language what a user wants the app to do. For example, they can ask Copilot to add more screens, controls and features as they get more ideas.

Excel to App is a new tool in preview to help users bring in data they already have in spreadsheets. It does exactly what the name suggests: Users can drag and drop unstructured data from Excel — or give Copilot a link to the file — and the Power Platform will analyze it, enrich it with the extra information Dataverse needs, and turn it into an app, Nirav Shah, the vice president of Dataverse at Microsoft explained to TechRepublic.

“Because it’s Power Apps Copilot-enabled, it’s inferring what the table structure should be, how should it name it, what are the descriptions, what are the columns that need to exist and the data types of those columns,” Shah said. “For enumerations (which are lists of possible values), it even automatically generates the values for the option set in the Dataverse schema for you.”

Giving all that Excel data a new home in Dataverse is great for data governance.

“Taking that unmanaged and citizen data out there that’s ungoverned across the enterprise and turning it into a fully managed, structured cloud back end with full authorization policies, governance and security that can scale as the business needs can help alleviate the shadow IT that exists across the enterprise,” Shah pointed out.

The new elastic tables in Dataverse can handle large volumes of non-relational data, up to ingesting tens of millions of rows an hour.

Enterprises already use tools to find “load-bearing” Excel spreadsheets that business users depend on. Now they can encourage them to bring that critical data into Dataverse where the IT team can back up, version and manage it, and other business users can take advantage of it. But, Shah suggested individual users will also want to bring their Excel data into the Power Platform so they can use tools there — like natural language for building the user interface for their app.

“We think this is going to remove a lot of friction,” Shah said. “It provides folks doing personal productivity (tasks in Excel) with a path forward to see the art of the possible with the richness that Dataverse in the Power Platform can provide them.

“Dataverse is the native backend that’s interconnected across the whole Power Platform and making the transition from Excel, all the richness and capabilities we’ve got across the rest of the Power Platform. The fact that you can do this in under a minute really removes the barriers for developers to start leveraging more and more of those capabilities within Dataverse on top of that data.”

New AI-powered tools in Dataverse

Data in Excel might be easy for users to work with individually, but bringing it to Dataverse connects it to a range of new AI tools.

Power Virtual Agent chatbots

Once data is in Dataverse, it’s available for Power Virtual Agents chatbots to use, including the Teams bots users can now make. If a user keeps a list of company hardware assets like projectors in Excel and brings that into Dataverse instead, it could become part of an onboarding chatbot that helps new employees find out how to do things, alongside the official company HR tools.

Those bots can use the Azure Open AI Service to start answering questions the original creator of the bot didn’t design them to handle. For instance, if someone adds VR headsets and HoloLens to the hardware list, they can tell Copilot to include them in the app, and the bot could answer questions about them without the bot author adding those details manually.

Teams Toolkit for Visual Studio and Visual Studio Code

The Teams Toolkit for Visual Studio and Visual Studio Code simplifies creating apps for Teams that use Adaptive Cards as the interface inside Teams. Along with the ChatGPT plugins that Bing is standardizing for its AI chat and Power Platform connectors, the Teams message extensions that can be created with the Teams Toolkit will work as plugins for Microsoft 365 Copilot — the AI tools coming to the Office applications and services, which will have access to data from Dynamics 365 and Power Platform stored in Dataverse.

If you want to do something specific enough times, it might make sense to create an app in Power Apps to do it. Or once the data is in Dataverse, it might be easier to just ask Copilot to give a status update for the best sales opportunities or a list of the top trending customer issues in the last week. But users don’t have to choose as apps made using Power Apps Copilot have Copilot in them, so they can ask Copilot to do things inside the app.

Data hygiene tools

Now that it’s so easy to use the data in Dataverse for AI what Shah calls “data-driven applications,” it’s vital for it to be clean, complete and correct. This means that it must have full customer details with no missing lines in addresses and all the right details on an invoice. New AI-powered data hygiene tools in Dataverse do deduplication and smart data validation for objects like email addresses and URLs, as well as physical addresses.

“Dataverse has the semantic data model with a deeper knowledge of what the implicit value of the data is for emails and addresses because those are concrete data types, so it can automatically provide a lot more richness in terms of data validation,” said Shah.

Cleaning and normalizing data is something that business users might not think of doing, so having it built into the platform will help them get better results.

“We want to simplify and make it more turnkey for developers to get higher quality data into the system so that the insights, the applications, the business processes are providing as much value as possible for end users of the applications and processes developers are building on top of the system,” Shah said.

SEE: TechRepublic’s cheat sheet about data cleansing

How low-code developers can use Power Fx with Dataverse

Low-code developers can also use the Power Fx language, which will be familiar to anyone who has created Excel functions, to write their own custom validations for any instant or on-demand actions — or to build other reusable plugins for business logic and Dataverse rules with triggers and actions that work with Power Platform connectors and web APIs.

“These are a low-code way to develop business logic and incorporate that into the system without having to go into full-fledged .NET development,” said Shah. “You can trigger on specific records being created or updated within the system and then orchestrate what you want to happen using Power Fx to call other APIs within Dataverse to interact with other data in the system or invoke any of our thousand-plus Power Platform connectors (to other data sources) to orchestrate that logic or even build new APIs using Power FX and then expose those as capabilities that can be leveraged from anything built on top of Dataverse.”

That could send an email to customers thanking them for their orders or replicate anything else that users could do with a SQL stored procedure, but do it directly from Dataverse, rather than needing to know how to program a SQL database.

Users can already create rich custom business logic on events and actions in Dataverse, but this simplifies building that without having to do a lot of bespoke development work.

“We’ve removed a lot of the barrier to entry and made it far easier and more composable to use all the building blocks that already exist within the system,” Shah continued. “It’s leveraging the context that we have within the environment and the data models to make it easier and quicker for developers to add that business logic into the system.”

Using SQL with Dataverse

Dataverse is much more than a SQL database, but developers who already know how to use SQL to write queries to explore, filter, aggregate, sort, join and group data can use the new web-based SQL editor in Power Apps Studio to use those SQL queries against Dataverse tables.

That’s useful because it means existing database developers don’t have to learn a new way to query data, but the same technology is also how the different Microsoft Copilots can work with Dataverse data.

“Behind the scenes, what we’re doing is transforming the query from a logical representation that is manifested through the metadata in Dataverse into the physical storage that we’ve got within Dataverse,” Shah explained. “It’s also a key component to how we support many of the Copilot scenarios being built on top of Dataverse.

“The ability for us to take natural language and translate that into a structured query that can run in the context of the user, with their security and the authorization rules apply to them, to be able to respond to those natural language queries for Power App Copilot, and other Copilots across the Microsoft ecosystem is really, at the core, powered by our support for SQL query on top of Dataverse.”

Again, this helps experienced developers work faster, Shah suggested.

“Professional developers don’t have to build and put all those pieces together themselves,” Shah said. “Because we have that understanding, because we have that native connectivity into the broader Power Platform ecosystem, we’re able to connect the dots automatically, so that they can build these app-specific Copilot experiences in a turnkey fashion and get that value out to their users more quickly, easily, than spending the time to build up that scaffolding themselves.”

SEE: How to query multiple tables in SQL in this TechRepublic tutorial

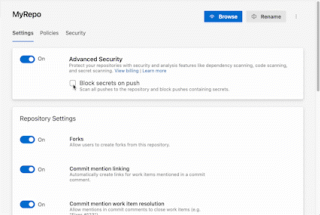

Securing data via Microsoft Dataverse

With so much important data in Dataverse, organizations may be looking for extra security options. If a user manages their own encryption keys in Azure Key Vault, they can now use this Bring Your Own Key option with Dataverse. They can also limit access based on IP address almost in real time with a new IP firewall that lets the security team choose the IP range users can connect from.

If someone tries to take sensitive actions like deleting their account — which might be legitimate but could also suggest their account has been taken over by an attacker — Azure Active Directory continuous access evaluation takes a look at how the account is authenticated and where it’s connecting from. If a user moved to a different IP address by going home or their machine shows up as connecting from an unfamiliar location, and it’s not in the allowed IP range, their request will be blocked, even though they were already logged in and would usually be allowed to do it.

“Workforces are more remote and hybrid and moving across the world in ways which they haven’t been historically,” Shah pointed out. “If you don’t want users to join from a coffee shop down the street, or you want to keep them in your corporate network, the IP firewall provides a mechanism, another defense in depth capability for folks to secure their infrastructure and protect their precious asset, which is their data.”

The policy for what users are allowed to do with that data might be different depending on which department they work for, and now it can change by what location they’re working in.

Microsoft Weekly Newsletter

Be your company's Microsoft insider by reading these Windows and Office tips, tricks, and cheat sheets.

Delivered Mondays and Wednesdays Sign up today

Today at its annual Build conference, Microsoft announced that it’s adopting the same plug-in standard its close collaborator, OpenAI, introduced for ChatGPT, its AI-powered chatbot — allowing developers to build plug-ins that work across ChatGPT, Bing Chat (on the web and in the Microsoft Edge sidebar), Dynamics 365 Copilot, Microsoft 365 Copilot and the newly launched Windows Copilot.

Today at its annual Build conference, Microsoft announced that it’s adopting the same plug-in standard its close collaborator, OpenAI, introduced for ChatGPT, its AI-powered chatbot — allowing developers to build plug-ins that work across ChatGPT, Bing Chat (on the web and in the Microsoft Edge sidebar), Dynamics 365 Copilot, Microsoft 365 Copilot and the newly launched Windows Copilot.