ChatGPT’s new app comes out of the gate hot, tops half a million installs in first 6 days

Sarah Perez (@sarahintampa)

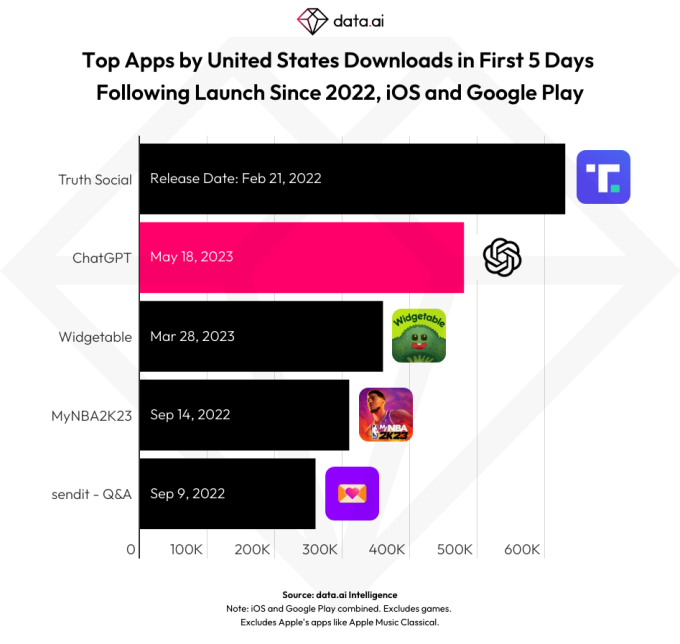

Despite being U.S.- and iOS-only ahead of today’s expansion to 11 more global markets, OpenAI’s ChatGPT app has been off to a stellar start. The app has already surpassed half a million downloads in its first six days since launch, according to a new analysis by app intelligence provider data.ai. That ranks it as one of the highest-performing new app releases across both this year and the last, topped only by the February 2022 arrival of the Trump-backed Twitter clone, Truth Social.

As consumer demand for AI chatbots has heated up, third-party apps calling themselves “ChatGPT” or “AI chatbot” have filled the App Store. While many of these were essentially fleeceware, trying to trick consumers into paying for expensive subscriptions to access their AI, a combined group of top apps still managed to pull in millions in consumer spending. This competitive landscape among AI chatbot apps could have created a tougher market for an official ChatGPT app to gain traction. But as it turns out, that was not the case.

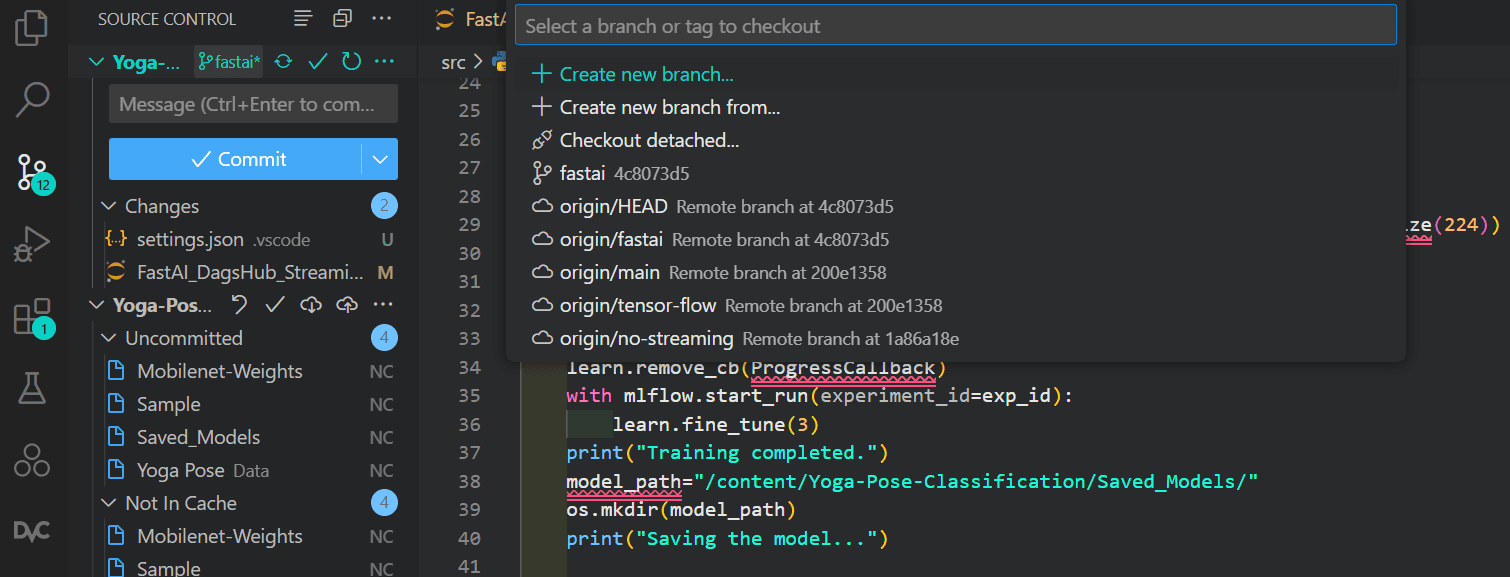

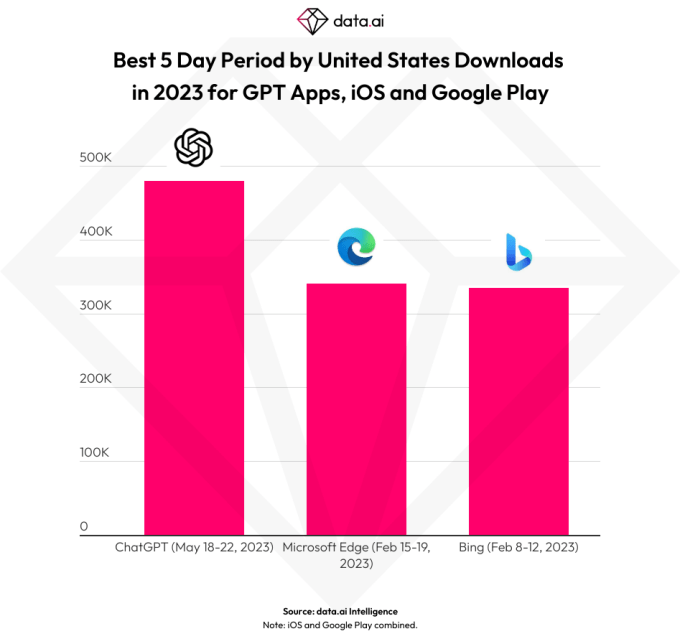

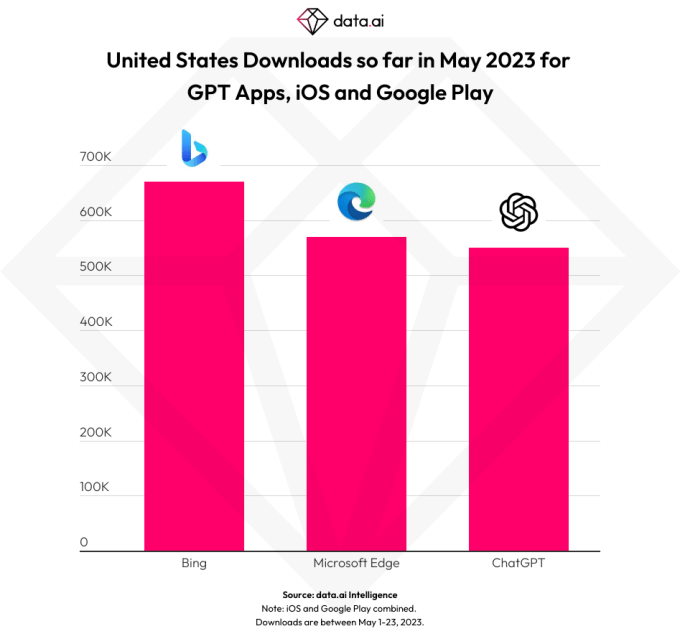

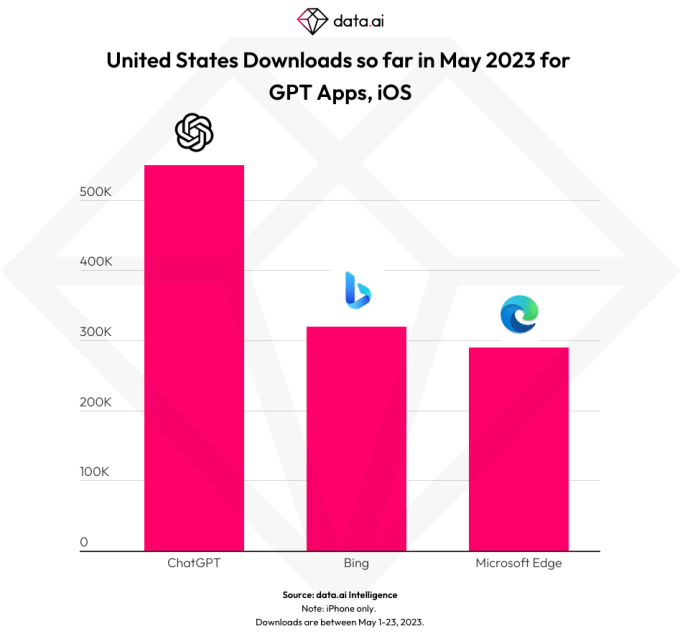

OpenAI’s ChatGPT app outperformed most of its rivals, including other popular AI and chatbot apps as well as Microsoft’s apps, Bing and Edge, which offered some of the first significant third-party integrations of OpenAI’s GPT-4 technology.

Though Bing and Microsoft Edge certainly benefitted from the interest in ChatGPT at their debut, seeing a respective 340K and 335K downloads across iOS and Android in their best five-day periods in February, OpenAI’s ChatGPT app easily topped them, generating 480,000 installs in the first five days of its U.S. launch, when the app was iOS-only.

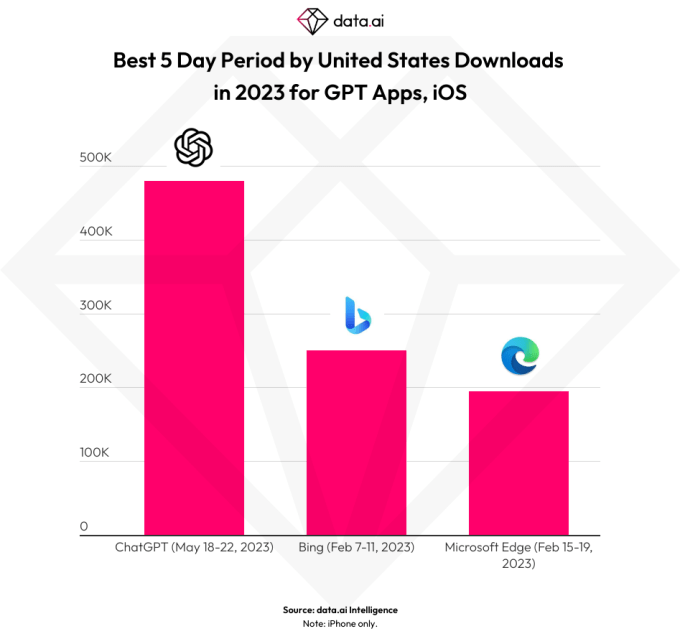

Compared with Bing and Edge’s iOS downloads alone, ChatGPT was even further ahead, with 480K installs versus Bing’s 250K and Edge’s 195K.
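To put the iOS-only gap in proportion, the three five-day figures quoted above can be turned into simple ratios. This is a minimal sketch using only the rounded numbers reported in this article; the dictionary and function names are illustrative and not part of data.ai's analysis.

```python
# Best five-day U.S. iOS installs as quoted in the article (rounded figures).
FIVE_DAY_IOS_INSTALLS = {
    "ChatGPT": 480_000,
    "Bing": 250_000,
    "Edge": 195_000,
}


def lead_ratio(app: str, rival: str) -> float:
    """Return how many times larger `app`'s installs are than `rival`'s."""
    return FIVE_DAY_IOS_INSTALLS[app] / FIVE_DAY_IOS_INSTALLS[rival]


chatgpt_vs_bing = lead_ratio("ChatGPT", "Bing")
chatgpt_vs_edge = lead_ratio("ChatGPT", "Edge")

print(f"ChatGPT vs. Bing: {chatgpt_vs_bing:.2f}x")
print(f"ChatGPT vs. Edge: {chatgpt_vs_edge:.2f}x")
```

On these rounded figures, ChatGPT's five-day iOS total is a little under twice Bing's and roughly two and a half times Edge's.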

Image Credits: data.ai

However, Bing and Edge were still ahead of ChatGPT when looking at all U.S. downloads in May across both app stores — but not when comparing only iOS installs for the month. That indicates ChatGPT may soon pull ahead of these search-focused alternatives.

Image Credits, above and below: data.ai

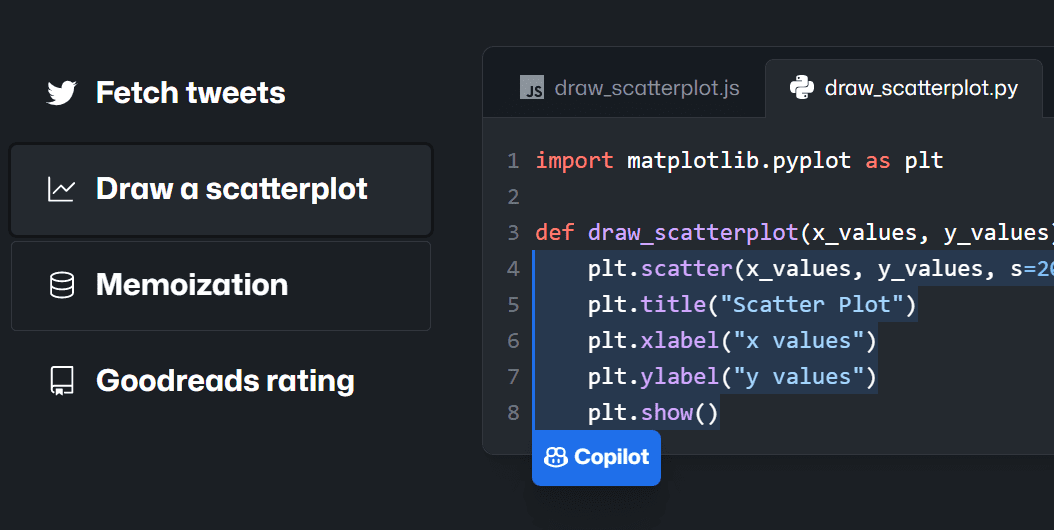

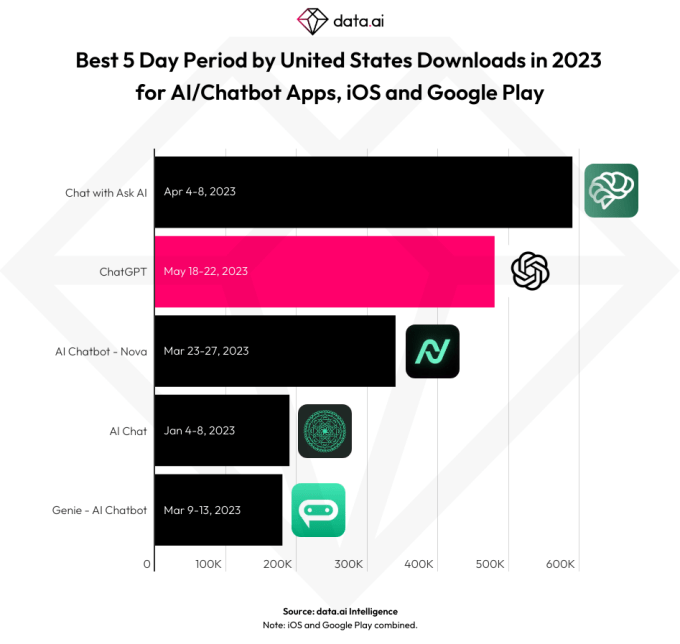

Data.ai’s analysis also found the app outperformed other top AI chatbot apps in the U.S., many of which were generically named in order to capitalize on consumer searches for keywords like “AI” and “chatbot” on the App Store. Here, OpenAI’s ChatGPT found itself in the top five by downloads, when ranked against other apps’ best five-day periods in 2023 across the App Store and Google Play.

The only app to beat it was “Chat with Ask AI,” which saw 590,000 installs from April 4-8, 2023, compared with ChatGPT’s 480,000 installs from May 18-22, the data indicates.

Image Credits: data.ai

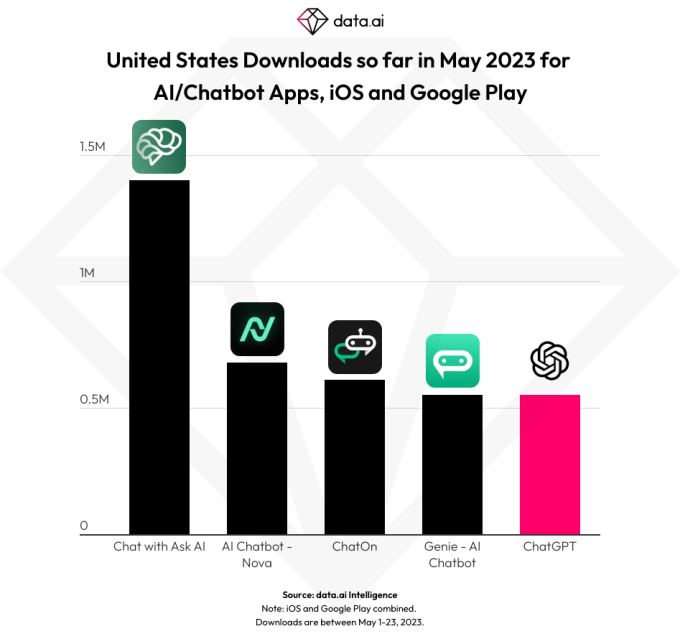

Though it’s only been available for a week, ChatGPT is also already ranking in the top five among AI chatbot apps by downloads in the U.S. in May 2023. At the time of data.ai’s number crunching, it compared ChatGPT and other top chatbot apps for the month of May through the 23rd — so, technically, less than a week since ChatGPT’s launch.

By then, the app had seen 550,000 downloads, tying with Genie – AI Chatbot, the next nearest ranked AI chatbot app by May downloads on the U.S. App Store. A few others were further ahead, however, including ChatOn — AI Chat Bot Assistant (610K installs), AI Chatbot – Nova (680K installs), and Chat with Ask AI (1.4M installs). Still, given how quickly ChatGPT was able to top half a million installs, it may soon beat these rivals.

Image Credits: data.ai

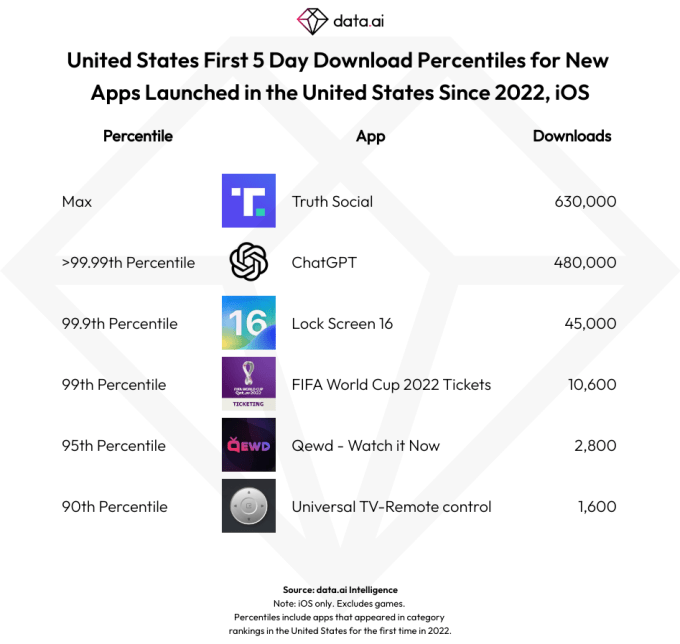

In addition, ChatGPT had one of the best new app debuts this year — and in 2022, data.ai found.

In ChatGPT’s first five days of U.S. iOS downloads post-launch, it generated 480,000 installs, which ranked it as the second-biggest app launch, behind Truth Social, which saw 630,000 downloads. The next largest debuts (i.e., the first five days post-launch) included the March 2023 arrival of Widgetable: Lock Screen Widget (360,000 installs), and the 2022 launches of MyNBA2K23 (310,000 installs) and sendit – Q&A on Instagram (260,000 installs).

This also put ChatGPT in the >99.99th percentile for new app launches in the U.S. since 2022 on iOS.

Data.ai notes that only the top 1% of apps generated more than 10,600 U.S. downloads in their first five days, and the top 0.1% had more than 45,000. Its analysis included data for roughly 39,000 apps that launched in the U.S. on iOS since the start of 2022 and then ranked in the top charts at some point over this period. (The data doesn’t include Apple’s first-party apps, like Apple Music Classical.)
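The percentile claim can be sanity-checked against the sample size. The sketch below assumes a percentile rank defined as the share of apps that placed strictly below a given position, and it treats "roughly 39,000" as exactly 39,000. Data.ai's actual method is not described in the article, so this is an illustration rather than a reproduction of its calculation.

```python
def percentile_rank(rank: int, total: int) -> float:
    """Percent of apps ranked strictly below position `rank` out of `total`."""
    if not 1 <= rank <= total:
        raise ValueError("rank must be between 1 and total")
    return 100.0 * (total - rank) / total


TOTAL_APPS = 39_000  # assumption: "roughly 39,000 apps" taken as an exact count
CHATGPT_RANK = 2     # second-biggest launch, behind Truth Social

chatgpt_percentile = percentile_rank(CHATGPT_RANK, TOTAL_APPS)

# Number of apps that fall inside the top 1% and top 0.1% of the sample.
apps_in_top_1_percent = TOTAL_APPS // 100
apps_in_top_0_1_percent = TOTAL_APPS // 1000

print(f"ChatGPT percentile: {chatgpt_percentile:.4f}")
print(f"Apps in top 1%: {apps_in_top_1_percent}")
print(f"Apps in top 0.1%: {apps_in_top_0_1_percent}")
```

Under these assumptions, a second-place finish among 39,000 apps lands above the 99.99th percentile, and the top 0.1% threshold of 45,000 downloads corresponds to only about 39 apps.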

Image Credits: data.ai

Of course, installs are only one way of measuring consumer demand and are not as reliable as analyzing how many people then signed up and became active app users.

However, because ChatGPT is still so new, data.ai won’t yet have accurate estimates on metrics like daily or monthly active users, it says — that may take another few weeks to generate.
