“The great AI race”. Source: Author with Diffusion model in the style of Tiago Hoisel

We’ve grown accustomed to continuous breakthroughs in AI over the last few months.

But we’re not used to record-breaking announcements that set the new bar at roughly 10 times the one before, which is precisely what Anthropic has done with the newest version of its chatbot Claude, ChatGPT’s biggest competitor.

It puts everyone else to shame.

Now, you’ll soon be turning hours of text and information searches into seconds, evolving Generative AI chatbots from simple conversation agents to truly game-changing tools for your life and those around you.

A Chatbot on Steroids, and Focused on Doing Good

As you know, with GenAI we’ve opened a window for AI to generate stuff, like text or images, which is awesome.

But as with anything in technology, it comes with a trade-off, in that GenAI models lack awareness or judgment of what’s ‘good’ or ‘bad’.

Actually, they’ve achieved the capacity to generate text by imitating data generated by humans that, more often than not, hides debatable biases and dubious content.

Sadly, since these models get better as they grow bigger, the temptation to simply feed them any text you can find, no matter the content, is particularly strong.

And that causes huge risks.

The alignment problem

Due to their lack of judgment, base Large Language Models, or base LLMs as they are commonly referred to, are particularly dangerous: they readily learn the biases hidden in their training data and then reenact those same behaviors.

For instance, if the data is biased toward racism, these LLMs become the living embodiment of it. The same applies to homophobia and any other sort of discrimination you can imagine.

Thus, considering that many people see the Internet as the perfect playground to test their limits of unethicality and immorality, the fact that LLMs have been trained with pretty much all the Internet with no guardrails whatsoever says it all about the potential risks.

Thankfully, models like ChatGPT are an evolution of these base models achieved by aligning their responses to what humans consider as ‘appropriate’.

This was done using a reward mechanism described as Reinforcement Learning from Human Feedback, or RLHF.

Particularly, ChatGPT was filtered through the commanding judgment of OpenAI’s engineers, who transformed a very dangerous model into something not only much less biased, but also much more useful and great at following instructions.

Unsurprisingly, these LLMs are generally called Instruction-tuned Language Models.

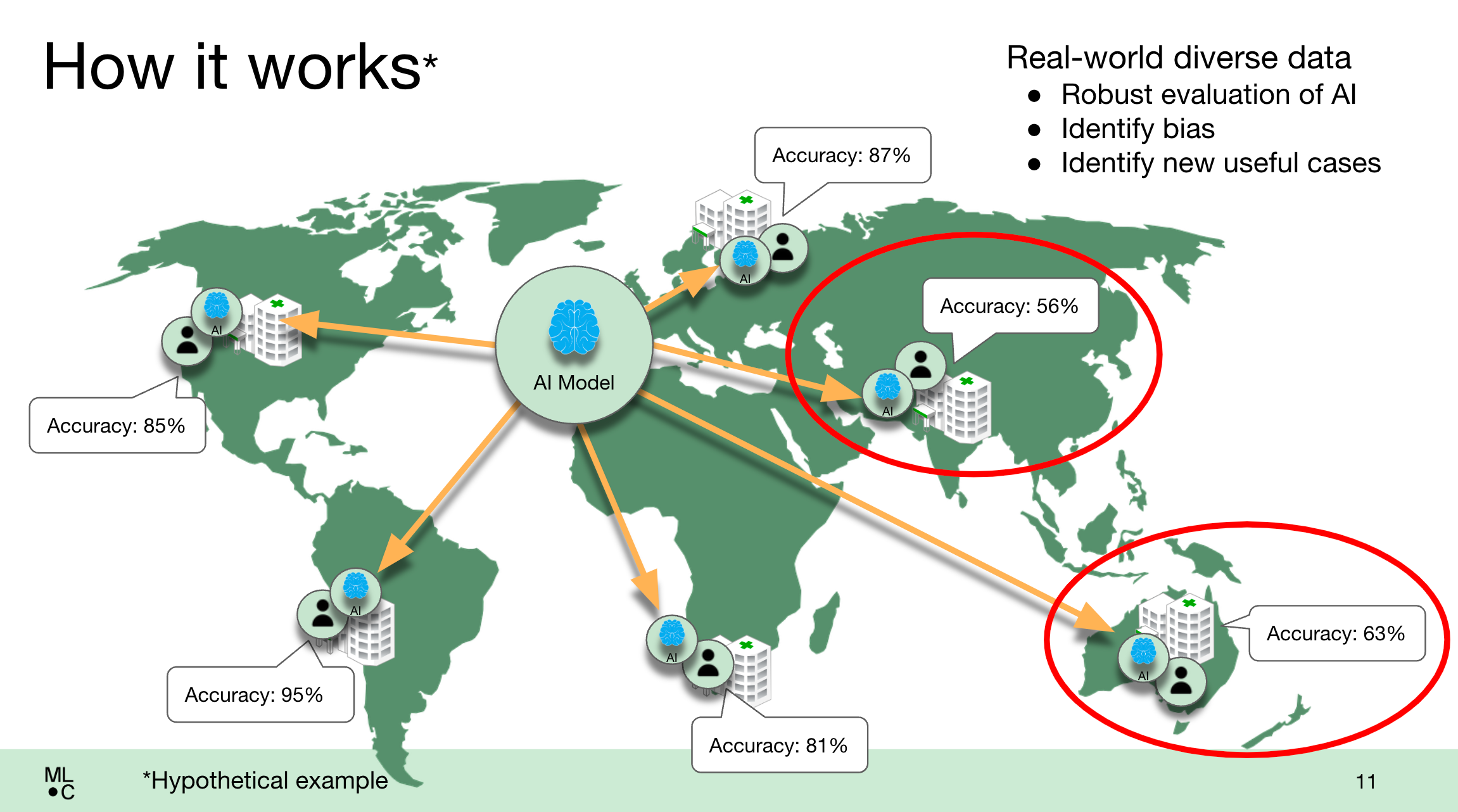

Of course, OpenAI engineers shouldn’t be in charge of deciding what’s good or bad for the rest of the world, as they also have their fair share of biases (cultural, ethnic, etc.).

At the end of the day, even the most virtuous of humans have biases.

Needless to say, this procedure isn’t perfect.

We’ve seen several cases where these models, despite their alleged alignment, have acted in a sketchy, almost vile way towards their users, as many experienced with Bing, forcing Microsoft to limit each interaction to just a few messages before things went sideways.

Considering all of this, when a group of ex-OpenAI researchers founded Anthropic, they had another idea in mind… they would align their models using AI instead of humans, with the completely revolutionary concept of self-alignment.

From Massachusetts to AI

First, the team drafted a Constitution that included the likes of the Universal Declaration of Human Rights, or Apple’s terms of service.

This way, the model was not only taught to predict the next word in a sentence (like any other language model), but also had to take into account, in each and every response it gave, a Constitution that determined what it could or could not say.

Next, instead of humans, the AI itself is in charge of aligning the model, potentially liberating it from human bias.

But the crucial news Anthropic has released recently isn’t about using AI to align its models to something humans can tolerate and use; it’s an announcement that has turned Claude into the dominant player in the GenAI war.

Specifically, it has increased its context window from 9,000 tokens to 100,000, an unprecedented improvement with far-reaching implications.

But what does that mean and what are these implications?

It’s all about tokens

Let me be clear that the importance of this concept of ‘token’ can’t be neglected, as despite what many people may tell you, LLMs don’t predict the next word in a sequence… at least not literally.

When generating their response, LLMs predict the next token, which usually represents between 3 and 4 characters, not the next word.

Naturally, these tokens may represent a word, or words can be composed of several of them (for reference, 100 tokens represent around 75 words).
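
To make that ratio concrete, here is a minimal sketch of the conversion. It assumes the 100-tokens-to-75-words rule of thumb holds on average for English text; the real count depends on the tokenizer and the text itself, and the function names are made up for illustration.

```python
# Rule of thumb quoted in the article: 100 tokens ~ 75 words.
WORDS_PER_TOKEN = 75 / 100  # assumption: an average for English text

def tokens_to_words(tokens: int) -> int:
    """Approximate how many words fit in a given number of tokens."""
    return round(tokens * WORDS_PER_TOKEN)

def words_to_tokens(words: int) -> int:
    """Approximate how many tokens a given number of words requires."""
    return round(words / WORDS_PER_TOKEN)

print(tokens_to_words(8_000))    # 6000
print(tokens_to_words(100_000))  # 75000
print(words_to_tokens(2_000))    # 2667
```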

When running inference, models like ChatGPT break the text you gave them into tokens and perform a series of matrix calculations, a concept defined as self-attention, that combine all the different tokens in the text to learn how each token impacts the rest.

That way, the model “learns” the meaning and context of the text and can then proceed to respond.
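
For the curious, the core of self-attention can be sketched in a few lines of plain Python. This is a simplified illustration, not how any production model is implemented: it assumes the queries, keys and values are the token vectors themselves (real models first apply learned weight matrices), and the three token vectors are made up.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(embeddings):
    """Scaled dot-product self-attention with Q = K = V = embeddings.

    Assumption: the learned projections that real models apply to get
    queries, keys and values are omitted to keep the sketch short.
    """
    dim = len(embeddings[0])
    outputs = []
    for query in embeddings:
        # One score per (query, key) pair: n scores for each of n tokens.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in embeddings]
        weights = softmax(scores)
        # Each output is a weighted mix of every token's vector.
        mixed = [sum(w * value[i] for w, value in zip(weights, embeddings))
                 for i in range(dim)]
        outputs.append(mixed)
    return outputs

# Three made-up 2-dimensional token vectors, for illustration only.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(tokens):
    print([round(x, 3) for x in row])
```

Note that every token is scored against every other token, which is where the cost discussed next comes from.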

The issue is that this process is very computationally intensive for the model.

To be precise, the computation requirements are quadratic in the input length, so the longer the text you give it (up to the limit known as the context window), the more expensive it is to run the model, both at training and at inference time.

This forced researchers to considerably limit the allowed size of the input given to these models, typically to between 2,000 and 8,000 tokens, the latter of which is around 6,000 words.
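
A back-of-the-envelope sketch shows what quadratic growth means for the jump from 9,000 to 100,000 tokens. It only counts the token-to-token scores of standard full self-attention; it ignores the rest of the network, and it says nothing about whatever optimizations Anthropic may actually use, which the announcement does not detail.

```python
def attention_pairs(context_tokens: int) -> int:
    """Number of token-to-token scores one full self-attention pass computes."""
    return context_tokens ** 2

old_window, new_window = 9_000, 100_000  # figures quoted in the article

length_ratio = new_window / old_window
cost_ratio = attention_pairs(new_window) / attention_pairs(old_window)

print(f"{length_ratio:.1f}x longer input")    # 11.1x longer input
print(f"{cost_ratio:.1f}x more pair scores")  # 123.5x more pair scores
```

In other words, an input about 11 times longer means roughly 123 times more attention scores under this simple model.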

Predictably, constraining the context window has severely crippled the capacity of LLMs to impact our lives, leaving them as a funny tool that can help you with a handful of things.

But why does increasing this context window unlock LLMs' greatest potential?

Well, simple, because it unlocks LLMs' most powerful feature, in-context learning.

Learning without training

Put simply, LLMs have a rare capability that enables them to learn ‘on the go’.

As you know, training LLMs is both expensive and dangerous, specifically because to train them you have to hand them your data, which isn’t the best option if you want to protect your privacy.

Also, new data appears every day, so if you had to fine-tune — further train — your model constantly, the business case for LLMs would be absolutely demolished.

Luckily, LLMs are great at this concept described as in-context learning, which is their capacity to learn without actually modifying the weights of the model.

In other words, they can learn to answer your query if you simply give them the data they need at the same time you’re requesting whatever you need from them… without actually having to train the model.

This concept, which covers zero-shot and few-shot learning (depending on whether the prompt includes no worked examples or a handful of them), is the capacity of LLMs to accurately respond to a given request using data they haven’t seen until that point in time.

Consequently, the bigger the context window, the more data you can give them and, thus, the more complex the queries they can answer.
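
In practice, the difference between zero-shot and few-shot usually comes down to how many worked examples you place in the prompt itself. The sketch below only builds the prompt text; the `build_prompt` helper, the task and the reviews are all made up for illustration, and sending the prompt to a model is left out.

```python
def build_prompt(task, examples, query):
    """Assemble a prompt; zero examples = zero-shot, a few = few-shot."""
    lines = [task, ""]
    for text, label in examples:
        lines += [f"Review: {text}", f"Sentiment: {label}", ""]
    # The model is expected to continue after the final "Sentiment:".
    lines += [f"Review: {query}", "Sentiment:"]
    return "\n".join(lines)

task = "Classify the sentiment of each review as positive or negative."
examples = [  # made-up demonstrations, for illustration only
    ("The battery lasts all day.", "positive"),
    ("It broke after a week.", "negative"),
]

zero_shot = build_prompt(task, [], "Setup was quick and painless.")
few_shot = build_prompt(task, examples, "Setup was quick and painless.")
print(few_shot)
```

No weights change in either case: everything the model "learns" lives in the prompt, which is why the size of the context window matters so much.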

Therefore, although small context windows were okay-ish for chatting and other simpler tasks, they were completely incapable of handling truly powerful tasks… until now.

The Star Wars Saga in Seconds

I’ll get to the point.

As I mentioned earlier, the newest version of Claude, version 1.3, can ingest in one go 100,000 tokens, or around 75,000 words.

But that doesn’t tell you a lot, does it?

Let me give you a better idea of what fits inside 75,000 words.

From Frankenstein to Anakin

The article you’re reading right now is below 2,000 words, so Claude can now ingest more than 37.5 times its length in one sitting.

But what are comparable-size examples? Well, to be more specific, 75,000 words represent:

- Around the total length of Mary Shelley’s Frankenstein book

- Almost the entire Harry Potter and the Philosopher’s Stone book, which sits at 76,944 words

- Any of the Chronicles of Narnia books, as all have smaller word counts

- And the most impressive number of all, it’s enough to include the dialogs from up to 8 of the Star Wars films… combined
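
These comparisons are approximate, and a quick check makes that visible. The sketch below reuses the 100-tokens-to-75-words rule of thumb from earlier and only the word counts quoted in this article; a real tokenizer would give somewhat different numbers.

```python
CONTEXT_WINDOW_TOKENS = 100_000  # Claude's new window, per the article

def estimated_tokens(word_count: int) -> int:
    """Estimate tokens from words using the 100 tokens ~ 75 words ratio."""
    return round(word_count * 100 / 75)

def fits(word_count: int) -> bool:
    """True if the estimated token count fits inside the context window."""
    return estimated_tokens(word_count) <= CONTEXT_WINDOW_TOKENS

texts = {
    "This article (upper bound)": 2_000,
    "Harry Potter and the Philosopher's Stone": 76_944,
}
for title, words in texts.items():
    verdict = "fits" if fits(words) else "does not quite fit"
    print(f"{title}: ~{estimated_tokens(words):,} tokens ({verdict})")
```

By this rough estimate, a 76,944-word book lands slightly above the 100,000-token window, so treat the list above as an order-of-magnitude guide.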

Now, think about a chatbot that can, in a matter of seconds, give you the power to ask it anything you want about any given text.

For instance, I recently saw a video where they gave Claude a five-hour-long podcast with John Carmack, and the model was capable not only of summarizing the overall podcast in just a few words, but also of pointing out particular things said at one precise moment over the five-hour-long session.

What’s mind-blowing is not only that this model can do this with a 75,000-word transcript, but that it’s working with data it could be seeing for the first time.

Undoubtedly, this is the ideal solution for students, lawyers, research scientists, and basically anyone who must go through lots of data at once.

To me, this is a paradigm shift in AI like few we’ve seen.

Undoubtedly, the door to truly disruptive innovation has been opened for LLMs.

It’s incredible how AI has changed in just a few months, and how rapidly it is changing every week. And the only thing we know is that it’s changing… one token at a time.

Ignacio de Gregorio Noblejas has more than five years of comprehensive experience in the technology sector, and currently holds a position as a Management Consulting Manager in a top-tier consulting company, where he has developed a robust background in offering strategic guidance on technology adoption and digital transformation initiatives. His expertise is not limited to his consulting work: in his free time he also shares his insights with a broader audience. He actively educates and inspires others about the latest advancements in Artificial Intelligence (AI) through his writing on Medium and his weekly newsletter, TheTechOasis, which have an engaged audience of over 11,000 and 3,000 people respectively.

Original. Reposted with permission.
