At some point over the past year, you are likely to have engaged in conversations about the prospect of superintelligent AI systems replacing human workers or, perhaps even more alarmingly, ushering in a world reminiscent of science fiction doomsday scenarios.

Paula Goldman isn’t with you on this. The chief ethical and humane use officer at Salesforce believes such discussions are a total ‘waste of time’.

AI systems are already in the copilot phase and are only going to get better over time. “With each advancement in AI, we’re continually refining our safeguards and addressing emerging risks,” she told AIM in an exclusive interview on the sidelines of TrailblazerDX 2024, Salesforce’s developers conference.

The field of AI ethics is not a recent development, Goldman said; it has been in progress for decades. Issues such as accuracy, bias, and ethical considerations have long been studied and addressed.

“In fact, our current understanding and solutions are built upon this foundation of past research and development. While predicting the future capabilities of AI is challenging, our focus should be on continuously enhancing our safeguards to manage potential risks effectively, ensuring we’re equipped to handle even the most advanced AI technologies,” she added.

Big Fan of the EU AI Act

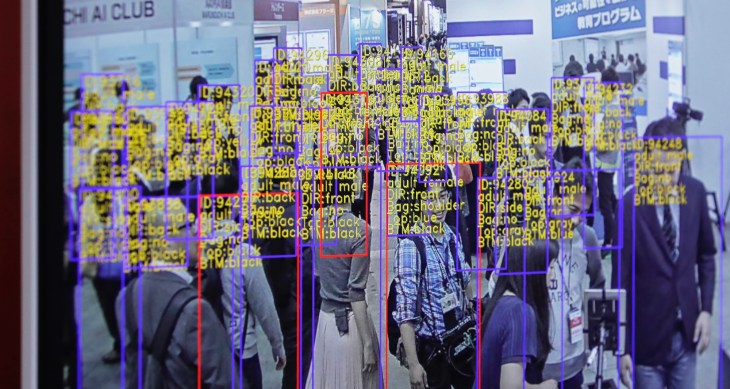

As AI becomes increasingly ubiquitous, the focus has shifted to its ethical implications, prompting governments and lawmakers to introduce stringent policies regarding its use.

The European Union (EU) became the first jurisdiction in the world to introduce legislation of this kind, the AI Act, to regulate the technology, which many EU lawmakers believe could harm society if left unregulated.

While different jurisdictions, including India, are approaching AI regulation differently, Goldman stated that she is a big fan of the EU’s AI Act.

“While there’s ongoing debate about regulating AI models, what’s truly crucial is regulating the outcomes and associated risks of AI, and that’s the general approach the EU AI [Act] takes,” Goldman said.

The Act categorises AI systems into four risk levels: unacceptable risk, high risk, limited risk, and minimal or no risk. It focuses on identifying and controlling the highest-risk uses, such as those affecting fair consideration in job applications or loan approvals, which significantly impact people’s lives.

“Moreover, the EU applies standards not only to those creating the models but also to the apps built on them, the data used, and the companies utilising these products. This comprehensive approach, treating it as a layered process, is often overlooked but is essential for effective regulation.”

However, with regulation comes the fear of hampering innovation, stifling creativity, and impeding the pace of technological advancement.

Nonetheless, Goldman believes that AI regulation is urgently important. “These regulations should be established through democratic processes and involve multiple stakeholders. I am proud of the efforts being made in this regard and emphasise the significance of regulations that transcend individual companies,” she said.

Human at the Helm

At TrailblazerDX, Goldman and her colleagues, including Silvio Savarese, chief scientist at Salesforce, stressed the importance of building trust in AI among consumers.

Savarese even stated that the inability to build consumer “trust could lead to the next AI winter”. Goldman, along with her colleagues, also emphasised the critical need to establish transparency and accountability in AI systems to foster consumer trust and prevent potential setbacks in AI adoption.

“At Salesforce, we believe trusted AI needs a human at the helm. Rather than requiring human intervention for each AI interaction, we’re crafting robust controls across systems that empower humans to oversee AI outcomes, allowing them to concentrate on high-judgement tasks that demand their attention the most,” Goldman said.

Salesforce’s approach has been to empower its customers by handing over control, acknowledging that they are best positioned to understand their brand tone, customer expectations, and policies.

“For instance, with the Trust Layer, we plan to allow customers to adjust thresholds for toxicity detection according to their needs. Similarly, with features like Retrieval Augmented Generation (RAG), customers can fine-tune the level of creativity they desire in AI-generated responses.

“Additionally, the incidents concerning AI ethics underscore the importance of government intervention in establishing regulatory frameworks, as these issues may vary across different regions and cultures. Hence, AI regulation by governments is deemed crucial,” she added.

Safeguarding is a Balancing Act

Moreover, as companies ship their AI products, it also becomes critical for them to work with their customers to ensure ethical and responsible use and eliminate risks.

“At Salesforce, we release products when we deem them ready and responsible, but we also maintain humility, recognising that technology evolves continuously. While we rigorously test products internally from various angles, it’s during pilot phases with customers that we truly uncover potential issues and areas for improvement,” she said.

According to Goldman, this iterative process ensures that products meet certain thresholds before release, and the company continues to learn and enhance them in collaboration with its customers.

“It’s about striking a balance between confidence in our products and openness to ongoing refinement.”

The post Salesforce Chief Ethicist Deems Doomsday AI Discussions a ‘Waste of Time’ appeared first on Analytics India Magazine.