Image generated with Ideogram.ai

I am sure that most of us have used search engines.

There is even a phrase such as “Just Google it.” The phrase means you should search for the answer using Google's search engine. That’s how universal Google can now be identified as a search engine.

Why search engine is so valuable? Search engines allow users to easily acquire information on the internet using limited query input and organize that information based on relevance and quality. In turn, search enables accessibility to massive knowledge that was previously inaccessible.

Traditionally, the search engine approach to finding information is based on lexical matches or word matching. It works well, but sometimes, the result could be more accurate because the user intention differs from the input text.

For example, the input “Red Dress Shot in the Dark” can have a double meaning, especially with the word “Shot.” The more probable meaning is that the Red Dress picture is taken in the dark, but traditional search engines would not understand it. That’s why Semantic Search is emerging.

Semantic search could be defined as a search engine that considers the meaning of words and sentences. The semantic search output would be information that matches the query meaning, which contrasts with a traditional search that matches the query with words.

In the NLP (Natural Language Processing) field, vector databases have significantly improved semantic search capabilities by utilizing the storage, indexing, and retrieval of high-dimensional vectors representing text's meaning. So, semantic search and vector databases were closely related fields.

This article will discuss semantic search and how to use a Vector Database. With that in mind, let’s get into it.

How Semantic Search works

Let’s discuss Semantic Search in the context of Vector Databases.

Semantic search ideas are based on the meanings of the text, but how could we capture that information? A computer can’t have a feeling or knowledge like humans do, which means the word “meanings” needs to refer to something else. In the semantic search, the word “meaning” would become a representation of knowledge that is suitable for meaningful retrieval.

The meaning representation comes as Embedding, the text transformation process into a Vector with numerical information. For example, we can transform the sentence “I want to learn about Semantic Search” using the OpenAI Embedding model.

[-0.027598874643445015, 0.005403674207627773, -0.03200408071279526, -0.0026835924945771694, -0.01792600005865097,...]How is this numerical vector able to capture the meanings, then? Let’s take a step back. The result you see above is the embedding result of the sentence. The embedding output would be different if you replaced even just one word in the above sentence. Even a single word would have a different embedding output as well.

If we look at the whole picture, embeddings for a single word versus a complete sentence will differ significantly because sentence embeddings account for relationships between words and the sentence's overall meaning, which is not captured in the individual word embeddings. It means each word, sentence, and text is unique in its embedding result. This is how embedding could capture meaning instead of lexical matching.

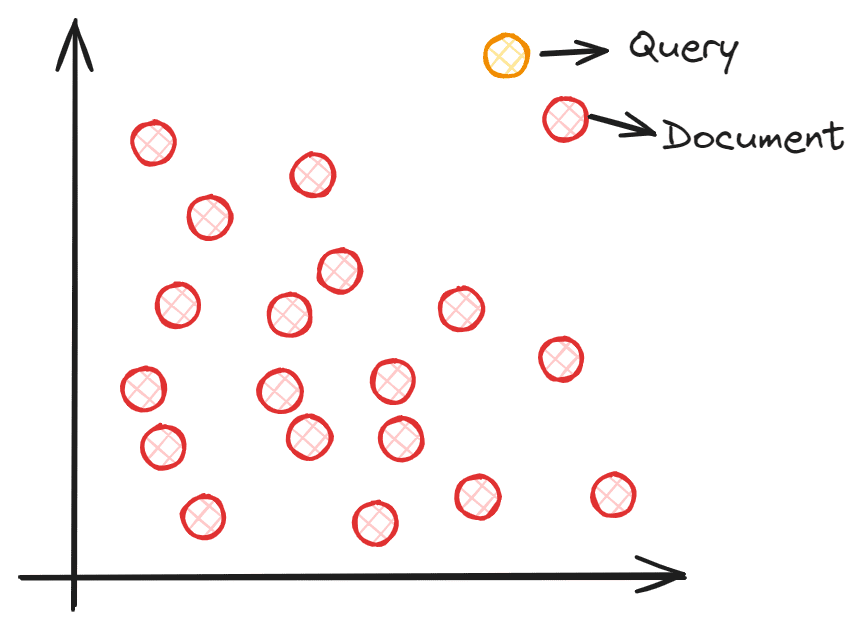

So, how does semantic search work with vectors? A semantic search aims to embed your corpus into a vector space. This allows each data point to provide information (text, sentence, documents, etc.) and become a coordinate point. The query input is processed into a vector via embedding into the same vector space during search time. We would find the closest embedding from our corpus to the query input using vector similarity measures such as Cosine similarities. To understand better, you can see the image below.

Image by Author

Each document embedding coordinate is placed in the vector space, and the query embedding is placed in the vector space. The closest document to the query would be selected as it theoretically has the closest semantic meaning to the input.

However, maintaining the vector space that contains all the coordinates would be a massive task, especially with a larger corpus. The Vector database is preferable for storing the vector instead of having the whole vector space as it allows better vector calculation and can maintain efficiency as the data grows.

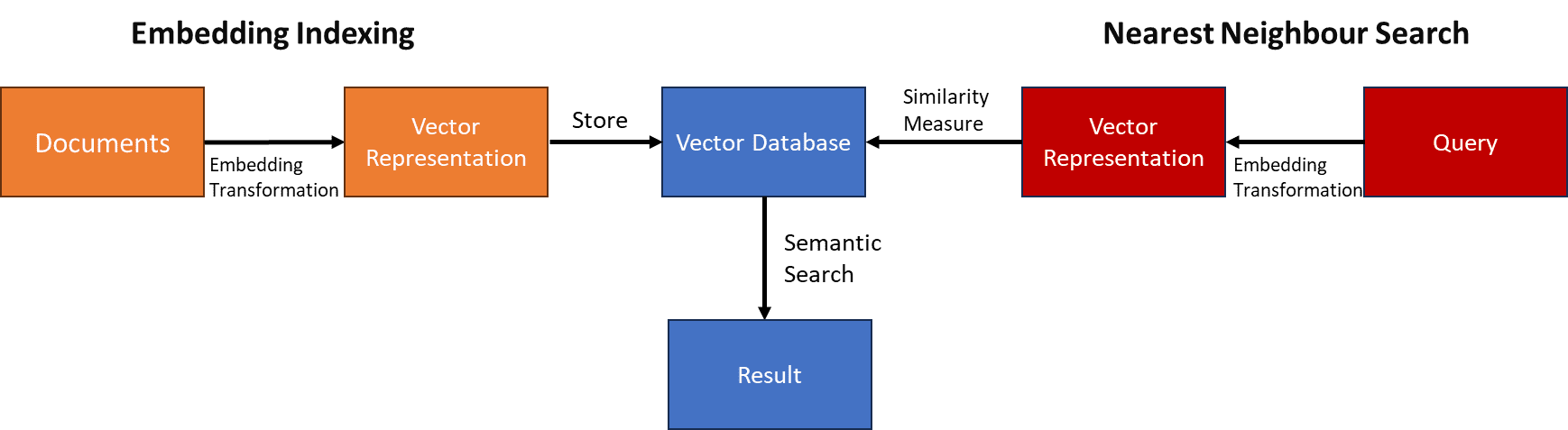

The high-level process of Semantic Search with Vector Databases can be seen in the image below.

Image by Author

In the next section, we will perform a semantic search with a Python example.

Python Implementation

In this article, we will use an open-source Vector Database Weaviate. For tutorial purposes, we also use Weaviate Cloud Service (WCS) to store our vector.

First, we need to install the Weavieate Python Package.

pip install weaviate-clientThen, please register for their free cluster via Weaviate Console and secure both the Cluster URL and the API Key.

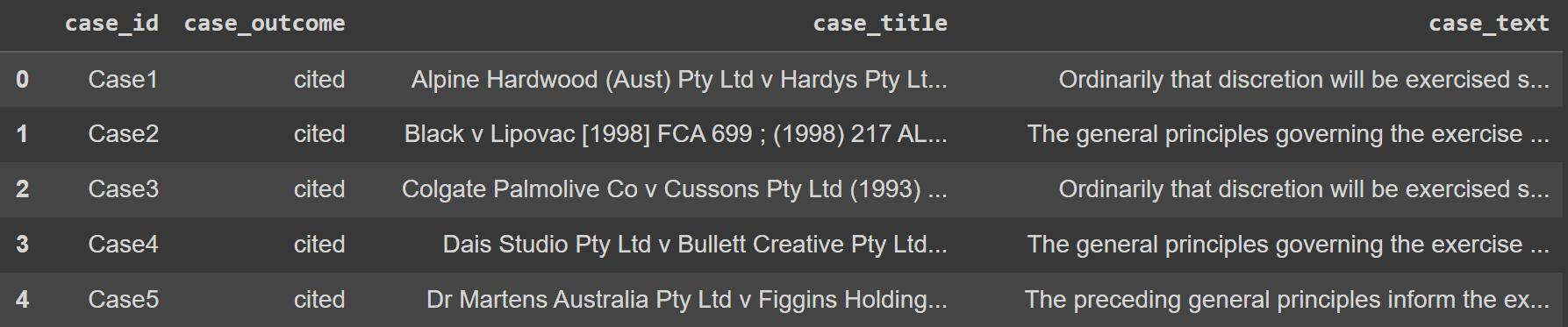

As for the dataset example, we would use the Legal Text data from Kaggle. To make things easier, we would also only use the top 100 rows of data.

import pandas as pd data = pd.read_csv('legal_text_classification.csv', nrows = 100)

Image by Author

Next, we would store all the data in the Vector Databases on Weaviate Cloud Service. To do that, we need to set the connection to the database.

import weaviate import os import requests import json cluster_url = "YOUR_CLUSTER_URL" wcs_api_key = "YOUR_WCS_API_KEY" Openai_api_key ="YOUR_OPENAI_API_KEY" client = weaviate.connect_to_wcs( cluster_url=cluster_url, auth_credentials=weaviate.auth.AuthApiKey(wcs_api_key), headers={ "X-OpenAI-Api-Key": openai_api_key } )The next thing we need to do is connect to the Weaviate Cloud Service and create a class (like Table in SQL) to store all the text data.

import weaviate.classes as wvc client.connect() legal_cases = client.collections.create( name="LegalCases", vectorizer_config=wvc.config.Configure.Vectorizer.text2vec_openai(), generative_config=wvc.config.Configure.Generative.openai() )In the code above, we create a LegalCases class that uses the OpenAI Embedding model. In the background, whatever text object we would store in the LegalCases class would go through the OpenAI Embedding model and be stored as the embedding vector.

Let’s try to store the Legal text data in a vector database. To do that, you can use the following code.

sent_to_vdb = data.to_dict(orient='records') legal_cases.data.insert_many(sent_to_vdb)You should see in the Weaviate Cluster that your Legal text is already stored there.

With the Vector Database ready, let’s try the Semantic Search. Weaviate API makes it easier, as shown in the code below. In the example below, we will try to find the cases that happen in Australia.

response = legal_cases.query.near_text( query="Cases in Australia", limit=2 ) for i in range(len(response.objects)): print(response.objects[i].properties)The result is shown below.

{'case_title': 'Castlemaine Tooheys Ltd v South Australia [1986] HCA 58 ; (1986) 161 CLR 148', 'case_id': 'Case11', 'case_text': 'Hexal Australia Pty Ltd v Roche Therapeutics Inc (2005) 66 IPR 325, the likelihood of irreparable harm was regarded by Stone J as, indeed, a separate element that had to be established by an applicant for an interlocutory injunction. Her Honour cited the well-known passage from the judgment of Mason ACJ in Castlemaine Tooheys Ltd v South Australia [1986] HCA 58 ; (1986) 161 CLR 148 (at 153) as support for that proposition.', 'case_outcome': 'cited'} {'case_title': 'Deputy Commissioner of Taxation v ACN 080 122 587 Pty Ltd [2005] NSWSC 1247', 'case_id': 'Case97', 'case_text': 'both propositions are of some novelty in circumstances such as the present, counsel is correct in submitting that there is some support to be derived from the decisions of Young CJ in Eq in Deputy Commissioner of Taxation v ACN 080 122 587 Pty Ltd [2005] NSWSC 1247 and Austin J in Re Currabubula Holdings Pty Ltd (in liq); Ex parte Lord (2004) 48 ACSR 734; (2004) 22 ACLC 858, at least so far as standing is concerned.', 'case_outcome': 'cited'}As you can see, we have two different results. In the first case, the word “Australia” was directly mentioned in the document so it is easier to find. However, the second result did not have any word “Australia” anywhere. However, Semantic Search can find it because there are words related to the word “Australia” such as “NSWSC” which stands for New South Wales Supreme Court, or the word “Currabubula” which is the village in Australia.

Traditional lexical matching might miss the second record, but the semantic search is much more accurate as it takes into account the document meanings.

That’s all the simple Semantic Search with Vector Database implementation.

Conclusion

Search engines have dominated information acquisition on the internet although the traditional method with lexical match contains a flaw, which is that it fails to capture user intent. This limitation gives rise to the Semantic Search, a search engine method that can interpret the meaning of document queries. Enhanced with vector databases, semantic search capability is even more efficient.

In this article, we have explored how Semantic Search works and hands-on Python implementation with Open-Source Weaviate Vector Databases. I hope it helps!

Cornellius Yudha Wijaya is a data science assistant manager and data writer. While working full-time at Allianz Indonesia, he loves to share Python and data tips via social media and writing media. Cornellius writes on a variety of AI and machine learning topics.

- Python Vector Databases and Vector Indexes: Architecting LLM Apps

- How Semantic Vector Search Transforms Customer Support Interactions

- An Honest Comparison of Open Source Vector Databases

- Vector Databases in AI and LLM Use Cases

- What are Vector Databases and Why Are They Important for LLMs?

- A Comprehensive Guide to Pinecone Vector Databases

https://t.co/fSw615zE8S

https://t.co/fSw615zE8S ) introduces ‘Llama 3 Researcher’ by GroqInc, delivering Llama 3 8B at 876 tokens/s – the fastest speed we benchmark of any model.

) introduces ‘Llama 3 Researcher’ by GroqInc, delivering Llama 3 8B at 876 tokens/s – the fastest speed we benchmark of any model.