Last week’s GTC 2025 show may have been agentic AI’s breakout moment, but the core technology behind it has been quietly improving behind the scenes. That progress is being tracked across a series of coding benchmarks, such as SWE-bench and GAIA, leading some to believe AI agents are on the cusp of something big.

It wasn’t that long ago that AI-generated code was not deemed suitable for deployment. The SQL code would be too verbose or the Python code would be buggy or insecure. However, that situation has changed considerably in recent months, and AI models today are generating more code for customers every day.

Benchmarks provide a good way to gauge how far agentic AI has come in the software engineering domain. One of the more popular benchmarks, dubbed SWE-bench, was created by researchers at Princeton University to measure how well LLMs like Meta’s Llama and Anthropic’s Claude can solve common software engineering challenges. The benchmark uses GitHub as a rich resource of Python software bugs across 12 repositories and provides a mechanism for measuring how well the LLM-based AI agents can solve them.

When the authors submitted their paper, “SWE-bench: Can Language Models Resolve Real-World GitHub Issues?” to the International Conference on Learning Representations (ICLR) in October 2023, the LLMs weren’t performing at a high level. “Our evaluations show that both state-of-the-art proprietary models and our fine-tuned model SWE-Llama can resolve only the simplest issues,” the authors wrote in the abstract. “The best-performing model, Claude 2, is able to solve a mere 1.96% of the issues.”

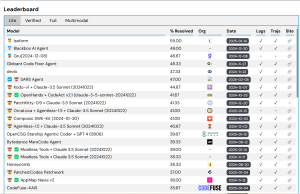

That changed quickly. Today, the SWE-bench leaderboard shows the top-scoring model resolved 55% of the coding issues on SWE-bench Lite, which is a subset of the benchmark designed to make evaluation less costly and more accessible.

SWE-bench measures AI agents’ capability to resolve GitHub issues. You can see the current leaders at https://www.swebench.com.

Hugging Face put together a benchmark for General AI Assistants, dubbed GAIA, that measures a model’s capability across several realms, including reasoning, multi-modality handling, Web browsing, and general tool-use proficiency. The GAIA tests are unambiguous but challenging, such as counting the number of birds in a five-minute video.

A year ago, the top score on level 3 of the GAIA test was around 14, according to Sri Ambati, the CEO and co-founder of H2O.ai. Today, an H2O.ai-based model based on Claude 3.7 Sonnet holds the top overall score, about 53.

“So the accuracy is just really growing very fast,” Ambati said. “We’re not fully there, but we’re on that path.”

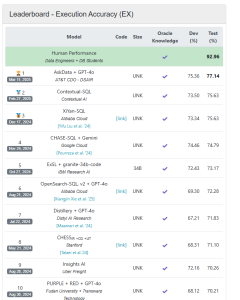

H2O.ai’s software is involved in another benchmark that measures SQL generation. BIRD, which stands for BIg Bench for LaRge-scale Database Grounded Text-to-SQL Evaluation, measures how well AI models can parse natural language into SQL.
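One common way to score text-to-SQL output is to run the generated query and a reference query against the same database and compare the rows they return. The snippet below is a minimal sketch of that idea using Python’s built-in `sqlite3` module. It is not BIRD’s actual evaluation harness, and the `orders` table, the helper names, and the sample queries are all hypothetical.

```python
import sqlite3


def execution_match(conn, predicted_sql, gold_sql):
    """Return True when both queries return the same set of rows."""
    try:
        predicted_rows = conn.execute(predicted_sql).fetchall()
    except sqlite3.Error:
        # A generated query that fails to run counts as a miss.
        return False
    gold_rows = conn.execute(gold_sql).fetchall()
    # Compare as sets so that row order does not matter.
    return set(predicted_rows) == set(gold_rows)


def accuracy(conn, pairs):
    """Fraction of (predicted, gold) query pairs whose results match."""
    matches = sum(execution_match(conn, pred, gold) for pred, gold in pairs)
    return matches / len(pairs)


def build_demo_db():
    """Create a tiny in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER, customer TEXT, total REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(1, "acme", 120.0), (2, "acme", 80.0), (3, "globex", 50.0)],
    )
    return conn


# Hypothetical (model output, reference) pairs.
DEMO_PAIRS = [
    # Different SQL text, same result: counts as correct.
    ("SELECT customer FROM orders WHERE total > 100",
     "SELECT customer FROM orders WHERE total >= 120"),
    # Runs, but answers a different question: counts as wrong.
    ("SELECT COUNT(*) FROM orders",
     "SELECT COUNT(*) FROM orders WHERE customer = 'acme'"),
    # Invalid SQL: counts as wrong.
    ("SELEC customer FROM orders",
     "SELECT customer FROM orders"),
    # Same query apart from whitespace: counts as correct.
    ("SELECT SUM(total) FROM orders WHERE customer = 'acme'",
     "SELECT SUM(total) FROM orders WHERE customer='acme'"),
]

if __name__ == "__main__":
    conn = build_demo_db()
    print(f"accuracy: {accuracy(conn, DEMO_PAIRS):.0%}")
```

Comparing results rather than query text means a model gets credit for a differently worded query that answers the question correctly. A query that merely looks similar to the reference but returns different rows does not.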

When BIRD debuted in May 2023, the top-scoring model, CoT+ChatGPT, demonstrated about 40% accuracy. One year ago, the top-scoring AI model, ExSL+granite-20b-code, was based on IBM’s Granite AI model and had an accuracy of about 68%. That was quite a bit below human performance, which BIRD measures at about 92%. The current BIRD leaderboard shows an H2O.ai-based model from AT&T as the leader, with a 77% accuracy rate.

The rapid progress in generating decent computer code has led some influential AI leaders, such as Nvidia CEO and co-founder Jensen Huang and Anthropic co-founder and CEO Dario Amodei, to make bold predictions about where we will soon find ourselves.

“We are not far from a world, I think we’ll be there in three to six months, where AI is writing 90 percent of the code,” Amodei said earlier this month. “And then in twelve months, we may be in a world where AI is writing essentially all of the code.”

GAIA measures AI agents’ capability to handle a range of tasks. You can see the current leaderboard at https://huggingface.co/spaces/gaia-benchmark/leaderboard.

During his GTC25 keynote last week, Huang shared his vision about the future of agentic computing. In his view, we’re rapidly approaching a world where AI factories generate and run software based on human inputs, as opposed to humans writing software to retrieve and manipulate data.

“Whereas in the past we wrote the software and we ran it on computers, in the future, the computers are going to generate the tokens for the software,” Huang said. “And so the computer has become a generator of tokens, not a retrieval of files. [We’ve gone] from retrieval-based computing to generative-based computing.”

Others are taking a more pragmatic view. Anupam Datta, the principal research scientist at Snowflake and lead of the Snowflake AI Research Team, applauds the improvement in SQL generation. For instance, Snowflake says its Cortex Agent’s text-to-SQL generation accuracy rate is 92%. However, Datta doesn’t share Amodei’s view that computers will be rolling their own code by the end of the year.

“My view is that coding agents in certain areas, like text-to-SQL, I think are getting really good,” Datta said at GTC25 last week. “Certain other areas, they’re more assistants that help a programmer get faster. The human is not out of the loop just yet.”

BIRD measures the text-to-SQL capability of AI agents. You can access the current leaderboard at https://bird-bench.github.io.

Programmer productivity will be the big winner thanks to coding copilots and agentic AI systems, Datta said. We’re not far from a world where agentic AI will generate the first draft, he said, and then humans will come in to refine and improve it. “There will be huge gains in productivity,” Datta said. “So the impact will be very significant, just with copilot alone.”

H2O.ai’s Ambati also believes that software engineers will work closely with AI. Even the best coding agents today introduce “subtle bugs,” so people still need to look at the code, he said. “It’s still a pretty necessary skill set.”

One area that’s still quite green is the semantic layer, where natural language is translated into business context. The problem is that the English language can be ambiguous, with multiple meanings from the same phrase.

“Part of it is understanding the semantics layer of the customer schema, the metadata,” Ambati said. “That piece is still building. That ontology is still a bit of a domain knowledge.”

Hallucinations are still an issue too, as is the potential for an AI model to go off the rails and say or do bad things. These are areas of concern that companies like Anthropic, Nvidia, H2O.ai, and Snowflake are all working to mitigate. But as the core capabilities of Gen AI get better, the number of reasons not to put AI agents into production decreases.

This article first appeared on BigDATAwire.