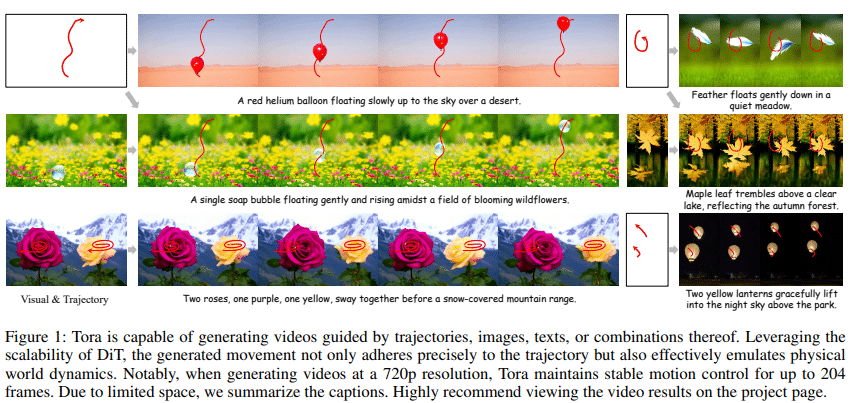

A team of researchers from Alibaba Group has introduced Tora, an AI model for controllable video generation. The system combines text, image, and trajectory inputs to produce high-quality videos with fine-grained control over motion and content.

Tora, which stands for Trajectory-oriented Diffusion Transformer, is the first of its kind to integrate trajectory-based control into a Diffusion Transformer (DiT) framework. This novel approach allows for the creation of videos up to 204 frames long at 720p resolution, maintaining consistent motion control and visual quality throughout extended durations.


The system’s key innovations include a Trajectory Extractor (TE) that converts user-defined trajectories into spacetime motion patches, and a Motion-guidance Fuser (MGF) that seamlessly integrates these motion cues into the video generation process. Additionally, the system employs a two-stage training strategy, which enhances the model’s ability to handle diverse motion patterns.
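To make the idea of "spacetime motion patches" more concrete, here is a minimal sketch in plain Python. It is not Tora's Trajectory Extractor, which is a learned component of the model. The function name `trajectory_to_patches`, the patch sizes, and the choice to sum raw pixel displacements per patch are all assumptions made for illustration. The sketch only shows how a user-drawn path (one point per frame) can be bucketed into a coarse grid over both space and time.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position in pixels, one per frame


def trajectory_to_patches(
    trajectory: List[Point],
    height: int,
    width: int,
    patch_size: int = 4,        # hypothetical spatial patch size
    frames_per_patch: int = 2,  # hypothetical temporal patch size
) -> List[List[List[Tuple[float, float]]]]:
    """Bucket per-frame displacements into a coarse spacetime grid.

    Returns patches[t][row][col] = (dx, dy), the summed displacement of the
    trajectory while it was inside that spatial patch during time group t.
    """
    rows = height // patch_size
    cols = width // patch_size
    steps = len(trajectory) - 1  # one displacement per consecutive frame pair
    groups = (steps + frames_per_patch - 1) // frames_per_patch  # ceiling division

    patches = [
        [[(0.0, 0.0) for _ in range(cols)] for _ in range(rows)]
        for _ in range(groups)
    ]
    for i in range(steps):
        (x0, y0), (x1, y1) = trajectory[i], trajectory[i + 1]
        t = i // frames_per_patch
        # Clamp so that points on or beyond the frame border stay in the grid.
        r = max(0, min(int(y0) // patch_size, rows - 1))
        c = max(0, min(int(x0) // patch_size, cols - 1))
        dx, dy = patches[t][r][c]
        patches[t][r][c] = (dx + (x1 - x0), dy + (y1 - y0))
    return patches


# A point moving right by 2 pixels per frame across an 8x16 frame.
path = [(0, 0), (2, 0), (4, 0), (6, 0), (8, 0)]
patches = trajectory_to_patches(path, height=8, width=16)
```

In this toy grid most cells stay at zero and only the patches the path passes through carry motion. A real system would feed a representation like this to the generator as conditioning rather than use it directly.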

Tora outperforms existing methods in several key areas. In motion control, it achieves 3 to 5 times better trajectory accuracy than other state-of-the-art models, with the advantage most visible on longer videos of up to 128 frames.
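Trajectory accuracy is typically judged by how far the motion in the generated video drifts from the path the user asked for. The article does not spell out the exact metric, so the sketch below assumes a simple one: the mean Euclidean distance, in pixels, between the requested and the observed position at each frame. The function name `trajectory_error` and the sample coordinates are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position in pixels, one per frame


def trajectory_error(target: List[Point], generated: List[Point]) -> float:
    """Mean per-frame Euclidean distance between two equally long paths.

    Assumed metric for illustration only; lower means the generated motion
    follows the requested path more closely.
    """
    if not target or len(target) != len(generated):
        raise ValueError("paths must be non-empty and of equal length")
    total = sum(math.dist(p, q) for p, q in zip(target, generated))
    return total / len(target)


# Requested path versus a path tracked in a generated clip (made-up numbers).
requested = [(0.0, 0.0), (10.0, 0.0)]
tracked = [(3.0, 4.0), (10.0, 0.0)]
error = trajectory_error(requested, tracked)
```

Under a metric of this kind, "3 to 5 times better accuracy" would mean an error 3 to 5 times smaller than that of the compared model on the same trajectories.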

In terms of visual quality, Tora produces smoother and more physically realistic movements, significantly reducing issues like motion blur and object deformation. Tora also scales better than previous U-Net-based models, maintaining effective trajectory control over longer durations and at higher resolutions.

The researchers conducted extensive experiments to validate Tora’s performance, including comparisons with existing methods like VideoComposer, DragNUWA, and MotionCtrl. They also performed ablation studies to analyse the impact of various design choices, such as trajectory compression techniques and motion fusion block designs.

This development has significant implications for various industries, including entertainment, advertising, and education, where high-quality, controllable video content is in high demand. Tora’s ability to generate videos with precise motion control while maintaining visual fidelity could revolutionise content creation workflows.

As AI-powered video generation continues to evolve, Tora represents a significant step forward in combining user control with the power of large-scale generative models. This breakthrough paves the way for more accessible and sophisticated video production tools, potentially democratising high-quality video creation for a wider range of users and applications.

The post Alibaba introduces Tora: A Trajectory-oriented Diffusion Transformer for Video Generation appeared first on AIM.